Laurent Ach

877 posts

@ach3d

CTO, leveraging artificial and human intelligence @ https://t.co/S7FTM8wYNA - https://t.co/x8nrqGm8Lw

By combining theoretical abstraction with concrete impact, Stéphane Mallat, winner of the 2025 CNRS Gold Medal, has left his mark on mathematics applied to computer science. From the JPEG 2000 image compression format to the foundations ... lejournal.cnrs.fr/articles/steph…

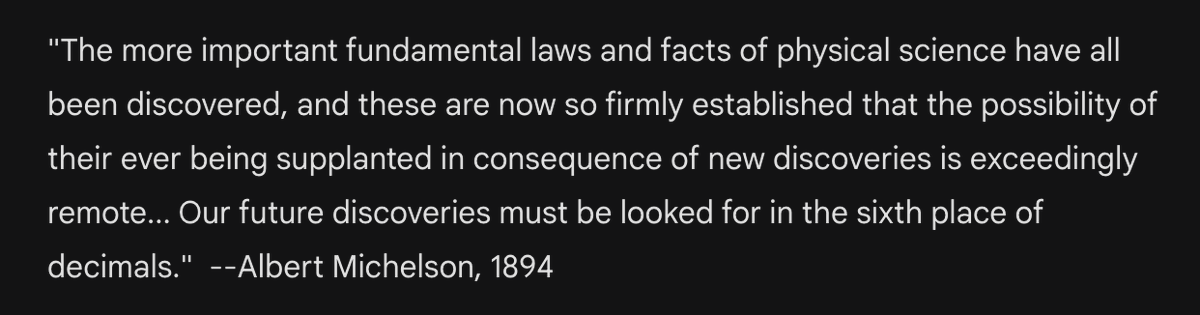

New episode w Adam Brown, a lead of Blueshift at DeepMind & theoretical physicist at Stanford. Stupefying, terrifying, & absolutely fascinating. On destroying the light cone with vacuum decay, mining black holes, holographic principle, & path to LLMs which make Einsteinian conceptual breakthroughs. Enjoy! Links below.
0:00:00 - Changing the laws of physics
0:26:53 - Why our universe is the way it is
0:38:18 - Making Einstein level AGI
1:01:19 - Physics stagnation & particle colliders
1:11:58 - Hitchhiking
1:29:48 - Nagasaki
1:37:07 - Adam’s career
1:44:13 - Mining black holes
2:00:30 - Holographic principle
2:24:13 - Infinities
2:32:30 - Engineering constraints for future civilizations

Today OpenAI announced o3, its next-gen reasoning model. We've worked with OpenAI to test it on ARC-AGI, and we believe it represents a significant breakthrough in getting AI to adapt to novel tasks. It scores 75.7% on the semi-private eval in low-compute mode (for $20 per task in compute) and 87.5% in high-compute mode (thousands of $ per task). It's very expensive, but it's not just brute force -- these capabilities are new territory and they demand serious scientific attention.

✨ QWANT'S AI IS HAVING AN OPEN WEEK ✨ Our AI is available to everyone for one week! No need to have an account (even though it's free 👀) to use it. It answers all your questions and requests in the blink of an eye. No more excuses not to try it ;)

🚀 New episode of "Monde Numérique"! 🎙️ Will AI replace developers? Not anytime soon, according to Laurent Ach @ach3d 🤖💡 #MondeNumérique #IA #Tech smartlink.ausha.co/monde-numeriqu…

🎙️ We recorded a short podcast with our friends at @vivaldibrowser! Our CTO, @ach3d, and Jon von Tetzchner, CEO of Vivaldi, discuss how to design technologies that respect online privacy 🔐 youtu.be/EGaNjCLIH-k?si…

@examachine @gargantuandwarf @ylecun What would be the greatest possible scientific discovery of our era, do you think?

The question of whether LLMs can reason is, in many ways, the wrong question. The more interesting question is whether they are limited to memorization / interpolative retrieval, or whether they can adapt to novelty beyond what they know. (They can't, at least until you start doing active inference, or using them in a search loop, etc.)
There are two distinct things you can call "reasoning", and no benchmark aside from ARC-AGI makes any attempt to distinguish between the two.
First, there is memorizing & retrieving program templates to tackle known tasks, such as "solve ax+b=c" -- you probably memorized the "algorithm" for finding x when you were in school. LLMs *can* do this! In fact, this is *most* of what they do. However, they are notoriously bad at it, because their memorized programs are vector functions fitted to training data, that generalize via interpolation. This is a very suboptimal approach for representing any kind of discrete symbolic program. This is why LLMs on their own still struggle with digit addition, for instance -- they need to be trained on millions of examples of digit addition, but they only achieve ~70% accuracy on new numbers. This way of doing "reasoning" is not fundamentally different from purely memorizing the answers to a set of questions (e.g. 3x+5=2, 2x+3=6, etc.) -- it's just a higher order version of the same. It's still memorization and retrieval -- applied to templates rather than pointwise answers.
The other way you can define reasoning is as the ability to *synthesize* new programs (from existing parts) in order to solve tasks you've never seen before. Like, solving ax+b=c without having ever learned to do it, while only knowing about addition, subtraction, multiplication and division. That's how you can adapt to novelty. LLMs *cannot* do this, at least not on their own. They can however be incorporated into a program search process capable of this kind of reasoning.
This second definition is by far the more valuable form of reasoning. This is the difference between the smart kids in the back of the class that aren't paying attention but ace tests by improvisation, and the studious kids that spend their time doing homework and get medium-good grades, but are actually complete idiots that can't deviate one bit from what they've memorized. Which one would you hire?
LLMs cannot do this because they are very much limited to retrieval of memorized programs. They're static program stores. However, they can display some amount of adaptability, because not only are the stored programs capable of generalization via interpolation, the *program store itself* is interpolative: you can interpolate between programs, or otherwise "move around" in continuous program space. But this only yields local generalization, not any real ability to make sense of new situations.
This is why LLMs need to be trained on enormous amounts of data: the only way to make them somewhat useful is to expose them to a *dense sampling* of absolutely everything there is to know and everything there is to do. Humans don't work like this -- even the really dumb ones are still vastly more intelligent than LLMs, despite having far less knowledge.
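
To make the two senses of "reasoning" in the post above concrete, here is a toy Python sketch. It is only an illustration under stated assumptions, not the post author's method and not how any LLM or ARC-AGI solver works. `solve_by_template` stands for retrieving a memorized formula for ax+b=c, while `synthesize` brute-forces a tiny program space built from the four arithmetic operations named in the post until it finds a program consistent with a few solved examples. All function names, the program shape `(v1 op1 v2) op2 v3`, and the example values are hypothetical.

```python
from fractions import Fraction
from itertools import product

# The only primitives the searcher knows (the four operations named in the post).
OPS = {
    "+": lambda x, y: x + y,
    "-": lambda x, y: x - y,
    "*": lambda x, y: x * y,
    "/": lambda x, y: x / y,
}


def solve_by_template(a, b, c):
    """Memorized template: apply the known closed form for ax + b = c."""
    return Fraction(c - b, a)


def run(program, a, b, c):
    """Evaluate a program of the (assumed) shape (v1 op1 v2) op2 v3."""
    op1, v1, v2, op2, v3 = program
    env = {"a": Fraction(a), "b": Fraction(b), "c": Fraction(c)}
    inner = OPS[op1](env[v1], env[v2])
    return OPS[op2](inner, env[v3])


def synthesize(examples):
    """Search the program space for one that reproduces every example."""
    for op1, op2 in product(OPS, repeat=2):
        for v1, v2, v3 in product("abc", repeat=3):
            program = (op1, v1, v2, op2, v3)
            try:
                if all(run(program, *inputs) == x for inputs, x in examples):
                    return program
            except ZeroDivisionError:
                continue  # this candidate divides by zero on some example
    return None


def show(program):
    """Render a program tuple as a readable expression."""
    op1, v1, v2, op2, v3 = program
    return f"({v1} {op1} {v2}) {op2} {v3}"


# Solved instances ((a, b, c), x) of ax + b = c; illustrative values only.
EXAMPLES = [
    ((2, 3, 7), Fraction(2)),
    ((3, 5, 2), Fraction(-1)),
    ((4, 1, 3), Fraction(1, 2)),
]

if __name__ == "__main__":
    program = synthesize(EXAMPLES)
    print(show(program))          # (c - b) / a
    print(run(program, 5, 2, 12)) # 2
```

The searcher is never given the formula. It recovers `(c - b) / a` from three examples and then applies it to an unseen instance, which is the "synthesize new programs from existing parts" behaviour the post describes, at a scale small enough that exhaustive search is feasible.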