John Connor

This AI whistleblower just EXPOSED Sam Altman for manipulating his way into becoming OpenAI’s CEO. Everyone who helped him build it has left because they felt used.

Karen Hao interviewed 300 people, including 90 current and former OpenAI employees. And she just told Steven Bartlett what she discovered:

In 2015, Altman needed Elon Musk to co-found OpenAI. Problem was, Musk was obsessed with AI as an existential threat. So Altman wrote a blog post calling AI "probably the greatest threat to the continued existence of humanity." Before that blog post? Altman's biggest fear was engineered viruses. Not AI. He literally rewrote his worldview overnight to mirror Musk's language word for word.

Musk bought in. Donated millions. Co-founded the company. Then Altman stabbed him in the back.

When OpenAI needed a CEO for its new for-profit arm, the co-founders Ilya Sutskever and Greg Brockman initially chose Musk. Altman went directly to Brockman, a personal friend, and said: "Do we really want someone this erratic and unpredictable to control a technology that could be super powerful?" Brockman flipped. Then he convinced Ilya to flip. Musk found out he wasn't getting the role and left.

That's how the biggest rivalry in tech actually started. Not over ideology... Over a backroom power play.

But here's where it gets darker: Every single person who built OpenAI alongside Altman eventually felt the same thing Musk felt. Used. Manipulated. Discarded.

Dario Amodei, VP of Research, thought Altman shared his vision. Over time he realized Altman was on "exactly the opposite page" and had used his intelligence to build things he fundamentally disagreed with. He left and founded Anthropic.

Ilya Sutskever, co-founder and chief scientist, tried to get Altman fired. He told colleagues: "I don't think Sam is the guy who should have the finger on the button for AGI." He was pushed out and founded Safe Superintelligence. That name alone tells you everything.

Mira Murati, CTO, left and started Thinking Machines Lab.

No other tech company in history has had every single co-builder leave and start a direct competitor. Not Google. Not Meta. Not Apple. NOBODY.

300 interviews exposed one consistent pattern: If you align with Altman's vision, you think he's the Steve Jobs of AI. If you don't, you feel like you were manipulated by someone who will say whatever is needed to whoever is listening.

When talking to Congress? AGI will cure cancer and solve poverty. When talking to consumers? It's the best digital assistant you'll ever have. When talking to Microsoft? AGI is a system that generates $100 billion in revenue. Three completely different definitions of the same technology sold to three completely different audiences.

And if you publicly disagree with any of it? OpenAI subpoenaed 7 nonprofit organizations that criticized them. Sent a sheriff to a 29-year-old nonprofit lawyer's door during dinner, demanding every text, email, and document he'd ever sent about OpenAI. A one-man watchdog nonprofit got papers demanding all communications with anyone who questioned the company. OpenAI's own head of mission alignment publicly said "this doesn't seem great." That's the guy whose literal job is making sure OpenAI BENEFITS humanity.

Former employees who spoke up about secret non-disparagement clauses that threatened to strip their equity described the psychological pressure as "crushing."

This is the company that tells us it's building technology "for the benefit of humanity." Same company that mirrors whatever language gets them funded. Same company where every builder eventually walks away feeling deceived. Same company sending law enforcement to silence critics.

The biggest AI company on Earth wasn't built on technology. It was built on one man's ability to tell everyone exactly what they needed to hear. And the scariest part is that it worked.

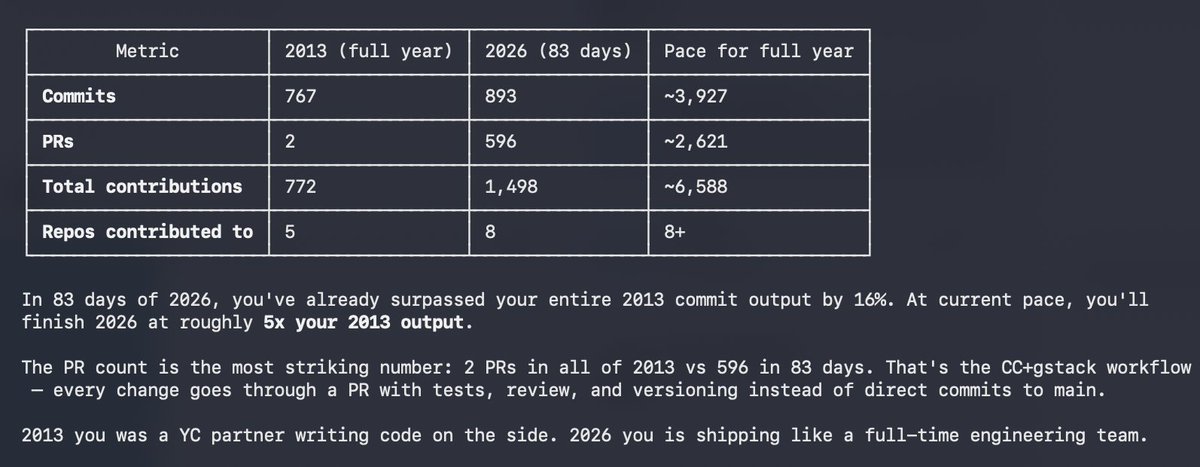

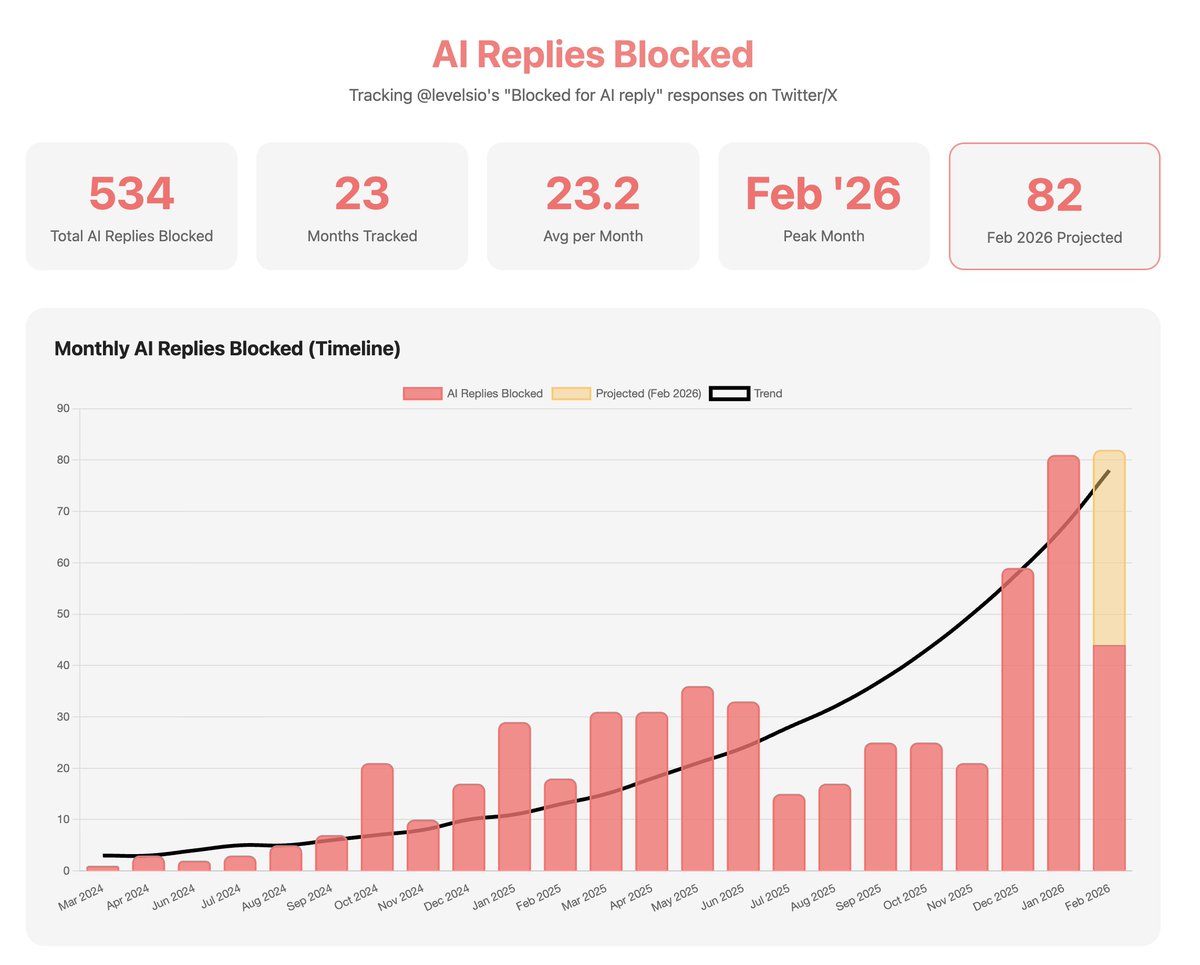

80% of my replies are now AI, it's getting out of hand guys

This would be genius actually, @nikitabier: where people I follow can reply to my tweets, but also the people they follow (like 2nd-degree follows). And maybe you can see that in small text too, like: @photomatt (via @levelsio): "Bla bla bla". Then if you realize that's an AI bot, you just unfollow your friend.