Alex Ratner

1.8K posts

@ajratner

@SnorkelAI @uwcse / prev @StanfordAILab – Interested in data management systems for machine learning, weak supervision, and impactful applications.

Congratulations to @ravi_lsvp, @ravirajjain, and @buckymoore on their recognition in the Seed 100 List! The Seed 100 List from @businessinsider highlights early-stage investors with a unique ability to scout the tech stars of tomorrow. Amid the AI boom, investors are competing harder and moving faster to get in before the “seed stage” as we know it. This is the Seed 100’s sixth year, and it is an honor to have 3 Lightspeed team members acknowledged on the list. Early-stage investing has been wired into our team’s DNA for over 26 years, and we are incredibly proud to have backed many teams from their Seed rounds and beyond. As Ravi puts it: “The founders Lightspeed backs don't extrapolate from the present; they derive from first principles and arrive at futures others haven't thought to look for.”

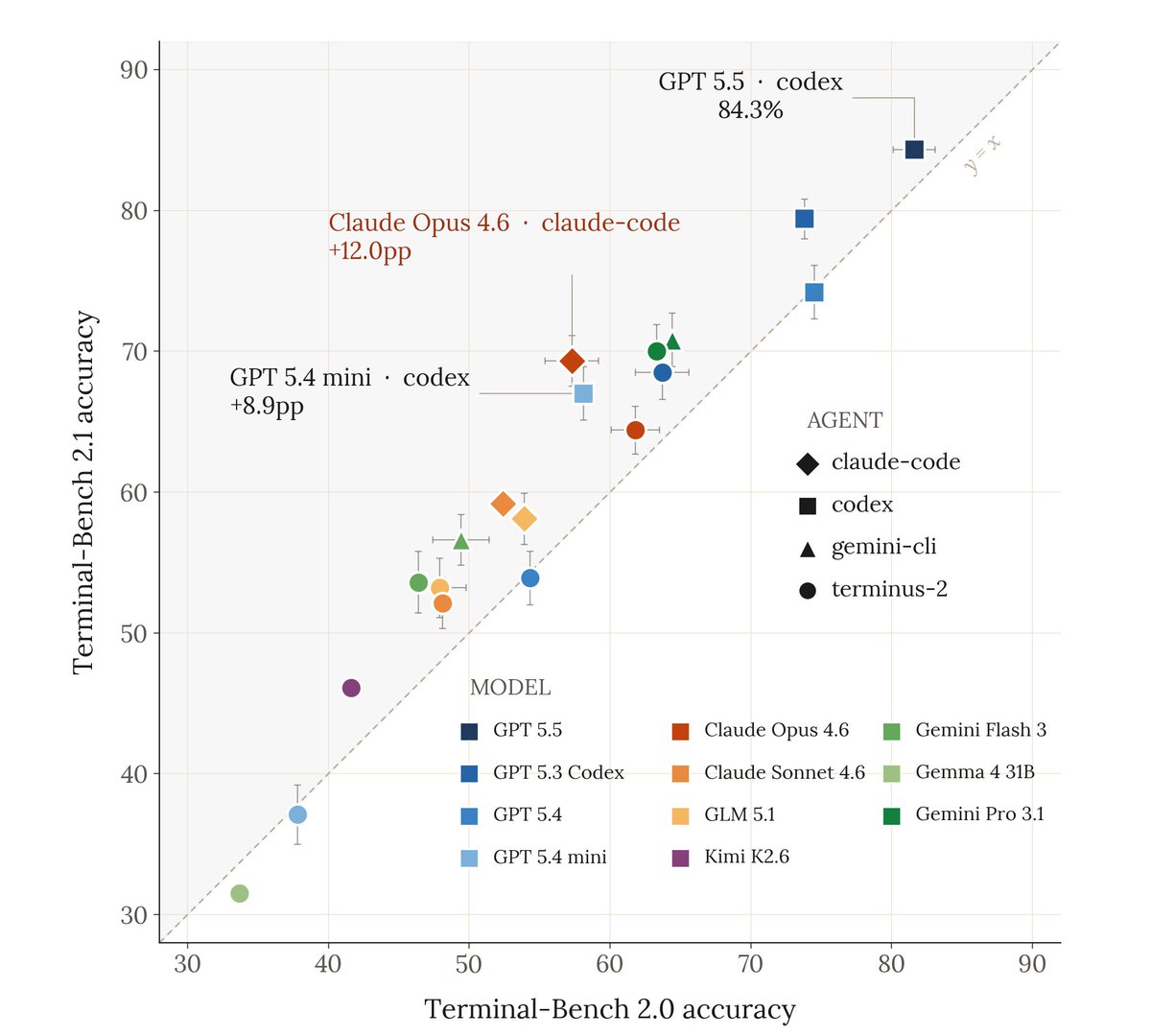

📣 Announcing Terminal-Bench Science: benchmarking AI agents on real scientific workflows – now open for task contributions👇 tbench.ai/news/tb-scienc… @AnthropicAI, @OpenAI, and @GoogleDeepMind use Terminal-Bench to evaluate AI on coding tasks. We're now extending it to scientific workflows. 1/6🧵

The right data mix can deliver 5x better sample efficiency for RLVR. Our paper "Learning from Less" just got an oral at MLSys '26 — we show that how you compose training data (task complexity, diversity) matters more than how much you throw at the model. The @SnorkelAI research team is presenting next week in Bellevue. If you're at MLSys, come hang! We're hosting an after-hours social on May 21.

Today, we’re releasing Continual Learning Bench 1.0: the first realistic benchmark for measuring how AI systems can improve in online settings. Benchmarks today assume models are stateless. Each example is independent, and once a system finishes a task, it moves on as if nothing happened. But deployed AI systems should learn from experience. We tested 10+ frontier systems against novel, expert-validated tasks and found there’s still plenty of headroom for learning. (1/n)