All Purpose Geek

@allpurposegeek

Official Twitter account for All Purpose Geek. We do #DonationOptional #TechSupport and #TechNews. Just @ us.

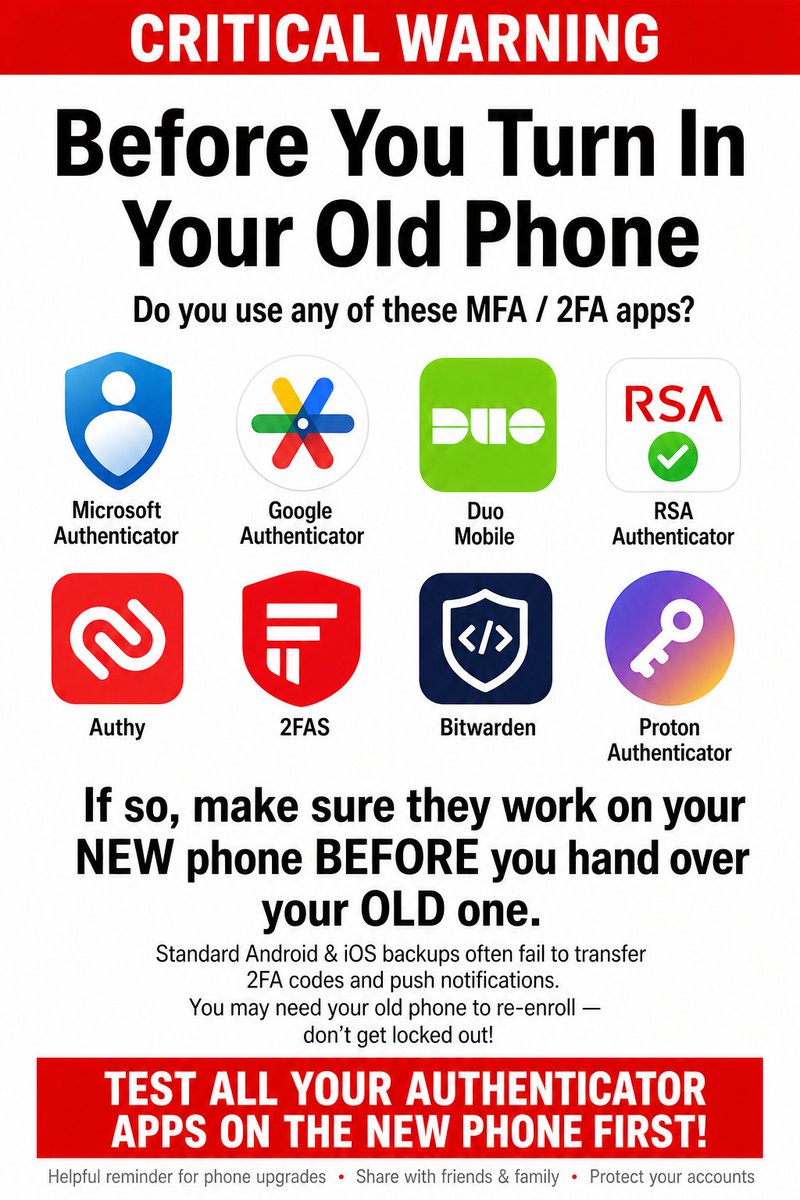

Hey @ATT @TMobile @Verizon @Ask_Spectrum, considering how often I have to remediate this after people get new phones, I'm urging you mobile providers to develop a flyer like this and hand it out when people swap their phones. If you can't train your customer service agents handling phone swaps to bring this to your customers' attention, then just let this do the talking.

Quoting my earlier post: “Hey @TMobile @Verizon @GetSpectrum, please train your CSRs to ask customers if they use 2FA apps like Duo, Microsoft Authenticator, or Google Authenticator before trading in their phones. These apps don’t always transfer during phone backups.” Now here we are again: I’ve had to help multiple people just this week who were locked out of their accounts after upgrading. @TMobile @Verizon @Ask_Spectrum, what is it going to take for your teams to ask one simple, essential question before a customer hands over their phone and gets locked out of their accounts? As more platforms phase out SMS-based MFA in favor of app-based authentication, this problem is only going to escalate. Your reps are the last line of defense. They need to be trained to explain that 2FA apps do not reliably transfer through standard phone backup or restore processes. In most cases, users must still have access to the original app to reauthorize on a new device, or they risk being completely locked out. Techs like me are constantly getting pulled in to fix preventable messes because this isn’t being addressed on your end. Start protecting your customers. This is basic digital hygiene in 2026.

Hey @microsoft / @MSFTExchange It is time for Microsoft to make a DNS SRV query the first Autodiscover lookup and the de facto standard. Legacy Outlook clients and legacy ActiveSync implementations are no longer supported anyway, yet Autodiscover behavior still prioritizes discovery paths that exist only to accommodate them. This creates unnecessary complexity for modern Exchange, hybrid, and cloud environments.

Microsoft Outlook and ActiveSync-compatible clients should always query the DNS SRV record first when performing Autodiscover. Only if the SRV record does not exist should clients proceed to secondary methods. DNS SRV records were designed specifically to solve service discovery without guessing hostnames. Autodiscover still relies on inferred URLs, certificate gymnastics, and HTTP to HTTPS redirects in 2025, which is no longer appropriate.

An SRV-first approach would immediately eliminate several long-standing issues. There would be no forced requirement for HTTPS listeners on the "autodiscover." subdomain or the root domain. There would be no need for SAN or UCC certificates purely to satisfy guessed endpoints. There would be no HTTP to HTTPS redirect workarounds (exactly what Microsoft relies on to redirect autodiscover.customdomain.com to autodiscover.outlook.com). There would be no need to block or exclude Microsoft 365 Autodiscover endpoints using registry overrides. Administrators could cleanly redirect to any valid endpoint, including autodiscover.outlook.com. Behavior would become predictable across on-prem, hybrid, and cloud environments.

Today, Outlook may probe Microsoft 365 endpoints before local discovery, follow stale or incorrect SCP records, guess HTTPS URLs that require additional certificates, fall back to insecure HTTP redirects, and cache broken endpoints long after migrations. This forces administrators to deploy exclusions, redirects, split DNS, and registry hacks simply to make Autodiscover deterministic. This is no longer an edge case. It is routine work.

Backward compatibility is no longer a valid justification. Unsupported Outlook versions and deprecated ActiveSync clients should not dictate modern discovery logic. Continuing to prioritize legacy behavior preserves technical debt at the expense of administrators and customers.

A reasonable and safe path forward is simple. Always query DNS SRV first. If the SRV record exists, use it and stop. If the SRV record does not exist, then fall back to SCP, cloud probing, and hostname-based discovery. Autodiscover does not need more workarounds. It needs a clear, modern default. Microsoft should formally adopt and document SRV-first Autodiscover as the standard behavior going forward. If Microsoft makes that the standard, then others who implement ActiveSync or Outlook Anywhere functionality will do the same.
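For anyone who wants to see the proposed order spelled out, here is a rough Python sketch of SRV-first discovery. It is an illustration of the idea, not how Outlook or any real client works today. The `_autodiscover._tcp.<domain>` record name and the `/autodiscover/autodiscover.xml` path are the conventional ones; everything else (`SrvRecord`, `srv_name`, `discover`, the injected `srv_lookup`, and the `fallbacks` list standing in for SCP, cloud probing, and hostname guessing) is a hypothetical name made up for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class SrvRecord:
    """Only the parts of a DNS SRV answer this sketch needs."""
    target: str
    port: int


# Hypothetical hooks: a real client would plug in an actual DNS resolver
# and its own SCP / cloud / hostname-guessing routines here.
SrvLookup = Callable[[str], Optional[SrvRecord]]
Fallback = Callable[[str], Optional[str]]


def srv_name(domain: str) -> str:
    """Build the conventional Autodiscover SRV record name for a mail domain."""
    return f"_autodiscover._tcp.{domain}"


def discover(domain: str,
             srv_lookup: SrvLookup,
             fallbacks: Iterable[Fallback] = ()) -> Optional[str]:
    """Return an Autodiscover URL, asking DNS SRV first.

    If the SRV record exists, use it and stop. Only when it is missing
    are the fallback methods tried, in the order given.
    """
    record = srv_lookup(srv_name(domain))
    if record is not None:
        host = record.target.rstrip(".")  # drop the trailing root dot
        port = "" if record.port == 443 else f":{record.port}"
        return f"https://{host}{port}/autodiscover/autodiscover.xml"

    for fallback in fallbacks:
        url = fallback(domain)
        if url is not None:
            return url
    return None


# Example with a fake in-memory "DNS" so the sketch runs without a network.
fake_dns = {
    "_autodiscover._tcp.example.com": SrvRecord("autodiscover.outlook.com.", 443),
}
print(discover("example.com", fake_dns.get))
```

The point of the early return is that a published SRV record is treated as authoritative, so none of the guessed endpoints are ever contacted when it exists.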