Anvil

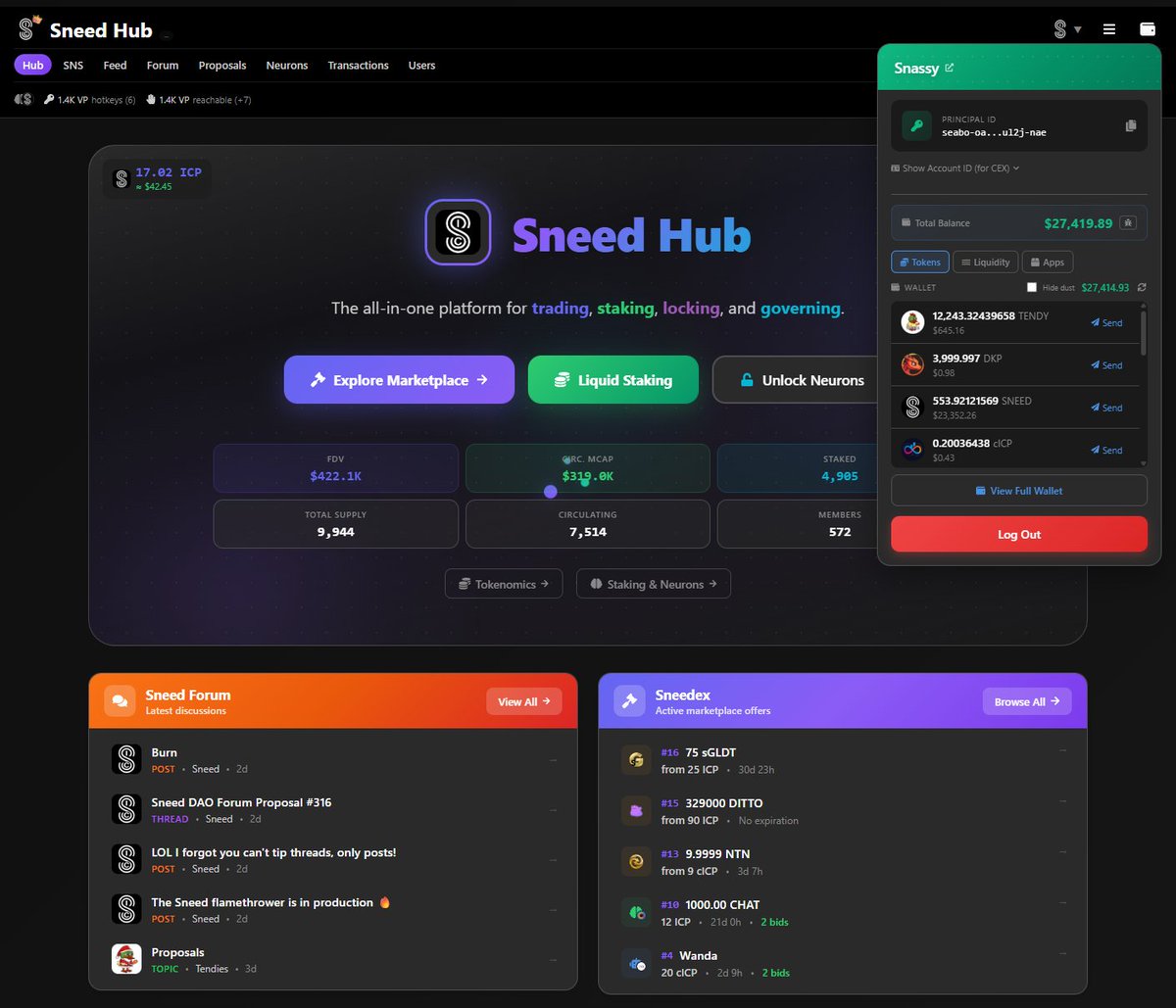

What should the price of $cICP be relative to $ICP? Is this not working as it should? @anvil_ic

#ICP was never about the cheap casino-floor hype. It was the raw crypto physics humming under the hood. That’s what dragged me in. Four years tangled in IC tech, recently pacing like a half-mad code-sheriff in a ’60s noir trench coat, and only now do I see the real beast waking up.

I went bull, bear, then deep into the basement, chain-smoking my way through five straight months of AI experiments, trying to push the machines until they squealed. And somewhere in that hallucinatory jungle of code and sleepless nights… the next logical evolution snapped into place like a loaded magical bazooka.

Maybe the IC founders smuggled alien tech in their pockets. Maybe it’s just the natural arc of centuries of human obsession. Doesn’t matter. What matters is the secret gear I’m holding now, humming like a reactor in the fog-of-war.

And I’ll tell you this straight: I’ve never been more god-tier bullish on IC. This thing is going to tear open the floorboards of the industry. Everyone gets disrupted. ICP → top 3. Sooner than you think.

Immediate fallout:

1. IC DeFi becomes sharp, clean, brutal, and actually works.
2. We need more developers, not fewer. A whole new wave of them. The frontier is wide open and screaming.

All my new code will be closed-source from now on.

I've contributed millions of lines of carefully written OSS code over the past decade and spent thousands of hours helping other people. If you want to use my libraries (1M+ downloads/month) in the future, you have to pay.

I made good money funneling people through my OSS and being recognized as an expert in several fields. This was entirely based on HUMANS knowing and seeing me by USING and INTERACTING with my code. No humans will ever read my docs again when coding agents do it in seconds. Nobody will even know it's me who built it.

Look at Tailwind: 75 million downloads/month, more popular than ever, revenue down 80%, docs traffic down 40%, 75% of the engineering team laid off. Someone submitted a PR to add LLM-optimized docs and Wathan had to decline: optimizing for agents accelerates his business's death. He's being asked to build the infrastructure for his own obsolescence.

Two of the most common OSS business models:

- Open Core: Give away the library, sell premium once you reach critical mass (Tailwind UI, Prisma Accelerate, Supabase Cloud...)
- Expertise Moat: Be THE expert in your library: consulting gigs, speaking, higher salary

Tailwind just proved the first one is dying. Agents bypass the documentation funnel. They don't see your premium tier. Every project relying on docs-to-premium conversion will face the same pressure: Prisma, Drizzle, MikroORM, Strapi, and many more.

The core insight: OSS monetization was always about attention. Human eyeballs on your docs, brand, expertise. That attention has literally moved into attention layers. Your docs trained the models that now make visiting you unnecessary. Human attention paid. Artificial attention doesn't.

Some OSS will keep going: wealthy devs doing it for fun or education. That's not a system, that's charity. Most popular OSS runs on economic incentives. Destroy them, and the maintainers stop playing.

Why go closed-source? When the monetization funnel is broken, you move payment to the only point that still exists: access. OSS gave away access hoping to monetize attention downstream. Agents broke downstream. Closed-source gates access directly.

The final irony: OSS trained the models now killing it. We built our own replacement.

My prediction: a new marketplace emerges, built for agents. Want your agent to use Tailwind? Prisma? Pay per access. Libraries become APIs with meters.

The old model: free code -> human attention -> monetization.
The new model: pay at the gate or your agent doesn't get in.