Bennett Hoffman

@bennhoffman

humans are my spirit animal. https://t.co/MZdP929Y8A

Just two humans having a perfectly natural conversation.

games are commercial art built with software. i’m not sure there’s another software industry as resistant to AI as gaming.

Miller's take that "Superintelligence" won't cure cancer and death is going to age really badly.

Firstly, it's wrong by definition. "Superintelligence" means an AI that greatly exceeds the cognitive performance of humans in all domains. That is literally the definition of "Superintelligence". So it must greatly exceed humans at curing cancer specifically. Presumably Miller thinks that humans are capable of curing cancer in principle (otherwise, why do we devote human researchers to this task?), therefore by definition any "Superintelligence" must be able to cure cancer.

Secondly, Miller starts bounding the capabilities of "Superintelligence" by comparing it to contemporary LLM-based systems. There are two ways this could go wrong:
- Either LLM-based systems are not capable of curing cancer, in which case they will never achieve "Superintelligence".
- Or sufficient improvements may yield LLM-based systems that do actually cure cancer, in which case they might make it to "Superintelligence" (or might not, if they are bad at some other task).

I think people like Geoffrey Miller should just stop talking about "Superintelligence" if they are going to abuse the term like this.

But set aside the definitional games: maybe AI systems that we can actually build will be bad at biomedical science? This is certainly the case today. Modern LLM-based systems are good at coding and at commonsense and generic research tasks, but not that good at anything else. LLMs work well when they get fast feedback. But so do humans. Anyone sufficiently intelligent can get good at math and coding. Getting good at biology requires a lot of equipment. We haven't really connected modern AIs to automated labs yet. When we do, I do expect significant progress, just as we saw progress when we connected AI to the internet.

In a way, LLMs are just the result of connecting the preexisting AI stuff to large-scale data. We already had neural language models in 2015.
I used to work on language models, just before LLMs took off. Small language models are not impressive or that useful. So I have seen a full cycle of this playing out over a decade. x.com/gmiller/status…

And then he [squints, checks notes] went back to work and built NeXT and Pixar.

Big headlines the other week about this huge (1.8 million people, 3 continents! Wow!) study out of Oxford looking at the effect of different diets on cancer risk. Vegetarianism cures cancer!!!

Just one problem. That's not what the data show. The study (nature.com/articles/s4141…) makes its big claims based on unadjusted p-values (that aren't even numerically reported anywhere in the main paper). But as anyone with a brain knows, performing 80 different hypothesis tests is bound to produce some false positives. The authors adjust for false discoveries, but don't really take it into account when discussing their data. They also perform sensitivity analysis, but again ignore the findings when discussing their results.

Journalists then picked up the narrative-convenient "significant" findings (while simultaneously ignoring inconvenient significant findings): BBC, Sky News, The Independent all reported the same claim: "A vegetarian diet can slash the risk of five types of cancer by as much as 30%, a new study has found."

Okay. But of the original 11 nominally significant findings in the study, which made it through both multiple comparisons adjustment and sensitivity analysis? Just the one. Which one? Risk of oesophageal squamous cell carcinoma in vegetarians versus meat eaters. HR=1.93 (95% CI: 1.30-2.87). Yup.
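To put a rough number on the "80 different hypothesis tests" point, here is a minimal sketch of the arithmetic. It assumes a conventional 0.05 threshold, that all 80 tests are independent, and that every null hypothesis is true. None of those assumptions come from the study itself, and the function names are made up for illustration.

```python
# Illustrative arithmetic only. Assumptions (not taken from the study):
# alpha = 0.05, all tests independent, every null hypothesis true.
ALPHA = 0.05
N_TESTS = 80


def expected_false_positives(n_tests: int, alpha: float) -> float:
    """Average number of tests that come out 'significant' by chance alone."""
    return n_tests * alpha


def prob_at_least_one_false_positive(n_tests: int, alpha: float) -> float:
    """Chance that at least one of n independent true-null tests passes alpha."""
    return 1.0 - (1.0 - alpha) ** n_tests


if __name__ == "__main__":
    print(f"expected false positives: {expected_false_positives(N_TESTS, ALPHA):.1f}")
    print(f"P(at least one): {prob_at_least_one_false_positive(N_TESTS, ALPHA):.3f}")
```

Under these assumptions you would expect about four chance "hits" out of 80, and getting at least one is close to certain. Real diet and cancer outcomes are correlated, so treat this as a ballpark, not a reanalysis of the paper.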

"Every software company in the world needs to have an @openclaw strategy" - Jensen at @NVIDIAAI GTC

Framing OpenClaw as one of the most important open source releases ever, they have announced NemoClaw, a reference platform for enterprise-grade secure OpenClaw, with OpenShell, network boundaries, and security baked in.

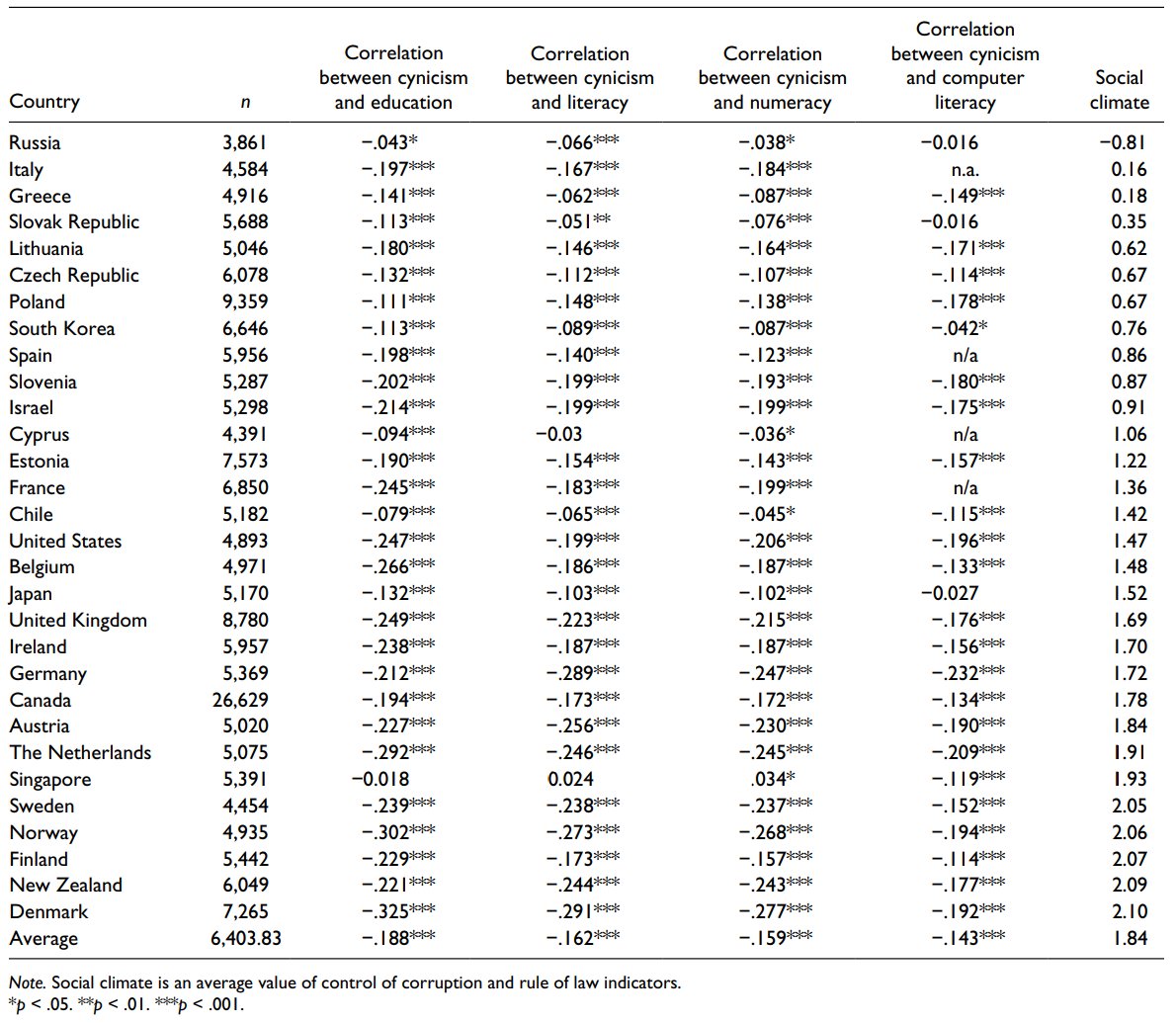

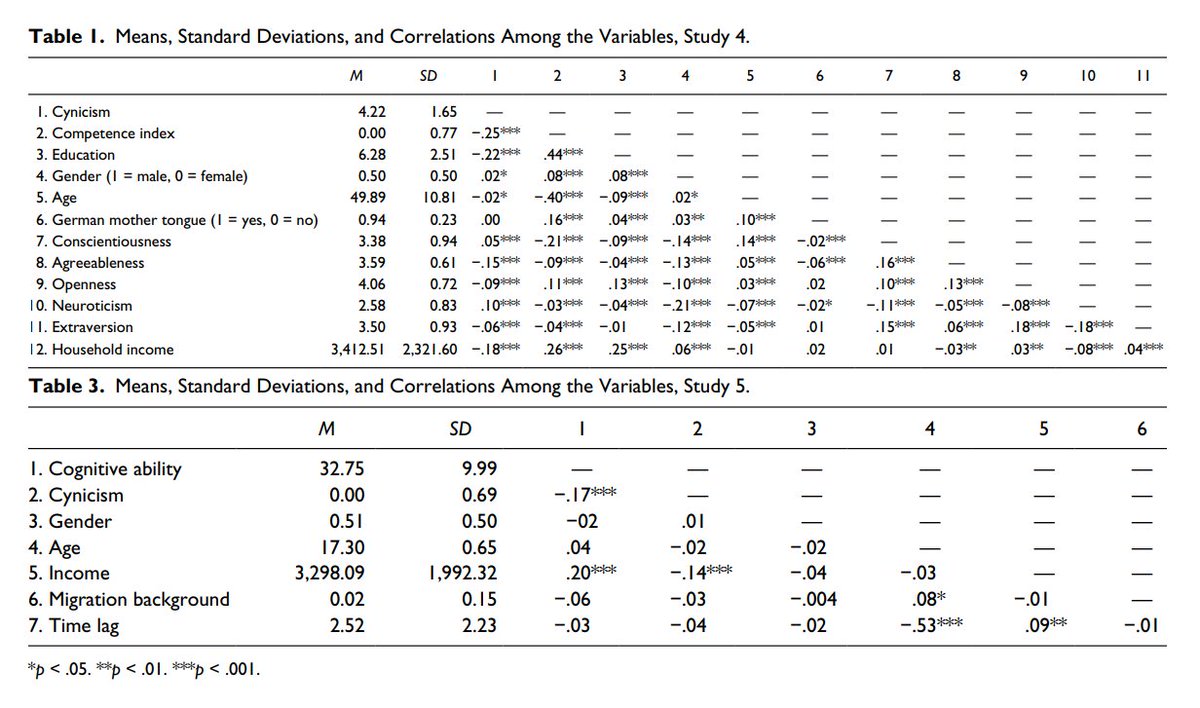

A worldwide survey of 200k people finds cynical people are thought of as smarter... but that, in reality, cynics test lower on cognitive & competency tests. As Stephen Colbert said: “Cynicism masquerades as wisdom, but it is the furthest thing from it.” journals.sagepub.com/doi/pdf/10.117…

• According to the story, the dog's cancer has not been cured.
• Absent all regulatory and manufacturing constraints, we could not just synthesize magic mRNA cancer cures. The technology is very promising, but it's not yet any kind of panacea.
• The emergent system of regulators and manufacturers is indeed far too conservative, and small-scale experimentation is much harder than it should be.

More people should read the first part of The Rise and Fall of Modern Medicine. Recommend @RuxandraTeslo, @PatrickHeizer for more.

Australian tech entrepreneur Paul Conyngham explains how he used ChatGPT/AlphaFold (spending $3,000, with no biology background) to create a custom mRNA vaccine to treat his dog's cancer tumors. Unreal.

A nice reminder that everything you're worried about is ultimately insignificant.