Aleksandar Botev

26 posts

@botev_mg

Research scientist at Google DeepMind.

The Training team @OpenAI is hiring researchers in London 🚀 Our twin missions are to train better LLMs and to serve them more cheaply. Get in touch if you are excited to collaborate on architecture design, reliable scaling, and faster optimization.

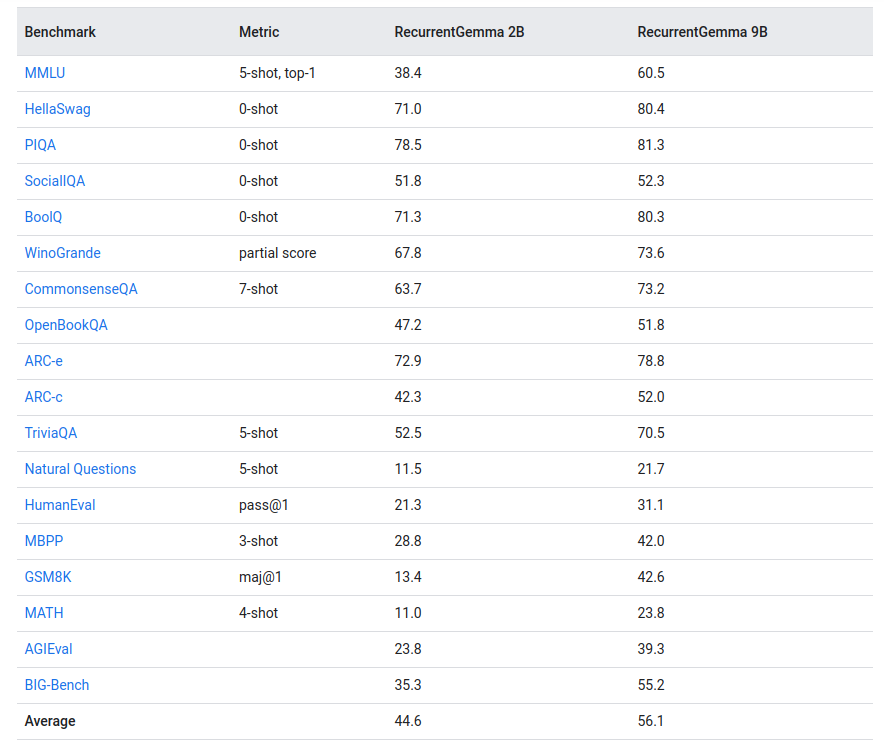

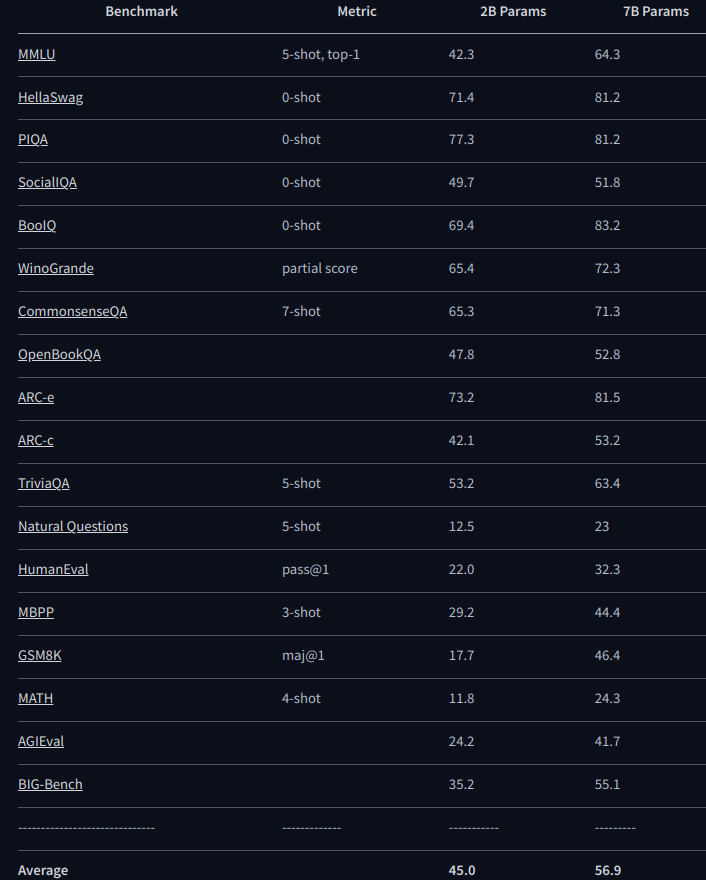

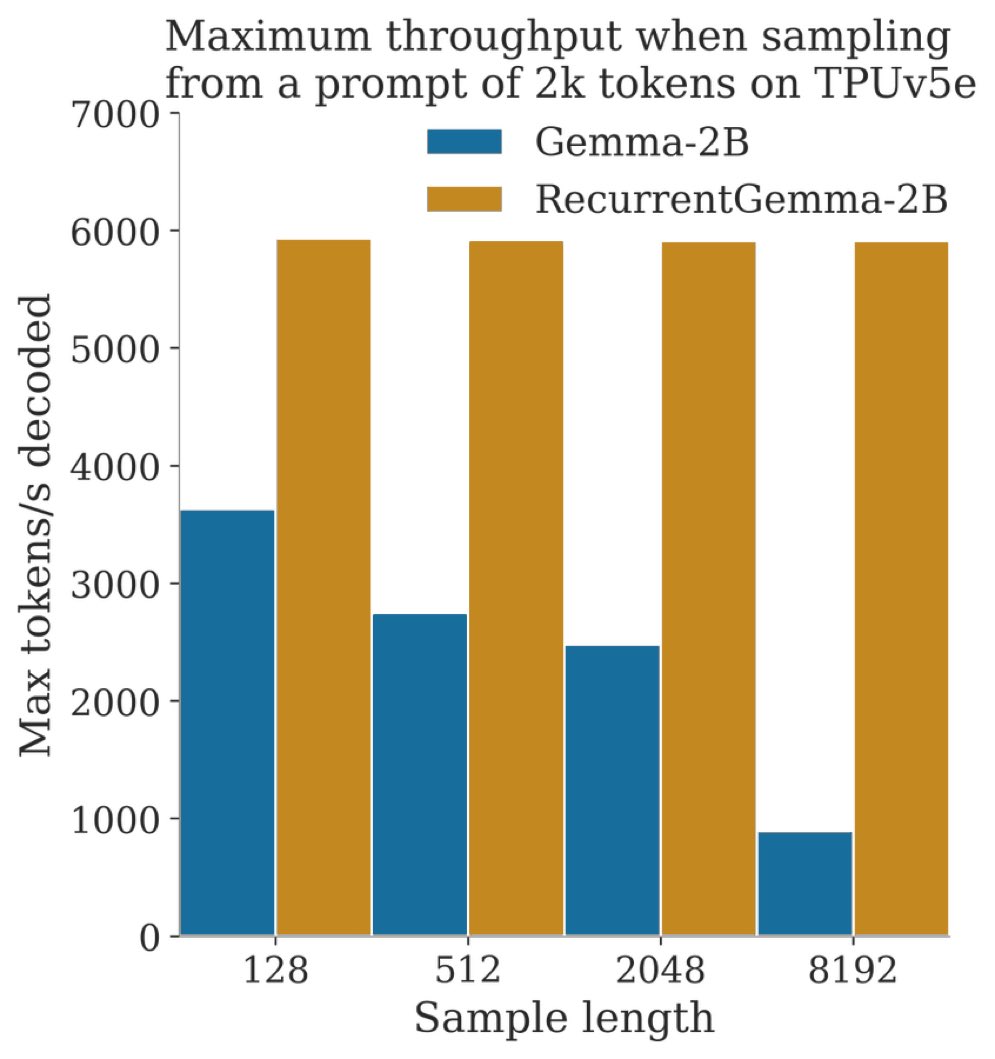

RecurrentGemma-9B is out! kaggle.com/models/google/… huggingface.co/google/recurre…

- Uses Griffin architecture, combining linear recurrence with local attention
- Downstream evals comparable to Mistral and Gemma
- Faster inference, especially for long sequences or large batch sizes

1/n

Releasing RecurrentGemma - one of the strongest 2B-param open models designed for fast inference on long sequences and massive throughput! Both pre-trained and IT checkpoints available + code - try them out here! Code: github.com/google-deepmin… Weights: kaggle.com/models/google/…

Announcing RecurrentGemma! github.com/google-deepmin…

- A 2B model with open weights based on Griffin
- Replaces transformer with mix of gated linear recurrences and local attention
- Competitive with Gemma-2B on downstream evals
- Higher throughput when sampling long sequences

Judging from the community's reaction to the Griffin paper, most people are unaware of how long it takes to publish an LLM paper at Google. We already had most of the results in the Griffin paper, including the final model and most of the writeup, before I left in September.