Bruce21b ⓥ


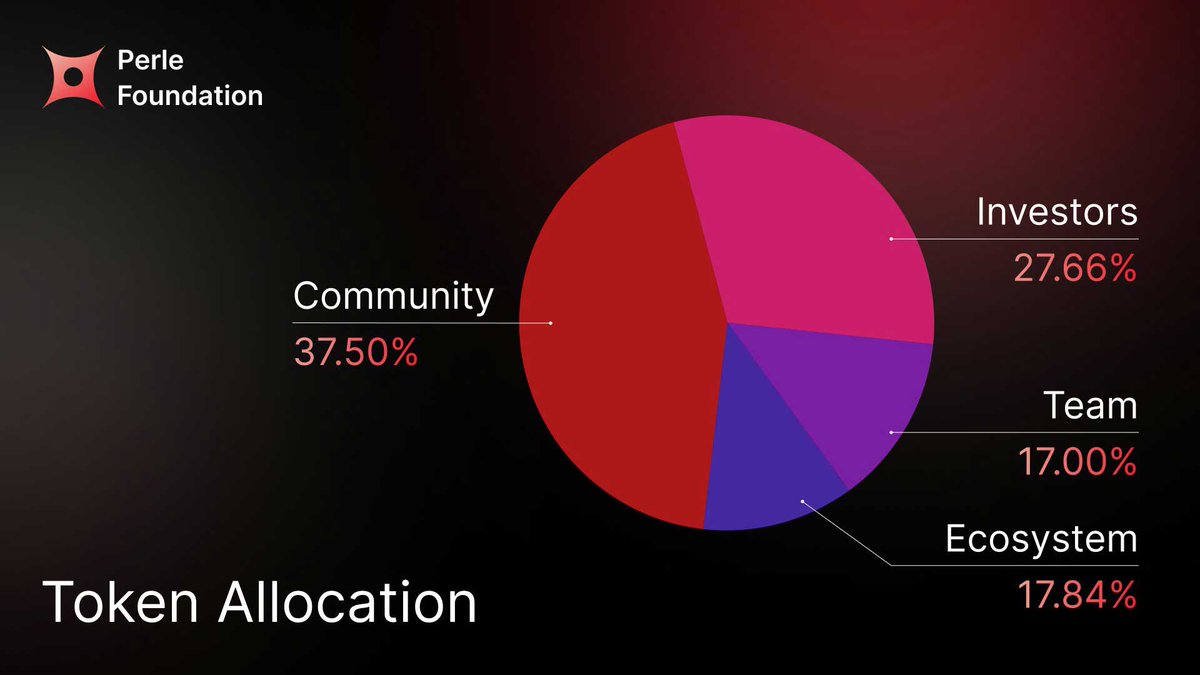

1% synthetic data in your training set is enough to break your model. Most pipelines don’t even realize they’ve already crossed that line.

Here’s what’s actually happening inside AI training today:

Every time a model trains on its own outputs, it loses something: rare knowledge, edge cases, the hard-to-capture signals that actually matter in the real world. Researchers call this model collapse, and the math is unambiguous.

Most pipelines today are built on:

• Data with no clear provenance
• Black-box annotation workflows
• Models training on synthetic outputs
• Bias that compounds with every generation

That’s not a data strategy. It’s a feedback loop with a predictable outcome.

The fix isn’t more data. It’s better data.

Human-verified training data:

• Preserves long-tail and edge-case knowledge instead of compressing it
• Provides verifiable, audit-ready data lineage
• Routes tasks to credentialed experts, not the lowest-cost annotators
• Reduces systemic bias through structured, diverse expert pools

The models winning in high-stakes domains (medicine, law, finance) aren’t the ones trained on the most data. They’re the ones trained on the most trustworthy data.

That’s what Perle is building.
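
For anyone who wants to see the "loses rare knowledge" effect in miniature, here is a rough toy sketch. It is not from any real training pipeline and makes strong simplifying assumptions: the "model" is just the empirical distribution of a small long-tailed corpus, and "training on its own outputs" is approximated by resampling each generation from the previous one. The function names (`make_long_tailed_corpus`, `simulate_generations`) and all parameter values are hypothetical, chosen only for illustration.

```python
import random


def make_long_tailed_corpus(num_types=200, head_count=200):
    """Build a toy corpus where item k appears about head_count / k times.

    Common items (small k) appear often; rare items (large k) appear once.
    This is an assumed stand-in for long-tail knowledge, not real data.
    """
    corpus = []
    for k in range(1, num_types + 1):
        corpus.extend([k] * max(1, head_count // k))
    return corpus


def simulate_generations(corpus, generations=10, seed=0):
    """Resample the data from itself once per generation.

    Each generation only ever sees samples of the previous generation,
    so an item type that drops out can never come back.
    Returns the number of distinct item types after each generation,
    with the original corpus as generation 0.
    """
    rng = random.Random(seed)
    surviving = [len(set(corpus))]
    data = corpus
    for _ in range(generations):
        # Sample with replacement from the previous generation's data.
        data = rng.choices(data, k=len(data))
        surviving.append(len(set(data)))
    return surviving


if __name__ == "__main__":
    history = simulate_generations(make_long_tailed_corpus())
    for gen, count in enumerate(history):
        print(f"generation {gen}: {count} distinct item types")
```

In this sketch the count of distinct item types can only stay flat or fall from one generation to the next, and the rare items are the first to go. Real model collapse involves learned models rather than plain resampling, so treat this as intuition, not evidence.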