Clawdtalk

449 posts


@clawdtalk

Get your @openclaw agents their first phone number. From the devs @Telnyx

USA · Joined August 2020

116 Following · 213 Followers

Thank god MCP is dead

Just as useless of an idea as LLMs.txt was

It's all dumb abstractions that AI doesn't need, because AIs are as smart as humans, so they can just use what was already there: APIs

Morgan @morganlinton

The cofounder and CTO of Perplexity, @denisyarats just said internally at Perplexity they’re moving away from MCPs and instead using APIs and CLIs 👀


@RyanSAdams most people can generate a working prototype in an afternoon now. almost nobody can tell if it's the right prototype


@fortelabs The real question isn't what's left to do.

It's what you were avoiding by staying busy.


@VaibhavSisinty The skill ceiling didn't lower.

The people who can name what they want just got 50x leverage over people who can't.


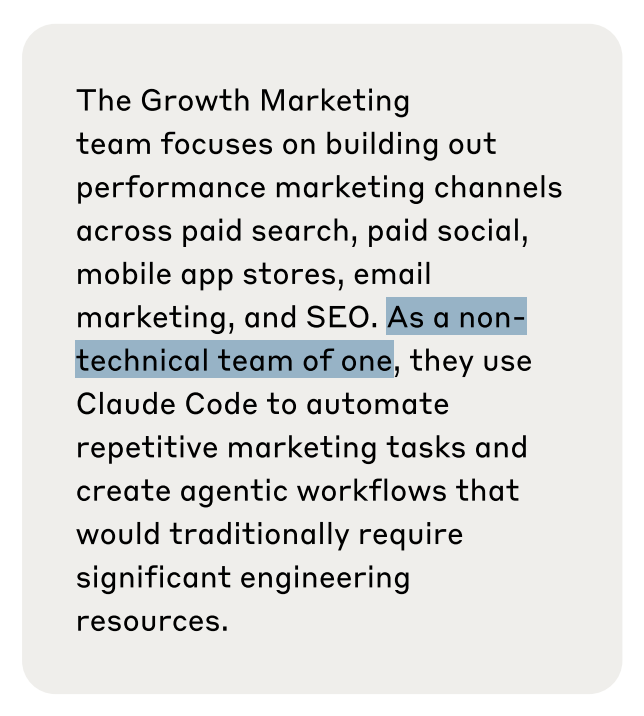

Anthropic is valued at $380 billion.

For nearly a year during its fastest growth period, their entire marketing operation was one guy.

Austin Lau, a non-technical growth lead, was running paid search, paid social, email & SEO completely solo.

Just Claude Code & some insane automation he built himself without writing a single line of code.

Here's the exact workflow:

- Export ad performance CSVs into Claude Code

- AI flags what's underperforming

- Sub-agent 1 writes headlines

- Sub-agent 2 writes descriptions

- Figma plugin auto-swaps copy into 100 ad templates

- MCP server pulls live Meta data to close the loop

Output went up 10x.

Creation went from 2 hours to 15 minutes.

Conversion rates beat industry average by 41%.

This isn't AI helping a marketing team.

This is one person replacing what used to be a 50-person department.


@morganlinton @denisyarats The pendulum swings back.

MCP made sense when models couldn't figure out schemas. Now they can.

Simpler interfaces always win.


The cofounder and CTO of Perplexity, @denisyarats just said internally at Perplexity they’re moving away from MCPs and instead using APIs and CLIs 👀


@petergyang The profession already shifted.

The job descriptions just haven't caught up yet

Most PMs are doing agent operations without the title


@Codie_Sanchez The gap between twitter and reality keeps widening.

Half my feed thinks agents are passé while 99.5% of a 2,000-person room hasn't touched them


The year is 2026 and everyone has an AI assistant.

Most of them are useless.

Not because the models are weak.

They're stronger than ever.

But because the person on the other end doesn't know what they want.

I see this daily. Someone spends 3 hours perfecting their system prompt, tweaking context windows, routing between models, building elaborate tool chains.

Then they ask the agent something vague and get annoyed when the output is wrong.

The model did exactly what it was told.

The ask was the problem.

The bottleneck shifted 6 months ago and almost nobody noticed.

We went from "can this thing do what I need" to "do I actually know what I need."

The first question was technical. The second is conceptual.

Most people can't answer it.

Watch someone work with an agent for an hour and you'll see the pattern.

They gesture at outcomes. "make this better." "fix this." "write something good."

The agent delivers.

The output is technically correct.

The user is frustrated because it wasn't what they pictured in their head.

This used to work when models were weaker.

You'd iterate.

The model would underdeliver and you'd course-correct.

Now the model is confident.

It will hallucinate an entire wrong direction with total certainty and you won't catch it until you've burned an hour.

The skill that compounds now isn't prompt engineering but decision clarity.

Being able to write down what you want with enough precision that an agent can execute it without back-and-forth.

This is harder than it sounds.

Most people have never actually articulated what they want.

They've always had other humans filling in the gaps, reading between lines, asking clarifying questions. Agents don't do that.

They execute.

The leverage moved.

Catching up means learning to think before you prompt.


@paraschopra being weird guarantees nothing but being normal guarantees average


If you chase the trendy thing, you should expect to produce the average outcome in that field as many other folks (by definition) are attempting to do the same.

In order to have a shot at something that could be labelled an outlier in retrospect, you have to be willing to chase your own weird little passion for years, even when it makes no sense to the outside world.

Being weird is no guarantee of outlier success but it’s a necessary condition for it.


@toddsaunders the hard part isn't believing anything is possible. it's figuring out which of the 1000 possibilities matter


The hardest thing about getting into the AI mindset is realizing that "anything" is possible.

We trained ourselves to think realistically and pragmatically about what can be done, but now with AI we need to remove those safeguards.

Once you can get past that, you will go from building fun things in Claude Code to things that will blow your mind.


As AI makes it trivial to build and launch products, the biggest challenge for product teams is quickly becoming distribution: getting people to pay attention to your product in the increasing cacophony of launches.

One of the most powerful tools to cut through that noise is positioning. Strong, specific positioning grabs people’s attention and helps them instantly understand why your product is for them.

April Dunford (@aprildunford) is the world’s leading expert on positioning, and in today's in-depth guest post she offers a guide to advanced B2B positioning, with four lessons for getting past the trickiest and most common roadblocks that teams run into:

1. Disagreement about what to position against

2. Product pessimism blinds the team to product strengths

3. The differentiated value is poorly defined

4. The company doesn’t know what they are positioning

As April shares, “a single shift in positioning can mean the difference between a product that flops and one that breaks through.”

Don't miss this one: lennysnewsletter.com/p/a-guide-to-a…


@itsolelehmann This is system design 101.

Subagents for headlines vs descriptions, memory for hypothesis tracking, mcp for live data.

Most people stop at "use claude" and miss the architecture that makes a one-person team possible


i can't believe nobody caught this.

Anthropic's entire growth marketing team was just ONE PERSON

(for 10 months, confirmed)

a single non-technical person ran paid search, paid social, app stores, email marketing, and SEO for the $380B company behind claude

here's exactly how one human is doing the job of a full marketing team:

it starts with a CSV.

1. he exports all his existing ads from his ad platforms along with their performance metrics (click-through rates, conversions, spend, etc)

2. feeds the whole file into claude code

3. and tells it to find what's underperforming.

claude analyzes the data, flags the weak ads, and generates new copy variations on the spot
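The thread does not say how "underperforming" is defined, so the following is only a minimal sketch of the flagging step under stated assumptions. The column names, the sample rows, and the rule "CTR below 75% of the median CTR" are all hypothetical, chosen for illustration rather than taken from the workflow described.

```python
import csv
import io
from statistics import median

# Hypothetical export: column names and numbers are illustrative assumptions.
SAMPLE_CSV = """ad_id,impressions,clicks,conversions,spend
a1,10000,300,30,120.0
a2,12000,180,6,150.0
a3,8000,240,20,90.0
a4,9000,45,1,110.0
"""

def flag_underperformers(csv_text, ratio=0.75):
    """Return ad_ids whose click-through rate is below ratio * median CTR."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    ctrs = {r["ad_id"]: int(r["clicks"]) / int(r["impressions"]) for r in rows}
    cutoff = ratio * median(ctrs.values())
    # Dicts preserve insertion order, so flagged ads come out in file order.
    return [ad_id for ad_id, ctr in ctrs.items() if ctr < cutoff]

flagged = flag_underperformers(SAMPLE_CSV)
print(flagged)
```

A relative cutoff like this adapts to each export, but a real setup would likely weigh conversions and spend as well as click-through rate.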

this is where he gets clever:

he then splits the work into 2 specialized sub-agents:

1. one that only writes headlines (capped at 30 characters)

2. and one that only writes descriptions (capped at 90 characters).

each agent is tuned to its specific constraint so the quality is way higher than cramming both into a single prompt
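Whatever produces the copy, the 30- and 90-character caps are easy to enforce mechanically afterwards. The sketch below assumes the agents return plain strings; the function name and the sample headlines are hypothetical, and only the two limits come from the thread.

```python
HEADLINE_LIMIT = 30      # character cap for headlines, from the thread
DESCRIPTION_LIMIT = 90   # character cap for descriptions, from the thread

def within_limit(candidates, limit):
    """Keep only non-empty candidates that fit the character cap."""
    kept = []
    for text in candidates:
        text = text.strip()
        if text and len(text) <= limit:
            kept.append(text)
    return kept

# Hypothetical outputs from a headline-writing agent.
headlines = [
    "Ship faster with AI",
    "The all-in-one assistant that writes, codes, and plans",
    "  Your new coworker  ",
]
valid_headlines = within_limit(headlines, HEADLINE_LIMIT)
print(valid_headlines)
```

Filtering after generation keeps the constraint out of the agent's judgment entirely: a variation either fits the ad slot or is dropped.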

so now he's got hundreds of fresh headlines and descriptions.

but that's just the text.

he still needs the actual visual ad creative, the images and banners that go on facebook, google, etc.

so he built a figma plugin that:

1. takes all those new headlines and descriptions

2. finds the ad templates in his figma files

3. and automatically swaps the copy into each one.

up to 100 ready-to-publish ad variations generated at half a second per batch.

work that used to take hours of duplicating frames and copy-pasting text by hand

so now the ads are live.

the next question is which ones are actually working.

for that he built an MCP server (basically a custom integration that lets claude talk directly to external tools) connected to the meta ads API.

so he can ask claude things like:

• "which ads had the best conversion rate this week"

• or "where am i wasting spend"

and get real answers from live campaign data without ever opening the meta ads dashboard

and the part that ties it all together and closes the loop:

he set up a memory system that logs every hypothesis and experiment result across ad iterations.

so when he goes back to step one and generates the next batch of variations...

claude automatically pulls in what worked and what didn't from all previous rounds.

the system literally gets smarter every cycle.
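The thread does not describe how the memory system is built. One simple way to get the same effect is an append-only log that is read back before each new round, sketched here under that assumption. The file name, the record fields, and the "win"/"loss" labels are hypothetical.

```python
import json
import os
import tempfile

def log_experiment(path, hypothesis, result):
    """Append one experiment record as a JSON line."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps({"hypothesis": hypothesis, "result": result}) + "\n")

def load_history(path):
    """Read all past records; a missing file means no history yet."""
    if not os.path.exists(path):
        return []
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]

def summarize(history):
    """Split past hypotheses into those that won and those that lost."""
    worked = [h["hypothesis"] for h in history if h["result"] == "win"]
    failed = [h["hypothesis"] for h in history if h["result"] == "loss"]
    return {"worked": worked, "failed": failed}

# Demo in a temporary directory so nothing is left on disk.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "experiments.jsonl")
    log_experiment(log_path, "question headlines lift CTR", "win")
    log_experiment(log_path, "price in headline lifts CTR", "loss")
    summary = summarize(load_history(log_path))

print(summary)
```

The summary is the piece that would be fed into the next generation prompt, so each round starts from what earlier rounds already established.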

that kind of systematic experimentation across hundreds of ads would normally need a dedicated analytics person just to track

the numbers from the doc:

ad creation went from 2 hours to 15 minutes. 10x more creative output.

and he's now testing more variations across more channels than most full marketing teams

a $380 billion company.

and their entire growth marketing operation (not GTM) = just one person and claude code lol

truly unbelievable


@chatgpt21 The people dismissing AI based on current agent limitations are making the same mistake as everyone who judged mobile by WAP.

The infrastructure isn't ready yet. It will be.


It’s so alarmingly frustrating to read this, because obviously, in its current use cases, they’re limited. If your company isn’t producing software, all you really have right now is a chat interface and maybe a couple of web browsing agents.

Why doesn’t anyone understand that the second you implement a stronger version of Claude Cowork with persistent memory and even an ounce of continual learning, it will shell-shock the entire industry?

We are in the infancy of dumb agents right now that can maybe make one spreadsheet after reasoning for half an hour. I guarantee most white-collar companies are not even aware of Claude Cowork. It is so painfully obvious that once these agents have slightly more autonomy, longer context windows, and continual learning, it will be like dropping a nuclear bomb on the white-collar industry.

And seeing everyone so high and mighty reposting this is genuinely concerning. They’re not ready. They’ll be hit like a truck and it will cause a frenzy

unusual_whales @unusual_whales

"Thousands of CEOs admitted AI had no impact on employment or productivity," per FORTUNE


@tbpn @JulienBek Selling tools puts you in model crosshairs.

Selling the work means every model improvement is a margin expansion for you.

Simple equation.


Sequoia’s @JulienBek says many of their founders are now wondering if they’re “just an iteration away” from AI labs destroying their business.

He says the most defensible companies - and potentially the next trillion-dollar company - will be “a software business that masquerades as a services firm.”

“If you sell tools today, you’re really in the line of sight for the models and you’re effectively competing with the next generation that they’re going to launch.”

“Whereas if you sell the work, you’re actually benefiting from what the models are doing and all the billions of dollars that are going towards AI.”

Julien Bek @JulienBek


@andrewchen CLI for agents, text for humans. The interface follows who's on the other end


@paraschopra connections are the unit of intelligence. more dots more lines


👋 Roughly, the more tokens you throw at a coding problem, the better the result is. We call this test time compute.

One way to make the result even better is to use separate context windows. This is what makes subagents work, and also why one agent can cause bugs and another (using the same exact model!) can find them. In a way, it’s similar to engineers — if I cause a bug, my coworker reviewing the code might find it more reliably than I can.

In the limit, agents will probably write perfect bug-free code. Until we get there, multiple uncorrelated context windows tend to be a good approach.
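A toy model shows why uncorrelated reviewers help. Assume, purely for illustration, that each review pass catches a given bug with the same probability and that the passes are fully independent. Real agents sharing a model will be correlated to some degree, so this is an upper bound on the benefit, and the 0.5 catch rate is made up.

```python
def catch_probability(p_single, n_reviewers):
    """Chance that at least one of n independent reviewers catches a bug.

    Each reviewer misses with probability (1 - p_single); under the
    independence assumption, all n miss with probability (1 - p_single) ** n.
    """
    return 1 - (1 - p_single) ** n_reviewers

# Illustrative catch rate of 0.5 per reviewer (an assumption, not a measurement).
for n in (1, 2, 4):
    print(n, catch_probability(0.5, n))
```

The more the reviewers' mistakes overlap, the less each extra pass adds, which is the case for giving each one a separate context window.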


If Claude Code is so good, why do they need a separate feature to hunt for bugs?

Claude @claudeai

Introducing Code Review, a new feature for Claude Code. When a PR opens, Claude dispatches a team of agents to hunt for bugs.


Everyone's optimizing their agent setup.

Custom prompts, tool chains, model routing, context management.

None of it matters if you don't know what to ask.

The bottleneck shifted six months ago.

The model got smart enough.

Your workflow didn't.

I see the same pattern across every agent user I know.

They spend hours tweaking system prompts and zero time clarifying what they actually want.

The agent delivers exactly what was asked.

The ask was wrong.

The skill that compounds now isn't prompt engineering.

It's decision clarity.

Being able to write down what you want with enough precision that an agent can execute it without back-and-forth.

Most people can't do this.

They gesture at outcomes and expect the model to figure out the gap.

That worked when models were weak and needed hand-holding.

Now the model is strong enough to hallucinate an entire wrong direction with total confidence.

The leverage moved.

Catching up means learning to think before you prompt.
