Cristián Llull

@cllullt

PhD student in Computer Science, U. of Chile. Passionate about learning the workings of the world and interacting with the environment through computers.

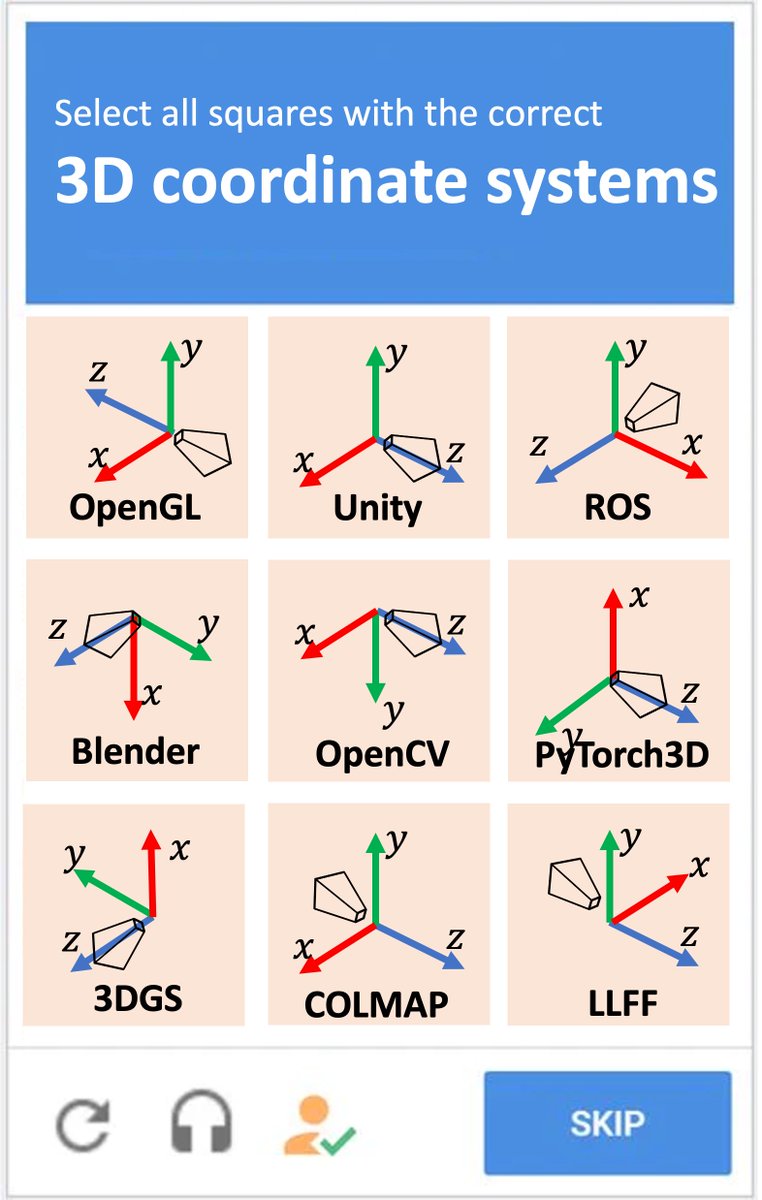

wait wait wait. So a new kind of 3D representation (Gaussian splats) was invented and they decided to use Y-DOWN as the standard up direction?? In 2023?!?!!! Need to have some words w/ my old friends at INRIA... and I guess we need a new chart...
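To make the convention clash concrete, here is a minimal sketch. It assumes a right-handed scene stored with -Y as up, as the post describes, and that you want it in a +Y-up frame. A 180° rotation about the X axis, (x, y, z) → (x, −y, −z), does the job. Negating only Y would instead mirror the scene (determinant −1) and flip its handedness. The helper names (`y_down_to_y_up`, `det3`) are hypothetical and not taken from any Gaussian splatting codebase.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]

# 180-degree rotation about the X axis: a proper rotation (det = +1),
# so the scene's handedness is preserved.
ROT_X_180 = ((1.0, 0.0, 0.0),
             (0.0, -1.0, 0.0),
             (0.0, 0.0, -1.0))

# Negating only Y is a reflection (det = -1): it would mirror the scene.
MIRROR_Y = ((1.0, 0.0, 0.0),
            (0.0, -1.0, 0.0),
            (0.0, 0.0, 1.0))


def det3(m) -> float:
    """Determinant of a 3x3 matrix given as nested tuples."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def y_down_to_y_up(points: List[Point]) -> List[Point]:
    """Hypothetical helper: map points from a -Y-up frame to a +Y-up frame."""
    out = []
    for p in points:
        # Matrix-vector product ROT_X_180 @ p, one output coordinate per row.
        out.append(tuple(sum(ROT_X_180[r][k] * p[k] for k in range(3)) for r in range(3)))
    return out


if __name__ == "__main__":
    print(y_down_to_y_up([(1.0, 2.0, 3.0)]))  # [(1.0, -2.0, -3.0)]
    print(det3(ROT_X_180))  # 1.0
    print(det3(MIRROR_Y))   # -1.0
```

Because the rotation is its own inverse, applying it twice returns the original points. The same function therefore converts in either direction.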

Congratulations to our student Cristián Llull for successfully passing his doctoral qualification exam! This achievement marks an important first step on his path to earning a Ph.D. We are confident he will continue to demonstrate dedication, research excellence and academic rigor.

🚀 Exciting news! We’re introducing VGG-T³: a scalable model for offline feed-forward 3D reconstruction that finally tackles the "quadratic bottleneck." Ever wanted to have VGGT reconstruct a 1,000-image scene in seconds instead of 10 minutes and use it for visual localization?

SHREC 2026: reconstruct high-frequency geometry from 90 views (COLMAP poses). Dataset out now. Registration → cllull@dcc.uchile.cl. Submissions due Apr 3, 2026. Details: shapevision.dcc.uchile.cl/cllull-shrec20… #ComputerVision #3DReconstruction #Photogrammetry

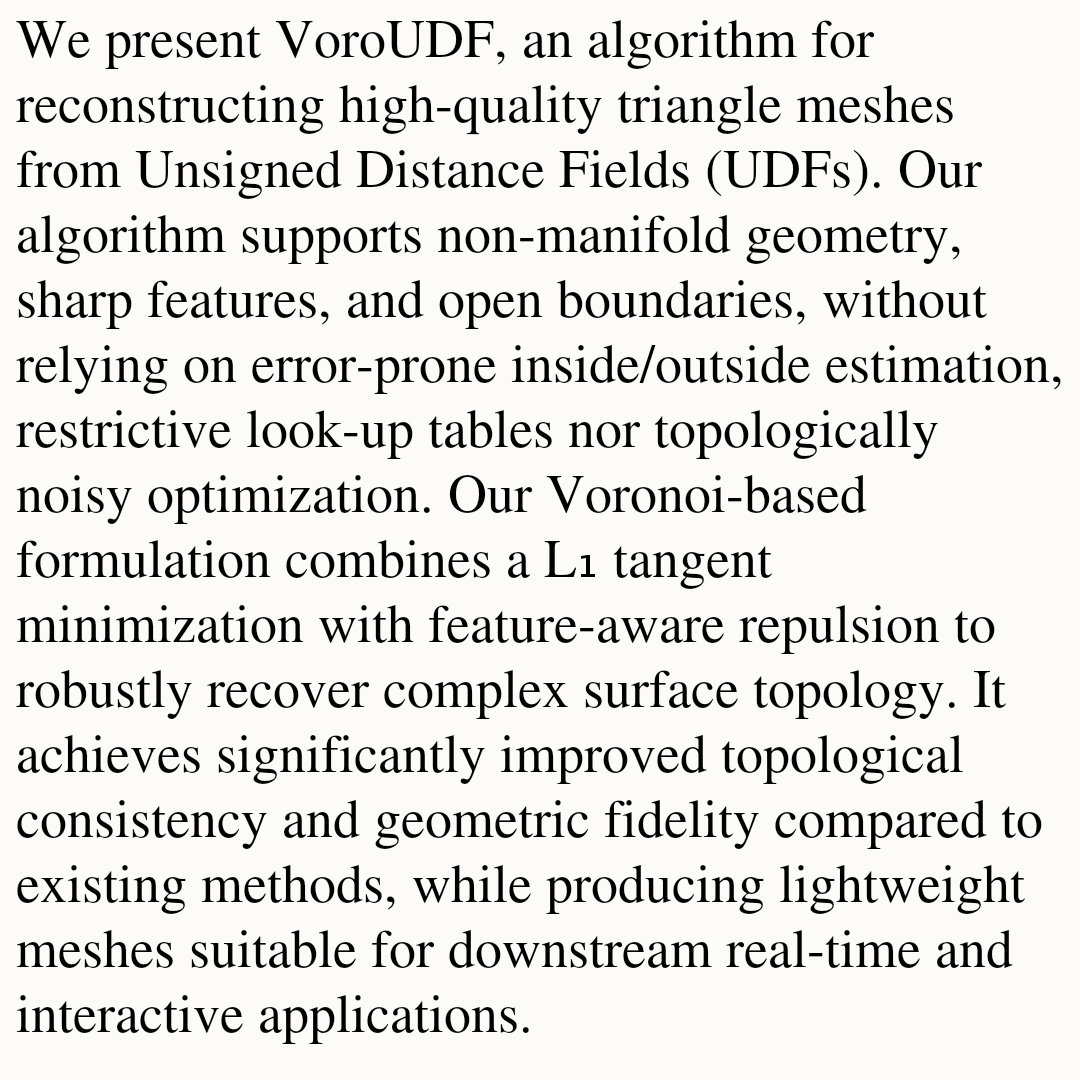

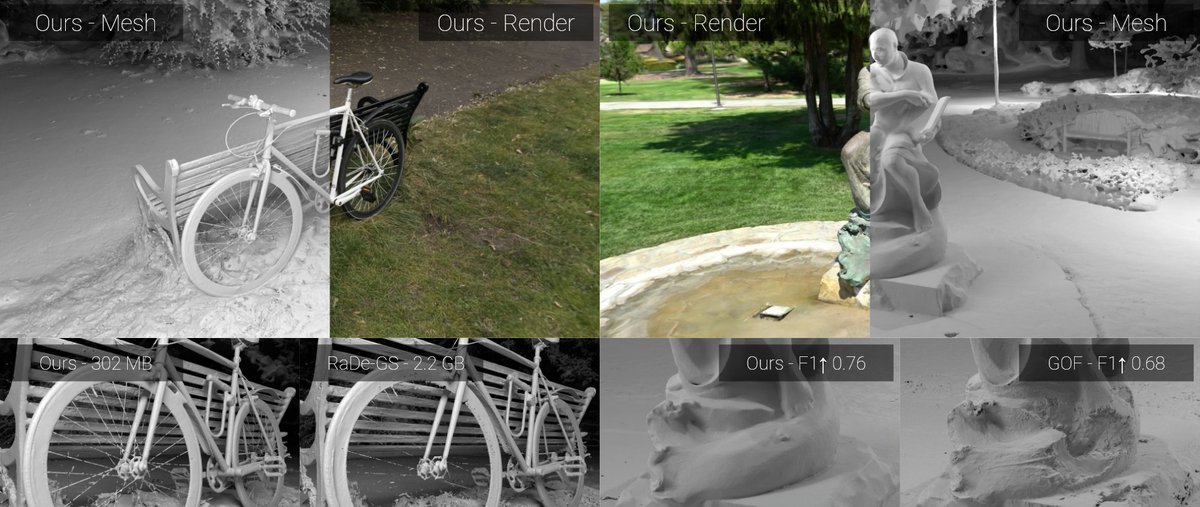

🚀 I’m excited to share my final work as a PhD student: MeshSplatting: Differentiable Rendering with Opaque Meshes
- arXiv: arxiv.org/abs/2512.06818
- Code: github.com/meshsplatting/…
- Project page: meshsplatting.github.io

Excited to share this demo from Over the Reality! Watch our Unitree Go2 robodog navigate our office while reconstructing the 3D space in real time using the VGGT-based foundation vision model. This is a prime example of machine perception in action, turning raw RGB camera feeds into rich, detailed 3D maps!

The robodog's RGB camera generates a dense, textured 3D reconstruction via VGGT from just a few frames, capturing nuances like object shapes and surfaces with impressive fidelity (main view). Compare that to the standard LiDAR system (top right): its output is sparser and more point-cloud focused, and it lacks the visual richness. Vision models are closing the gap fast!

What's powering this? VGGT, a cutting-edge foundation model for 3D perception, trained on datasets orders of magnitude smaller than our massive OVER 3D maps dataset. docs.google.com/spreadsheets/d…

Imagine the leap when we apply OVER's scale to VGGT-like transformer-based architectures: denser reconstructions, better generalization, and a revolution for robotics, machine perception & AR!

Stay tuned for more breakthroughs at the intersection of AI, robotics, and DePIN. We're building the future of Physical AI and Spatial Computing at @OVRtheReality.

What do you think? Ready for robodogs in your world? Drop your thoughts! 🤖🌐