crypto_1984

2.4K posts

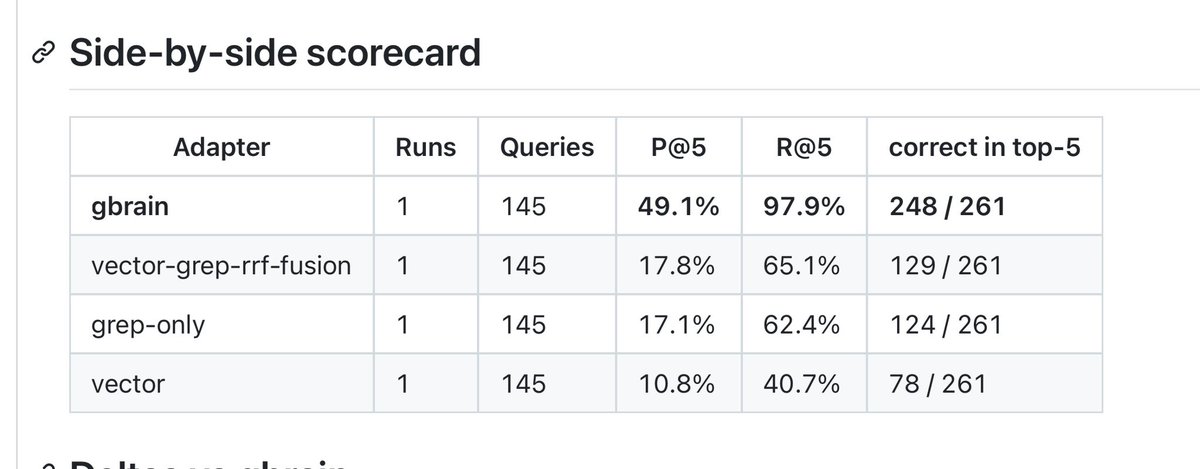

@garrytan this matches what i keep seeing in agent/RAG work: graph, keyword, and vector fail in different ways, so the product win is orchestration + evals, not one retrieval trick. re-embed-on-write is underrated too; stale context is the silent eval killer.

Uranium trade feels like one of the most obvious for the next 1-2 years

@garrytan Can you share your agent.md? Your agent is really articulate.

Jane Street made ~$40B in 2025 with 3,500 employees, a ~2x from the year before. At ~65-70% profit margin, that's $8M profit / employee, the highest for a 1000+ ppl company. High-frequency trading continues to be the most efficient money making engine.

I want to share an old story about my Jane Street interview in 2014. Jane Street was known for hiring a lot of math, physics and CS olympiad winners from top universities and putting them through many rounds - including, for trading roles, a gauntlet of mental math.

It was my 6th interview and my final round and I recall being asked "What is the next day after today in DD/MM/YYYY where all the digits are unique?" They'd toy with you and say "You can use a pencil and paper, if you want" but you knew that was an instant no.

Painstakingly and as quickly as I could, I came to an answer. "How confident are you that this is correct on a 0-1 probability scale?" the interviewer said. "0.95", I blurted out, not fully knowing how to answer that. "Are you sure?"

After thinking harder for a few more seconds, I realized I could've flipped the digits around to get a closer date. I gave the interviewer my answer. It was correct. "0.95 huh?" he chuckled. That's when I knew I failed.

Note: fwiw, other companies that come close in efficiency are
- Tether ($90M+ profit/emp)
- Hyperliquid ($80M+ profit/emp)

and on revenue:
- Valve ($50M/emp)
- OnlyFans ($37M/emp)
- Craigslist ($14M/emp)
- Anthropic ($12M/emp, run rate)
- OpenAI ($8M/emp, run rate)

For comparison, Nvidia is very efficient at scale and is $4.4M/emp.
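
For anyone who wants to check the interview puzzle without the mental math, here is a minimal brute-force sketch in Python. The function names `has_unique_digits` and `next_unique_digit_date` are made up for illustration, and the start date of 1 January 2014 is an assumption, since the post only says the interview took place in 2014. This is the pencil-and-paper version the interviewer was daring you not to use, not the trick the puzzle is really testing.

```python
from datetime import date, timedelta
from typing import Optional


def has_unique_digits(d: date) -> bool:
    """True if the 8 digits of d written as DDMMYYYY are all different."""
    digits = f"{d.day:02d}{d.month:02d}{d.year:04d}"
    return len(set(digits)) == len(digits)


def next_unique_digit_date(today: date) -> Optional[date]:
    """Walk forward one day at a time, strictly after `today`.

    Returns None if no such date exists before datetime's upper limit.
    """
    d = today
    while d < date.max:
        d += timedelta(days=1)
        if has_unique_digits(d):
            return d
    return None


if __name__ == "__main__":
    # Assumed start date; the post does not give the exact interview day.
    answer = next_unique_digit_date(date(2014, 1, 1))
    print(answer.strftime("%d/%m/%Y"))  # prints 17/06/2345
```

The long gap follows from the digit constraints: in any 20xx year the 0 and 2 are already taken, which rules out every month, and 21xx and 22xx fail for similar reasons, so the search lands in the 2300s for any start date in 2014.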

Banks used to hold paper silver that didn't actually exist. For every real ounce, there were 8 paper IOUs. A new regulation just forced them to hold 85 cents in real money for every dollar of that paper. Now some banks are exiting the paper silver market entirely (1/13):