Cheng-Yu Hsieh

54 posts

@cydhsieh

PhD student @UWcse

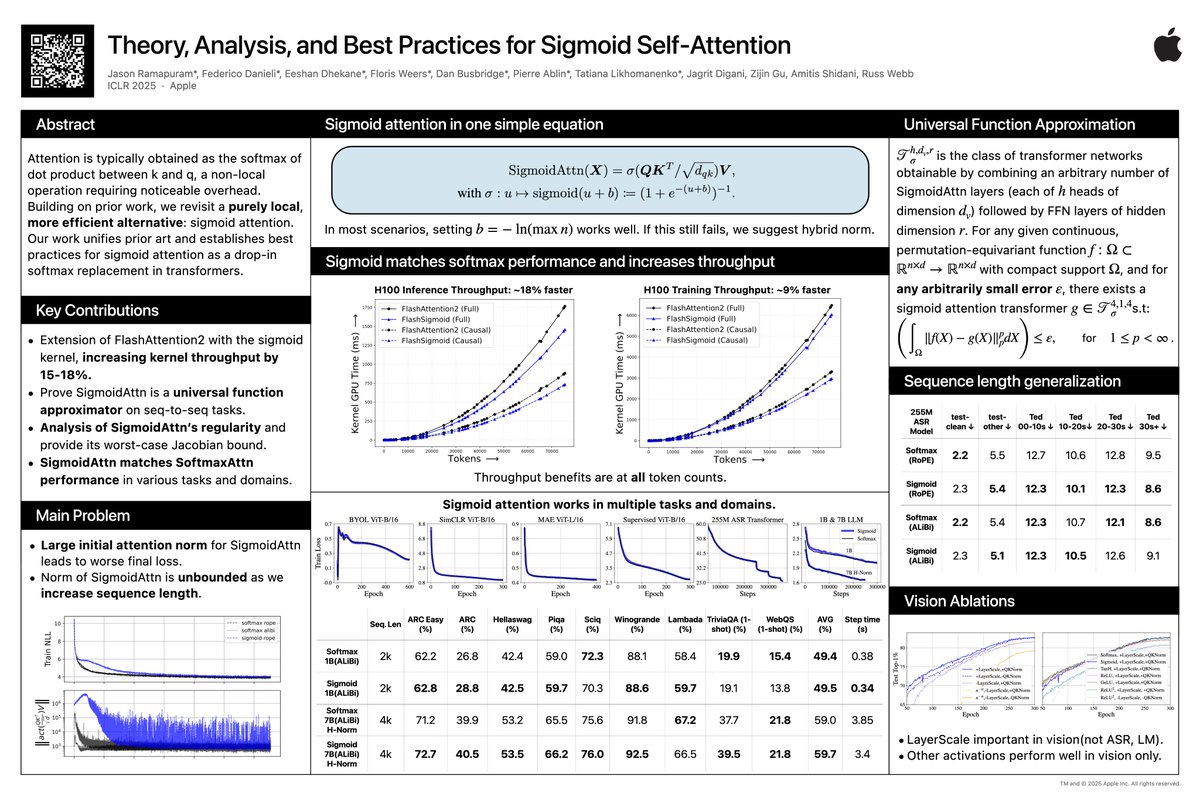

Small update on SigmoidAttn (arXiv incoming).
- 1B and 7B LLM results added and stabilized.
- Hybrid Norm [on embed dim, not seq dim], `x + norm(sigmoid(QK^T / sqrt(d_{qk}))V)`, stabilizes longer sequences (n=4096) and larger models (7B). H-norm is used with Grok-1, for example.
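A minimal NumPy sketch of the formula in the post, `x + norm(sigmoid(QK^T / sqrt(d_{qk}))V)`, for a single head. Assumptions not stated in the post: `norm` is a parameter-free LayerNorm over the embedding (last) axis, causal masking is done by zeroing weights on future positions, any bias term on the scores is omitted, and all function and weight names are hypothetical. It is an illustration, not the SigmoidAttn reference implementation.

```python
import numpy as np

def layer_norm(x: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    """Parameter-free LayerNorm over the last (embedding) axis, not the sequence axis."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def sigmoid(z: np.ndarray) -> np.ndarray:
    """Numerically stable logistic sigmoid via tanh (no overflow for large |z|)."""
    return 0.5 * (1.0 + np.tanh(0.5 * z))

def sigmoid_attn_hybrid_norm(x, w_q, w_k, w_v, causal=True):
    """Hypothetical single-head block computing x + norm(sigmoid(Q K^T / sqrt(d_qk)) V).

    x: (n, d_model); w_q, w_k: (d_model, d_qk); w_v: (d_model, d_model)
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    d_qk = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_qk)      # (n, n) scaled dot products
    weights = sigmoid(scores)             # elementwise; rows do NOT sum to 1
    if causal:
        n = x.shape[0]
        # Zero out attention to future tokens (assumed causal LM setting).
        weights = weights * np.tril(np.ones((n, n)))
    out = weights @ v                     # (n, d_model)
    return x + layer_norm(out)            # norm over embed dim, then residual add
```

Because the sigmoid is applied elementwise, the attention weights in a row are not normalized to sum to 1 as they are with softmax, so the magnitude of `weights @ v` can grow with sequence length. Normalizing that output over the embedding dimension before the residual add is one plausible reason the post reports better stability at n=4096.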

Introducing AURORA 🌟: our new training framework that enhances multimodal language models with Perception Tokens, a game-changer for tasks requiring deep visual reasoning, such as relative depth estimation and object counting. Let’s take a closer look at how it works.🧵[1/8]

🚨Can we "internally" detect if LLMs are hallucinating facts not present in the input documents? 🤔 Our findings:
- 👀Lookback ratio (the extent to which LLMs put attention weights on context versus their own generated tokens) plays a key role
- 🔍We propose a hallucination detector based on lookback ratios: Lookback Lens
- 📊It accurately detects contextual hallucinations and reduces them during decoding, even better than hidden-state-based detectors or NLI models
- 💪It transfers across tasks and models

Check our paper & code👇
📝arxiv.org/abs/2407.07071
👨‍💻github.com/voidism/Lookba…
#NLProc #NLP #LLMs #LLaMA
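A minimal NumPy sketch of the lookback-ratio idea in the Lookback Lens post, assuming the ratio is computed per attention head as the mean attention weight on context positions divided by the sum of that mean and the mean weight on previously generated positions. Averaging ratios over a generated span and scoring one feature per (layer, head) with a logistic classifier is an assumed detector design; `lookback_ratio`, `span_features`, `hallucination_score`, and the classifier weights are hypothetical and are not the Lookback Lens API (the linked repo has the authors' code).

```python
import numpy as np

def lookback_ratio(attn_row: np.ndarray, n_context: int) -> np.ndarray:
    """Lookback ratio per head for one decoding step (hypothetical helper).

    attn_row: (n_layers, n_heads, seq_len) attention weights of the token being
              generated over all earlier positions (context first, then generated).
    n_context: number of leading positions that belong to the input context.
    Returns (n_layers, n_heads) with values in [0, 1].
    """
    ctx = attn_row[..., :n_context].mean(axis=-1)
    if attn_row.shape[-1] > n_context:
        new = attn_row[..., n_context:].mean(axis=-1)
    else:
        # First generated token: nothing generated yet, so no "new" attention mass.
        new = np.zeros_like(ctx)
    return ctx / (ctx + new)

def span_features(steps: list, n_context: int) -> np.ndarray:
    """Average per-step ratios over a generated span; one feature per (layer, head)."""
    ratios = np.stack([lookback_ratio(a, n_context) for a in steps])
    return ratios.mean(axis=0).reshape(-1)

def hallucination_score(features: np.ndarray, weights: np.ndarray, bias: float) -> float:
    """Hypothetical logistic detector; weights and bias would be learned from labeled spans."""
    return float(1.0 / (1.0 + np.exp(-(features @ weights + bias))))
```

A ratio near 1 means a head mostly attends to the input context, while a ratio near 0 means it mostly attends to the model's own output; the detector then learns which heads' ratios separate grounded spans from hallucinated ones.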

Will training on AI-generated synthetic data lead to the next frontier of vision models?🤔 Our new paper suggests NO—for now. Synthetic data doesn't magically enable generalization beyond the generator's original training set. 📜: arxiv.org/abs/2406.05184 Details below🧵(1/n)
