David Floyer

1.3K posts

@dfloyer

Wide & deep international datacenter experience

San Francisco, CA · Joined March 2009

115 Following · 1.1K Followers

Dave/Ben

Yes, the way that Agentic AI is being architected at the moment would lead to more CPUs. And that is not the worst aspect by far. In my analysis below I suggest that NVIDIA and the leading Frontier models will provide the capabilities and architectures to manage this and many other Agentic issues within the Frontier models and Nvidia CUDA extensions to move appropriate parts of the orchestration from CPU to GPU & DPU. This will allow Frontier models to drive Agentic AI faster. It will also allow NVIDIA to lower costs, increase TAM with more directly attached Arm & probably the joint Nvidia/Intel CPUs, and provide enterprises with a cheaper, more resilient, more compliant, and lower-latency solution. Win, Win, Win.

Below is my earlier posting, which addresses part of where Agentic AI has to go.

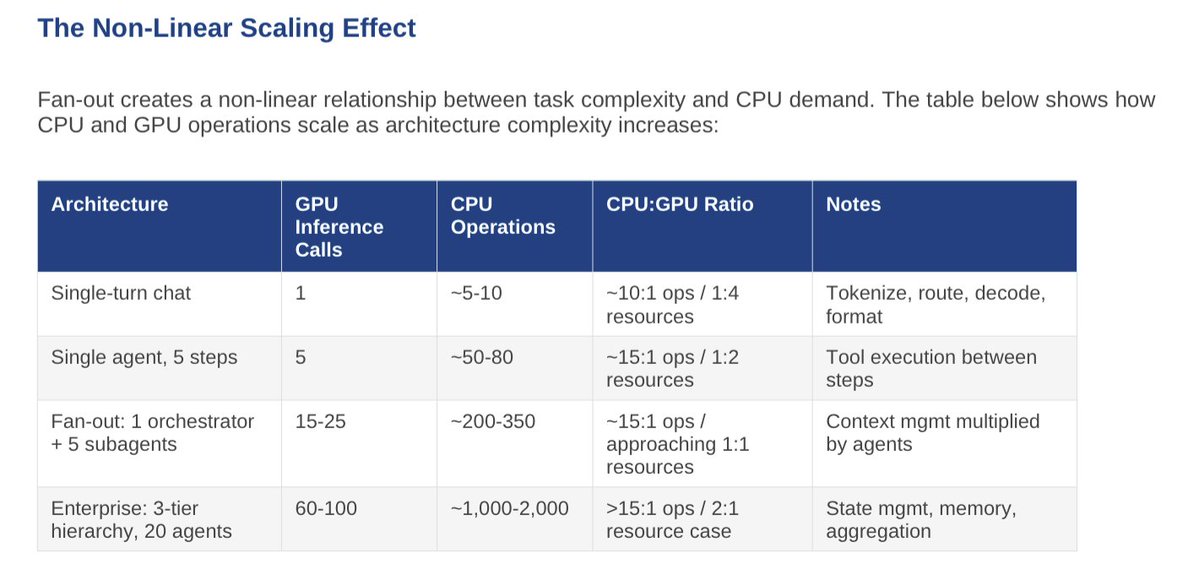

Great chart. The risk it highlights is real: fan-out multiplies coordination burden. If that coordination occurs on a loosely coupled CPU infrastructure, the system spends more time moving state and synchronizing agents than reasoning. Latency goes to hell in a hand-basket.

However, that outcome assumes the agent control plane lives outside the AI infrastructure. NVIDIA's direction is the opposite: keep the coordination layer within the AI-factory fabric itself.

Grace Arm CPUs today and Vera and Nvidia/Intel in the future sit in the same racks and on the same high-bandwidth fabric as the GPUs. They provide a local control plane for orchestration, data preparation, and agent coordination. With CUDA, NVLink/NVSwitch, and GPU-aware cluster schedulers, multi-agent execution can be treated as a native workload rather than a distributed application that calls models via APIs.

At the same time, a growing portion of the infrastructure overhead moves off CPUs entirely. BlueField DPUs already offload networking, storage protocols, security enforcement, and telemetry. That removes a large fraction of the “CPU operations” your chart assumes.

A second shift is architectural: many multi-agent patterns are really parallel reasoning branches followed by aggregation. When those branches run within the inference fabric rather than as separate CPU agents, coordination costs drop dramatically.
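As a rough illustration of the "parallel branches followed by aggregation" shape, here is a minimal Python sketch. The functions `reason` and `aggregate` are hypothetical stand-ins, not any vendor's API; the sketch shows only the fan-out/fan-in pattern and says nothing about where the branches physically run.

```python
from concurrent.futures import ThreadPoolExecutor


def reason(branch_prompt: str) -> str:
    # Hypothetical stand-in for one reasoning branch (e.g. a model call).
    return f"answer to: {branch_prompt}"


def aggregate(answers: list[str]) -> str:
    # Hypothetical aggregation step; here it simply joins the branch outputs.
    return " | ".join(answers)


def fan_out_then_aggregate(prompts: list[str]) -> str:
    # Fan out: run every branch concurrently. Fan in: one aggregation step.
    with ThreadPoolExecutor(max_workers=max(1, len(prompts))) as pool:
        answers = list(pool.map(reason, prompts))  # map preserves input order
    return aggregate(answers)


result = fan_out_then_aggregate(["plan", "critique", "verify"])
```

The only coordination point is the single aggregation step, which is why the cost of this pattern depends so heavily on where the branches and the aggregator sit relative to each other.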

In my work, I call this integrated layer the Cognitive Surface, where agents, models, enterprise semantic and causal data, and governance run within the same AI-factory environment. Under that model, the CPU layer remains important for orchestration, but the dominant resource remains the GPU fabric where reasoning is produced.

Over time, the CPU/GPU ratio is more likely to fall than rise.

I do get your point about CPU counts exploding to keep up, Ben. And I appreciate the work you're doing on the stages - excellent. I have to concede that in stages 3 and 4 of your diagram the ratio of CPU:GPU will have to flip. So my question is: what's the implication? Counts are one way to define prevalence, but do you agree that the role of the CPU is subservient to the accelerators?

This still misunderstands the changing workflow coming to the CPU via multi-agent fan-out execution. This scales and executes in parallel with the GPU. The GPU still reasons/thinks/generates; the CPU orchestrates, manages, runs software for workflow automation, etc.

From something I'm working on per the request of vendors. We are not yet full-scale box 4 nor at box 4 yet.

@BenBajarin Good report. I respectfully disagree with your model and conclusions for the reasons I stated.

I distinctly address both of those in this report.

Best way to understand this is: one size compute infra will not fit all, architectures will evolve and agents will decide right chip for right workload, and in the inference era these systems will look very different than they do today.

thediligencestack.com/p/nvidia-ces-2…

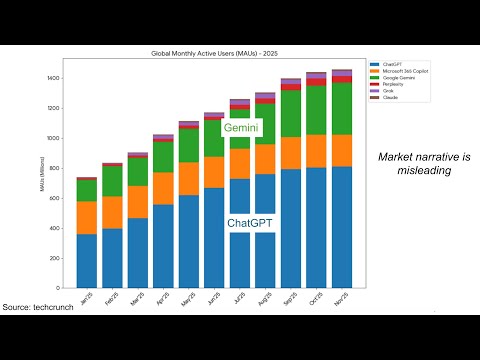

@firstadopter @CNBC @KellyCNBC Great work Tae! It is tough to compete against good marketing of selective information. Dave Vellante and I just published similar conclusions to yours. youtu.be/1anAzgeQ7pg?si…

David floyer

YouTube

I was on @CNBC yesterday to debate Nvidia GPUs vs. Google TPUs. It was a blast. Thank you @CNBC and @KellyCNBC for having me on again.

tae kim@firstadopter

Nvidia's $4 trillion valuation is just the beginning. Here's why AI demand is growing exponentially right now and what it all means. Thank you @CNBC @KellyCNBC for having me on again.

Pie in the Sky

How have you accounted for the cost and performance of GPU interconnect in orbit? The model excludes this, but large-scale inference economics are dominated not just by flops and watts, but by the cost of building and operating a low-diameter, high-bisection network across thousands of accelerators.

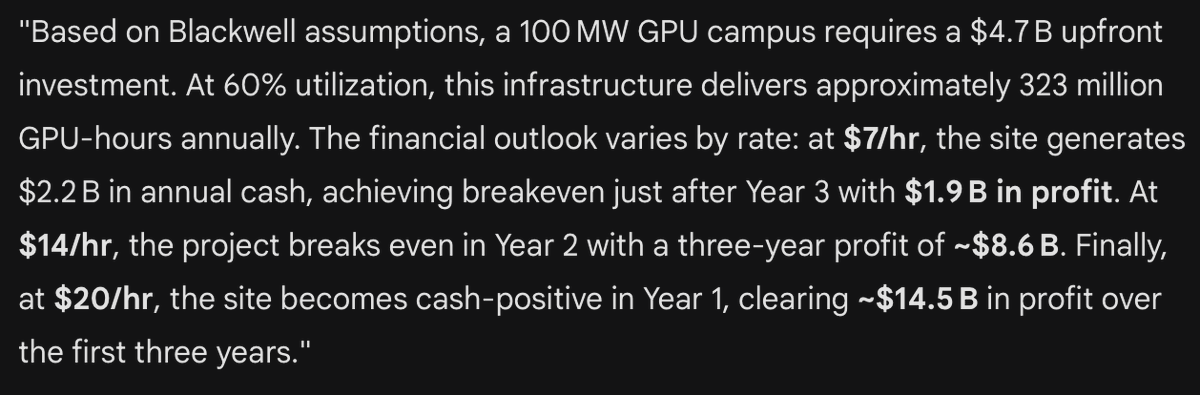

To run state-of-the-art inference (ChatGPT-class APIs, agentic workloads, multi-tool chains) at high utilization, operators deploy clusters of thousands to tens of thousands of GPUs behind a non-blocking or near-non-blocking fabric (fat-tree/dragonfly) with 200–800 Gbps per GPU and single-digit-microsecond switch latency. This keeps step latency tolerable, minimizes stragglers, and keeps model and pipeline-parallel slices busy.

A Starlink-class satellite has on the order of three optical inter-satellite links, each roughly 100–200 Gbps, forming a sparse mesh optimized for routing, not for building a tightly coupled GPU island. Attempting to emulate a terrestrial fat-tree across a swarm of small buses means most GPU-to-GPU paths traverse multiple satellites and laser hops, collapsing effective bisection bandwidth per GPU versus an on-ground leaf–spine fabric built from 400–800 Gbps switches.

In this regime, the network becomes the dominant bottleneck, and utilization is driven not by raw flops or solar power, but by:

• High average path length (multiple LISL hops for collectives and all-reduce).

• Per-link capacity comparable to a single GPU NIC but shared across many nodes.

• A topology fundamentally oversubscribed for tightly coupled inference, versus the near-1:1 bisection of modern clusters.

A realistic orbital design using Starlink-like buses should therefore incur a significant network-induced utilization haircut for workloads requiring strong coupling across more than a handful of GPUs. The economics are not “just power and launch,” but “power × effective utilization,” and utilization is likely to fall well below 10% for LLM-scale model-parallel workloads on a sparse LISL mesh.

If instead you propose special-purpose “GPU barge” satellites with many more optical ports and high-radix switches to recreate a terrestrial fabric in orbit, you pay twice: in mass (optics, power electronics, structure, thermal hardware) and in complexity and NRE. That mass feeds directly into the $/W orbital cost stack and erodes the already-tight gap your model identifies.

There is also an opportunity-cost comparison the model omits. Workloads tolerant of weaker coupling are precisely those that can run on cheaper, older-generation GPUs on Earth, often colocated with stranded or discounted power and very simple networking. The small-model slice is structurally biased against orbital deployment, because Earth offers both cheaper silicon and simpler fabrics without paying a mass tax to launch NICs, switches, and optics.

Suggested changes to the model:

Add a “networking and topology” section with assumptions for GPUs per satellite, LISLs per satellite, per-link bandwidth, path-length distribution, and a utilization factor relative to a terrestrial fat-tree baseline. Run at least two workload modes: (1) tightly coupled large-model inference/training, and (2) embarrassingly parallel or small-model workloads.
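A minimal sketch of what such a section could compute, in Python. Everything here is an assumption made for illustration: the function names, the rule that each hop consumes one link's worth of capacity, the Amdahl-style stretch of communication time, and all input values. It is not the model under discussion and it produces no real estimates.

```python
def effective_gbps_per_gpu(gpus_per_sat, lisls_per_sat, link_gbps, avg_hops):
    """Cross-satellite bandwidth available to each GPU.

    Assumption: every flow occupies one link on each hop, so a path of
    `avg_hops` hops consumes `avg_hops` times its share of link capacity.
    """
    egress_gbps = lisls_per_sat * link_gbps
    return egress_gbps / (gpus_per_sat * avg_hops)


def utilization_factor(effective_gbps, baseline_gbps, comm_fraction):
    """Utilization relative to a terrestrial fat-tree baseline.

    Assumption: on the baseline fabric a step spends `comm_fraction` of its
    time communication-bound, and that portion stretches in proportion to
    the bandwidth shortfall (an Amdahl-style estimate).
    """
    slowdown = max(1.0, baseline_gbps / effective_gbps)
    return 1.0 / ((1.0 - comm_fraction) + comm_fraction * slowdown)


def cost_per_useful_watt(cost_per_watt, utilization):
    # "power x effective utilization": a watt only counts while the GPU is busy.
    return cost_per_watt / utilization


# Hypothetical inputs, chosen only to exercise the two workload modes.
bw = effective_gbps_per_gpu(gpus_per_sat=8, lisls_per_sat=3, link_gbps=200, avg_hops=4)
tightly_coupled = utilization_factor(bw, baseline_gbps=400, comm_fraction=0.5)
embarrassingly_parallel = utilization_factor(bw, baseline_gbps=400, comm_fraction=0.0)
```

Sweeping `avg_hops` and `comm_fraction` across plausible ranges, rather than fixing single values, would show how sensitive the orbital cost per useful watt is to the topology assumptions.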

The model does an admirable job on power, mass, and thermodynamics, but it implicitly assumes a watt of GPU in orbit yields roughly the same usable flops as a watt in a modern terrestrial cluster. Given the networking realities above, that assumption is optimistic. Making interconnect economics explicit will widen the cost gap versus conventional data centers and clarify the narrow niches where orbital compute might still make sense.

Nvidia’s Rubin CPX Announcement

Nvidia's extensive chip design and technology expertise, together with volume and early delivery, will likely put significant pressure on AMD, AWS, and Microsoft’s development of specialized chip strategies.

OpenAI may be in a stronger position due to its potential for integrating hardware and scalable software, likely focusing on a distributed architecture.

Moreover, Nvidia is not done innovating in specialized AI solutions. They handle a large percentage of AI workloads, giving them valuable insights into bottlenecks.

Nvidia is a formidable competitor, and likely to maintain its lead for this decade.

Nvidia is a systems company “par excellence.” It has innovated every aspect of the AI platform and partnered with most datacenter vendors to create the Extreme Parallel Platform. Every software and hardware vendor needs to embrace, migrate, and contribute to this EPP platform, or risk irrelevance.

@sarbjeetjohal I've always thought of Nvidia as a systems company.

“I rarely call us a GPU company, what we make is a GPU, but I think of Nvidia as a computing company.

If you are computing company, the most important thing you think about is developers. If you are chip company, the most important thing you think about is chip.”

says Jensen Huang of @NVIDIA

GTC is a developer conference, he added.

@dvellante @furrier @RealStrech @MarshaCollier @matteastwood @Craw @rneelmani @EdLudlow @CPetersen_CS

a16z@a16z

NVIDIA became the default choice for some of the most ambitious AI startups — like Mistral AI. How? Jensen Huang shares the philosophy that made it possible: “We’re not a chip company. We’re a developer-first computing platform.” Hear how that mindset shaped @nvidia's role in the AI ecosystem. 👇

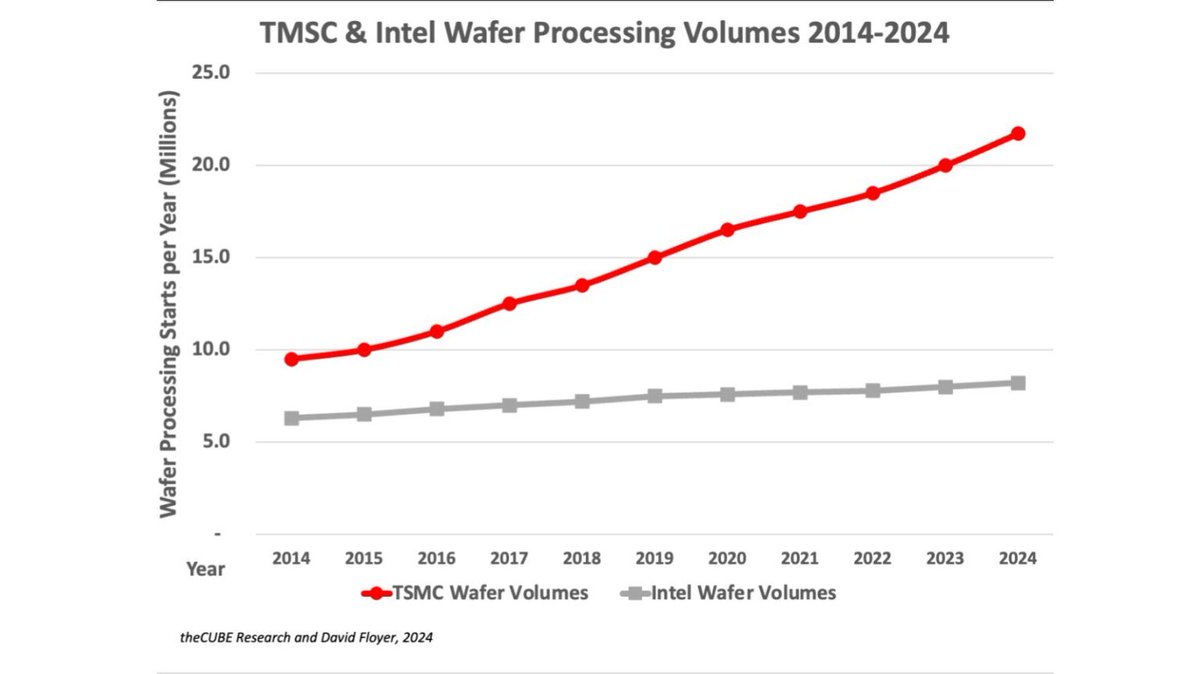

@Jukanlosreve The wafer price of $30,000 per wafer or higher is justified if the value and ease of adoption for TSMC’s customers lead to a sufficient increase in wafer volume. TSMC’s track record says it is a good bet!

Intel has contributed so much to computing and America; it is sad to see them decline. x86 CPUs ran the world’s PCs and data centers. Intel rode the wave brilliantly while x86 volume increased but failed to adapt as x86 volume decreased. Arm-based chips and TSMC have far higher volumes, better chips, and far lower costs.

Intel’s response is a decade late. Intel does not have the skills, management, or resources to compete in the foundry business.

The only company that can build leading-edge foundries in the US is TSMC. A partnership between TSMC, Intel, and companies dependent on leading-edge chips is ideal. Otherwise, the US government must persuade TSMC to add more leading-edge foundries in the US to make it independent of Taiwan.

Without a TSMC deal, Intel must treat x86 as a cash cow, focus its products on using TSMC, Samsung, and GF chips, improve x86, and reduce headcount and capital spending by at least 75%.

Short-termism of Wall Street makes it hard to pull off Intel’s Foundry vision… says @PGelsinger (I paraphrase) while talking to @jonfortt, moments ago on CNBC. A must listen business TV segment.

@dvellante @MorganLBrennan @Craw @rwang0 @matteastwood @AkwyZ @sandy_carter @PatrickMoorhead @pnashawaty @ShellyKramer

I wish your suggestions could work.

When low volumes and late delivery forced AMD and IBM to retreat from the chip fab business, they had to pay what is now GlobalFoundries large sums of money to take their fabs, and they took significant write-offs.

Intel ruled with higher volumes until 2010.

Smartphones, declining PC volumes, Arm, and TSMC have changed the fab world. The chart below shows TSMC wafer volumes are much higher than Intel’s. The number of Arm wafers is now over ten times x86 volumes. These factors resulted in TSMC delivering improved wafers earlier, with higher yields and better quality.

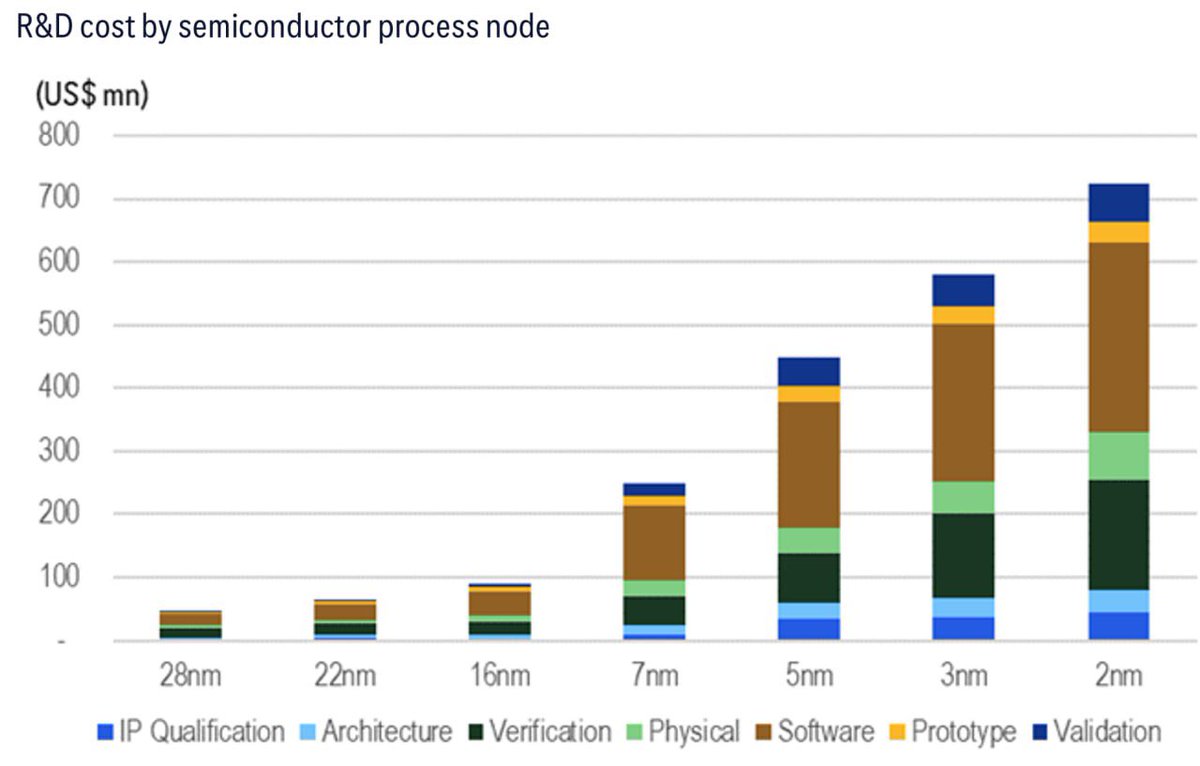

We used Wright’s Law to help predict that Intel’s 18A will be 18 months or more late to market and 14A even longer. This means Intel chips will command lower prices and volumes, with no ability to invest in the next technology leap: a recipe for bankruptcy.
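For readers unfamiliar with Wright’s Law: it states that unit cost falls by a constant percentage each time cumulative production doubles. The Python sketch below shows only that general form. The function name, the learning rate of 0.8, and the 10x cumulative-volume gap are illustrative assumptions; this is not the analysis behind the 18A prediction.

```python
import math


def wright_unit_cost(first_unit_cost, cumulative_units, learning_rate):
    """Unit cost after `cumulative_units`, per Wright's Law.

    `learning_rate` is the cost multiplier per doubling of cumulative
    volume (0.8 means each doubling cuts unit cost by 20%).
    """
    exponent = math.log2(learning_rate)  # negative when learning_rate < 1
    return first_unit_cost * cumulative_units ** exponent


# Hypothetical: two producers on the same curve, one with 10x the cumulative volume.
LEARNING_RATE = 0.8  # assumed for illustration, not taken from the post
cost_ratio = (
    wright_unit_cost(1.0, 10, LEARNING_RATE) / wright_unit_cost(1.0, 1, LEARNING_RATE)
)
```

Under these assumptions `cost_ratio` is below 1, which is the mechanism behind the argument that the higher-volume producer reaches better cost and maturity sooner.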

The result of forcing inferior technology on US enterprises and the military would be disastrous.

TSMC is the only company that can help and expects a win-win deal. The only path to leading-edge chips being built in the US is with their help.

@dvellante I think you are wildly underselling the value of their fabs.

Trump could simply turn the screws to hyper scalers and ensure wafer agreements on 18A and beyond.

Zero chance Intel gives up fabs for 5B.

Likely outcome is 51% intc (best operator of their fabs and ip), 49% the rest

As promised - here's an updated research note on Intel and why we feel it must remain an independent entity after it spins foundry. Would love your input and thoughts: thecuberesearch.com/266-breaking-a…

My summary of the evening

Thanks for letting me know❤️

The first SF Symphony concert of 2025 at the Davies yesterday was unforgettable.

The program opened with the world premiere of John Adams’s piano concerto After the Fall, performed by the brilliant Víkingur Ólafsson and commissioned by the San Francisco Symphony. The music was like a complex fine wine, blending contemporary notes with echoes of Bach and Satie’s Gymnopédies.

The second half was Carl Orff’s magnificent Carmina Burana, contrasting the profane and celestial. O Fortuna’s thunderous drama framed the performance, while the San Francisco Symphony Chorus and the San Francisco Girls Chorus brought immense power to climaxes.

It was a genuinely transformative evening and one I unreservedly recommend.

A Taiwan newspaper reports that Intel plans to outsource all 3nm and below process manufacturing to TSMC and that Intel’s plan to lay off 15% of staff will focus on the foundry business, although Intel’s Taiwan branch will not be affected. The report cites unnamed industry sources for the information. $INTC $TSM #semiconductors #semiconductor ctee.com.tw/news/202409097…

@dvellante @awscloud @Oracle @furrier @sarbjeetjohal Finally, we focus on what the customers want instead of what the product managers want!

Finally! - @awscloud and @Oracle bury the hatchet and meet where the customers want them to. This has taken way too long and it's good to see AWS CEO Matt Garman will address customers at #oraclecloudworld tomorrow. oracle.com/news/announcem… @furrier @sarbjeetjohal @dfloyer

David Floyer reposted

This makes a lot of sense to me. For a reference point, ARM market cap is $123 billion (rounded) and Intel market cap is $81 billion (rounded).

It’s amazing how Nvidia abstracted ISA (instruction set architecture) with CUDA. It kills one of the biggest objections to adoption of a newer architecture (a new way of computing). In a way, it’s a super lean OS. On that note, proximity (and tethering) of a processor to OS is a blessing and a curse.

All in all I like your creative idea, @PatrickMoorhead, though it’s a drastic shift! I hope @PGelsinger and team are considering this as one of the options.

cc @dvellante @Craw @cloudpundit @dfloyer @furrier

@sarbjeetjohal @AntonioNeri_HPE @nvidia I would add a network stack, even though it breaks the rule of three!

Jensen joins @AntonioNeri_HPE on keynote stage during #HPEdiscover in Las Vegas.

In this industrial age, what’s being invented is embedded with intelligence says Jensen Huang @nvidia CEO as he talks about AI Factories. You need 3 components of stacks to succeed in this area, he added:

1. Model Stack

2. Data Stack

3. Compute Stack

cc @StackPaneLLC @AnuragTechaisle @dvellante @furrier @ShellyKramer @Craw @theCUBE @badnima

@holgermu @dvellante @nvidia @TWSemicon @sarbjeetjohal @furrier @AndyThurai @HPE The HPE Cray XD670 is a 5U chassis with x86 2x CPU node & 8xNvidia H100/200. Good benchmarks, no cost analysis.

On 18/3/24 HPE also announced support for developing future products based on the NVIDIA Blackwell platform.

I believe HPE will focus on Blackwell systems long term.

@dvellante @nvidia @TWSemicon @dfloyer @sarbjeetjohal @furrier @AndyThurai MyPOV - Very interesting indeed - but we compare CPUs vs supercomputers. Someone has eg Cray vs @Nvidia? Can @HPE share the data. And then, to be fair a different era.

Astounding performance curves in this #AI era. We superimposed the Moore's Law curve on top of @nvidia progress. Blackwell is using essentially the same @TWSemicon node "gluing" two dies together & scaling back the floating point precision. And still... #BreakingAnalysis
