Dolphin Inference Network node operation is now live for anyone who would like to beta test before we go into production. $POD rewards are live for testers. Repurposing idle GPUs to run Qwen 3.5 35B MoE.

Dolphin

@dphnAI

AI Lab developing uncensored models & distributed inference ⟠ Over 4m monthly downloads on Hugging Face ⟠

While most are chasing $LFI, you're overlooking the @zoe_charms launch, $ZOE by @charmsai.

- What are Charms and Zoe?
Charms is a new platform for AI characters: think CharacterAI or Replika, but tokenized. The AI companion niche is already massive, and Charms is betting on the emotional connection users build with these bots. $ZOE is the latest character launched by the team to draw attention before the main $CHARMS TGE.

- The Team
Led by @0xjuan__, @gon0x_, and @0xnaxo. I haven't dug up their full history yet, but gon is followed by @jessepollak, which is a signal worth noting.

- The $CODY and @codygame_com Story
Looking back, we find $CODY, the first character on Charms, which the Charms team developed and shilled itself. CodyGame won $25k as the most hyped game on Farcaster in October. The token pumped to a $4M market cap on the game hype. 500k users joined the game, with more than 80k weekly active users, and those users were real because the game used World ID. Charms had its moment of attention and hinted at a $CHARMS TGE, but something went wrong on 10.10: the market crashed hard. That's why Charms postponed its token launch and lost the hype.

- My Vision and Speculation around $ZOE and the upcoming $CHARMS token
Now that the market has recovered, the Charms team is active again and has launched $ZOE and @zoe_charms. The idea is the same as the $CODY launch: to bring attention to the @charmsai platform and the upcoming $CHARMS launch. No one understands what Zoe was created for or what its utility is, but even without any utility the token did 660k in volume and hit an 800k ATH on launch day. It is currently trading at a 350k FDV.

$ZOE pump -> Attention to @charmsai -> $CHARMS TGE. The formula is simple, and that's my bet.

Node provider rollout has been going well. Our pool of inference nodes running Qwen 3.6 35B has generated over 3.2B tokens so far.

Total inference bandwidth -> 9400 t/s
28x RTX 4090
12x RTX 5090
8x RTX PRO 6000
& many other cards

API access coming soon 🐬

DeAI is pushing the boundary of Inference 2.0.

- If you have a spare Mac lying around, you can plug it into the private inference network and earn revenue from AI inference demand.
> @gajesh from EigenCloud built this decentralized inference network 3 days ago, and it already has 170+ nodes capable of serving millions of tokens at 50% lower cost than OpenRouter.

- If you have a gaming GPU (an RTX 4090/5090/6000) lying around, you can plug it into the @dphnAI inference network and earn POD token incentives.
> Dolphin (uncensored model provider for @AskVenice) launched the beta test for its inference network last night; for now, the GPUs will be used for model training/distillation.

These implementations are exciting because, just as yield on idle capital drastically improves capital efficiency, inference on idle consumer GPUs enables yield on idle hardware. That means additional revenue for operators and cheaper, more efficient inference for developers seeking it.

Exciting times ahead for DeAI.

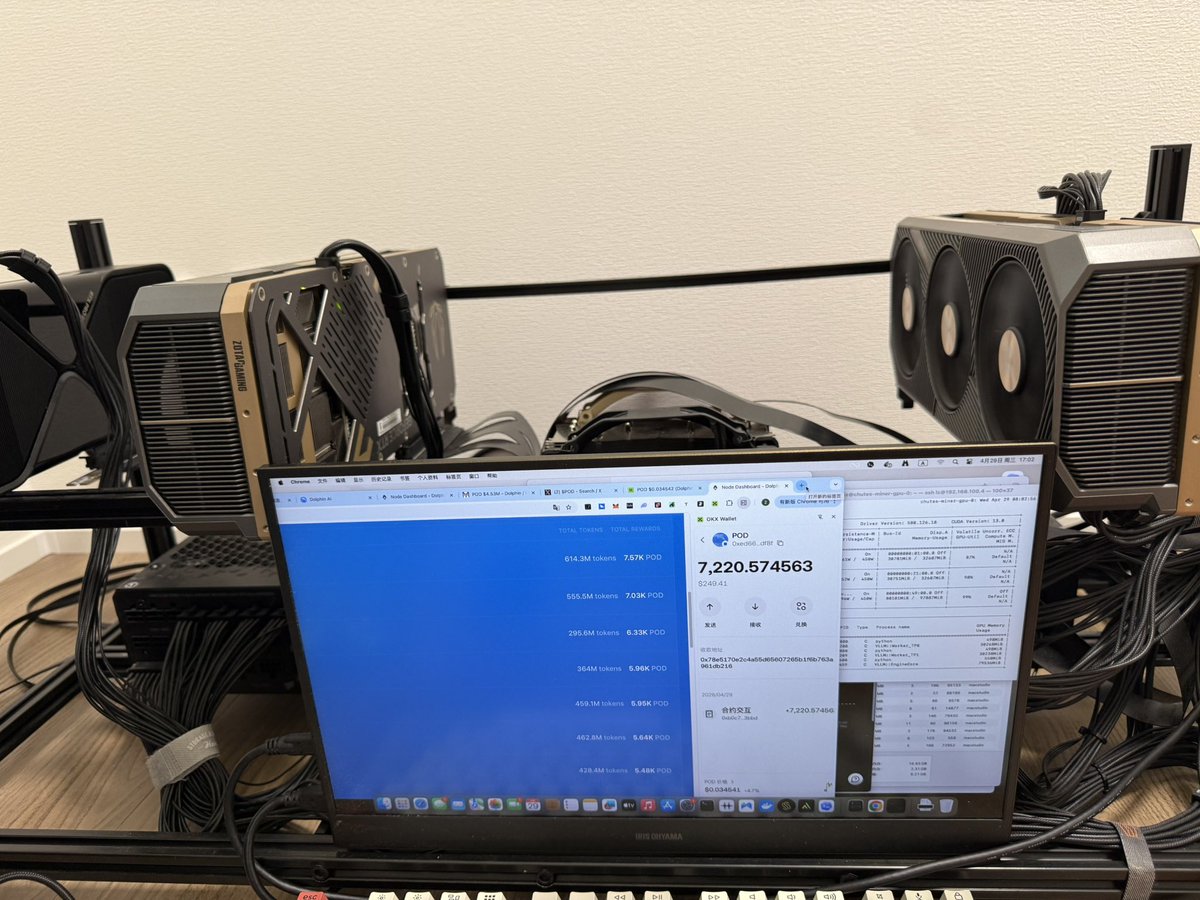

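Thank you, official @dphnAI!!! Received 7220 $pod in 5 days, currently worth about 250 USDT. The price had earlier been pushed up to 0.045. Hoping it keeps going 🙏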

Finally reached #1 on the leaderboard ☝️ Mined 6430 in 3 days. At today's $pod price of 0.0366, that's about 2000 USDT a month? A decent return. @dphnAI
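The monthly figure above is a back-of-the-envelope projection. A minimal sketch of that arithmetic, assuming a constant daily mining rate, a constant token price, and a 30-day month (the function name `projected_monthly_value` is hypothetical, not part of any Dolphin tooling):

```python
def projected_monthly_value(tokens_mined: float, days: float, price: float,
                            month_days: int = 30) -> float:
    """Linear projection of monthly reward value.

    Assumption: the daily mining rate and the token price both stay constant.
    """
    daily_rate = tokens_mined / days          # tokens earned per day
    monthly_tokens = daily_rate * month_days  # tokens over an assumed 30-day month
    return monthly_tokens * price             # value in the price's quote unit (USDT)


# Figures quoted in the post: 6430 $pod in 3 days at 0.0366 per token
estimate = projected_monthly_value(6430, 3, 0.0366)
print(f"{estimate:.0f}")
```

Under these assumptions the projection comes to roughly 2353 USDT (about 64,300 tokens), so "about 2000 USDT" is a conservative round-down. Real rewards will likely vary with network demand and the token price.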