stridell

96 posts

@emilstridell

I like BMWs, AI, and OSS. Disgusting. Building AI agents, but not very good ones.

Joined April 2023

66 Following · 5 Followers

stridell retweeted

Giveaway time!

Win a Claude Max 20x subscription for one month.

To enter

> follow @BleapApp

> RT and like this post

Winner will be selected in 48 hours.

(FYI, you get 20% cashback on your Claude, ChatGPT, and Gemini subscriptions when using a Bleap card.)

Download the app via the link in our bio today and activate your virtual card in minutes.

How is Gemini 3.1 simultaneously the best and worst model?

Lisan al Gaib @scaling01

ARC-AGI-3 scores for GPT-5.4, Gemini 3.1 Pro and Opus 4.6:

Gemini 3.1 Pro: 0.37%

GPT-5.4: 0.26%

Opus 4.6: 0.25%

Grok 4.2: 0%

@QuixiAI @__tinygrad__ The board is useless. No CUDA, no competitive bandwidth, no adoption.

You're welcome.

Intel B70 finally makes a truly competitive move.

32 GB VRAM for < $1000

No matter how bad the software stack is, the sheer VRAM/dollar ratio will drive the community to fill in the gaps.

@__tinygrad__ Intel tinybox?

@kimmonismus Yeah, no. It absolutely sucks. Tried it, but it literally couldn’t even finish basic tasks.

Nemotron remains completely underrepresented. NVIDIA has cooked up a storm, and most people are still ignoring it.

NVIDIA AI Developer @NVIDIAAIDev

@huggingface Nemotron 3 Super is now a leading reasoning foundation for @OpenClaw 🦞 and complex agentic workflows, with 1.5M+ downloads in its first two weeks. 🤗 huggingface.co/nvidia/NVIDIA-…

🚨 Exciting Local Inferencing News! @intel just dropped the Arc Pro B70, a serious new opportunity for local AI inference! 🔥💥

💰 Price: $949

📆 Available: Starting today (March 25, 2026)

Key Specs:

32GB GDDR6 VRAM 📦 (608 GB/s bandwidth ⚡)

32 Xe2 cores + 256 XMX engines 🧠

Up to 367 peak TOPS 🚀

TDP: 160–290W (Intel version ~230W) ⚡

Intel claims massive gains:

📏 2.2x larger context windows

⚡ 85% higher token throughput

🏎️ 6.2x faster Time-to-First-Token

💵 Better token-per-dollar vs RTX Pro 4000

Equivalent to?

🧩 Strong competitor to the NVIDIA RTX 5000 Ada / Blackwell (32GB class) for local LLM inference — especially on Linux setups

Excellent value under $1k for AI/agent workloads 👀

Worth it over used 3090s? Or still sticking with NVIDIA? Drop your thoughts below 👇

VideoCardz.com @VideoCardz

Intel launches Arc Pro B70 at $949 with 32GB GDDR6 memory videocardz.com/newz/intel-lau…

@zeddotdev Did you also generate the marketing with it? We didn’t just x. We y.

Zeta2 is here. 30% better acceptance rate than Zeta1. 200x more training data, LSP-powered context, faster predictions, open weights. Try it now in Zed.

We didn't just improve the model. We rebuilt the entire data pipeline behind it: zed.dev/blog/zeta2

@testerlabor Grok 4 has 3 trillion params? Why is it so stupid, then?

@RevealingSkunk @SOSOHAJALAB 27B params vs well over 1T btw. The density is the cool part.

@SOSOHAJALAB Nah, it's unbelievably stupid. I asked it to replace a refactored method call in tests, and it simply substituted the name without inferring the parameters or updating the mocks. Real Claude did all of that easily.

Guys... this model is just crazy.

If you have just under 48 GB of VRAM, try the Q8 GGUF format.

Feels just like Opus!

Tool calling is working smoothly!!

Thanks for this! (HF and Qwen!!)

huggingface.co/Jackrong/Qwen3…

stridell retweeted

@DavidOndrej1 it’s a bug, unless you’re trying to dictate a message >10 min long

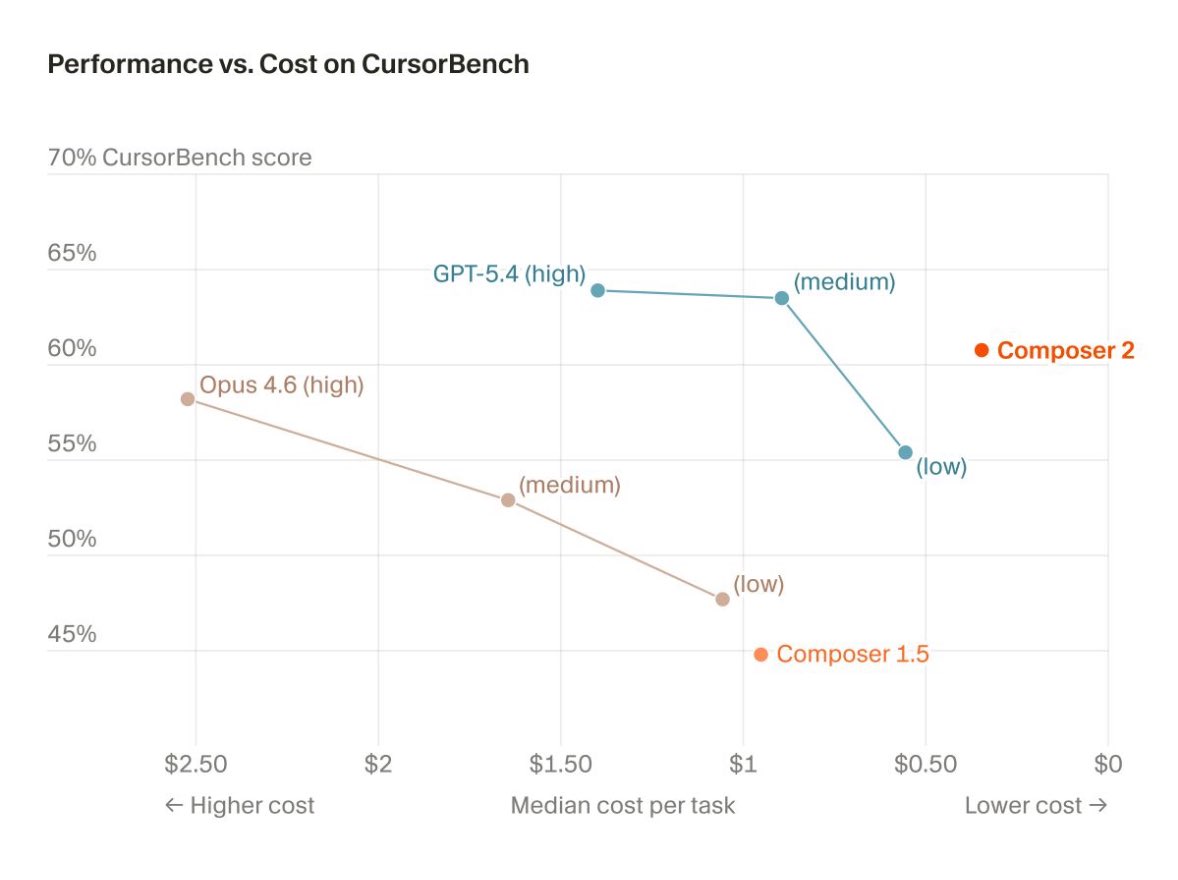

@Amank1412 I've seen so many people disliking the chart. What’s wrong with it?

@BentoBoiNFT @GG_Observatory Nah, he just types in newlines. You gotta render the post in your head to understand it.

Why would anyone choose OpenClaw over Claude Code?

Claude now has:

• Discord/Telegram integration

• Cron Jobs (/loop)

• 1M token memory

• Webhooks to phone

• Can run 24/7 on any computer or Mac mini

This covers 95% of what people actually use OpenClaw for, with better security and easier setup.

The only reason to stick with OpenClaw is if you want a multi-agent setup. That's the only difference I could think of.

Going to stick with OpenClaw for now because of this, but the gap is almost at zero.