Ev

14.7K posts

Guy made a great tool and at the right time.

The wildest thing about it is that there were already plenty of established players in the market (social media scheduling), but Postiz still found a way to slide in.

Nevo David @wickedguro

OMG OMG OMG OMG OMG OMG wtf wtf wtf wtf wtf wtf wtf wtf wtf I knew it would reach 60k, but it's hard for me to process how my life is going to change, and that I'm going to get it every month (or even higher)

@ComRicheyweb Could you please message support (they're super responsive) so you can share your plan and they can investigate.

I haven't seen other reports of this, but either way it shouldn't be happening, so I want to get it working for you :)

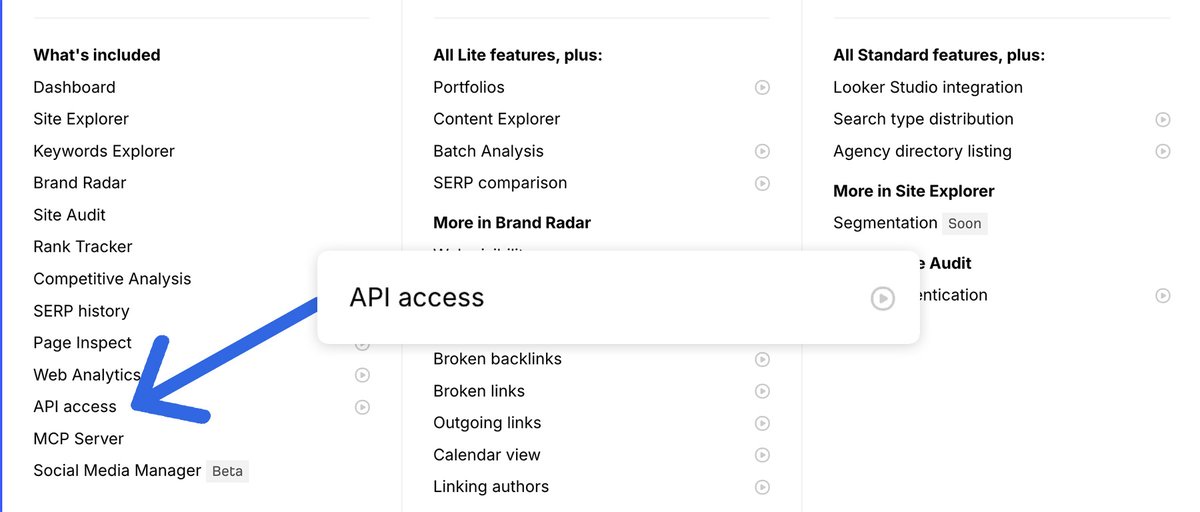

Holy ship. 🚢 Genuinely never thought Ahrefs would do this.

API access is now available on all plans. No extra fees. 🥳

It's something I always wanted as a customer, and I had no involvement in this going live, so my surprise is genuine.

Limits apply depending on your account, but I'm super happy more people can now play with this.

@jakezward You're the man, this is hilarious. It's easy to spot those who don't actually do SEO.

Love the experiment. I have a DR70 domain just sitting here collecting dust, wheels are turning now.

This is job security.

I'm sure it will be as useful as broad match or "smart" campaigns.

Barry Schwartz @rustybrick

A Google patent describes the process of Google building custom AI-generated landing pages for when people click on search results, instead of going to your website seroundtable.com/google-patent-… hat tip @glenngabe @NarwhalJosh @BrandonLazovic

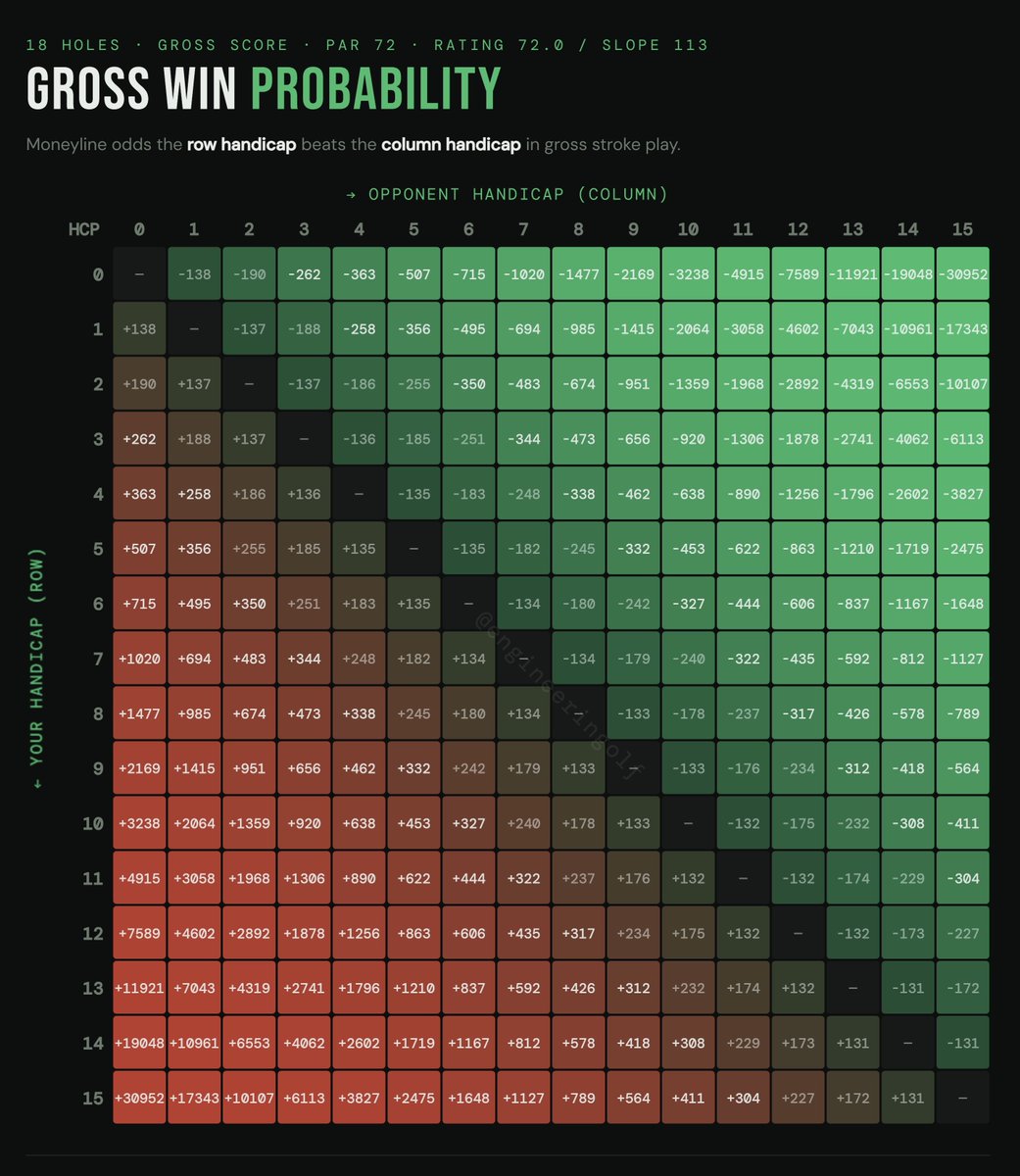

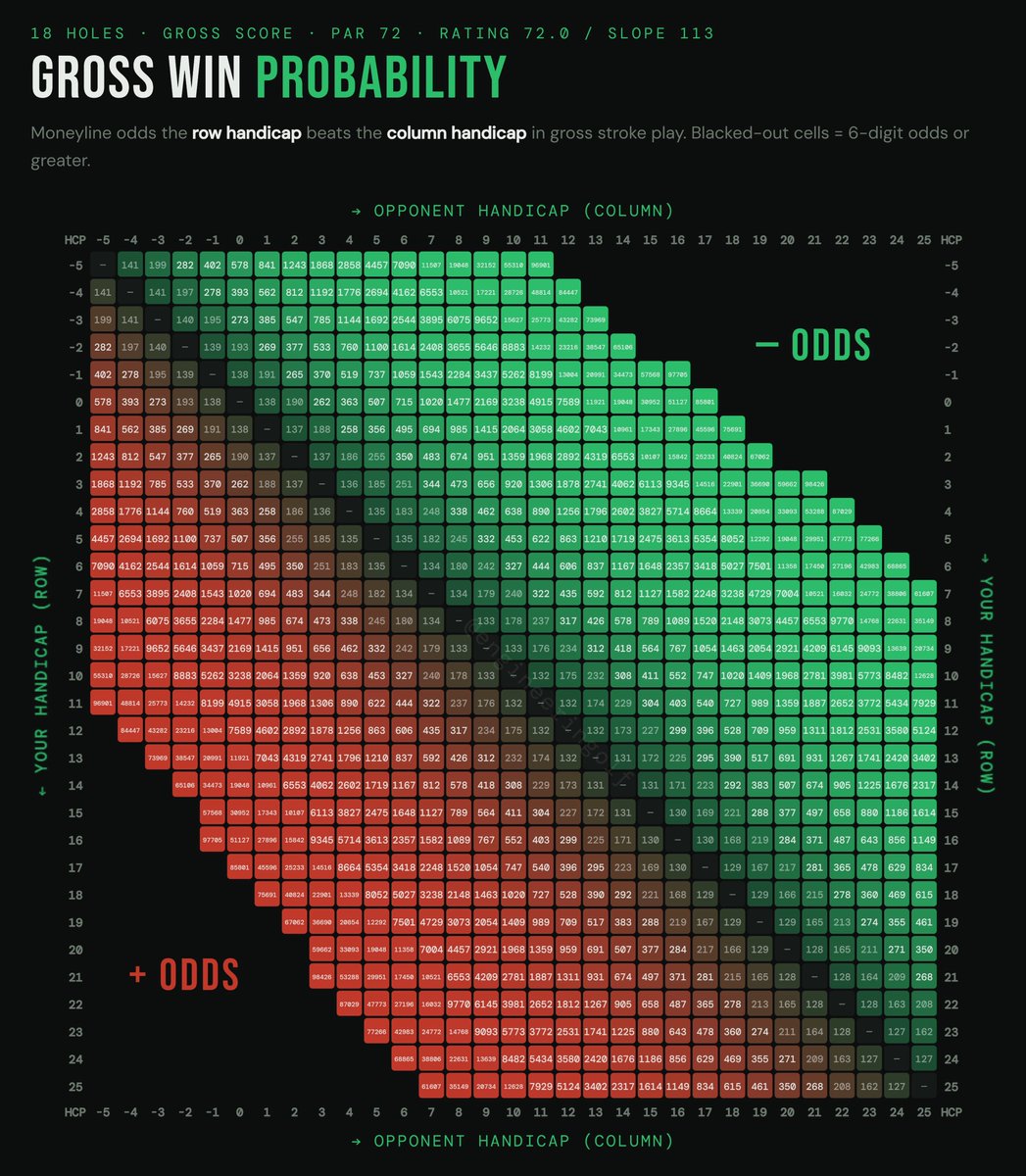

@engineeringolf I went all the way to +8 - 36 with FairwayOdds.com based on your data

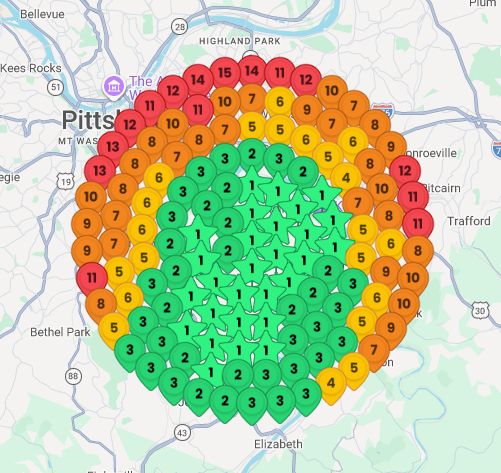

An expanded grid by request. Zoom in, degenerates. 😄

The Golf Engineer @engineeringolf

If your golf buddy usually wants a match with strokes… Would you consider offering the moneyline instead?

@ER @crypto_highs No need for any code if you already signed up. The post can be found here:

ralf-christian.com/forum/index.ph… (#post6042)

Liked this concept, so I built it: Fairwayodds.com. Let me know what you think.

The Golf Engineer @engineeringolf

If your golf buddy usually wants a match with strokes… Would you consider offering the moneyline instead?

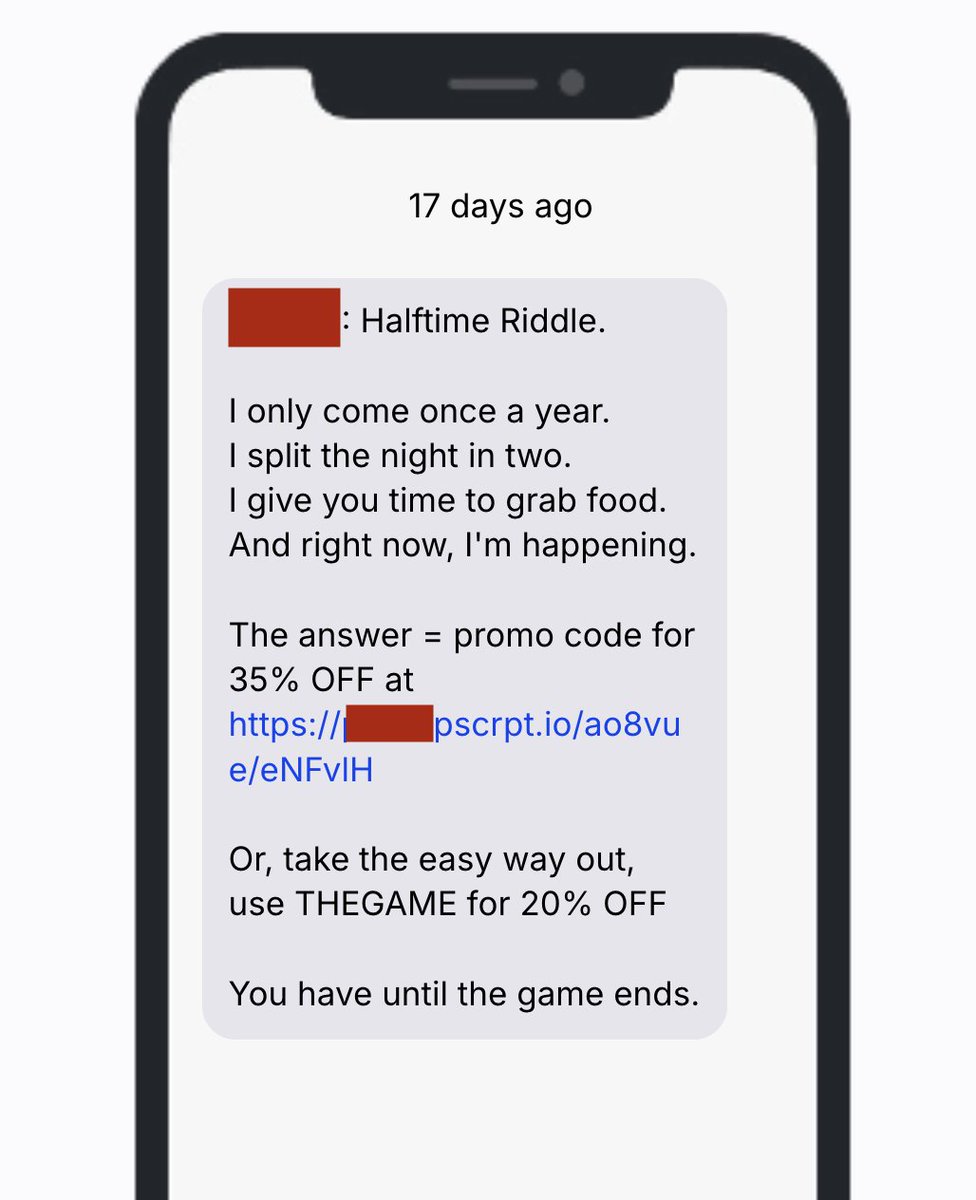

@crypto_highs That's the little riddle you have to solve

If you can't solve that, I'm sorry, but you're not my kind of people. Might sound arrogant, but it saves us both a lot of time.

If you can solve it, I welcome you

My OpenClaw bot runs 6 AI agents 24/7:

- Finds local businesses without a website

- Builds a custom demo site for them automatically

- Sends outreach with the preview + payment link

- Handles objections and closes the sale

Most local businesses don't have a website. This system finds them, pitches them, and collects payment automatically.

Reply "OpenClaw" and I'll send you early access (must be following)

Ev retweeted

We offered 5 people a Porsche 911 GT3 RS if they could get @WisprFlow to make a mistake

It's the fastest and most accurate AI voice dictation app, 3x more accurate than ChatGPT, Claude, or Siri.

Today, we’re finally launching on Android. Download now: play.google.com/store/apps/det…

As a part of the launch, we’re giving away 6 months of Wispr Flow Pro for free.

Like, retweet and comment ‘Wispr Flow’ to get it. Enjoy.

— Written with Wispr Flow