David Townsend retweeted

📢 New Special Issue TOC Alert

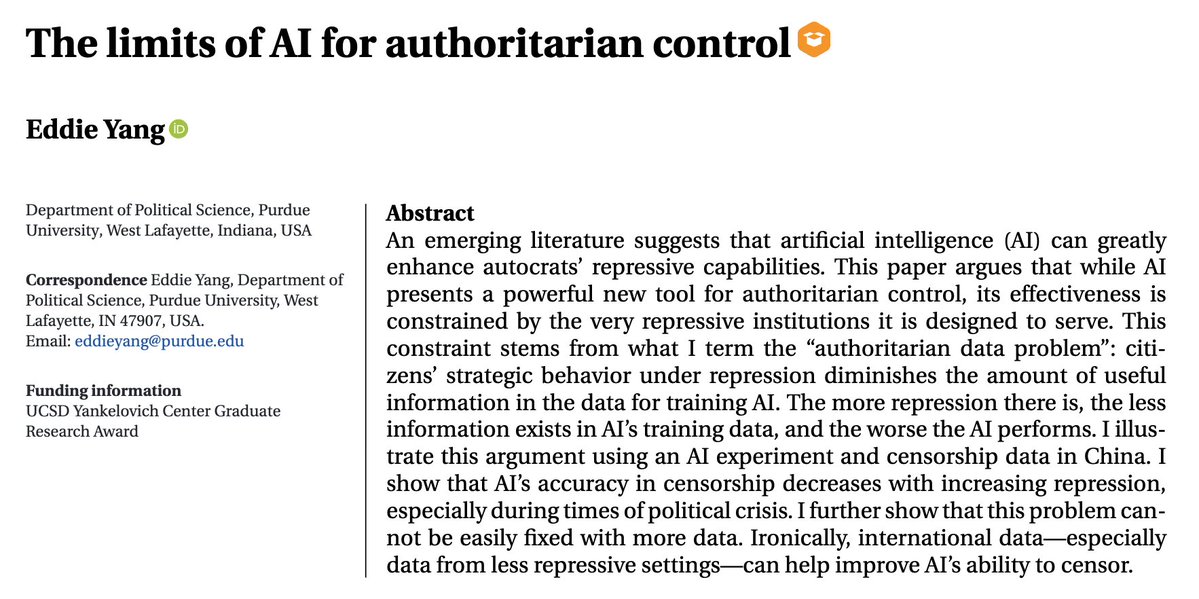

Artificial Intelligence: Organizational Possibilities and Pitfalls

Journal of Management Studies (Mar 2026)

Research on AI, work, governance, trust, strategy & ethics in organizations.

🔗 bit.ly/3NzxHu2

#JMS #AI #ManagementResearch
