Zhecheng Yuan


@fancy_yzc

PhD @Tsinghua University, IIIS. Interested in reinforcement learning, representation learning, robotics.

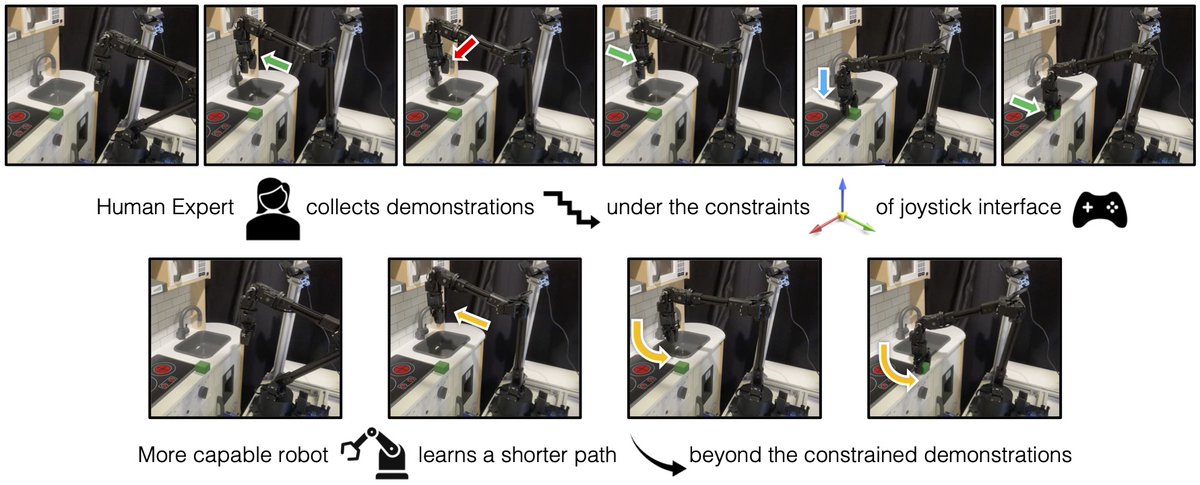

Excited to introduce mimic-video, a new class of Video-Action Models that achieves 10x better data efficiency 📉 and trains ⏩ 2x faster than standard VLAs! mimic-video.github.io

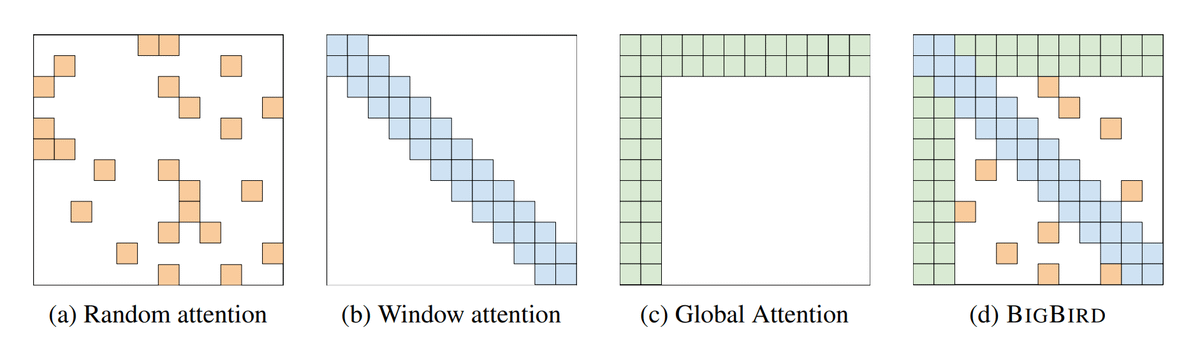

Deploying language models in scientific discovery domains requires extraordinary amounts of test-time compute for search algorithms. An ideal training algorithm should be designed with this goal in mind: agents should learn not only to exploit but also to optimistically explore novel strategies. In other words, the agent should learn to explore and exploit synergistically. We propose Poly-EPO, a set RL algorithm that explores and discovers diverse reasoning paths. Work with @jubayer_hamid (co-lead), Shreya, @ShirleyYXWu, @HengyuanH, @noahdgoodman, @DorsaSadigh, and @chelseabfinn.