Alec Flowers retweeted

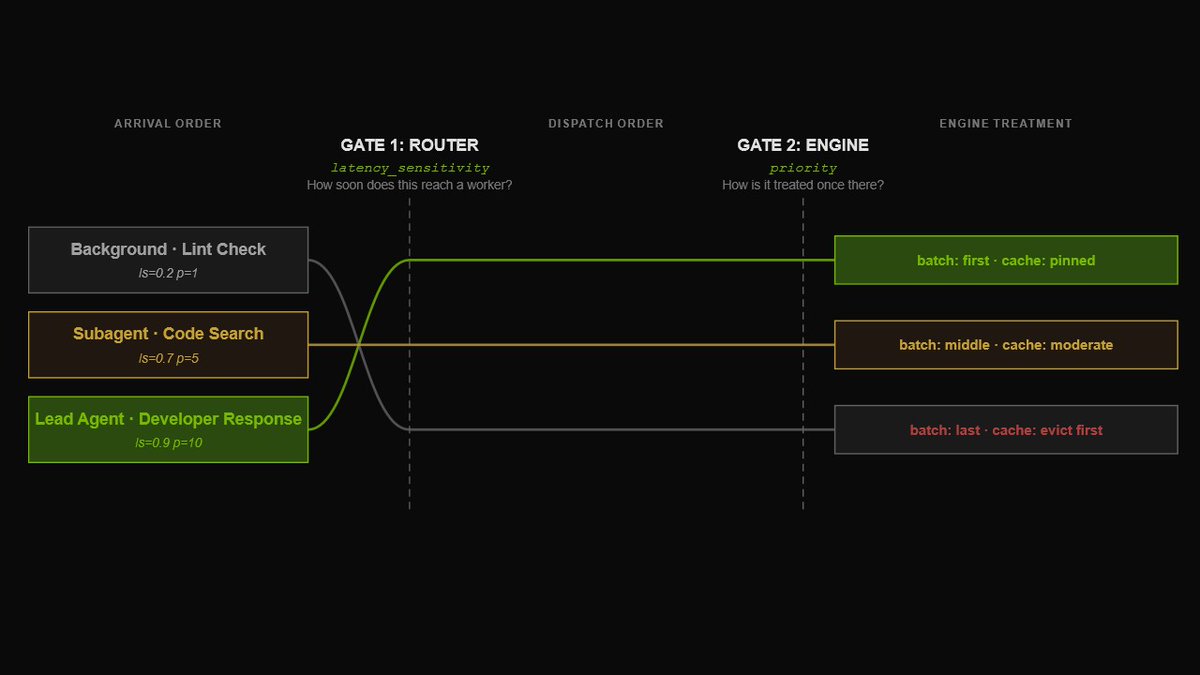

Traditional inference wasn’t built for agentic coding.

Agentic tools make hundreds of API calls per coding session, often recomputing the same context on each call, which creates bottlenecks that drive up cost per token.

NVIDIA Dynamo rebuilds the stack for agents with:

→ KV-aware routing

→ Agent-aware scheduling

→ Multi-tier caching

→ Unified orchestration

The result: higher cache hit rates, lower latency, and up to 7× more throughput. nvda.ws/3P1tO1N
