Gary Lang

41 posts

@garylang

One day I'm Walt. The next day I'm Roy

Students who took notes by hand scored ~28% higher on conceptual questions than laptop note-takers. Writing forces your brain to process and compress ideas instead of copying them.

Tech dudes not being annoying and smug challenge: impossible

@varunram 2007 was better. :-) The ribbon, as done the first time, was art.

@LauraPowellEsq This video sums it up perfectly. CA mafia youtu.be/-D4WdNlx52g

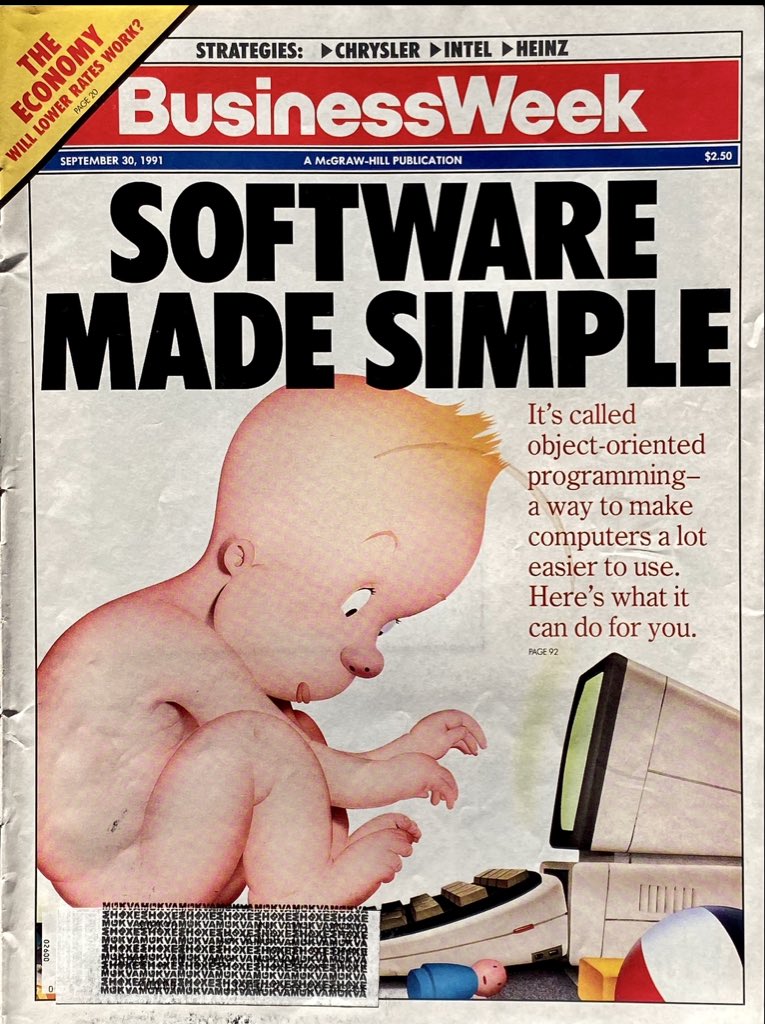

If you are a software engineer "experiencing some degree of mental health crisis", now hear this, because I've been coding for 50 years, since the days of punched cards, and I have a salutary kick in your ass to deliver. Get over yourself. Every previous "programming is obsolete" panic has been a bust, and this one's going to be too. The fundamental problem of the mismatch between the intentions in human minds and the specifications a computer can interpret hasn't gone away just because you can now do a lot of your programming in natural language to an LLM. Systems are still complicated. This shit is still difficult. The need for people who specialize in bridging that gap isn't going to go away. As usual, the answer is: upskill yourself and adapt. If a crusty old fart like me can do it, you can too.

Flashback to 2008 when John McCain shut down a racist line of questioning. I miss this Republican Party.