Genta Winata

@gentaiscool

AI Researcher @CapitalOne AIF. Ex @TechAtBloomberg @BigScienceW @SFResearch @hkust. Working on multilingual and LLM #NLProc. Building @GrassrootsSci

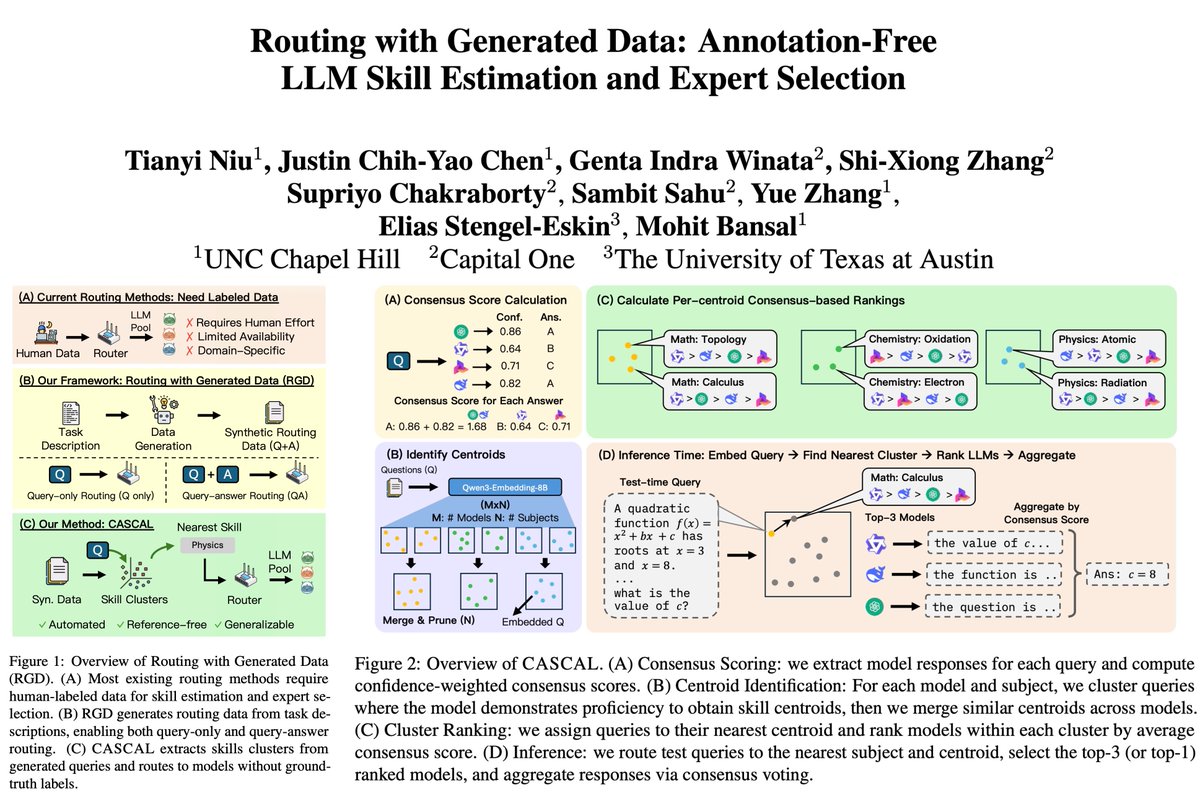

📢 Introducing Routing with Generated Data (RGD), a new setting for annotation-free LLM routing. We study how routers can be trained without any ground-truth labels. We also introduce CASCAL, a novel label-free LLM router that identifies niche skills using consensus voting and hierarchical clustering.
➡️ Most LLM routers assume access to labeled, in-domain data to estimate model skills (query-answer routers). However, user distributions are unknown and labels are expensive or unavailable, highlighting the need for routers that work without labels.
➡️ We introduce Routing with Generated Data (RGD): routers are trained only on Q&A data generated from task descriptions, without human annotation. We experiment with various LLM generators of different strengths (Gemini-2.5-Flash, Qwen-3-32B, Exaone-3.5-7.8B).
➡️ CASCAL outperforms other query-answer and query-only routers across diverse datasets (MMLU-Pro, SuperGPQA, MedMCQA, BigBench Extra Hard), and is more robust to weaker generators.

Excited to share that we have committed our paper “Vision-Language Models are Confused Tourists” to #CVPR2026 (Findings)! 🇺🇸🏔 arXiv: arxiv.org/abs/2511.17004
We question whether current SOTA VLMs remain robust in simple cultural grounding QA when distracting contextual objects are present. For example, if you eat chicken schnitzel with Mt. Fuji in the background, will the model mistake it for Japanese katsu?
ConfusedTourists introduces:
👉 5k+ evaluation samples across 3 cultural item categories, comprising 243 unique cultural items from 57 countries and 11 sub-regions 🌍
👉 Evaluation of 14 VLMs across 12 data features 🤖
👉 Findings showing that simple concept mixing can cause up to a 40% drop in performance 📉
Special thanks to my co-authors @IkhlasulHanif0, @emthehunt, @gentaiscool, @FajriKoto, and my advisor @AlhamFikri for the valuable contributions along the way! #multimodal #vlm #multicultural #robustness #evaluation #NLProc #ComputerVision

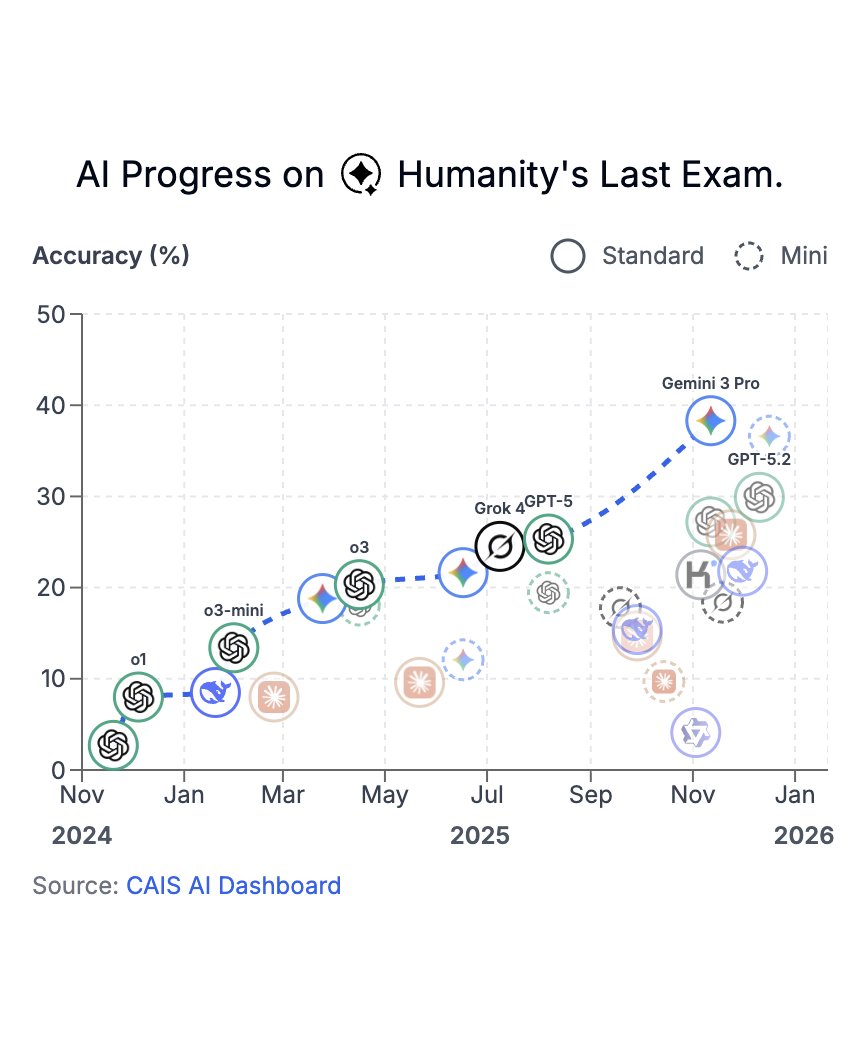

Last week, Humanity’s Last Exam was published in @Nature. In just over a year, model scores on HLE have risen from under 5% to nearly 40%. Thank you to @scale_AI and the 1000+ HLE co-authors for helping policymakers and the public track these rapid advances in AI capabilities.

We are releasing IndicBERT-v3, a suite of multilingual encoder language models (270M, 1B, 4B) built on top of Gemma-3. We adapted these models to use bidirectional attention, making them effective for encoder-heavy tasks. (1/3) @psidharth567 @_iunravel

Craving a holiday-themed paper? Say less 🎄 Turns out, Vision Language Models are Confused Tourists ✈️😵‍💫 We show that adversarially induced cultural scenes significantly impair VLM cultural comprehension and can trigger bias. #NLProc #multimodal #robustness /thread 🧵(1/8)