Virginie Do

138 posts

@gini_do

gêne AI researcher 😬 @AIatMeta

New paper! 🎊 We are delighted to announce our new paper "Robust LLM Safeguarding via Refusal Feature Adversarial Training"! There is a common mechanism behind LLM jailbreaking, and it can be leveraged to make models safer!

Announcing the NeurIPS 2024 Best Paper Awards: blog.neurips.cc/2024/12/10/ann…

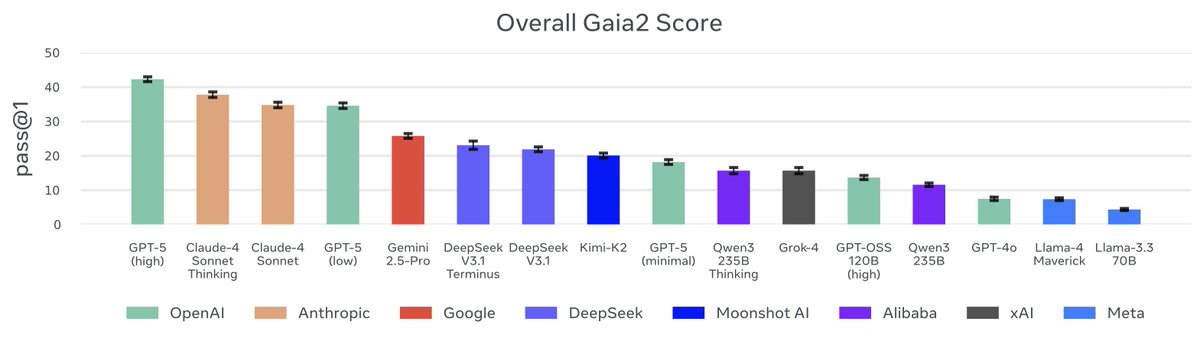

🚨🌟Very excited to share our #EMNLP2024 work on XAI for healthcare 🩺 We did a large user study with 💫85 medics💫 to find out how natural language explanations and saliency maps influence clinical decision-making. Check out the details below ⬇️ #XAI #Medical #UserStudy

I am hiring an intern in our Llama team for 2025! Candidates should be near the end of their PhD and willing to be based in Paris. You will succeed @MekalaDheeraj and work on frontier LLMs, tool use, agents, and more :) Please apply here: metacareers.com/jobs/109555634…