Paco Guzmán

@guzmanhe

Researcher in Language Technologies

🗣 Introducing TranslateGemma, our new collection of open translation models built on Gemma 3. The model is available in 4B, 12B, and 27B parameter sizes, and furthers communication across languages, no matter what device you own. blog.google/innovation-and…

Happy new year #NAACL! The 2026 election results are here. Congrats🥳 Chair: Anna Rumshisky @arumshisky Secretary: Jessy Li @jessyjli Board members: Muhao Chen @muhao_chen, Francisco (Paco) Guzmán, Ana Marasović @anmarasovic naacl.org/posts/2025-12-… Thank you all for voting!

We're excited to welcome 28 new AI2050 Fellows! This 4th cohort of researchers are pursuing projects that include building AI scientists, designing trustworthy models, and improving biological and medical research, among other areas. buff.ly/riGLyyj

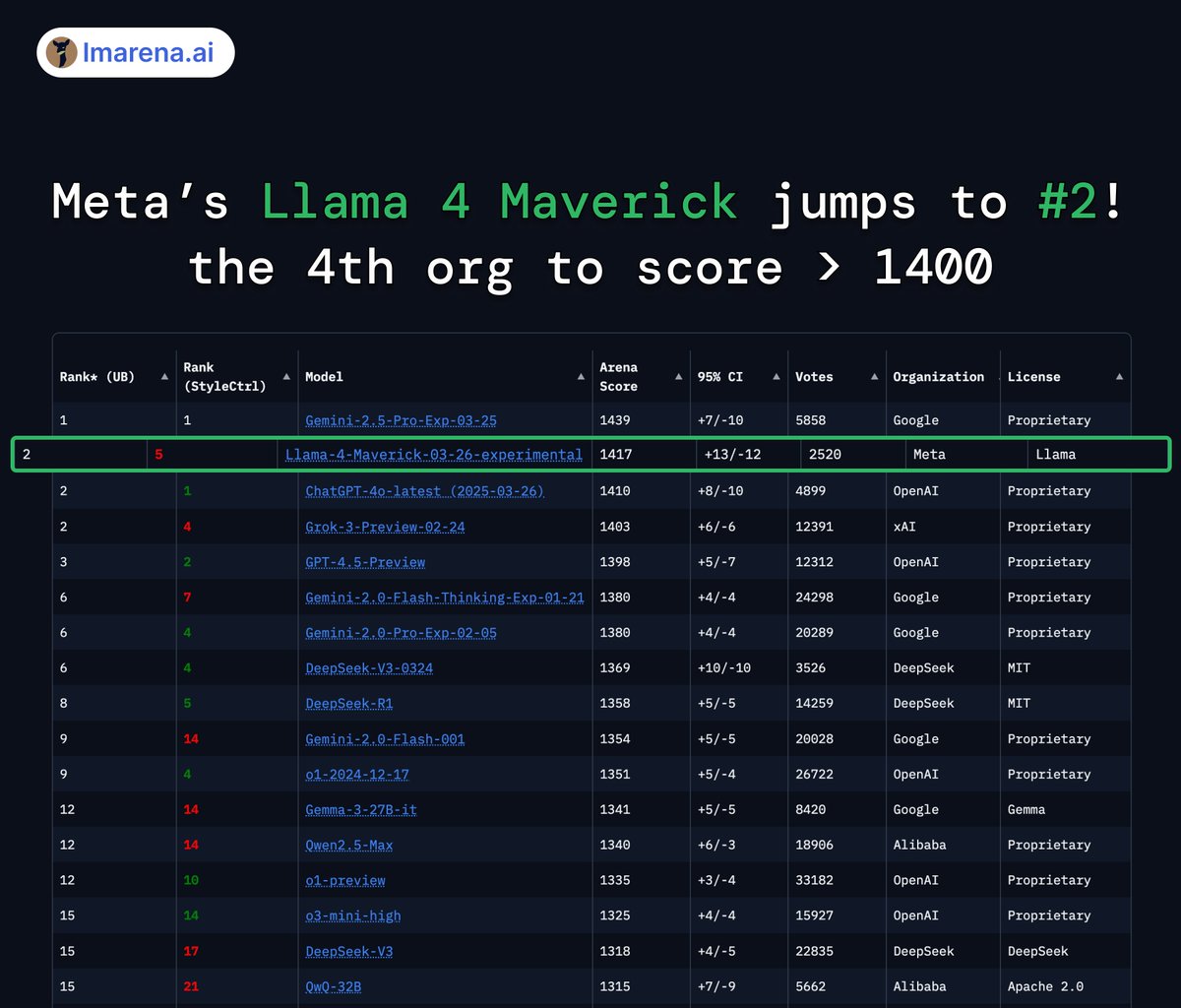

Today is the start of a new era of natively multimodal AI innovation.
Today, we’re introducing the first Llama 4 models: Llama 4 Scout and Llama 4 Maverick, our most advanced models yet and the best in their class for multimodality.
Llama 4 Scout
• 17B-active-parameter model with 16 experts.
• Industry-leading context window of 10M tokens.
• Outperforms Gemma 3, Gemini 2.0 Flash-Lite and Mistral 3.1 across a broad range of widely accepted benchmarks.
Llama 4 Maverick
• 17B-active-parameter model with 128 experts.
• Best-in-class image grounding with the ability to align user prompts with relevant visual concepts and anchor model responses to regions in the image.
• Outperforms GPT-4o and Gemini 2.0 Flash across a broad range of widely accepted benchmarks.
• Achieves comparable results to DeepSeek v3 on reasoning and coding, at half the active parameters.
• Unparalleled performance-to-cost ratio with a chat version scoring ELO of 1417 on LMArena.
These models are our best yet thanks to distillation from Llama 4 Behemoth, our most powerful model yet. Llama 4 Behemoth is still in training and is currently seeing results that outperform GPT-4.5, Claude Sonnet 3.7, and Gemini 2.0 Pro on STEM-focused benchmarks. We’re excited to share more details about it even while it’s still in flight.
Read more about the first Llama 4 models, including training and benchmarks ➡️ go.fb.me/gmjohs
Download Llama 4 ➡️ go.fb.me/bwwhe9

Introducing Llama 3.3 – a new 70B model that delivers the performance of our 405B model but is easier & more cost-efficient to run. By leveraging the latest advancements in post-training techniques including online preference optimization, this model improves core performance at a significantly lower cost, making it even more accessible to the entire open source community 🔥 huggingface.co/meta-llama/Lla…

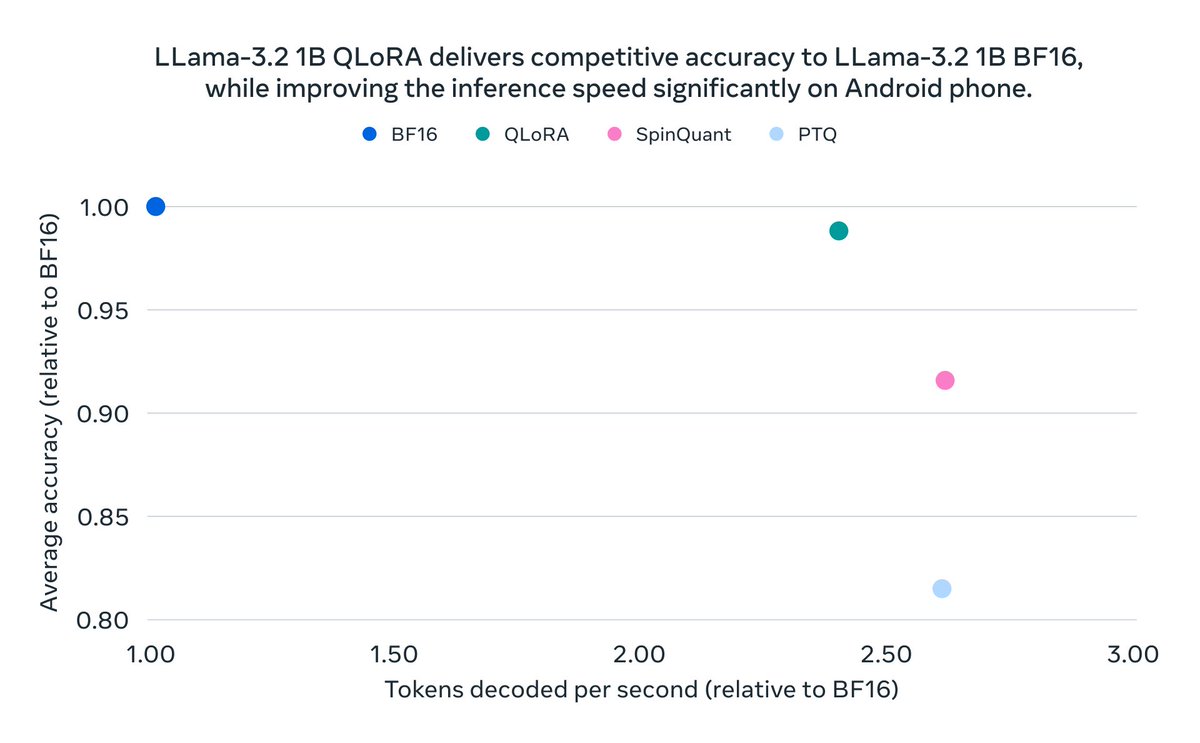

We want to make it easier for more people to build with Llama, so today we’re releasing new quantized versions of Llama 3.2 1B & 3B that deliver up to 2-4x increases in inference speed and, on average, 56% reduction in model size, and 41% reduction in memory footprint. Details on our new quantized Llama 3.2 on-device models ➡️ ai.meta.com/blog/meta-llam… While quantized models have existed in the community before, these approaches often came at a tradeoff between performance and accuracy. To solve this, we used Quantization-Aware Training with LoRA adaptors as opposed to only post-processing. As a result, our new models offer a reduced memory footprint, faster on-device inference, accuracy and portability, while maintaining quality and safety for developers to deploy on resource-constrained devices. The new models can be downloaded now from Meta and on @huggingface.

We’ve released QUANTIZED Llama 3.2 1B/3B models. ⚡️FAST and EFFICIENT: 1B decodes at ~50 tok/s on a MOBILE PHONE CPU. ⚡️As ACCURATE as full-precision models. ⚡️Ready to CONSUME on mobile devices. Looking forward to on-device experiences these models will enable! Read more👇