Rahul Kumar

@hellorahulk

building memory technology | @timelnapp 🪄 prev: Chief AI @apres_io @neuronslab

Anthropic pays $750,000+ a year for engineers who can build LLM architectures from scratch. Stanford taught the entire thing in a 1-hour lecture & released it for free. Bookmark & watch this today before someone takes it down.

New for financial services: ready-to-run Claude agent templates for building pitches, conducting valuation reviews, closing the books at month-end, and more. Install them as plugins in Cowork and Claude Code, or use our cookbooks to run them in production as Managed Agents.

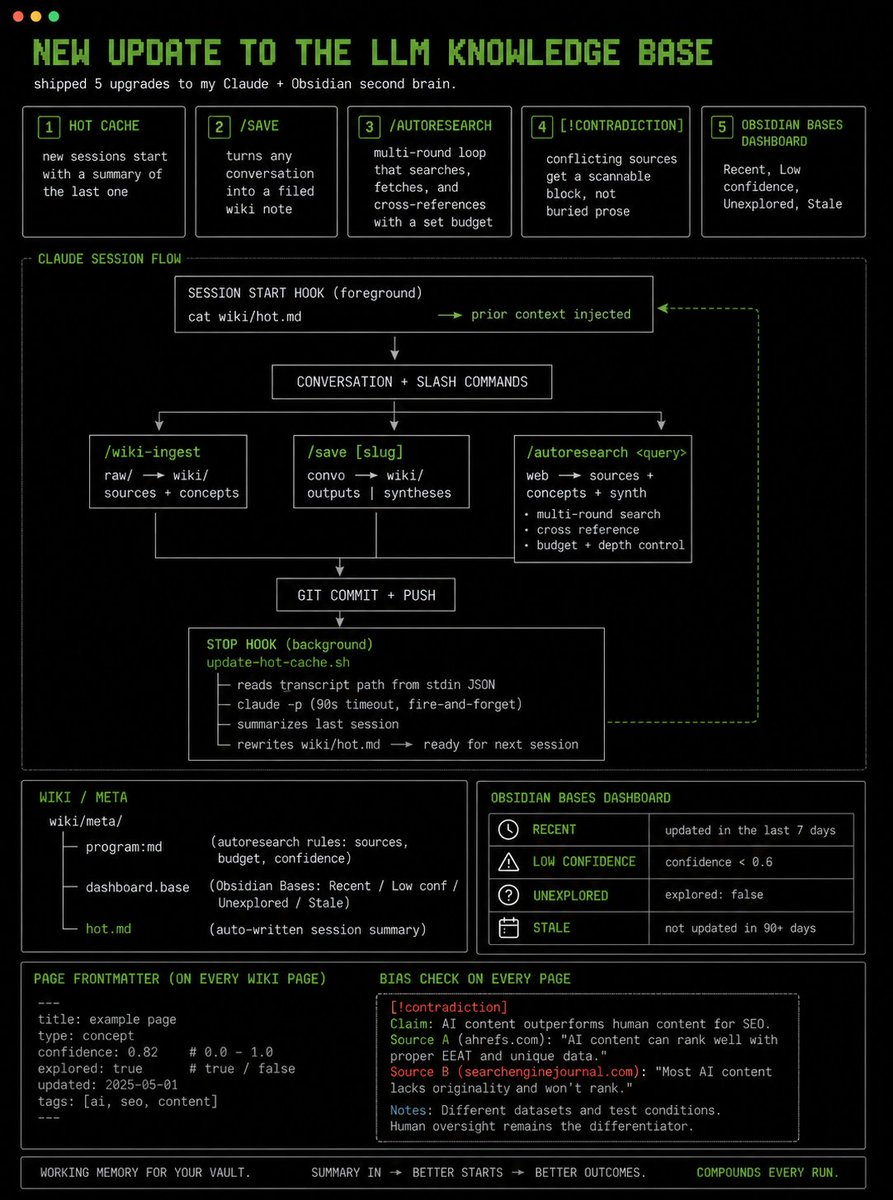

new update to the LLM Knowledge base: shipped 5 upgrades to my Claude + Obsidian second brain today:

- hot cache → new sessions start with a summary of the last one
- /save → turns any conversation into a filed wiki note
- /autoresearch → multi-round loop that searches, fetches, and cross-references within a set budget
- [!contradiction] callouts → conflicting sources get a scannable block instead of being buried in prose
- Obsidian Bases dashboard → Recent, Low confidence, Unexplored, Stale

every page has confidence + explored frontmatter. the dashboard shows what's shaky, unreviewed, or 90+ days old.

the hot cache is the one I'll notice daily. a Stop hook runs claude -p on the transcript and rewrites wiki/hot.md; SessionStart injects it, which means the vault has working memory.
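A minimal sketch of the hot-cache loop, assuming Python 3 and Claude Code command hooks. Only the Stop/SessionStart events, `claude -p`, and `wiki/hot.md` come from the post; the summary prompt, the character cap, and the `HOT_CACHE_CHILD` guard variable are hypothetical. It assumes the Stop hook receives JSON on stdin with a `transcript_path` field, and that Claude Code adds a SessionStart hook's stdout to the new session's context. The `runner` parameter lets the `claude -p` call be swapped out for testing.

```python
import json
import os
import subprocess
import sys
from pathlib import Path

HOT_PATH = Path("wiki/hot.md")   # from the post; assumed relative to the vault root
GUARD_VAR = "HOT_CACHE_CHILD"    # hypothetical env var marking the nested claude -p run
MAX_CHARS = 50_000               # hypothetical cap on transcript text sent for summarizing
SUMMARY_PROMPT = (               # hypothetical prompt wording
    "Summarize this session transcript for the next session: "
    "decisions made, open questions, and notes touched."
)


def summarize(transcript, runner=subprocess.run):
    """Pipe the transcript into `claude -p` (non-interactive print mode)."""
    result = runner(
        ["claude", "-p", SUMMARY_PROMPT],
        input=transcript,
        capture_output=True,
        text=True,
        check=True,
        env={**os.environ, GUARD_VAR: "1"},  # mark the child so its own hooks skip
    )
    return result.stdout.strip()


def on_stop(hook_input, hot_path=HOT_PATH, runner=subprocess.run):
    """Stop hook: rewrite the hot cache from the tail of the session transcript."""
    if os.environ.get(GUARD_VAR):
        return  # running inside the nested summarizer session; do nothing
    text = Path(hook_input["transcript_path"]).read_text(encoding="utf-8")
    summary = summarize(text[-MAX_CHARS:], runner)
    hot_path.parent.mkdir(parents=True, exist_ok=True)
    hot_path.write_text(summary + "\n", encoding="utf-8")


def on_session_start(hot_path=HOT_PATH):
    """SessionStart hook: return the cached summary (empty if none exists yet)."""
    return hot_path.read_text(encoding="utf-8") if hot_path.exists() else ""


if __name__ == "__main__":
    if sys.argv[1:] == ["stop"]:
        on_stop(json.load(sys.stdin))
    else:
        print(on_session_start())  # stdout becomes session context (assumed)
```

Under these assumptions it would be registered as two command hooks, e.g. `python3 hot_cache.py stop` for Stop and `python3 hot_cache.py start` for SessionStart (the file name is hypothetical). The environment guard is there because the nested `claude -p` run may load the same project hooks and would otherwise fire Stop again.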
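The /autoresearch idea can be sketched as a bounded search → fetch → cross-reference cycle. This is an illustration under stated assumptions, not the author's implementation: `search`, `fetch`, and `follow_ups` are hypothetical caller-supplied functions (a search call, a page fetcher, and a step that reads everything fetched so far and proposes the next round's queries), and the "set budget" is modeled as a cap on rounds and on total fetches.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Budget:
    max_rounds: int = 3    # hypothetical default
    max_fetches: int = 10  # hypothetical default


def autoresearch(
    question: str,
    search: Callable[[str], List[str]],
    fetch: Callable[[str], str],
    follow_ups: Callable[[str, Dict[str, str]], List[str]],
    budget: Budget = Budget(),
) -> Dict[str, str]:
    """Run search/fetch/cross-reference rounds until the budget or leads run out."""
    queries = [question]
    sources: Dict[str, str] = {}  # url -> fetched text, in fetch order
    fetches = 0
    for _ in range(budget.max_rounds):
        # Search: collect unseen URLs for this round's queries, de-duplicated.
        new_urls: List[str] = []
        for query in queries:
            for url in search(query):
                if url not in sources and url not in new_urls:
                    new_urls.append(url)
        if not new_urls:
            break
        # Fetch: stop as soon as the fetch budget is spent.
        for url in new_urls:
            if fetches >= budget.max_fetches:
                return sources
            sources[url] = fetch(url)
            fetches += 1
        # Cross-reference: compare what has been gathered and pick the next queries.
        queries = follow_ups(question, sources)
        if not queries:
            break
    return sources
```

Keeping the budget as explicit round and fetch caps makes the loop's cost predictable regardless of how many leads each round turns up.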
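The dashboard's four views reduce to simple filters over page frontmatter. The post doesn't show its Obsidian Bases definitions, so this Python sketch only models the filtering logic: `confidence` and `explored` are the fields named in the post, while the `updated` date field, the `low` confidence value, and the 7-day Recent window are hypothetical. Stale uses the post's 90-day threshold. The frontmatter reader handles only flat `key: value` lines, not full YAML.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List

STALE_AFTER = timedelta(days=90)   # "90+ days old" from the post
RECENT_WITHIN = timedelta(days=7)  # hypothetical window for the Recent view


@dataclass
class Page:
    path: str
    confidence: str  # frontmatter field from the post; value scale assumed
    explored: bool   # frontmatter field from the post
    updated: date    # hypothetical last-reviewed date field


def parse_frontmatter(text: str) -> Dict[str, str]:
    """Read flat `key: value` pairs between leading '---' fences (not full YAML)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: Dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def page_from_note(path: str, text: str) -> Page:
    meta = parse_frontmatter(text)
    return Page(
        path=path,
        confidence=meta.get("confidence", "unknown"),
        explored=meta.get("explored", "false").lower() == "true",
        updated=date.fromisoformat(meta["updated"]),
    )


def dashboard(pages: List[Page], today: date) -> Dict[str, List[str]]:
    """Bucket pages into the four views; one page can appear in several."""
    views: Dict[str, List[str]] = {
        "Recent": [], "Low confidence": [], "Unexplored": [], "Stale": []
    }
    for page in pages:
        age = today - page.updated
        if age <= RECENT_WITHIN:
            views["Recent"].append(page.path)
        if page.confidence == "low":
            views["Low confidence"].append(page.path)
        if not page.explored:
            views["Unexplored"].append(page.path)
        if age >= STALE_AFTER:
            views["Stale"].append(page.path)
    return views
```

Because the views overlap, a single old, unreviewed, low-confidence note shows up in three of them, which is what makes it easy to spot.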