Pinned Tweet

kailash

2.7K posts

@jtregunna Makes sense.. LSP is key. The problem with repo maps without LSP is that they get stale every turn.

Another possible idea is a context 'sieve' that sifts in relevant info from a large context for an LLM query, perhaps implemented with an SLM? Many ideas unexplored..


Yeah it's on my reading list, at work right now so will check later. Also thinking of a major rewrite of ctrl+code just to slim it down, and of making a context router out of harnessutils, one of the libraries I use in ctrl+code. It wouldn't have just my approach but a bunch of different approaches you pick and choose from: an ontology of interaction types, a cheap way to classify a turn (TBD), and that ontology picks which approach makes sense. Continuation type? Just take the last prompt + the minimal delta of whatever the user's one-word-ish prompt said. Debugging? Focus your retrieval: error logs, recent changes, test failures, LSP symbols in the failing module, etc. It'll be robust.
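A rough sketch of what such a router could look like, in Python. Nothing here is taken from ctrl+code or harnessutils: the `Turn` type, the keyword-based `classify` heuristic (standing in for the classifier that is still TBD), and the placeholder strings in the debugging strategy are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Turn:
    prompt: str          # the user's current prompt
    history: List[str]   # earlier user prompts, oldest first


def classify(turn: Turn) -> str:
    """Hypothetical cheap classifier: keyword and length heuristics only."""
    text = turn.prompt.lower()
    if any(word in text for word in ("error", "traceback", "fails", "failing", "bug")):
        return "debugging"
    if len(text.split()) <= 3 and turn.history:
        return "continuation"
    return "default"


def continuation_context(turn: Turn) -> List[str]:
    # Last prompt plus the minimal delta from the short follow-up.
    return [turn.history[-1], turn.prompt]


def debugging_context(turn: Turn) -> List[str]:
    # Placeholders for what a real harness would retrieve here.
    return ["<error logs>", "<recent changes>", "<test failures>",
            "<LSP symbols in failing module>", turn.prompt]


def default_context(turn: Turn) -> List[str]:
    return [turn.prompt]


STRATEGIES: Dict[str, Callable[[Turn], List[str]]] = {
    "continuation": continuation_context,
    "debugging": debugging_context,
    "default": default_context,
}


def route(turn: Turn) -> List[str]:
    """Pick a context-building strategy based on the interaction type."""
    return STRATEGIES[classify(turn)](turn)
```

Swapping the heuristic for a small model would only change `classify`; the strategy table stays the same.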


Coding harnesses listen up:

Integrate LSPs, you can cover many languages as there are many quality LSPs.

Use those LSPs to build indexes and local semantic understanding around things like invariants, contracts, call graphs, etc., and where they're located.

When you use the edit/update tool, track what file was changed, what offsets, update those in the indexes.
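One way to picture the offset bookkeeping, as a minimal Python sketch. The `Symbol` record and `apply_edit` function are hypothetical names, and offsets are assumed to be character offsets within a single file; a real harness would probably re-query the LSP server for anything marked stale.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Symbol:
    name: str
    start: int           # offset of the first character
    end: int             # offset one past the last character
    stale: bool = False  # True when the symbol needs re-indexing


def apply_edit(symbols: List[Symbol], edit_start: int, edit_end: int, new_len: int) -> None:
    """Update indexed offsets after [edit_start, edit_end) was replaced by new_len characters."""
    delta = new_len - (edit_end - edit_start)
    for sym in symbols:
        if sym.end <= edit_start:
            continue                      # entirely before the edit: untouched
        if sym.start >= edit_end:
            sym.start += delta            # entirely after the edit: shift
            sym.end += delta
            continue
        sym.stale = True                  # overlaps the edit: re-index later
        if sym.start <= edit_start and edit_end <= sym.end:
            sym.end += delta              # edit fully inside: body grew or shrank


index = [Symbol("a", 0, 10), Symbol("b", 20, 30), Symbol("c", 40, 50)]
apply_edit(index, edit_start=22, edit_end=25, new_len=8)
```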

You can probably take it from here, but you'll want some sort of scoring system on the code you index.

Then, on the next turn, don't just shit this turn's response into the context window and continue... reset the context window (you know, what some harnesses have a /clear command for), and recreate it based on the user's next turn prompt.

Rinse, repeat.
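The score-then-rebuild loop could be sketched like this in Python. The scoring rule (identifier overlap with the prompt plus a bonus for recently edited code) and the character budget are assumptions chosen for illustration, not a recommendation from the post.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Entry:
    path: str
    text: str
    recently_edited: bool = False


def tokens(s: str) -> Set[str]:
    return set(re.findall(r"[a-z_]+", s.lower()))


def score(entry: Entry, prompt: str) -> float:
    """Hypothetical score: shared identifiers, plus a bonus for recent edits."""
    overlap = len(tokens(entry.text) & tokens(prompt))
    return overlap + (1.0 if entry.recently_edited else 0.0)


def rebuild_context(index: List[Entry], prompt: str, budget_chars: int) -> List[Entry]:
    """Start from an empty context and refill it for this prompt only."""
    ranked = sorted(index, key=lambda e: score(e, prompt), reverse=True)
    context: List[Entry] = []
    used = 0
    for entry in ranked:
        if score(entry, prompt) <= 0:
            break                          # nothing relevant left
        if used + len(entry.text) > budget_chars:
            continue                       # too big, try smaller entries
        context.append(entry)
        used += len(entry.text)
    return context
```

Calling `rebuild_context` once per turn, instead of appending to the previous context, is the "reset and recreate" step.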

Yes it adds latency... yes it improves accuracy and context window management... no almost nobody does it this way because they're bloody moronic.

Use your own human brain from time to time at least when working on your harnesses.


@jtregunna I'd love to hear your take on the approach I've taken (doc linked earlier). It's a turn-based rolling window that just tells the LLM where to look for history. I've found it effective. I'll check out ctrl+code.


@hsaliak Yeah, my approach is not quite fully complete in ctrl+code, but it's in this direction. Don't even need an embedding model if you're ok burning a turn in the loop on it though, but yup. The key is to shed messages like we'd shed traffic during a DoS attack on a website.
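The load-shedding analogy, as a small Python sketch: drop the lowest-priority messages first until the context fits. The `(priority, text)` tuples and the character budget are assumptions; how priorities get assigned is left open.

```python
from typing import List, Tuple

Message = Tuple[int, str]  # (priority, text); higher priority survives longer


def shed(messages: List[Message], budget: int) -> List[Message]:
    """Drop lowest-priority messages until total length fits, keeping original order."""
    total = sum(len(text) for _, text in messages)
    keep = set(range(len(messages)))
    # Visit candidates from lowest to highest priority.
    for i in sorted(keep, key=lambda i: messages[i][0]):
        if total <= budget:
            break
        keep.discard(i)
        total -= len(messages[i][1])
    return [messages[i] for i in sorted(keep)]
```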


@jtregunna But your approach will certainly work.

1. Build a code map based on user request.

2. Use an embedding model to obtain relevant data from it.

3. Ignore irrelevant data (or consider the previous N turns' user messages)

Has legs. It could work!
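The three steps above could be sketched roughly as follows. The bag-of-words `embed` function is a toy stand-in for a real embedding model, and the code map (path to short description), `top_k`, and `min_sim` are illustrative assumptions.

```python
import math
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(code_map: Dict[str, str], request: str,
             top_k: int = 2, min_sim: float = 0.1) -> List[str]:
    """Rank code-map entries against the request; drop anything below min_sim."""
    query = embed(request)
    scored = [(cosine(query, embed(desc)), path) for path, desc in code_map.items()]
    best = sorted(scored, reverse=True)[:top_k]
    return [path for sim, path in best if sim >= min_sim]


code_map = {
    "auth.py": "login session token refresh",
    "db.py": "sql connection pool",
    "ui.py": "button layout theme",
}
relevant = retrieve(code_map, "token refresh bug")
```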


@jtregunna I've noticed that the topic often shifts between 'message groups' (a group runs from a user request to the final response); it's like moving from one scene to another. I take advantage of this insight in designing the context. You can cover multi-turn context by tuning the number of groups to consider.
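A minimal Python sketch of the grouping idea, assuming messages arrive as `(role, text)` pairs and that every `"user"` message opens a new group. This is not std::slop's actual implementation, just one way to express the window.

```python
from typing import List, Tuple

Message = Tuple[str, str]  # (role, text)


def group_messages(messages: List[Message]) -> List[List[Message]]:
    """Split a transcript into groups, each starting at a user request."""
    groups: List[List[Message]] = []
    for role, text in messages:
        if role == "user" or not groups:
            groups.append([])
        groups[-1].append((role, text))
    return groups


def rolling_window(messages: List[Message], n_groups: int) -> List[Message]:
    """Keep only the last n_groups groups; n_groups is the tuning knob."""
    if n_groups <= 0:
        return []
    recent = group_messages(messages)[-n_groups:]
    return [msg for group in recent for msg in group]
```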


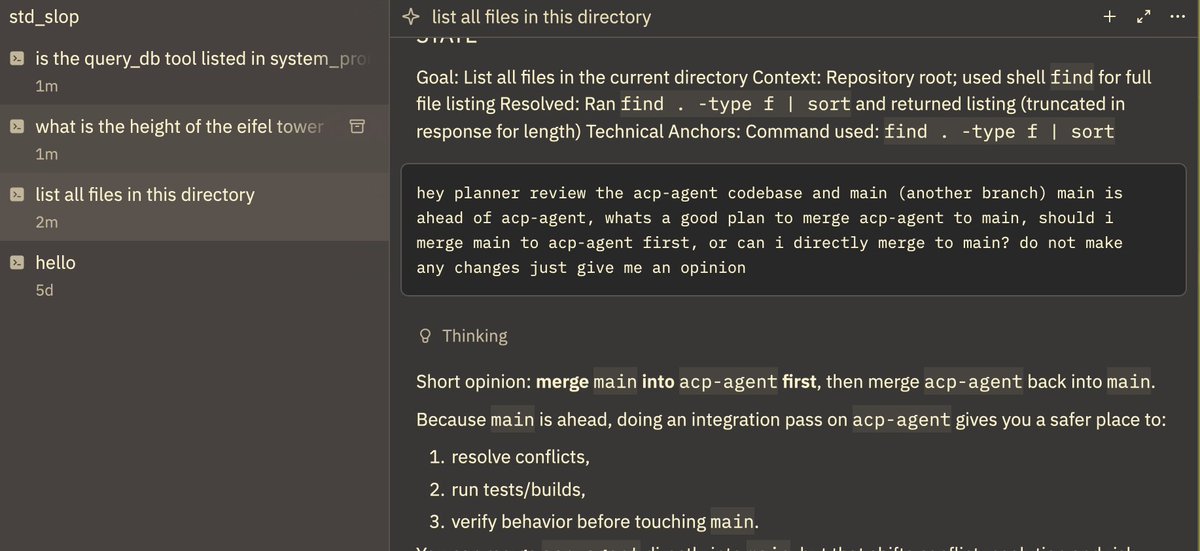

The multi-agent feature announced by @zeddotdev totally works with std::slop's ACP support! I don't think I'll merge this feature to main because of the complexity of the protocol, but it's been an interesting learning experiment.


@bkaradzic @olson_dan No arguments there, but this nuance is lost on a non technical audience with a blanket policy.


@hsaliak @olson_dan This is not a library, it's a compiler extension. It's like using GCC.


@bkaradzic @olson_dan Many companies or teams have an uphill battle to climb the moment discussion on vendoring a gpl3 codebase starts, regardless of use. It’s a hurdle.


@olson_dan Orthodoxy - Clang plugin to enforce custom C++ feature restrictions

github.com/d-musique/orth…


@olson_dan No-exceptions Abseil and C++17 works for me. My problem with C++20 is not really the language changes, but the standard library bloat with features unsuitable for high-performance code. Ranges in C++20, for example.


Spent time this weekend on a new release of std::slop. Native OAuth flow, so you can get going with a single binary download.

Revised documentation github.com/hsaliak/std_sl…

UX polish. github.com/hsaliak/std_sl…

ACP support remains in a separate branch for now. I’m not convinced.


@jorandirkgreef The value of compounding engineering work. It beats haste all the time.


@olson_dan Please don’t get me wrong, I know I can still do this, but the pricing model is not consistent with what I perceive to be the ideal workflow. Pricing has to make the right things easy and the wrong things hard, hence the questions.


@olson_dan Ok I see - use the SOTA model for one-shot but use something simpler for conversational workflows. I still feel that this is the opposite of how LLMs work.. and I feel that I need to converse and “close the loop” in a fundamentally non deterministic workflow, but I will try it!


I am a Microsoft employee if you go down the org chart far enough, so take that as a disclaimer. But I think GitHub Copilot is one of the best values in AI all around right now for personal stuff.

OpenCode and Copilot CLI both support it, and both are not bad. Another disclaimer: I haven't tried Codex or Claude Code to see how they compare.
