iamrobotbear (bk)

27.7K posts

@iamrobotbear

Product Manager & AI Engineer working on Gen AI & ML. Opinions are my own, not my employer's. RT != endorsement

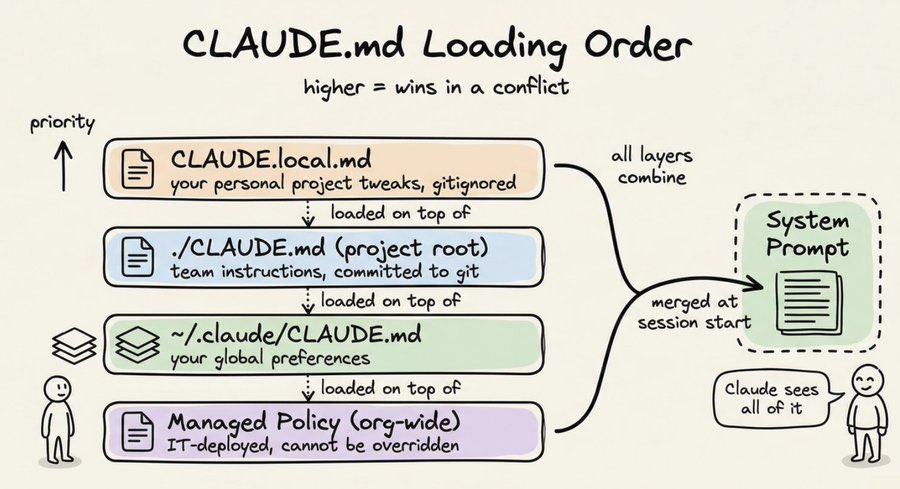

I want to make /init more useful. What do you think it should do to help set up Claude Code in a repo?

LlamaParse now has an official Agent Skill you can use across 40+ agents. With built-in instructions for parsing complex documents, including different formats, tables, charts, and images, your agents gain access to deeper document understanding, not just raw text extraction.

👇 Watch the demo

📖 Read the docs: developers.llamaindex.ai/python/cloud/l…

🚀 Get started with LlamaCloud: cloud.llamaindex.ai/signup?utm_sou…

We've spent years building LlamaParse into the most accurate document parser for production AI. Along the way, we learned a lot about what fast, lightweight parsing actually looks like under the hood.

Today, we're open-sourcing a light-weight core of that tech as LiteParse 🦙

It's a CLI + TS-native library for layout-aware text parsing from PDFs, Office docs, and images. Local, zero Python dependencies, and built specifically for agents and LLM pipelines.

Think of it as our way of giving the community a solid starting point for document parsing:

npm i -g @llamaindex/liteparse

lit parse anything.pdf

- preserves spatial layout (columns, tables, alignment)
- built-in local OCR, or bring your own server
- screenshots for multimodal LLMs
- handles PDFs, office docs, images

Blog: llamaindex.ai/blog/liteparse…

Repo: github.com/run-llama/lite…

VLMs today—including our own Molmo—point via raw text strings (e.g. "

We've been building an internal Claude Code plugin system at Intercom with 13 plugins, 100+ skills, and hooks that turn Claude into a full-stack engineering platform. Lots done, more to do. Here's a thread of some highlights.