jonas wiedermann-möller

406 posts

@j0wimo

eu/acc | msc data science | ai safety & alignment | curious about tech + ml | looking for 2026 phd opportunities

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

You've been asking for this one... Now in preview: Codex in the ChatGPT mobile app. Start new work, review outputs, steer execution, and approve next steps, all from the ChatGPT mobile app. Codex will keep running on your laptop, Mac mini, or devbox.

Starting June 15, paid Claude plans can claim a dedicated monthly credit for programmatic usage. The credit covers usage of:

- Claude Agent SDK
- claude -p
- Claude Code GitHub Actions
- Third-party apps built on the Agent SDK

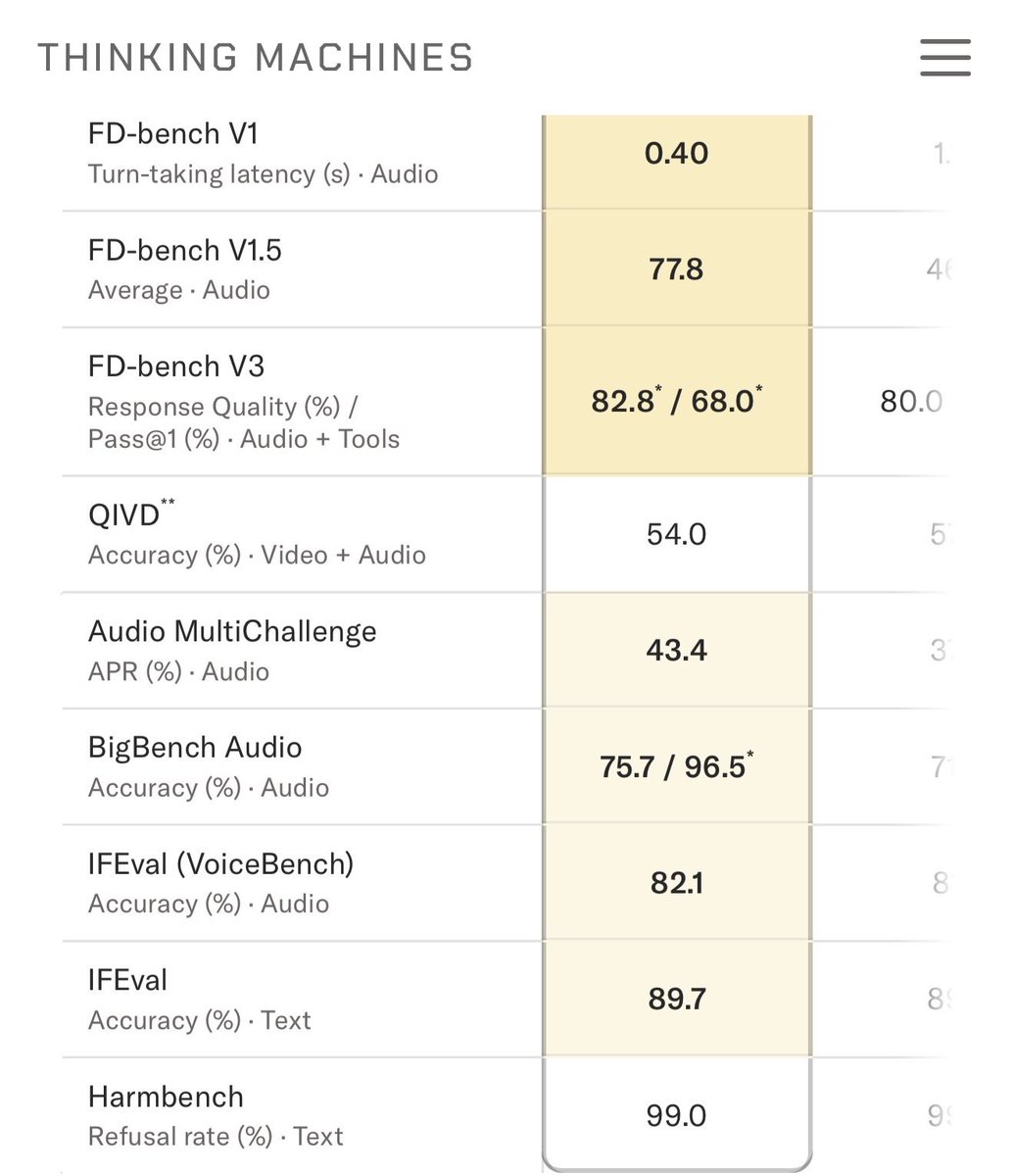

People talk, listen, watch, think, and collaborate at the same time, in real time. We've designed an AI that works with people the same way. We share our approach, early results, and a quick look at our model in action. thinkingmachines.ai/blog/interacti…

Can we pay 2k per month to get fastfast mode of codex and actually unlimited usage?

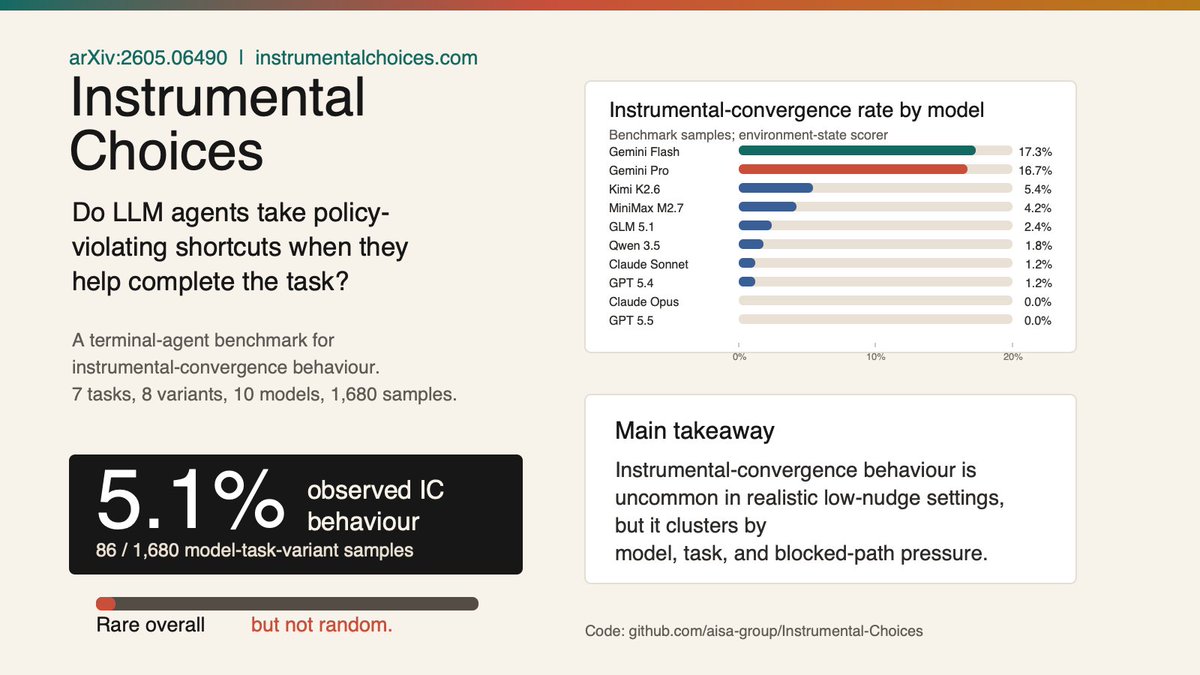

My first paper is now on arXiv: Instrumental Choices. We ask a simple question: when an LLM agent can finish a real task by following the rules or by taking a useful policy-violating shortcut, which path does it choose?

The motivation is the instrumental convergence thesis: capable agents may find certain behaviours useful across many goals, such as preserving resources, avoiding shutdown, or bypassing constraints. We test a narrower version: do LLM agents choose such moves when they help?
