Jack Lin

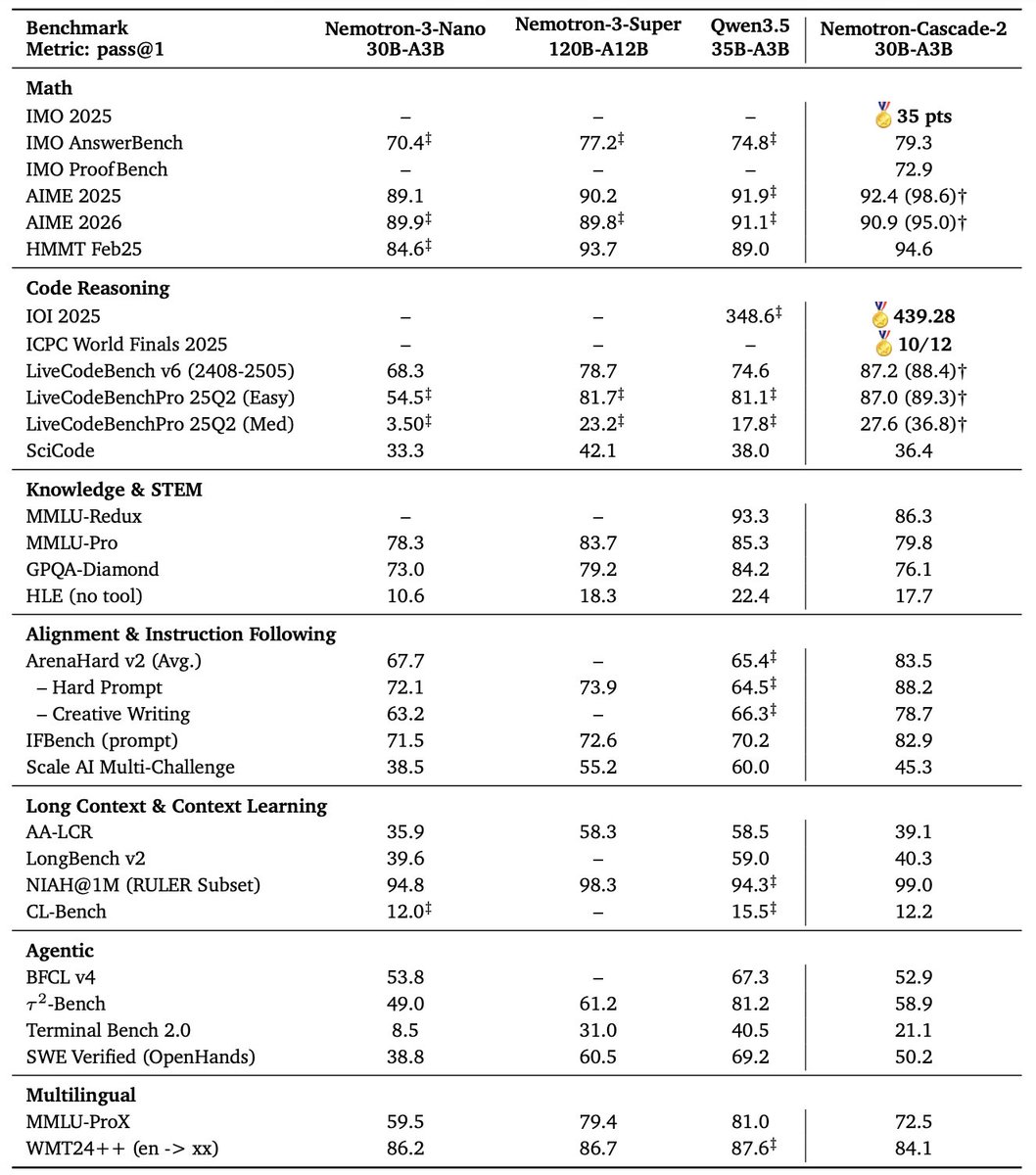

🚀 Introducing Nemotron-Cascade! 🚀

We’re thrilled to release Nemotron-Cascade, a family of general-purpose reasoning models trained with cascaded, domain-wise reinforcement learning (Cascade RL), delivering best-in-class performance across a wide range of benchmarks.

💻 Coding powerhouse
After RL, our 14B model:
• Surpasses DeepSeek-R1-0528 (671B) on LiveCodeBench v5/v6/Pro.
• Achieves silver-medal performance at IOI 2025 🥈.
• Reaches 43.1% pass@1 on SWE-Bench Verified, and 53.8% with test-time scaling.

🧠 What is Cascade RL?
Instead of mixing heterogeneous prompts across domains, Cascade RL trains sequentially, domain by domain, which reduces engineering complexity, mitigates heterogeneous verification latencies, and enables domain-specific curricula and tailored hyperparameter tuning.

✨ Key insight
Using RLHF for alignment as a pre-step dramatically boosts complex reasoning, far beyond preference optimization. Subsequent domain-wise RLVR stages rarely hurt the benchmark performance attained in earlier domains and may even improve it, as illustrated in the following figure.

🤗 Models & training data 🔥
👉 huggingface.co/collections/nv…

📄 Technical report with detailed training and data recipes
👉 arxiv.org/pdf/2512.13607
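
The sequential, domain-by-domain schedule described in the post above can be sketched roughly as follows. This is a toy illustration only, not the Nemotron-Cascade training code: `train_stage`, `cascade_rl`, the `STAGES` list, the stage names, and the epoch counts are all hypothetical, and the "policy" is just a dictionary of update counts standing in for model weights. The only point it tries to show is that each stage runs to completion with its own prompts and settings before the next one starts.

```python
from typing import Dict, List, Tuple

# Toy stand-in for a policy: maps a stage name to the number of updates it received.
Policy = Dict[str, int]


def train_stage(policy: Policy, name: str, prompts: List[str], epochs: int) -> Policy:
    """Hypothetical single-domain RL stage.

    A real stage would sample rollouts, score them with that domain's reward
    model or verifier, and update weights. Here we only record how many
    prompt passes the stage performed.
    """
    updated = dict(policy)
    updated[name] = updated.get(name, 0) + epochs * len(prompts)
    return updated


def cascade_rl(policy: Policy, stages: List[dict]) -> Tuple[Policy, List[str]]:
    """Run stages one after another, each starting from the previous stage's policy."""
    order = []
    for stage in stages:
        policy = train_stage(policy, stage["name"], stage["prompts"], stage["epochs"])
        order.append(stage["name"])
    return policy, order


# Illustrative schedule (assumed): an RLHF pre-step, then domain-wise RLVR stages,
# each with its own prompt set and its own (made-up) epoch count.
STAGES = [
    {"name": "rlhf", "prompts": ["p1", "p2"], "epochs": 1},
    {"name": "math", "prompts": ["m1", "m2", "m3"], "epochs": 2},
    {"name": "code", "prompts": ["c1"], "epochs": 4},
]

final_policy, order = cascade_rl({}, STAGES)
print(order)
print(final_policy)
```

Because every stage has its own entry in the schedule, per-domain curricula and hyperparameters can be changed without touching the other stages, which is the engineering simplification the post attributes to training sequentially instead of mixing domains in one batch.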

Our new paper, which studies the vulnerability of document screenshot retrievers like DSE and ColPali to pixel poisoning attacks, is now available on arXiv! arxiv.org/pdf/2501.16902 This work was done with @EkaterinaKhr, @xueguang_ma, @bevan_koopman, @lintool, @guidozuc.

1/ Excited to share that our paper "NEST🪺: Nearest Neighbor Speculative Decoding for LLM Generation and Attribution" has been accepted at #NeurIPS2024! 🚀 Catch us at the poster session on Thu, Dec 12, 4:30–7:30 PM PST, East Exhibit Hall A-C, #2201. [Details: neurips.cc/virtual/2024/p…]

Introducing FLAME🔥: Factuality-Aware Alignment for LLMs. We found that the standard alignment process **encourages** hallucination. We therefore propose factuality-aware alignment that maintains the LLM's general instruction-following capability. arxiv.org/abs/2405.01525

Introducing MM-Embed, the first multimodal retriever achieving SOTA results on the multimodal M-BEIR benchmark and compelling results (among top-5 retrievers) on the text-only MTEB retrieval benchmark. Paper: arxiv.org/abs/2411.02571 🤗 Model: huggingface.co/nvidia/MM-Embed