🄹🄳🄷

Black swan events come with their own set of disruptions. TSMC is not getting helium right now. The Port of Singapore is having logistics issues, with cargo delayed. Crude stuck in the Strait of Hormuz is causing a storage glut. The world is going through a manufactured supply chain disruption.

@HPbasketball Now that I think about it, it’s hard to argue that “Shai is the best scorer since…” when Luka is playing. Both have around 500 career games. Luka has scored 14,570 points vs. SGA’s 13,000. Luka has 57 40-point games; SGA has 31. SGA has been more efficient recently, though.

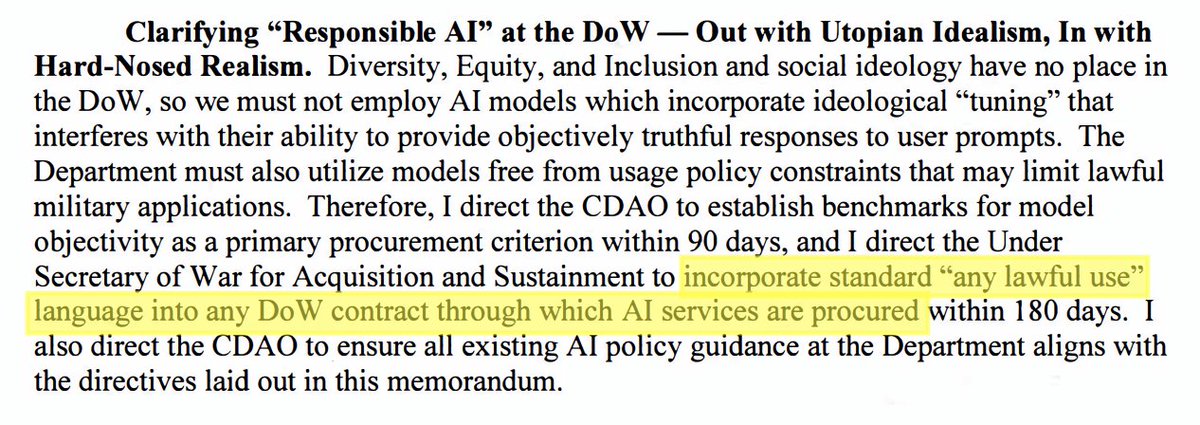

I've been thinking more about this Anthropic vs. Department of War fight, and there's a weird wrinkle here, separate from the Defense Production Act mess. And even if what I said earlier sounded pro-Anthropic, this part might not. (Sorry, Claudites.)

Here's the question: who do we want making military decisions? It's an old question, but AI makes it fresh again.

When Colt sells the military an M16, Colt is out of the loop. Not just legally, but practically. Colt has no way to override a soldier's judgment about where to point the gun or when to pull the trigger. The gun's limits are baked in at the point of sale, and really even earlier.

AI isn't like that. Even if the military runs an AI model on its own hardware and fine-tunes it in-house, the base model can still carry the developer's built-in constraints and priorities. That's kind of the point of "alignment": the model keeps following certain principles across many different situations. So a model used in an operation could spit out answers, take actions, or refuse to take actions that clash with what a soldier asks, what a commander orders, or what the mission requires.

This basic issue isn't new. Whether and how "upstream" model choices shape "downstream" behavior is a huge debate in AI policy. It's why some people want to regulate model developers directly, and why others want to pin liability on them when an application causes harm. But in most of those debates, the worrying outcome is one the model developer didn't intend. I don't think anyone believes model developers intend to cause AI psychosis or libel or whatever. The classic exception is the concern over "woke AI," where critics claim the model is intentionally steered to reflect certain "woke" values. This Anthropic/DoW dust-up is more like the "woke AI" debate than most of AI policy, because it is about intentional changes to the model.

You can imagine the DoW worrying that Anthropic's training choices, its "constitution," etc., could make Claude resist certain tasks or nudge decisions in ways that undercut military intent. The fear isn't AI accidentally failing; it's the AI subverting the chain of command.

Now, I don't know for sure that this is the concern driving the disagreement with Anthropic. Another read of the reporting is simpler: maybe it's just about which programs Anthropic will participate in. The company has been vocal that it doesn't want its tech used for domestic surveillance or autonomous weapons. But I suspect the military's bigger worry isn't "we can't use Claude for Program X." It's "we can't trust Claude to do what we need when it matters." A few things point that way:

- The military says this isn't about surveillance or autonomous weapons.
- The dispute reportedly flared when Anthropic raised concerns about use tied to seizing Maduro. That's not domestic surveillance, and it's not obviously autonomous weapons either.
- The threat to label Anthropic a supply-chain risk makes a lot more sense if the worry runs deeper than which programs use Claude: if the worry is that the model itself carries values that could conflict with defense objectives.

To me, that concern makes sense. It no doubt applies to every AI model developer, not just Anthropic. But I understand why the military wants a partner that will work to embed the military's values and directives in the AI model, even if that overrides the company's values.

I'm no expert on the institutions that govern military force. But it seems fundamental that the monopoly on legitimate force has to sit with **politically accountable** government actors. If outside parties can intentionally shape how military tools behave in decisive moments, that blurs responsibility. Commanders still own the consequences of military actions. They should be able to demand tools that let them make the calls they'll be held accountable for.

To be clear, I don't think Anthropic has to sell anything to the U.S. military. They can refuse service. That's their right. But if they do sell the service, the procurement model should look more like the M16: once the deal is done, the military makes the decisions. And the military owns the results.

I have to admit: I managed to get my hands on a piece by @kimasendorf (commissioned by @jdh). 45DF #96 is in the vault now. I love everything about it: the unmistakable digital language of Kim, the physical object, even the wild packaging. This piece has a special place in my collection, and it always will. And maybe I played the tiniest part in how all of this came to be. That’s what many people don’t understand: if you collect for the right reasons, you’re so much more than a buyer. You can’t help but engage with artists, open doors, and offer support where you can. Something grows, something far more complex than what’s visible from the outside. For me, this work also stands for that complexity. Thank you, Kim. (And of course @jdh as well.)

no one’s gonna believe me, but becoming a good speaker is really easy: just record yourself for 10 minutes every day, first thing in the morning. don’t send it to anyone; just force yourself to watch it later. you’ll notice every flaw you can imagine.