J

91 posts

@mallocblock @captain0001 @pflodin @pronounced_kyle After many flights with horrible media quality, I highly doubt that the source files even have that bitrate lol

English

@captain0001 @pflodin @pronounced_kyle The audio is streamed over AVDTP. A good audio quality stream is +380kb/s. In such a noisy location that can probably be reduced by 100kb and not be easily noticed.

English

I would love to hear an RF engineer talk about how this is possible.

There's only so much 2.4 GHz bandwidth... and planes are gigantic metal tubes...

gaut@0xgaut

airlines finally letting you connect your own headphones via bluetooth to screens is life changing

English

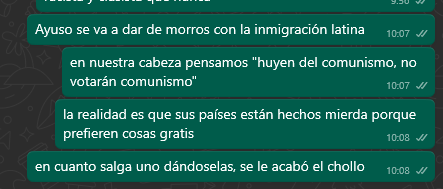

@iknovusnucleo @Franquistaaa @basedn7 Pues como venezolano en España te digo que todos mis familiares (venezolanos hijos de españoles) y amigos venezolanos nacionalizados votan derecha. La putada es q aún así hay mucho venezolano retrasado que no se da cuenta de que el PSOE nos lleva por el mismo camino, es demencial

Español

@Franquistaaa @basedn7 Salvo contadas excepciones (cubanos), el 90% de los panxos votan a izquierda. El otro día mismamente un venezolano me dijo: "Yo sé que Sánchez es un hijoputa pero estoy aquí gracias a él, así que le tengo que votar".

No se podía saber.

Español

@DePasqualeOrg Oh yes, I’m sorry for not clarifying it, I thought it was clear. The app and all the other models work perfectly!

English

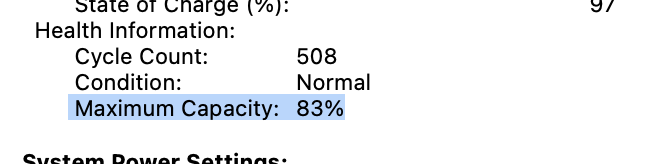

@dessatel @mweinbach TBF, I’ve seen some implementations (I think it was MLX?) that disregard the NPU and use the GPU and CPU exclusively for better speeds. Would love to see the comparison between mlc-llm, MLX, and llama.cpp, running on both M3 and M4 chips!

English

@mweinbach Not surprising. A lot of cookie-cutter reviews. GPU AI reviews will come first. Apple Neural Engine will be the last to come. I miss AnandTech.

English

@ErkanEker @sille_jazavac @politypto @AbdulSbeei @theonecid @theapplehub Yeah, I can definitely use everything except for iPhone mirroring, and I’m in Spain with a US Apple ID

English

@sille_jazavac @politypto @AbdulSbeei @theonecid @theapplehub Why? To show you my full name?

This is more than enough.

You will hear from europeans using intelligence in the next days anyways..

English

@Depthperpixel @flyosity Exactly! Some don’t seem to realize those exact results are the ones people will love to use

English

@flyosity But that IS ready! I want comically bad AI emojis so bad!

English

@DePasqualeOrg Will it be recorded? Sadly, I can’t attend even though I’m in Spain😕

English

I'll be giving a presentation on MLX for Swift developers at Glovo in Barcelona on November 5. Come by if you're in town! meetup.com/nsbarcelona/ev…

English

@rossetate Awesome and impressive insight! Additionally it seems to be written in LaTeX?!

English

As the author of this PDF, it's been interesting seeing people guess at the rationale behind its design. However, the rationale had nothing to do with theory vs practice, and everything to do with pragmatically coping with an unaccommodated disability in academia. (1/16)

Deedy@deedydas

Compilers was was known to be the hardest CS class at Cornell which was hard as it is. We were handed a 8-page PDF at the start of sem for a language spec we'd be implementing by the end of sem, split into 6 parts. On part 5, the median was a 0/100 and most the class failed.

English

LM Studio 0.3.4 ships with Apple MLX 🚢🍎

Run on-device LLMs super fast, 100% locally and offline on your Apple Silicon Mac!

Includes:

> run Llama 3.2 1B at ~250 tok/sec (!) on M3

> enforce structured JSON responses

> use via chat UI, or from your own code

> run multiple models simultaneously

> download any model from Hugging Face

Video at 1x speed.

English

@le_chuck_melee @literallydenis AFAIK, it’s not that simple, as Apple has been designated as a gatekeeper by the EU (Samsung hasn’t). Still sucks, and I think the EU has overstepped its boundaries once again.

English

I’m concerned:

ChatGPT Advanced Voice Mode – not available in the EU

AI features in iOS – not available in the EU

Llama 3.2 model – not available in the EU

It looks like this is becoming a standard practice. Does the EU government understand that if they continue to delay introducing AI features to their citizens, there will be:

a) Not enough time for EU citizens to learn new technologies, making them less competitive in the world-wide job market

b) Long-term negative effects on the IT sector, as AI development will happen elsewhere, hitting the EU economy hard

English

@ItFadeeYibee @nisten @_philschmid @OpenAI @Alibaba_Qwen Looks like LLM Farm apps.apple.com/us/app/llm-far…

English

We have GPT-4 for coding at home! I looked up @OpenAI GPT-4 0613 results for various benchmarks and compared them with @Alibaba_Qwen 2.5 7B coder. 👀

> 15 months after the release of GPT-0613, we have an open LLM under Apache 2.0, which performs just as well. 🤯

> GPT-4 pricing is $30/$60 while a ~7-8B model is at $0.09/$0.09 that's a cost reduction of ~333-666x times, or if you run it on your machine, it's “free”.💰

Still Mindblown. Full post about Qwen 2.5 tomorrow. 🫡

English

@Evg724 @nisten @_philschmid @OpenAI @Alibaba_Qwen Looks like LLM Farm apps.apple.com/us/app/llm-far…

English

@NathanielIStam @foley2k2 @tosho @mattshumer_ What’s your inference speed (tok/s) when splitting the model between RAM and VRAM? Is it usable? I’ve read about memory swapping (admittedly, to SSD) being super slow but haven’t read much about swapping between VRAM and RAM

English

I've run quantized versions of 70B on my dual 3090s, with about half the layers on 64 GB of ram. I'd probably need 128 GB RAM to do full precision.

The reason I replied with use LMStudio is you can slit the model between your ram and your Vram, I run Llama3.1 Q8 on my 1660 Ti 6GB with that very often

English

We’re looking for a compute sponsor for our 405B run.

Happy to give a shout out when we launch it include you in the report, first access for inference, etc.

Ideally 64x H100s. Please reach out if you’re serious.

Matt Shumer@mattshumer_

I'm excited to announce Reflection 70B, the world’s top open-source model. Trained using Reflection-Tuning, a technique developed to enable LLMs to fix their own mistakes. 405B coming next week - we expect it to be the best model in the world. Built w/ @GlaiveAI. Read on ⬇️:

English

@RobDenBleyker They probably should just fine-tune the model so it uses python to count the letters at this point lol

English