Pinned Tweet

joe

4.5K posts

@jlchnc

I make ai models that use 79.2% fewer tokens | building at @fdotinc

USA, joined March 2023

536 Following, 578 Followers

@StevenPWalsh @fabianstelzer That sounds like a man who has lost all control lol

@fabianstelzer My wife and I once overheard a dad comment "these kids have been drinking undiluted juice!" in a very exasperated voice at a trampoline park... and that one has stuck with us.

We have been spinning up a ton of new clusters for people interested in using a git infrastructure that has 100% uptime and is actually built for their agents, which has been a blast and very exciting

If you would like to try out code[dot]storage for your business dm here or @CoastalFuturist

@kevinnguyendn @tonitrades_ @karpathy I couldn’t get your CLI to work. I tried to index several things and query wouldn't return anything

If you get a chance to open source the benchmarks then they can be verified

Let me know if I can help you debug

@jlchnc @tonitrades_ @karpathy hey, thanks, you can check out our paper arxiv.org/abs/2604.01599, the benchmarks are in there

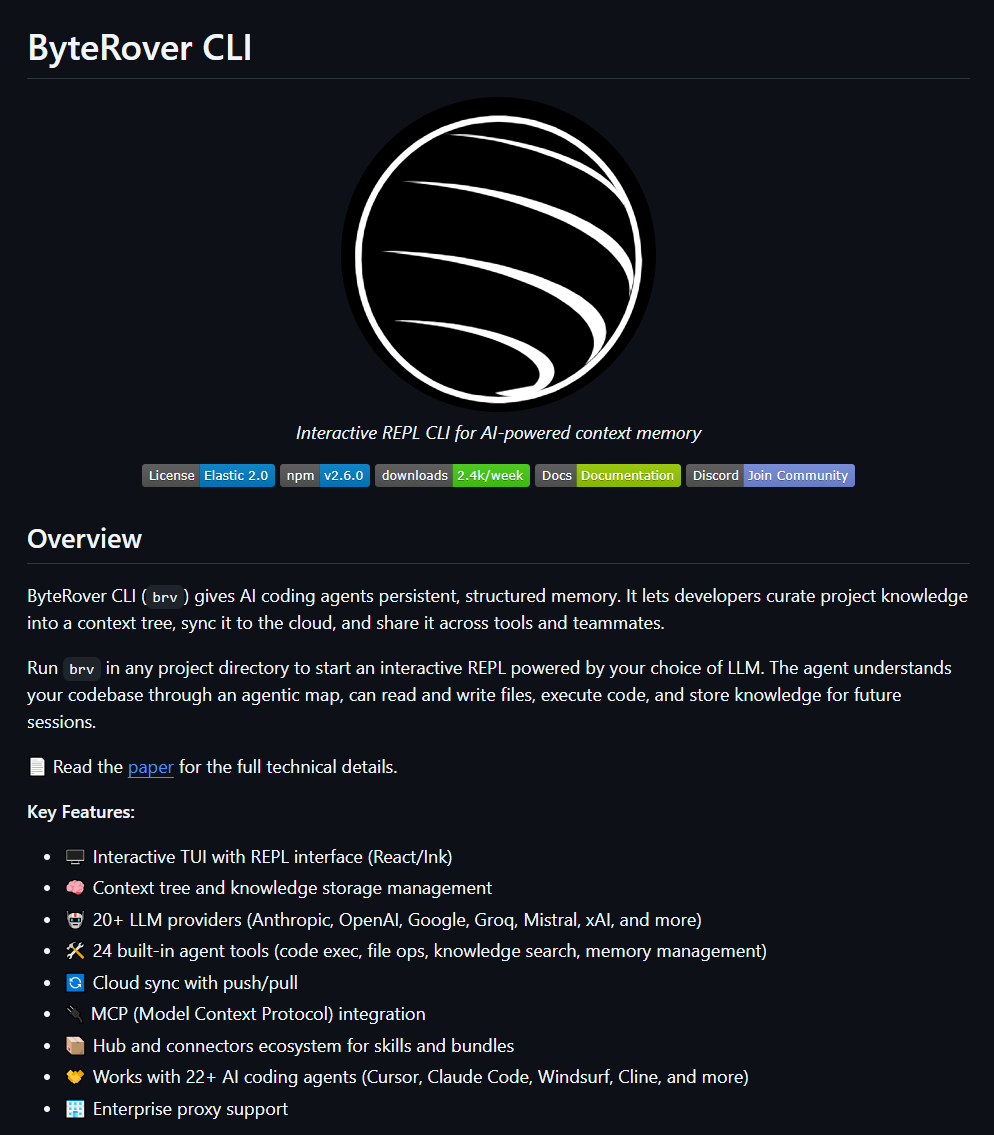

Open-source Memory for Agents - OpenClaw, Hermes, Claude Code and more

@karpathy just validated the exact memory architecture we open-sourced today. Detailed in our new arXiv paper.

The idea here is that structured Markdown vaults are the gold standard for agent memory. However, the "compilation" of these vaults is usually too tedious for manual maintenance.

It turns out you can just automate the whole curation layer. ByteRover does this by connecting nodes, links, and context graphs while maintaining a human-readable Obsidian format.

Token Efficiency: Saves you tons of tokens (50-70% on average) because the tiered retrieval pulls only the context the agent needs, instead of dumping massive files into the prompt.

Proven Scalability: Benchmarked on LoCoMo & LongMemEval for production-grade latency and accuracy.

Collaboration: Native support to sync and manage knowledge with your teammates and other agents.

No vector DBs, zero infra. Just a native "second brain" for your agents that actually works out of the box.

It would be, if you're just grepping a folder of files. That's not what we're doing.

Retrieval is a 5-tier system: cache hits, fuzzy matching, and BM25 confidence routing handle 80%+ of queries under 200ms with zero LLM calls. The index is in-memory, validates freshness via file stats, and never re-reads unchanged files.
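
To make the routing idea concrete, here is a minimal sketch of the three cheap tiers the post names (cache hit, fuzzy match, BM25 with a confidence cutoff). It is not ByteRover's implementation: the toy `VAULT`, the `retrieve` function, both cutoff values, and the "escalate" fallback are all assumptions for illustration, and the remaining tiers are not described in the post, so they are left out.

```python
import math
from difflib import SequenceMatcher

# Hypothetical toy vault (assumption): entry title -> body text.
VAULT = {
    "deploy checklist": "run tests then tag release then deploy to staging",
    "api rate limits": "the api allows 100 requests per minute per key",
    "oncall rotation": "oncall rotation changes every monday morning",
}

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each doc against the query with the Okapi BM25 formula."""
    tokenized = {name: text.split() for name, text in docs.items()}
    avg_len = sum(len(t) for t in tokenized.values()) / len(tokenized)
    n = len(tokenized)
    scores = {}
    for name, tokens in tokenized.items():
        score = 0.0
        for term in query.split():
            df = sum(1 for t in tokenized.values() if term in t)
            if df == 0:
                continue  # term appears nowhere, contributes nothing
            idf = math.log((n - df + 0.5) / (df + 0.5) + 1)
            tf = tokens.count(term)
            norm = 1 - b + b * len(tokens) / avg_len
            score += idf * tf * (k1 + 1) / (tf + k1 * norm)
        scores[name] = score
    return scores

def retrieve(query, cache, fuzzy_cutoff=0.8, bm25_cutoff=1.0):
    """Return (tier, title). Cutoffs are illustrative assumptions."""
    query = query.lower().strip()

    # Tier 1: exact cache hit, no scoring at all.
    if query in cache:
        return "cache", cache[query]

    # Tier 2: fuzzy match of the query against entry titles.
    def ratio(title):
        return SequenceMatcher(None, query, title).ratio()
    best = max(VAULT, key=ratio)
    if ratio(best) >= fuzzy_cutoff:
        cache[query] = best
        return "fuzzy", best

    # Tier 3: BM25 over entry bodies, accepted only above a confidence cutoff.
    scores = bm25_scores(query, VAULT)
    top = max(scores, key=scores.get)
    if scores[top] >= bm25_cutoff:
        cache[query] = top
        return "bm25", top

    # Nothing cheap was confident enough; a real system would go to later tiers.
    return "escalate", None
```

Each tier only runs if the previous one was not confident, which is how most queries could be answered without any LLM call.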

Token budget is fixed at 6,500 regardless of vault size. Entries compete for that space via compound scoring (relevance + importance + recency). Stale stuff decays and gets archived automatically. The agent doesn't see noise, just the highest-ranked context.
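
As a rough illustration of entries competing for a fixed budget, the sketch below ranks entries by a compound score and greedily packs them into 6,500 tokens. Only the budget figure and the three score components come from the post. The unweighted sum, the exponential recency decay with a 30-day half-life, the greedy packing, and the `Entry`, `compound_score`, and `pack` names are all assumptions.

```python
from dataclasses import dataclass

TOKEN_BUDGET = 6500  # fixed budget quoted in the post

@dataclass
class Entry:
    name: str
    tokens: int        # size of the entry in tokens
    relevance: float   # 0..1, match quality for the current query
    importance: float  # 0..1, how much the entry matters overall
    age_days: float    # days since the entry was last touched

def compound_score(entry, half_life_days=30.0):
    """Relevance + importance + recency; equal weights are an assumption."""
    # Recency halves every `half_life_days`, so stale entries decay toward 0.
    recency = 0.5 ** (entry.age_days / half_life_days)
    return entry.relevance + entry.importance + recency

def pack(entries, budget=TOKEN_BUDGET):
    """Greedily keep the highest-scoring entries that still fit the budget."""
    chosen, used = [], 0
    for entry in sorted(entries, key=compound_score, reverse=True):
        if used + entry.tokens <= budget:
            chosen.append(entry)
            used += entry.tokens
    return chosen, used

entries = [
    Entry("a", tokens=4000, relevance=0.9, importance=0.8, age_days=0),
    Entry("b", tokens=3000, relevance=0.8, importance=0.5, age_days=30),
    Entry("c", tokens=2000, relevance=0.6, importance=0.4, age_days=60),
    Entry("d", tokens=500, relevance=0.2, importance=0.1, age_days=300),
]
chosen, used = pack(entries)
print([e.name for e in chosen], used)
```

Because the budget is a constant, the prompt size stays the same whether the vault holds four entries or forty thousand; only the competition for the slots gets tougher.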

96.1% accuracy on LoCoMo, 92.8% on LongMemEval-S. Both state-of-the-art. No vectors, no external DB.

The markdown is for you to read. The retrieval layer is what the agent sees. Different problems, different solutions.

@businessbarista llm is your engine, harness is everything around it that makes you a car

@mattshumer_ Hey Matt, I am building an open source memory provider. It has a CLI and is local-first, releasing very very soon. I will DM you

@pierrecomputer @steipete this is a big idea, but i've been playing with this:

a git porcelain for vibe coding. git feels a little out of place now that the main interactions i'm having are intent+decision related, not code related

if that is done well, you could get to a new github for vibe coders

@ryancarson agree with this

even though the technical part has gotten easier, I've still made most of the mistakes starting a company, it's hard to do

@kentcdodds @xWayfinder in 2020 mid-covid i would have been thrilled to see 100 rolls of toilet paper

What would you think if your hotel room had 100 rolls of toilet paper? Would that "delight" you? Let's talk about the Kano Model with @xWayfinder

@orcdev @steipete @mattpocockuk I like plan mode because different models make different assumptions, and you're cutting down on those assumptions

you also take fewer turns if you have a good plan to start

you'll often see hot takes from famous AI people that just don't apply to regular devs

one recent hot take from @steipete and @mattpocockuk :

"I don't use plan mode", and a regular dev thinks ZOMG

until you realize those guys have:

- infinite tokens

- 100 agents running in ralph loops

- entire workflows auto iterating

of course they don't need plan mode

meanwhile regular dev needs plan mode, because regular dev is on a $20 subscription, and regular dev cannot burn 5b tokens a day, regular dev says hello and burns 4% of his session

different game, different rules

@gregisenberg still so many wrong turns to make, i'm pretty sure i've taken most of them
