Joe Fernandes retweeted

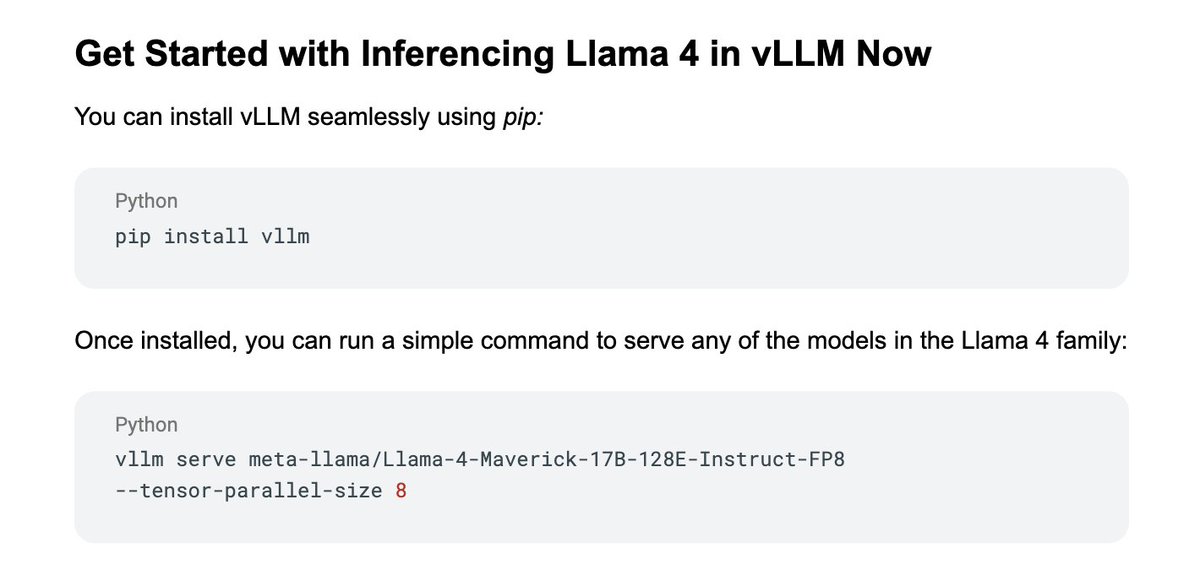

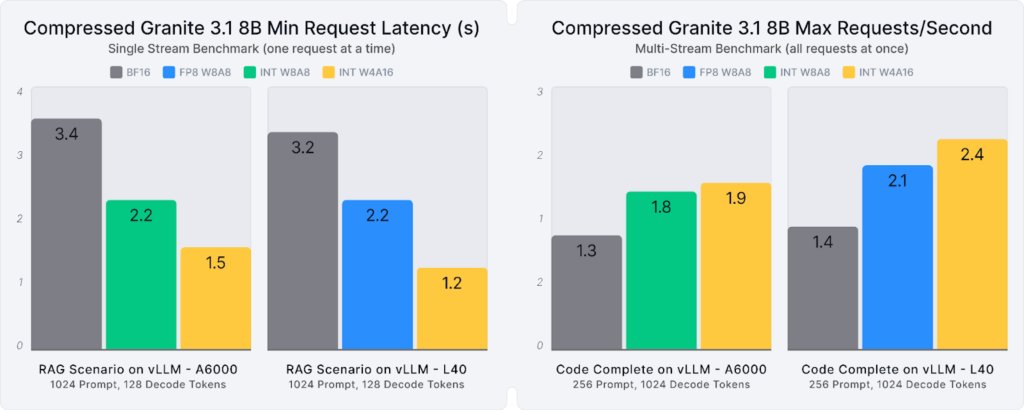

How do you solve AI's biggest performance hurdles? On Technically Speaking, @kernelcdub & Nick Hill dive into vLLM, exploring how techniques like PagedAttention solve memory bottlenecks & accelerate inference: red.ht/4lDjJ5P.
