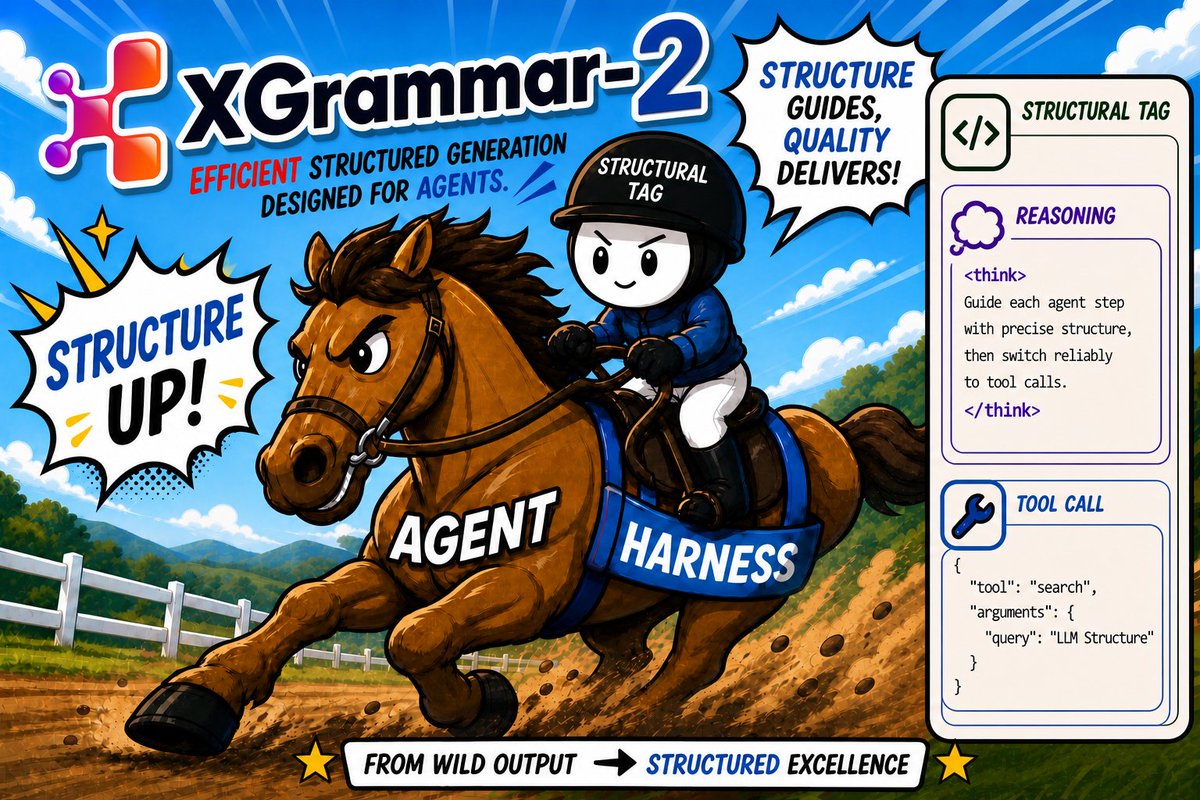

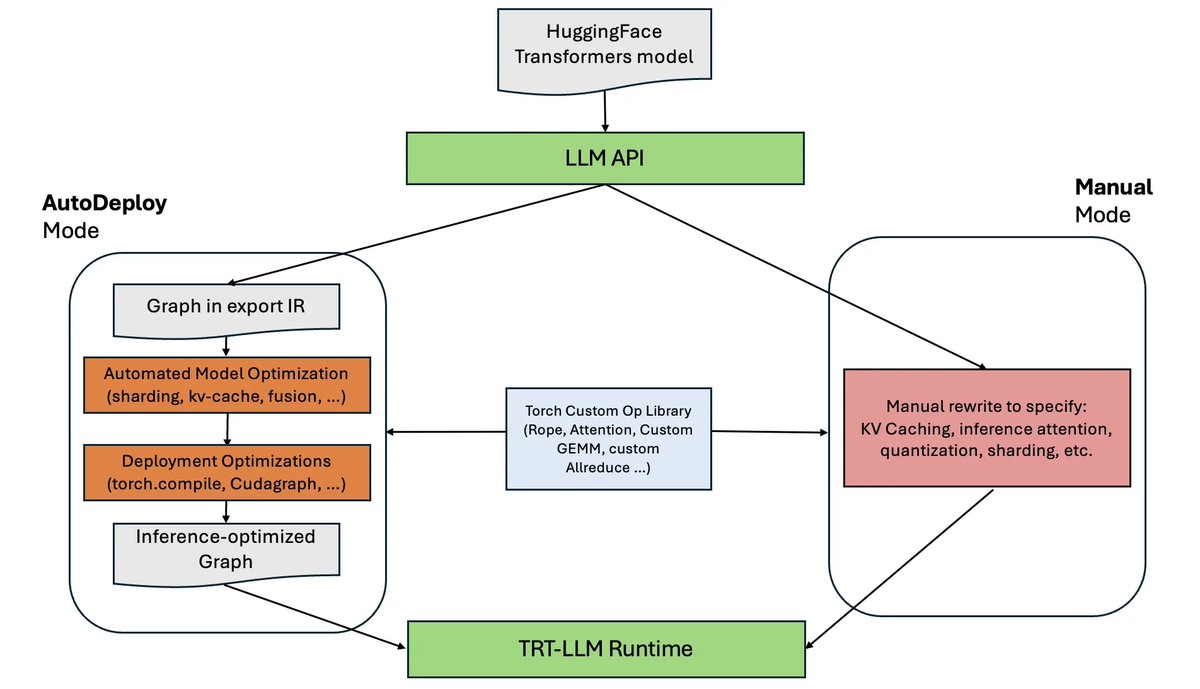

Introducing XGrammar-2: structured generation for complex agent harnesses. Strict tool-calling formats. Built-in DeepSeek-V4 and Qwen-3.6 support. Up to 80x speedup over XGrammar. Ready-to-use integrations with vLLM, SGLang, TensorRT-LLM, and more!

⚡ From Claude Code to OpenClaw, agents are defining more complex harnesses. XGrammar-2 ensures LLMs always interact with them in the right way.

Built in collaboration with DeepSeek, Databricks, and leading frontier AI labs to bring XGrammar-2 into the latest models and products.

🧩 Structural Tag: one unified abstraction to describe any format your agent needs

🚀 Scales to 500+ strictly typed tools for complex agent harnesses

🌐 Native APIs in Python, C++, Rust, and JS, running everywhere from cloud to edge

🛠️ Integrated with vLLM, SGLang, TensorRT-LLM, and more

Excited to see what agent builders create with it!

Blog: blog.mlc.ai/2026/05/04/xgr…

GitHub: github.com/mlc-ai/xgrammar