Kushal Arora

439 posts

@karora4u

Research Scientist at Toyota Research Institute. Ph.D. student at @rllabmcgill, @MILAMontreal. Prev: FAIR, MSR, BorealisAI, Amazon, UF.

Excited to introduce our paper, ReFiNe, at #SIGGRAPH2024 this Thursday! Learn how we encode multiple assets as continuous neural fields with high precision & low memory usage by exploiting object self-similarity. @RaresAmbrus @robo_kat @adnothing Webpage: zakharos.github.io/projects/refin…

We’re presenting the first AI to solve International Mathematical Olympiad problems at a silver medalist level.🥈 It combines AlphaProof, a new breakthrough model for formal reasoning, and AlphaGeometry 2, an improved version of our previous system. 🧵 dpmd.ai/imo-silver

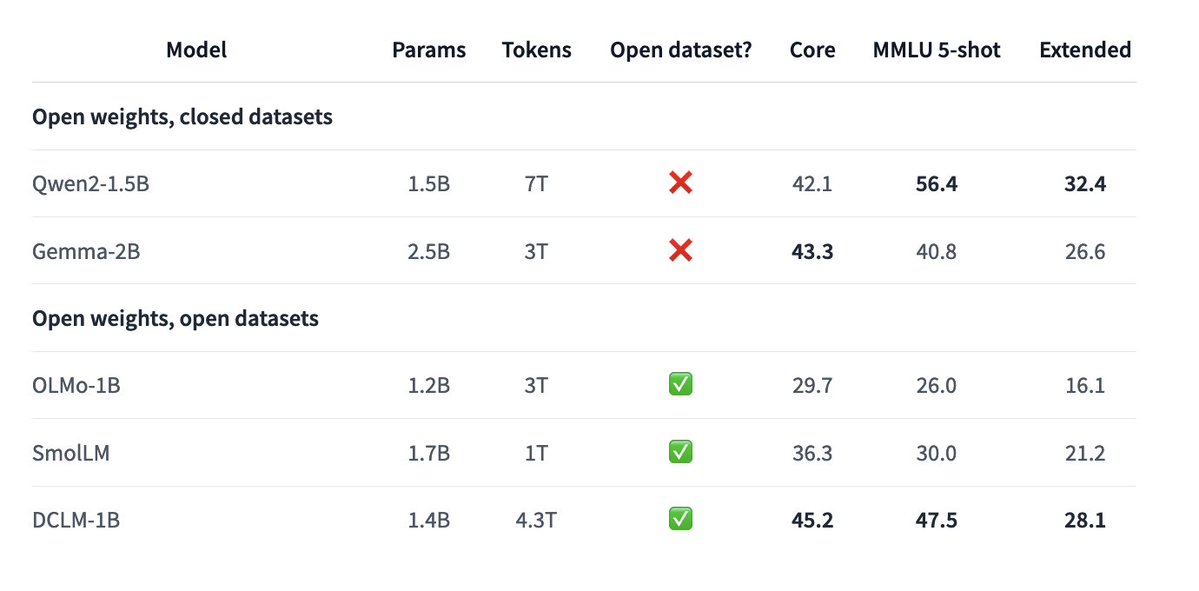

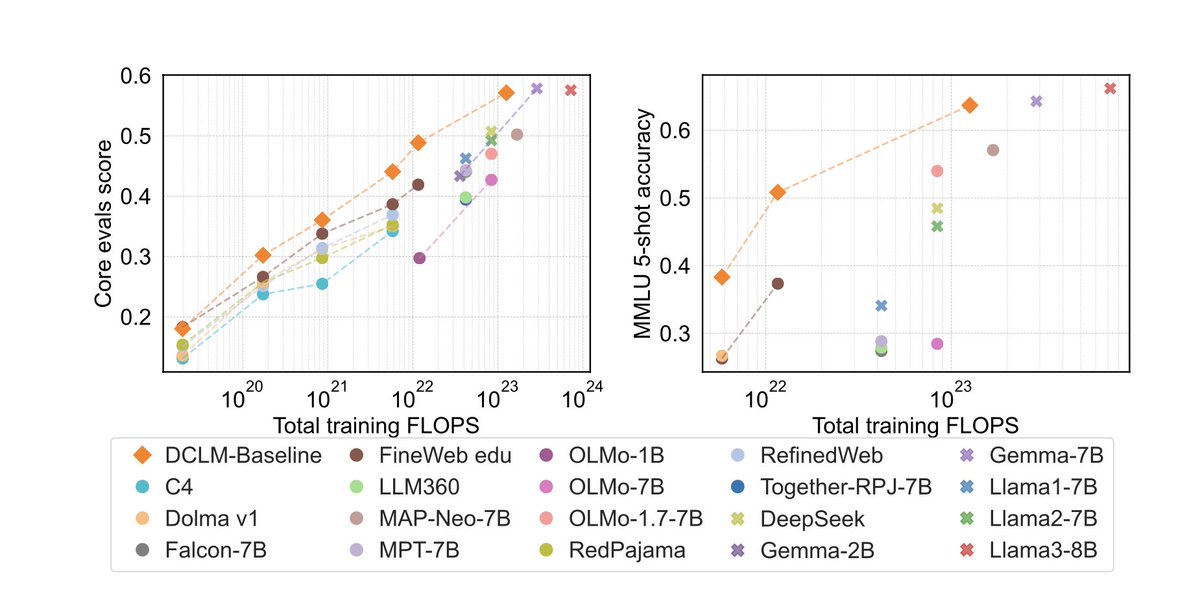

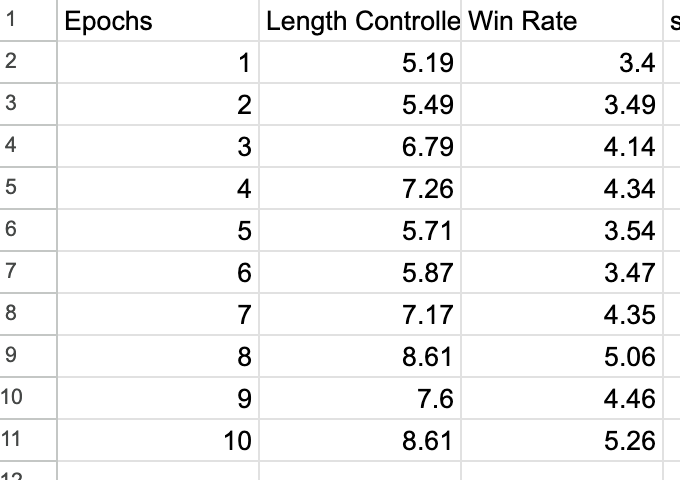

I am really excited to introduce DataComp for Language Models (DCLM), our new testbed for controlled dataset experiments aimed at improving language models. 1/x

I'm attending #NAACL2024 in Mexico City this week! Excited to chat about pre-training, evaluation, and multimodality! (Also excited for 🌮🌯🫔)

After two years, it is my pleasure to introduce “DROID: A Large-Scale In-the-Wild Robot Manipulation Dataset”. DROID is the most diverse robotic interaction dataset ever released, including 385 hours of data collected across 564 diverse scenes in real-world households and offices.