keerti manney

24 posts

keerti manney

@keertimanney

Towards spatial intelligence

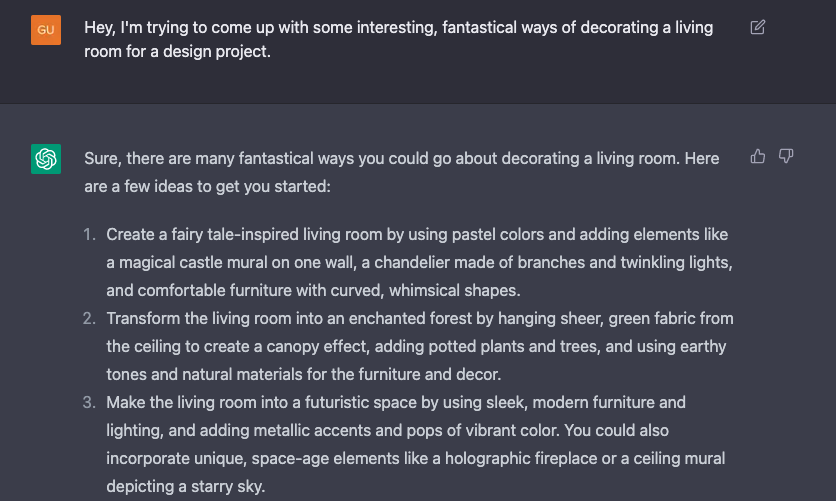

We made Jarvis, a robot assistant built for the 2026 Seeed Embodied AI Hackathon in sc. Huge thanks to the amazing sponsors and ecosystem partners that made this possible: @seeedstudio @NVIDIA @PollenRobotics @huggingface @AgoraIO! 1/3

@seeedstudio @nvidia @pollenrobotics @huggingface @AgoraIO built w/: Jetson Orin Nano, Reachy Mini, OpenClaw, GPT-4.1-mini, MediaPipe, Nvidia OSS Model on TensorRT Edge-LLM(trt_pose), Agora ConvAI, and Govee API teammates: @irenekarrot @keertimanney @sahithchada @bhushan017 3/3

create... explore... repeat

Introducing Grok Code Fast 1, a speedy and economical reasoning model that excels at agentic coding. Now available for free on GitHub Copilot, Cursor, Cline, Kilo Code, Roo Code, opencode, and Windsurf. x.ai/news/grok-code…