Alex Mizrahi

23.1K posts

@killerstorm

Blockchain tech guy, made the world's first token wallet and decentralized exchange protocol in 2012; CTO ChromaWay / Chromia

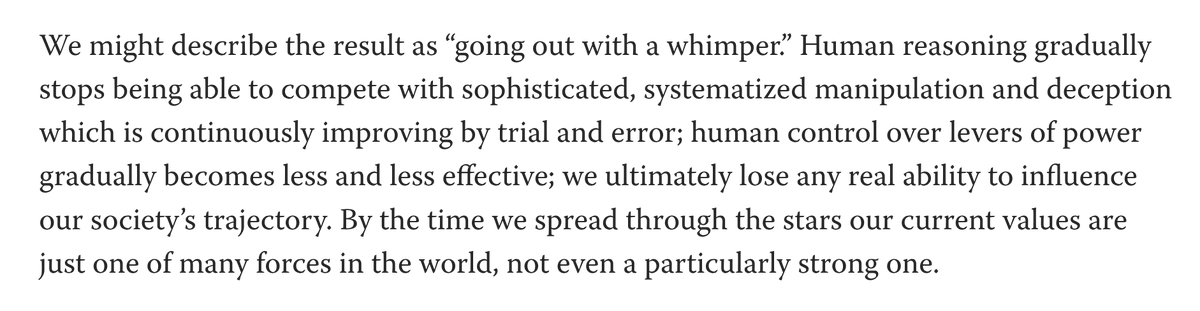

btw any transformer forward pass is neuralese

The new Gemini 3.5 Flash solved the HVM3's wnf bug in 1 of 3 attempts. This is my main test to take a model seriously. So far only the big models like GPT 5.5 have solved it. And it seems to be 20x faster than Opus 4.6! Promising but Google will still find a way to fuck up

‘The Serpent in the Grove’ by Jamir Nazir is a story set in rural Trinidad about a struggling farmer, a silenced young wife and a grove that seems to remember what others try to bury. Awarded the Caribbean regional winner title for its lyrical precision and haunting atmosphere, the story stood out for the confidence and restraint of its voice. The story has been published on Granta: granta.com/the-serpent-in…

We’re releasing a 30B-A3B reasoning model that reaches gold-medal level across both physics and math Olympiad evaluations: IPhO directly, and IMO/USAMO with test-time self-verification and refinement. A simple, unified scaling recipe for proof search. huggingface.co/papers/2605.13…