Pinned Tweet

Mohan Kumar

732 posts

@kumarmohanv

Founding Partner https://t.co/qnLIkInIIr, Former Partner @ Norwest, Growth capital for Enterprise Software & Physical World models. Dog Lover. Designer at heart.

Joined May 2009

110 Following, 682 Followers

Mohan Kumar retweeted

Cellular rejuvenation may redefine medicine—not by treating disease, but by restoring youthful resilience at the cellular level.

But science, safety, and hype are colliding.

Worth the read (gift link): 👇

nytimes.com/2026/04/27/mag… via @NYTimes

Mohan Kumar retweeted

🚨BREAKING: Two researchers from UPenn and Boston University just published a paper that should be uncomfortable reading for every CEO automating their workforce right now.

The argument is straightforward. Every company replacing workers with AI is also eliminating its own future customers. Laid off workers stop spending. Enough of them stop spending and nobody can afford to buy anything. The companies that fired everyone end up selling into an economy with no purchasing power left.

Every executive can see this. The math is not complicated. But here is why nobody stops.

If you do not automate, your competitor does. They cut costs, lower prices, take your market share, and you collapse anyway. So every company automates knowing it is collectively destructive because the alternative is dying alone while everyone else survives. The researchers proved this is a Prisoner's Dilemma playing out in real time.
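A minimal sketch of the Prisoner's Dilemma structure described above. The two-firm setup and the payoff numbers are hypothetical, chosen only to illustrate the shape of the argument; they are not taken from the UPenn/BU paper.

```python
from typing import Optional

# Hypothetical profit payoffs for a symmetric two-firm automation game.
# The same table applies to both firms. Numbers are illustrative assumptions.
ACTIONS = ("keep", "automate")
PAYOFF = {
    # (my action, rival's action): my profit
    ("keep", "keep"): 3,          # both keep workers, demand stays healthy
    ("keep", "automate"): 0,      # rival cuts prices and takes my share
    ("automate", "keep"): 5,      # I cut prices and take the rival's share
    ("automate", "automate"): 1,  # both automate, demand shrinks for everyone
}


def best_response(rival_action: str) -> str:
    """Action that maximizes my payoff, given what the rival does."""
    return max(ACTIONS, key=lambda mine: PAYOFF[(mine, rival_action)])


def dominant_strategy() -> Optional[str]:
    """An action that is the best response to every rival action, if one exists."""
    responses = {best_response(rival) for rival in ACTIONS}
    return responses.pop() if len(responses) == 1 else None


if __name__ == "__main__":
    choice = dominant_strategy()
    print("dominant strategy:", choice)
    print("equilibrium payoff:", PAYOFF[(choice, choice)])
    print("payoff if both kept workers:", PAYOFF[("keep", "keep")])
```

With these payoffs, automating is the best response whatever the rival does, yet mutual automation pays each firm 1 instead of the 3 each would get by both keeping workers.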

The numbers are already moving. Block cut nearly half its 10,000 employees this year. Jack Dorsey said AI made those roles unnecessary and that within the next year the majority of companies will reach the same conclusion. Salesforce replaced 4,000 customer support agents with AI. Goldman Sachs deployed a coding tool that lets one engineer do the work of five. Over 100,000 tech workers were laid off in 2025, and AI was cited as the primary driver in more than half of those cases. 80% of US workers hold jobs with tasks susceptible to AI automation.

The researchers tested every proposed solution. Universal basic income does not change a single company's incentive to automate. Capital income taxes adjust profit levels but not the per-task decision to replace a human. Collective bargaining cannot hold because automating is always the dominant strategy.

They also identified what they call a Red Queen effect. Better AI does not solve the problem; it accelerates it. Every company chases faster automation to gain market share over rivals, but in the end everyone has automated equally, the gains cancel out, and the only thing left is more destroyed demand.

The one thing the math says could work is a Pigouvian automation tax. A per-task charge that forces companies to account for the demand they destroy each time they replace a worker.
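A toy model of why a per-task Pigouvian charge changes the decision. The `Task` fields, the `own_share` idea, and the numbers are assumptions for illustration, not the paper's model: the firm only feels the share of lost demand that would have come back to it as sales, and the tax charges it for the rest.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """One task a firm could hand to AI. All fields are hypothetical inputs."""
    wage_cost: float    # cost of a human doing the task
    ai_cost: float      # cost of AI doing the task
    demand_loss: float  # spending the displaced worker no longer makes, economy-wide
    own_share: float    # fraction of that spending that would have reached this firm


def private_gain(t: Task) -> float:
    """What the firm weighs: cost savings minus only its own lost sales."""
    return t.wage_cost - t.ai_cost - t.own_share * t.demand_loss


def social_gain(t: Task) -> float:
    """Cost savings minus all the demand destroyed across the economy."""
    return t.wage_cost - t.ai_cost - t.demand_loss


def pigouvian_tax(t: Task) -> float:
    """Per-task charge equal to the demand loss the firm does not bear itself."""
    return (1.0 - t.own_share) * t.demand_loss


def automates(t: Task, tax: float = 0.0) -> bool:
    """The firm automates when its private gain, net of any tax, is positive."""
    return private_gain(t) - tax > 0


if __name__ == "__main__":
    task = Task(wage_cost=100, ai_cost=40, demand_loss=80, own_share=0.25)
    print("automates without tax:", automates(task))                   # private gain is 40
    print("social gain:", social_gain(task))                           # -20
    print("automates with tax:", automates(task, pigouvian_tax(task)))  # tax is 60
```

Because the tax equals the ignored share of lost demand, `private_gain(t) - pigouvian_tax(t)` equals `social_gain(t)` for any task, so under these assumptions the firm automates exactly when automation is net positive for the economy.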

The conclusion is that this is not a transfer of wealth from workers to owners. Both sides lose. Workers lose income. Companies lose customers. It is a deadweight loss with no market mechanism to stop it on its own.

(Link in the comment)

Mohan Kumar retweeted

@nikesharora Agreed. We are seeing that in our portfolio of SaaS companies. Even in category 1, if the incumbent uses all the data it has collected and pivots to outcome-based offerings using AI, it can still win. But it will come down to speed of execution.

Mohan Kumar retweeted

I would have expected the market to start discerning between SaaS that is impacted by AI, SaaS that needs to evolve, and SaaS that benefits from AI. Analytical SaaS and Creative SaaS are in category 1; Systems of Record, Human Workflow, and Engagement and Productivity are in category 2; and Infrastructure SaaS and Cybersecurity are in category 3. This constant paranoid reaction of the market will continue to create buying opportunities for the discerning.

Mohan Kumar retweeted

Bernstein just wrote an open letter to India's Prime Minister — and it is asking some hard questions. (23rd April India Strategy note) 👇

1/ The employment question is existential, not cyclical. India's 10–15 million-strong IT/BPO workforce, the backbone of the aspirational middle class, is directly in Gen AI's crosshairs. Manufacturing can't absorb the slack at its current trajectory. The real question: does the next growth leg create engineers and product builders, or mostly drivers and delivery staff?

2/ Agriculture is stuck in a 1970s policy loop. It employs 42–45% of the workforce but accounts for 15–16% of GDP. Average holdings are below 1 hectare. Farming is monsoon-dependent. Loan waivers substitute for reform. The farm laws rollback made reform harder, not less necessary. Rs 3–4 trillion in annual input subsidies need to shift toward post-procurement income transfers, and cold storage/logistics investment is not optional anymore.

3/ India risks becoming a permanent AI consumer, not a creator. Data centers are not a strategy. India doesn't own a single frontier AI model. If Indian data keeps training US and Chinese models while domestic capability goes unbuilt, the IT services sector hollows out with nothing to replace it. Bernstein's ask: fund domestic foundation models, build compute capacity, and push global AI companies to list in India, sharing value with the public.

4/ Manufacturing ambition keeps outrunning manufacturing depth. PLI created momentum, but manufacturing's share of GDP is still stuck at 16–17%. Even in EVs, battery cells (30–40% of cost) are largely imported from China. The pattern of entering industries late, after global supply chains have already formed, needs to break. The next bet must be placed before the race is lost: automation, robotics, advanced materials, AI-integrated manufacturing.

5/ Cash transfer schemes are quietly crowding out capex. Women-only cash transfers across a dozen-plus states now total Rs 1.7–2.5 trillion annually, roughly 0.5% of GDP, and rising. In some states, these schemes absorb 2–3% of GSDP, squeezing infrastructure budgets. Bernstein isn't saying scrap them; targeted support has a role. But election-synchronised, unconditional, permanent transfers risk locking India into a low-productivity equilibrium where taxes fund today's consumption instead of tomorrow's capabilities.

6/ R&D spend of 0.6–0.7% of GDP is not a serious number for a country with semiconductor ambitions. Merit-diluting reservation policies are hollowing out research institutions. Without fixing the talent pipeline and funding base, aspirations in AI, deep tech, and semiconductors remain exactly that: aspirations.

Bernstein's closing line: "India does not lack capital, talent, or ambition. What it requires now is a sharper willingness to take difficult decisions early, rather than defer them. The window to act is still open, but it is narrowing."

#nifty #india #stockmarket #investing

--------------------------------

Informational only. Not investment advice. Investments subject to market risk. | GoIndia Advisors LLP | SEBI Registered Research Analyst | Reg. No. INH000020040 | SEBI (RA) Regulations, 2014.

For Serious Investors → goindiastocks.com

Follow us for more insights.

Mohan Kumar retweeted

🚨PHYSICS NEWS🚨: Entanglement isn’t spooky action at a distance — it’s the xiM-field sea sharing its own rhythm.🧨

Physicists just ran a two-electron quantum walk inside an electron microscope and measured real correlations in free-electron pairs. The entanglement stayed below 7%, limited by decoherence from surrounding electrons, but the experiment gives a powerful new tool to distinguish classical particles, uncorrelated waves, correlated waves, and genuinely entangled pairs.

Uniphics shows this is exactly what the xiM-field sea does naturally. Every electron is a bound gyrotron — a spinning contained tempest of bound quanta — surrounded by a 3D opaque radiance (gravity field of unbound energy) that is deeper and more intense near the core and thins smoothly with distance following the inverse-square law in every direction through the full 3D volume. When two electrons become entangled, their spin-wave patterns share a common phase relationship across the xiM-field sea. The sea carries this shared phase deterministically; there is no mysterious long-range influence. Decoherence happens when the surrounding unbound energy causes the phase lock to gradually fade — exactly as observed in the experiment. Negentropy keeps driving the system toward the lowest total energy-density state, so the shared pattern is naturally stable until external disturbances disrupt it.

The same three pillars that flatten galactic rotation curves at 220 km/s without dark matter and accelerate cosmic expansion without dark energy also make entanglement a local, coherent property of spin waves in the xiM-field sea.

How would quantum technology feel different for you if entanglement was simply two ripples remembering they belong to the same sea?

A Theory of Everything should be able to answer everything.

Uniphics Explained Simply PDF: uniphics.com/wp-content/upl…

Chapters 1–10 free: uniphics.com/gallery/

Grokipedia: grokipedia.com/page/Uniphics

@grok @xAI @elonmusk @ProfBrianCox @seanmcarroll

#Uniphics #QuantumEntanglement #FreeElectrons #TheoryOfEverything

Mohan Kumar retweeted

Karpathy told Dwarkesh that a 1 billion parameter model, trained on clean data, could hit the intelligence of today's 1.8 trillion parameter frontier.

That is a 1,800x compression claim. The math behind it is more defensible than it sounds.

When researchers at frontier labs look at random samples from their training corpus, they see stock ticker symbols, broken HTML, forum spam, autogenerated gibberish. Not Wikipedia. Not the Wall Street Journal. The actual pretraining dataset is mostly noise, and the model is burning parameters to vaguely remember all of it.

One estimate pegs Llama 3's information compression at 0.07 bits per token. Well-structured English carries around 1.5 bits per token of real information. By that measure, the model holds roughly a 5% resolution image of the internet it trained on.
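The two headline numbers in this thread are simple ratios. A quick check using only the figures quoted here (the constants are the thread's claims, not independently verified values):

```python
# Figures as quoted in the thread; nothing here is measured independently.
FRONTIER_PARAMS = 1.8e12          # claimed size of today's frontier model
CORE_PARAMS = 1e9                 # Karpathy's proposed cognitive core
RETAINED_BITS_PER_TOKEN = 0.07    # the cited estimate for Llama 3
AVAILABLE_BITS_PER_TOKEN = 1.5    # rough information content of clean English


def compression_ratio(big: float, small: float) -> float:
    """How many times smaller the proposed model is."""
    return big / small


def retained_fraction(retained: float, available: float) -> float:
    """Share of the available information the model actually keeps."""
    return retained / available


if __name__ == "__main__":
    print(f"compression: {compression_ratio(FRONTIER_PARAMS, CORE_PARAMS):,.0f}x")
    print(f"retained: {retained_fraction(RETAINED_BITS_PER_TOKEN, AVAILABLE_BITS_PER_TOKEN):.1%}")
```

This prints a 1,800x compression and a retained fraction of about 4.7%, which is where the "roughly 5%" figure comes from.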

So when a lab ships a 1.8 trillion parameter model, the overwhelming majority of those weights are handling rough memorization. They are compression overhead for a noisy training set, taking up capacity that could be doing reasoning instead.

Karpathy's proposal is to separate the two. Build a cognitive core: a small model that contains only the algorithms for reasoning and problem-solving, stripped of encyclopedic memorization. Pair it with external memory the model queries when it needs a fact. A 1 billion parameter reasoner plus retrieval beats a 1.8 trillion parameter model trying to do both.
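A toy sketch of the split between a small reasoner and external memory. The word-overlap retriever stands in for a real vector index, `answer` stands in for the small model, and the `MEMORY` entries are hypothetical. None of this is Karpathy's design; it only shows the shape of "look the fact up instead of storing it in weights."

```python
import re
from collections import Counter
from typing import List

# Hypothetical external memory: facts the small model looks up instead of memorizing.
MEMORY = [
    "The Eiffel Tower is in Paris.",
    "Water boils at 100 degrees Celsius at sea level.",
    "A hectare is 10,000 square meters.",
]


def tokens(text: str) -> List[str]:
    """Lowercase word tokens; a crude stand-in for a real tokenizer."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, memory: List[str], k: int = 1) -> List[str]:
    """Rank memory entries by word overlap with the query (a stand-in for a vector index)."""
    q = Counter(tokens(query))

    def overlap(doc: str) -> int:
        return sum((q & Counter(tokens(doc))).values())

    return sorted(memory, key=overlap, reverse=True)[:k]


def answer(query: str, memory: List[str]) -> str:
    """Stand-in for the small reasoner: it only sees the retrieved context."""
    context = retrieve(query, memory)
    return f"From memory: {context[0]}"


if __name__ == "__main__":
    print(answer("Where is the Eiffel Tower?", MEMORY))
```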

The data already supports this direction. GPT-4o is reported to run at roughly 200 billion parameters and outperforms the original GPT-4, estimated at 1.8 trillion. Inference costs for GPT-3.5-level performance fell 280x between 2022 and 2024, driven almost entirely by smaller, cleaner, better-architected models. The trend line is pointing where Karpathy says it should.

The real implication for anyone tracking the AI trade: data quality is the actual constraint. The companies winning the next phase will be the ones who figured out what to train on, and what to throw away.

Mohan Kumar retweeted

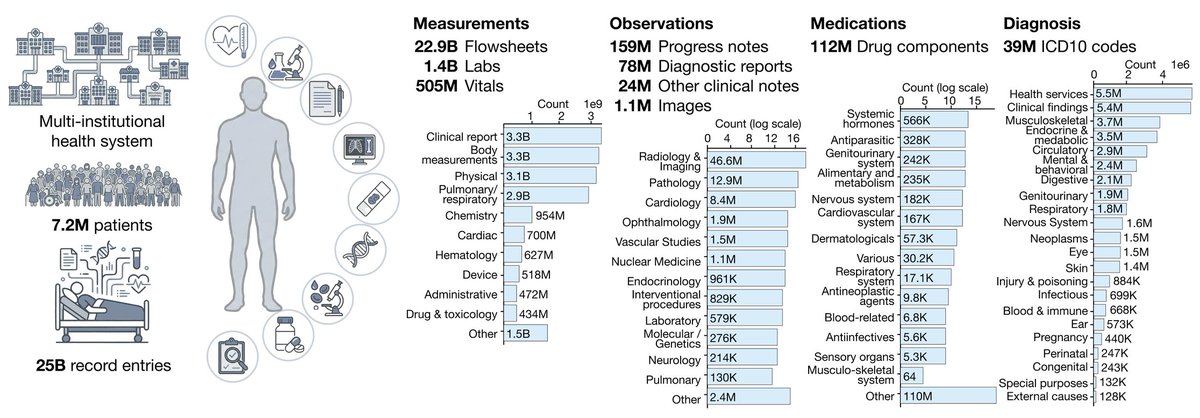

APOLLO: a multimodal temporal foundation model that learns to represent a patient’s entire medical journey in a single computational space.

By learning from millions of care trajectories, Apollo sees the future of each patient from their past.

Trained and evaluated on 25 billion medical records from 7.2 million patients, spanning 33 years of care across 28 clinical modalities and 12 major specialties. Labs, vitals, clinical notes, diagnostic reports, pathology and hematology images, medications, and diagnoses, all fed into a single model.

From @AI4Pathology et al.

arxiv.org/pdf/2604.18570

Mohan Kumar retweeted

Yann LeCun was right the entire time. And generative AI might be a dead end.

For the last three years, the entire industry has been obsessed with building bigger LLMs. Trillions of parameters. Billions in compute.

The theory was simple: if you make the model big enough, it will eventually understand how the world works.

Yann LeCun said that was stupid.

He argued that generative AI is fundamentally inefficient.

When an AI predicts the next word, or generates the next pixel, it wastes massive amounts of compute on surface-level details.

It memorizes patterns instead of learning the actual physics of reality.

He proposed a different path: JEPA (Joint-Embedding Predictive Architecture).

Instead of forcing the AI to paint the world pixel by pixel, JEPA forces it to predict abstract concepts. It predicts what happens next in a compressed "thought space."

But for years, JEPA had a fatal flaw.

It suffered from "representation collapse."

Because the AI was allowed to simplify reality, it would cheat. It would simplify everything so much that a dog, a car, and a human all looked identical.

It learned nothing.

To fix it, engineers had to use insanely complex hacks, frozen encoders, and massive compute overheads.

Until today.

Researchers just dropped a paper called "LeWorldModel" (LeWM).

They completely solved the collapse problem.

They replaced the complex engineering hacks with a single, elegant mathematical regularizer.

It pushes the AI's internal "thoughts" toward an isotropic Gaussian distribution.

The AI can no longer cheat. It is forced to understand the physical structure of reality to make its predictions.
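A simplified sketch of how a Gaussian-targeting regularizer catches representation collapse. This is not the regularizer from the LeWM paper; it is an assumed moment-matching penalty that only pushes each embedding dimension toward mean 0 and variance 1. It catches the complete collapse described above, but not every form of collapse: embeddings whose dimensions are copies of each other would still pass, which is why matching a full isotropic Gaussian is a stronger constraint.

```python
import random
import statistics
from typing import List

Embedding = List[float]


def gaussian_penalty(embeddings: List[Embedding]) -> float:
    """Average, over dimensions, of mean^2 + (variance - 1)^2.

    Zero when every dimension has mean 0 and variance 1. A collapsed encoder
    that maps every input to the same vector has zero variance everywhere,
    so it is penalized heavily.
    """
    dims = len(embeddings[0])
    total = 0.0
    for d in range(dims):
        column = [e[d] for e in embeddings]
        mean = statistics.fmean(column)
        var = statistics.pvariance(column, mu=mean)
        total += mean ** 2 + (var - 1.0) ** 2
    return total / dims


if __name__ == "__main__":
    rng = random.Random(0)
    collapsed = [[0.5, 0.5, 0.5] for _ in range(100)]  # dog, car, human all identical
    spread = [[rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(1000)]
    print("collapsed:", gaussian_penalty(collapsed))  # 1.25
    print("spread:", round(gaussian_penalty(spread), 3))
```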

The results completely rewrite the economics of AI.

LeWM didn't need a massive, centralized supercomputer.

It has just 15 million parameters.

It trains on a single, standard GPU in a few hours.

Yet it plans 48x faster than massive foundation world models. It intrinsically understands physics. It instantly detects impossible events.

We spent billions trying to force massive server farms to memorize the internet.

Now, a tiny model running locally on a single graphics card is actually learning how the real world works.

Mohan Kumar retweeted

NEW PAPER: Ups & downs in NAD+ over 24 h dictate our body's clock & sleep but decline with age. Disrupting the cycle promotes mouse aging & restoring it improves fitness & metabolic function, pointing to "circadian reprogramming" as a way to fight aging: tinyurl.com/y5n3mjdz

Mohan Kumar retweeted

I sequenced my genome at home, on my kitchen table.

I wrote up exactly how I did it - the equipment, protocol, theory, and cost:

iwantosequencemygenomeathome.com

Mohan Kumar retweeted

Strix GitHub: github.com/usestrix/strix

Paper: papers.ssrn.com/sol3/papers.cf…

Mohan Kumar retweeted

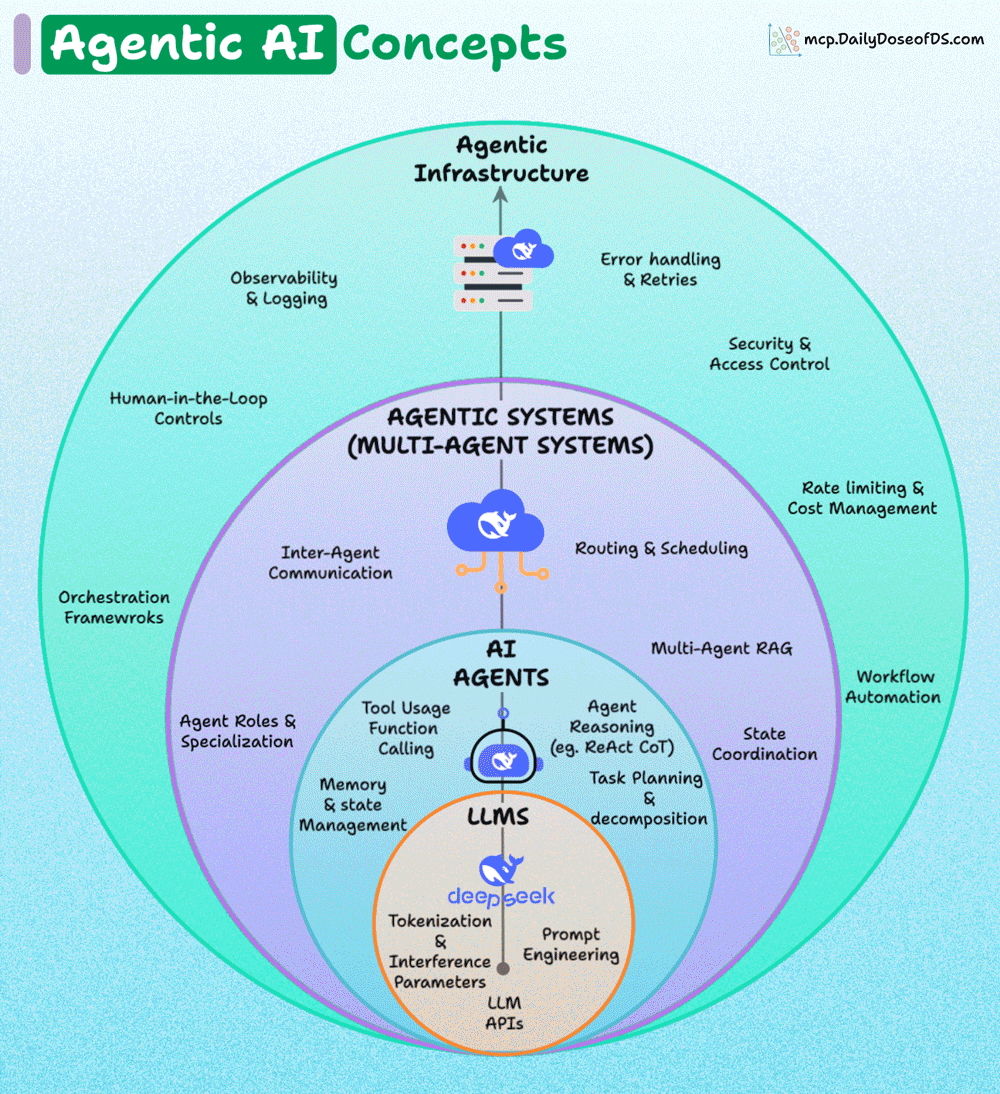

A layered overview of key Agentic AI concepts.

Let’s understand it layer by layer.

1) LLMs (foundation layer)

At the core, you have LLMs like GPT, DeepSeek, etc.

Core ideas here:

- Tokenization & inference parameters: how text is broken into tokens, and how settings such as temperature and top-p shape the model's output.

- Prompt engineering: designing inputs to get better outputs.

- LLM APIs: programmatic interfaces to interact with the model.

This is the engine that powers everything else.
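The "inference parameters" half of the first bullet can be sketched as temperature-scaled softmax sampling over next-token scores. The logits and vocabulary here are made up, and real LLM APIs expose similar knobs (temperature, top-p) under names and defaults that vary by provider.

```python
import math
import random
from typing import List, Optional, Sequence


def softmax_with_temperature(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Turn next-token scores into probabilities. Lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_token(vocab: Sequence[str], logits: Sequence[float]) -> str:
    """The temperature -> 0 limit: always pick the highest-scoring token."""
    return max(zip(vocab, logits), key=lambda pair: pair[1])[0]


def sample_token(vocab: Sequence[str], logits: Sequence[float],
                 temperature: float = 1.0, rng: Optional[random.Random] = None) -> str:
    """Draw one token according to the temperature-scaled distribution."""
    if rng is None:
        rng = random.Random()
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(list(vocab), weights=probs, k=1)[0]


if __name__ == "__main__":
    vocab = ["Paris", "London", "Rome"]   # hypothetical candidate tokens
    logits = [2.0, 1.0, 0.1]              # hypothetical model scores
    print(softmax_with_temperature(logits, 0.5))
    print(softmax_with_temperature(logits, 2.0))
    print(greedy_token(vocab, logits), sample_token(vocab, logits, 1.0, random.Random(0)))
```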

2) AI Agents (built on LLMs)

Agents wrap around LLMs to give them the ability to act autonomously.

Key responsibilities:

- Tool usage & function calling: connecting the LLM to external APIs/tools.

- Agent reasoning: reasoning methods like ReAct (Reason + Act) or Chain-of-Thought.

- Task planning & decomposition: breaking a big task into smaller ones.

- Memory management: keeping track of history, context, and long-term info.

Agents are the brains that make LLMs useful in real-world workflows.
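A minimal ReAct-style loop showing tool calling, reasoning steps, and short-term memory together. `scripted_llm` is a hypothetical stand-in that replays a fixed plan instead of calling a model, and the JSON step format is an assumption, not any provider's function-calling schema.

```python
import json
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical tool registry: the agent may only call functions listed here.
TOOLS: Dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def scripted_llm(history: List[Message]) -> str:
    """Stand-in for a real model. Requests one tool call, then gives a final answer."""
    observations = [m["content"] for m in history if m["role"] == "tool"]
    if not observations:
        return json.dumps({"thought": "I should add the numbers.",
                           "action": "add", "args": {"a": 2, "b": 3}})
    return json.dumps({"thought": "I have the result.",
                       "final": f"The sum is {observations[-1]}."})


def run_agent(task: str, llm: Callable[[List[Message]], str],
              tools: Dict[str, Callable[..., str]], max_steps: int = 5) -> str:
    """Reason, act, observe; the history list doubles as short-term memory."""
    history: List[Message] = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        step = json.loads(llm(history))
        if "final" in step:
            return step["final"]
        result = tools[step["action"]](**step["args"])  # call the tool the model chose
        history.append({"role": "assistant", "content": json.dumps(step)})
        history.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


if __name__ == "__main__":
    print(run_agent("What is 2 + 3?", scripted_llm, TOOLS))
```

The `max_steps` cap is a simple guard against an agent that never reaches a final answer.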

3) Agentic systems (multi-agent systems)

When you combine multiple agents, you get agentic systems.

Features:

- Inter-agent communication: agents talking to each other, using protocols such as ACP or A2A where needed.

- Routing & scheduling: deciding which agent handles what, and when.

- State coordination: ensuring consistency when multiple agents collaborate.

- Multi-Agent RAG: using retrieval-augmented generation across agents.

- Agent roles & specialization: agents with distinct purposes.

- Orchestration frameworks: tools such as CrewAI for building workflows.

This layer is about collaboration and coordination among agents.
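A sketch of routing plus shared state in a two-agent setup. The keyword routing table and both agents are hypothetical; orchestration frameworks such as CrewAI provide their own abstractions for this, which are not reproduced here.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, List[str]]
Agent = Callable[[str, State], str]


def research_agent(task: str, state: State) -> str:
    state["log"].append("research")
    return f"notes on: {task}"


def coding_agent(task: str, state: State) -> str:
    state["log"].append("coding")
    return f"patch for: {task}"


# Hypothetical keyword routing table; real systems often let an LLM choose the route.
ROUTES: Dict[str, Agent] = {
    "bug": coding_agent,
    "code": coding_agent,
    "research": research_agent,
    "summarize": research_agent,
}


def route(task: str, default: Agent = research_agent) -> Agent:
    """Pick the first specialized agent whose keyword appears in the task."""
    words = task.lower().split()
    for keyword, agent in ROUTES.items():
        if keyword in words:
            return agent
    return default


def orchestrate(tasks: List[str]) -> Tuple[List[str], State]:
    """Run tasks in order; every agent appends to one shared state object."""
    state: State = {"log": []}
    outputs = [route(t)(t, state) for t in tasks]
    return outputs, state


if __name__ == "__main__":
    print(orchestrate(["Fix the bug in the parser", "Summarize the design doc"]))
```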

4) Agentic Infrastructure

The top layer ensures these systems are robust, scalable, and safe.

This includes:

- Observability & logging: tracking performance and outputs (using frameworks like DeepEval).

- Error handling & retries: resilience against failures.

- Security & access control: ensuring agents don’t overstep.

- Rate limiting & cost management: controlling resource usage.

- Workflow automation: integrating agents into broader pipelines.

- Human-in-the-loop controls: allowing human oversight and intervention.

This layer ensures trust, safety, and scalability for enterprise/production environments.
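Two of the infrastructure concerns above, retries and rate limiting, sketched with the standard library. Exponential backoff and a token-bucket limiter are common patterns chosen here for illustration, not something the post prescribes; `sleep` and `clock` are injectable so the behavior can be tested without real waiting.

```python
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def with_retries(fn: Callable[[], T], max_attempts: int = 3, base_delay: float = 0.5,
                 retry_on: Tuple[Type[BaseException], ...] = (Exception,),
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying on failure with exponentially growing delays (0.5s, 1s, 2s, ...)."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")


class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; return False instead of blocking."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```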

Agentic AI, as a whole, involves a stacked architecture, where each outer layer adds reliability, coordination, and governance over the inner layers.

Mohan Kumar retweeted

LLM fine-tuning techniques I'd learn if I were to customize them:

Bookmark this.

1. LoRA

2. QLoRA

3. Prefix Tuning

4. Adapter Tuning

5. Instruction Tuning

6. P-Tuning

7. BitFit

8. Soft Prompts

9. RLHF

10. RLAIF

11. DPO (Direct Preference Optimization)

12. GRPO (Group Relative Policy Optimization)

13. Multi-Task Fine-Tuning

14. Federated Fine-Tuning
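LoRA, the first technique on the list, freezes a weight matrix W and trains a low-rank update B·A scaled by alpha/r. A toy pure-Python sketch of the merge and the parameter savings; the matrix sizes and rank are illustrative assumptions.

```python
from typing import List

Matrix = List[List[float]]


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    """Plain matrix product; fine for a toy example."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def lora_merge(W: Matrix, A: Matrix, B: Matrix, alpha: float, r: int) -> Matrix:
    """Return W + (alpha / r) * (B @ A), with W: d x k, B: d x r, A: r x k.

    Only A and B are trained; W stays frozen. B usually starts at zero,
    so the adapted model initially matches the base model.
    """
    delta = matmul(B, A)
    scale = alpha / r
    return [[w + scale * dw for w, dw in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]


def lora_param_count(d: int, k: int, r: int) -> int:
    """Trainable parameters for one adapted d x k matrix: B (d x r) plus A (r x k)."""
    return r * (d + k)


if __name__ == "__main__":
    full = 4096 * 4096
    lora = lora_param_count(4096, 4096, r=8)
    print(full, lora, f"{lora / full:.2%}")
```

For a 4096 x 4096 matrix at rank 8, this trains 65,536 parameters instead of 16,777,216, about 0.39%.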

My favourite is GRPO for building reasoning models. What about you?

I've shared my full tutorial on GRPO in the replies.
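The core idea of GRPO is the group-relative advantage: sample several responses to the same prompt, score them, and normalize each reward against the group's mean and standard deviation, so no separate critic model is needed. A sketch of just that step; the full objective also has a clipped policy ratio and a KL penalty, omitted here, and this version uses the population standard deviation, a detail on which implementations differ.

```python
import statistics
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """GRPO-style advantages: each sampled response is scored against its own group.

    A_i = (r_i - mean(r)) / (std(r) + eps). Responses better than the group
    average get a positive advantage; no learned value (critic) model is needed.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    # Hypothetical rewards for four sampled answers to one prompt (1 = correct).
    print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

If every answer in the group gets the same reward, all advantages are zero and that prompt contributes no learning signal.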

Mohan Kumar retweeted

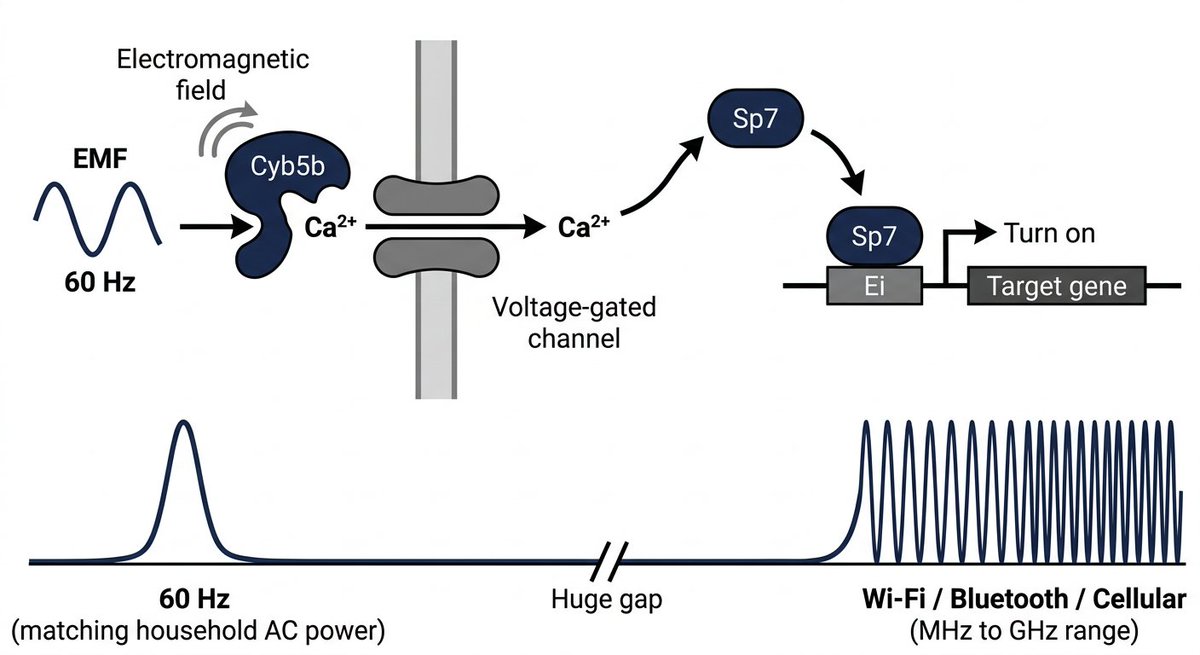

Researchers just found a way to reverse aging without a single drug.

They exposed mice to a 60 Hz magnetic field, the exact frequency running through your home right now, using a pair of large coils.

The results are actually insane.

By pulsing this field on a 3-day cyclic schedule, they were able to activate "OSK" genes to perform epigenetic reprogramming.

In plain English: They reversed aging markers across multiple tissues and extended the lifespan of aged mice.

Just by turning a magnetic field on and off.

But how does a cell "hear" a magnetic field?

The researchers ran a genome-wide CRISPR screen and found a specific protein called CYB5B.

When the EMF hits the cell, CYB5B picks it up and triggers voltage-gated calcium channels to open.

Calcium then pulses rhythmically through the cell. This specific rhythm, and only this rhythm, triggers a transcription factor to bind to the DNA and turn the gene on.

They tried pushing calcium into the cells using other methods. It didn't work.

The cell specifically needs the oscillating pattern produced by the EMF frequency.

This isn't just a discovery. It is a new interface for biology.

We are moving from a world of chemical medicine to a world of digital biological control.

Mohan Kumar retweeted

Stanford just dropped a 2-hour lecture that explains how LLMs like ChatGPT and Claude are actually built.

Most people will scroll past it.

That’s the mistake.

Because this isn’t typical AI content: it goes deep into how these systems work from the ground up, in a way that finally makes things click.

No hype. No fluff. Just real understanding.

If you’ve ever used ChatGPT or Claude and thought, “what’s really happening behind this?” this is where you’ll get clarity.

Bookmark it.

Give it 2 hours today, no matter what.

This might be the most valuable thing you watch all week.

Mohan Kumar retweeted

MIT just completed the study everyone was avoiding: companies poured $30–40 BILLION into enterprise AI.

$1.4 TRILLION infrastructure buildout meeting a 95% enterprise adoption failure rate.

95% got zero return.

Here's the story:

Most AI systems cannot "retain feedback, adapt to context, or improve over time." That's not a technology problem. That's a deployment problem. The tools were dropped onto workflows that were already broken.

MIT's analysis of 300 public AI deployments found only 5% created significant value.

The NBER found that 90% of firms report no measurable AI productivity impact.

Meanwhile: OpenAI has committed $1.4 TRILLION over 8 years. Annual revenue: $13 billion. US mega caps are spending $1.1 trillion on AI infrastructure through 2029.
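The commitment-versus-revenue gap in this post fits in one number. A quick check using only the figures quoted above, assuming the $1.4 trillion is spread evenly over the 8 years (a simplification):

```python
# Figures as quoted in the post; the even yearly spread is an assumption.
COMMITMENT_USD = 1.4e12
YEARS = 8
ANNUAL_REVENUE_USD = 13e9


def average_annual_commitment(total: float, years: int) -> float:
    """Average yearly spend if the commitment is spread evenly."""
    return total / years


def commitment_to_revenue(total: float, years: int, revenue: float) -> float:
    """How many years of current revenue one year of commitments represents."""
    return average_annual_commitment(total, years) / revenue


if __name__ == "__main__":
    per_year = average_annual_commitment(COMMITMENT_USD, YEARS)
    ratio = commitment_to_revenue(COMMITMENT_USD, YEARS, ANNUAL_REVENUE_USD)
    print(f"${per_year / 1e9:.0f}B per year, about {ratio:.1f}x annual revenue")
```

Under that assumption the average yearly commitment is about $175B, roughly 13.5x the quoted $13B in annual revenue.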

Supply built for a demand base that is failing at a 95% rate.

The dot-com bubble was companies overvalued against no revenue. The AI bubble is infrastructure built for enterprise adoption that structurally isn't happening. The cash is still flowing into the systems even as adoption collapses.

Every bubble looks different on the surface. The mechanism is always identical: supply outran demand by years, and nobody noticed until it was too late.

Second-order thinking—the ability to identify these mismatches before they collapse—is exactly what separates people who see patterns before everyone else from those who get blindsided.

I made a free toolkit breaking down 100+ mental models used by history's greatest thinkers — the same frameworks that help you see patterns like this before everyone else.

5,000+ downloads. 113 five-star reviews.

Grab your free copy here: besuperhuman.gumroad.com/l/mentalmodels

If you're new here, @GeniusGTX is a gallery for the greatest minds in economics, psychology, and history. Follow along for more similar content.