Lucas Machado

849 posts


@lucaompr

AI Marketing & Ops consultant 🕹 Building the future with AI - like a videogame

Sao Paulo, Brazil · Joined January 2018

1.4K Following · 737 Followers

🚨 BREAKING: R.I.P. CLICKFUNNELS.

Opus 4.6 ONE-SHOTS entire VSL funnels now.

I compressed my entire VSL framework into a single 5,280-word prompt.

The same framework that's generated $1M+ and 500+ booked calls.

HOW IT WORKS:

You plug in your offer details. It spits out:

→ Full VSL script from hook to close

→ Built on the persuasion structure behind EVERY high-converting agency funnel

→ Generated $1M+ and 500+ booked calls

This thing can INSTANTLY double your booked calls.

Use it now or get left behind. 🤘

Like + reply "OPUS" and I'll DM it to you


@AuthorityNull @jlehman_ Cool. So your plugin solves everything you’ve described above?

Besides that – how’s mem0 speed?


I like it, but I had to do a lot of customization so it would fit in alongside QMD.

I use Qwen3 Max (Alibaba) for extraction and Snowflake (local) for embeddings and added/tuned a scoring system to keep bad facts from being recalled (this is a real issue with memory recall systems).
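The post does not say how that scoring system works, so the following is only a minimal sketch of one way such a filter could look. Everything in it is an assumption for illustration: the `Memory` fields, the similarity-times-confidence score, and the `min_score` threshold are hypothetical and are not taken from mem0 or from the linked extension.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    fact: str
    similarity: float  # hypothetical: embedding similarity to the query, 0..1
    confidence: float  # hypothetical: how much the extractor trusted the fact, 0..1


def recall(memories, min_score=0.5, top_k=3):
    """Return the best-scoring facts, dropping anything below min_score."""
    # Assumed scoring rule: a fact must be both relevant and trustworthy.
    scored = [(m.similarity * m.confidence, m) for m in memories]
    kept = [(score, m) for score, m in scored if score >= min_score]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [m.fact for _, m in kept[:top_k]]


memories = [
    Memory("User deploys on Fridays", similarity=0.9, confidence=0.9),
    Memory("User hates Python", similarity=0.95, confidence=0.2),
    Memory("User prefers short replies", similarity=0.7, confidence=0.8),
]
print(recall(memories))
```

In this sketch the second fact matches the query closely but is dropped because the extractor had little confidence in it, which is the "bad fact" case the post describes.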

Once you get it dialed in it's pretty powerful. I've heard good things about MemU too.

My extension is here 🫡 github.com/AuthorityNull/…


A common line of questions I receive: what does lossless-claw do differently than memory systems? How do the two relate? Should I use both? Here’s the lowdown:

Memory systems are good for letting you search for information that’s external to your context window, typically “memories” extracted from past or different conversations. This is necessary because:

1. Compaction is lossy: when your conversation gets too big, your agent replaces the whole conversation with a summary. Do this a few times and details from the first conversation are no longer part of the summarized conversation.

2. Your context is split across many sessions: you have conversations with different agents over time and want to be able to reference all of that in your current conversation.

Memory systems work okay in the first case and pretty well in the second case. lossless-claw works phenomenally well in the first case and only indirectly addresses the second one. Let’s expand on that.

Lossless context makes frequent summaries of smaller pieces of context in the background. It keeps your most recent messages around verbatim (the “fresh tail”). As the summaries accumulate, they get combined into summaries of summaries.

This lets your agent stay focused: older content is still there, but becomes more “vague” over time — kind of like your own recollection of events. Current messages are always there and never suddenly disappear to be replaced by a summary. This effectively solves the “post-compaction amnesia” problem where your agent seems to suddenly forget important recent details about what you were doing.

The reason lossless-claw is called “lossless” though is because your older messages never get truly removed. The incremental summaries replace the messages, but act as “pointers” to them that can be used to expand the source messages back into context. Because the summaries stick around, your agent doesn’t forget about what it can expand should it need to.
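The mechanism described above (a verbatim fresh tail, incremental summaries, summaries of summaries, and summaries acting as pointers back to their sources) can be sketched roughly as follows. This is a toy illustration under stated assumptions, not lossless-claw's actual implementation: the class and function names, the `fresh_tail` and `chunk` parameters, and the placeholder `summarize` function (which stands in for an LLM call) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Summary:
    text: str
    sources: list  # the messages or summaries this node replaced (the "pointers")


def summarize(items):
    # Placeholder: a real system would ask an LLM to write the summary.
    parts = [i.text if isinstance(i, Summary) else i for i in items]
    return Summary("summary of: " + " | ".join(p[:20] for p in parts), list(items))


def expand(item):
    """Follow the pointers back down to the original messages."""
    if isinstance(item, Summary):
        return [msg for src in item.sources for msg in expand(src)]
    return [item]


class LosslessContext:
    def __init__(self, fresh_tail=4, chunk=2):
        if chunk < 2:
            raise ValueError("chunk must be at least 2")
        self.fresh_tail = fresh_tail  # how many recent messages stay verbatim
        self.chunk = chunk            # how many items get folded into one summary
        self.summaries = []           # older content, oldest first
        self.tail = []                # recent messages, verbatim

    def add(self, message):
        self.tail.append(message)
        # Fold the oldest messages into a summary once the tail overflows.
        while len(self.tail) > self.fresh_tail:
            oldest, self.tail = self.tail[:self.chunk], self.tail[self.chunk:]
            self.summaries.append(summarize(oldest))
        # Combine accumulated summaries into a summary of summaries.
        while len(self.summaries) > self.chunk:
            merged = summarize(self.summaries[:self.chunk])
            self.summaries = [merged] + self.summaries[self.chunk:]

    def window(self):
        """What the agent sees: summaries first, then the fresh tail."""
        return [s.text for s in self.summaries] + self.tail

    def recover_all(self):
        """Expand every summary to show that no message was dropped."""
        older = [msg for s in self.summaries for msg in expand(s)]
        return older + self.tail


ctx = LosslessContext(fresh_tail=4, chunk=2)
msgs = [f"message {i}" for i in range(1, 11)]
for m in msgs:
    ctx.add(m)
print(ctx.window())
```

In this toy version the window shrinks to a handful of entries, yet `recover_all()` still returns every original message in order, because each summary keeps references to whatever it replaced.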

By contrast, memory systems don’t give the agent any idea of what they can be used to remember. This is why you have to frequently tell your agent to “search its memories” explicitly for something. This feels unnatural and is certainly inefficient.

Using lossless-claw means that you can keep one conversation going indefinitely without ever needing to reset. This addresses point (2) from above indirectly: if you don’t need to start new sessions all the time, you don’t need a way to recall information from past sessions!

If you work across multiple agents and want to share memories between them, or want to be able to recall information from outside the scope of a conversation (e.g., meeting notes), you’ll want a memory system.

Much of what memory systems are used for is a poor fit for them stemming from overly naive approaches to managing context, which unfortunately are industry-standard. Don’t get me wrong: they’re still useful — I still use one — but they’re not the only tool that agents need to become effective personal assistants.

Lossless-claw is among the first production-grade implementations of an alternative context management strategy, and certainly the most effective, and it’s only available on @openclaw.

None of this would be possible without the excellent research into Lossless Context Management pioneered by @ClintEhrlich and @rovnys at @Voltropy, so make sure to give them a follow if you’re looking for some real alpha.

Peter Steinberger 🦞@steipete

There's a lot of cool stuff being built around openclaw. If the stock memory feature isn't great for you, check out the qmd memory plugin! If you are annoyed that your crustacean is forgetful after compaction, give github.com/martian-engine… a try!


@AuthorityNull @jlehman_ Can you give some feedback on your experience with mem0 ?


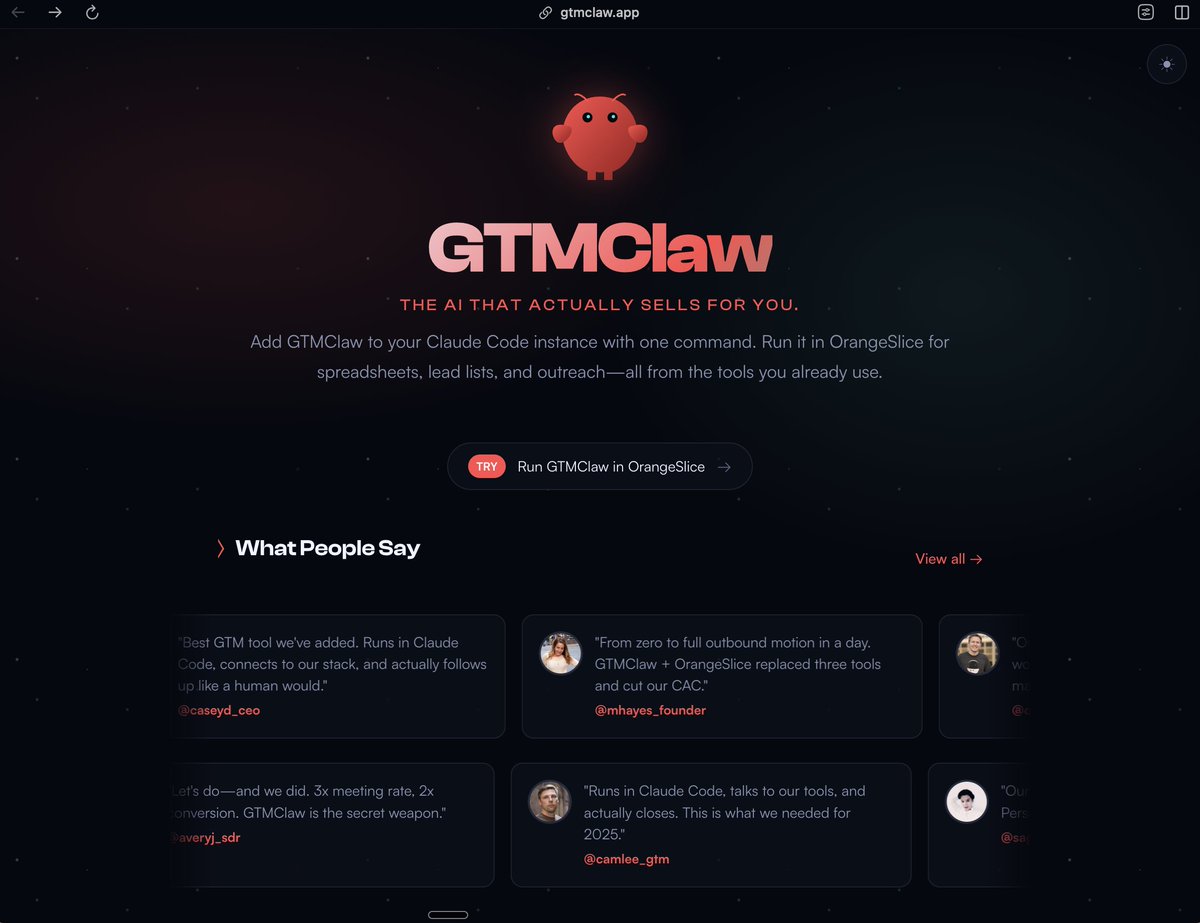

We built something a little dangerous for GTM teams.

It’s called GTM Claw.

A workflow library that turns OpenClaw + Claude Code into a customer-finding machine.

Think:

• Find people talking about your problem on Reddit, LinkedIn, Twitter

• Identify companies showing buying signals

• Enrich decision makers automatically

• Check ICP fit

• Push qualified leads into sequences or your CRM

Just continuous discovery of in-market accounts.

We’ve been using it internally at Orange Slice to:

• scrape LinkedIn reactors on competitors’ posts

• detect product complaints on Reddit

• identify operators switching companies

• surface companies hiring GTM engineers

We’re opening GTM Claw in beta.

Only 100 users for the next couple months.

~30 spots already filled.

If you want access:

Comment “CLAW” and follow me so I can dm you the link.

Turn Claude Code + OpenClaw into a GTM menace. 🦞


@DJohnstonEC What if the user is on qmd? Shouldn’t be defaulting to those providers


gemini 3 just made every $15k ai consultant look like a clown

google silent-dropped autonomous agents to 650 million users yesterday

what consultants charge $15K and 6 weeks to "implement" now takes 4 minutes on a phone

here's what actually changed:

the model:

→ plans multi-step workflows autonomously

→ executes start to finish with zero hand-holding

→ optimized for non-experts (no CS degree needed)

→ already live on mobile canvas feature

while "AI agencies" are charging $8k-20k for strategy decks, google just deployed real automation to more people than chatgpt's entire user base

the intelligence gap is getting stupid:

that consultant billing $200/hr to "set up AI workflows" → the app does it autonomously now

that agency charging $15k for "custom AI implementation" → built in 4 minutes on gemini 3 mobile

that bootcamp selling "learn AI automation" for $2k → obsolete before the course launched

some startup just replaced their $18k/month AI consulting retainer with a free app

same output. 4 minute setup. zero technical knowledge required.

most businesses still think AI automation needs:

- 6 month roadmaps

- technical teams

- consulting firms

- $50k+ budgets

reality: it needs a phone and 4 minutes

your competition doesn't know this exists yet

but they will

comment "GEMINI" and i'll send you the breakdown of how to use this before everyone figures it out


FCK it. Here's all the sauce.

After shipping 100+ apps with @Lovable — I made the ULTIMATE Design Cheat Sheet.

Every prompt.

Every design system pattern.

Every cloud config + infra setup.

Every component standard + best practice we actually use to achieve world-class UI.

All in one doc.

Follow + comment "Cheat Sheet" and I'll DM it to you.


@SkeezOmatic @therooster exactly. if one major thing like this is wrong, how can we trust the entire rest?


@therooster Has surveyor vault on both sections. Invalid. Can't trust it


Here’s one of my favorite @variantui workflows:

Sketch a wireframe → make it real → explore styles until you find a design you love

Reply if you'd like to try, giving away 50 beta invites


Writing viral posts will never be a struggle again...

I packaged every context file, example, and framework that got me 4.5M+ impressions into one Claude skill.

Just upload it to your project and Claude instantly references:

- 20+ tweets with 100K+ views each

- Proven hook formulas

- Writing principles that actually work

- Real feedback on what converts (and what flops)

Most people prompt Claude with zero context and wonder why their posts sound generic.

They're basically asking it to write blind.

This skill changes that completely.

You upload it once to your Claude account and it becomes part of Claude's memory.

Now when you say "write a post about X," Claude pulls from battle-tested patterns.

- It knows which hooks are overused and market-fatigued.

- It understands how to structure posts for maximum engagement.

- It references actual examples that crushed it.

The skill teaches Claude to write posts that actually convert, and it never runs out of fresh angles.

Claude gets better the more you use it together, because you can keep adding to the skill over time.

This is the new way to add context to AI.

No more copy-pasting examples into every chat.

No more re-explaining your style from scratch.

Follow + comment "SKILL" and I'll DM you the file


Next.js 16 is good. I think it’s going to age like a Cheval Blanc 1947.

Extreme gratitude to the @nextjs & Turbopack teams, and the broader ecosystem, including @reactjs and all the partners whose feedback culminated in Deployment Adapters.

nextjs.org/blog/next-16


/init is clear enough the first time. What to do with the claude.md files afterwards is the tricky part. We want to provide as much info as possible without hurting the context window.

It would help to have an '/init-update' or '/init here' command that automatically generates updated general instructions, or new feature- or folder-specific ones, following best practices and automating the process.


@TheAlexYao Is this not helpful enough? docs.anthropic.com/en/docs/claude…

Anything in particular you want more detail on?


@pie6k Please add free manual camera positioning / moving / sizing instead of just the preset positions


@eugene_galaxy @pie6k Just use Raycast or CleanShot X from Setapp. Very simple to do that.


@pie6k Same background/padding/inset settings as for the video.

I am tired of making it manually in Figma, boss...

Example:


Our Fine-Tuned AI SDR has booked this client over 75 qualified sales appointments in the last 7 days alone.

With an average close rate of 22% and a ticket price of $6500, that's an additional $107,250 in revenue.
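For anyone checking the math, here is a quick sketch of how that figure falls out of the three numbers in the post. The function name is made up for illustration, and the result is a projection: it assumes every booked appointment takes place and closes at the average rate.

```python
def projected_revenue(appointments, close_rate, ticket_price):
    """Expected revenue = appointments x close rate x ticket price."""
    expected_deals = appointments * close_rate  # 75 * 0.22 is about 16.5 deals
    return expected_deals * ticket_price


revenue = projected_revenue(appointments=75, close_rate=0.22, ticket_price=6500)
print(f"${revenue:,.0f}")  # prints $107,250
```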

There are a lot of businesses that are wasting thousands and thousands of dollars a month on "appointment setters"....

We live in 2025. You can leverage AI Agents to fully qualify, converse, and book appointments for you.

Comment the word "Setter" down below and I'll send you a free guide that shows how this agent works.


@mreflow So for the first picture, I had ChatGPT remove everything in the room so I could see how it looks when I move in. Then I shared the kind of bed I would like and had it added to the room.


I've just rounded up 35 different ways I've seen people using ImageGen from GPT-4o... And those were just the ones I was able to quickly rattle off onto a notepad.

The implications of that launch I don't think have been totally felt yet by most. It was / will be a massive disrupter.

Share some unique ways you've seen it used with me. Going to try to round all these up into a video for you.


100%. Realized this when they first launched it.

Right after that, both Cursor and other IDEs started having rate limiting, slowness and context / dumbness issues with Claude API usage.

There are no coincidences.

And I wouldn’t be surprised if they came out with 4.0 and with an updated training knowledge base (code focused) to better deal with most recent techs.

Huge bonus.

I also think 3.7 is a fake model with just temperature and creativity bar tweaked so they had something to launch and generate buzz for a while while 4.0 is still cooking.

They were silent for months until then.

Claude Code is their ultra-fast-spun MVP to validate product-market fit.

Next step - a nice UI desktop IDE that integrates 50x better with their LLM and handles context flawlessly.

Please ship that already.


@AdamMura @BrettFromDJ @AdamMura 🎩 How many new subs did you guys get out of Brett humiliating himself?


Hey @BrettFromDJ, thanks for the shoutout!

Question... what is the most important thing you think this tool is missing?
