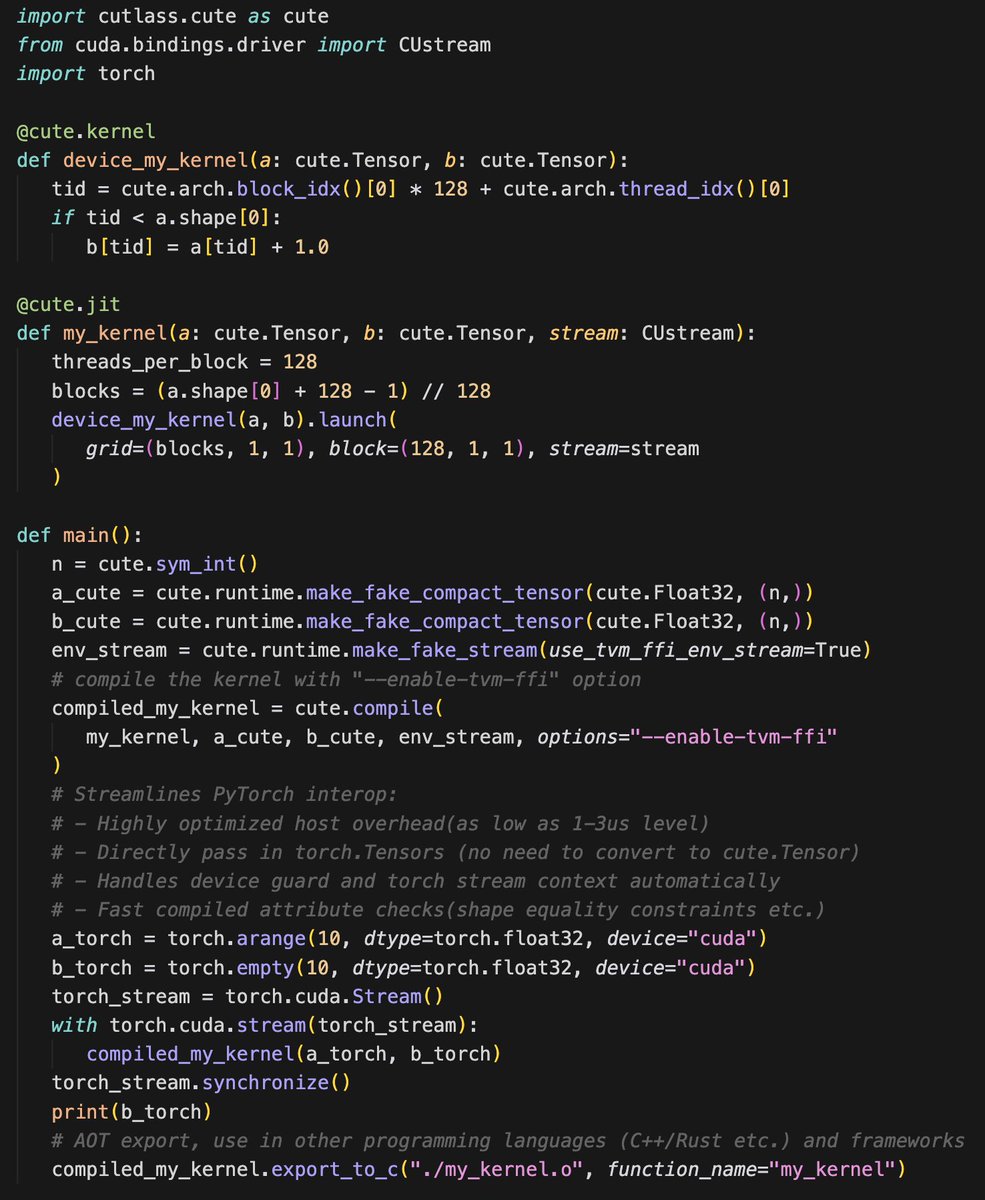

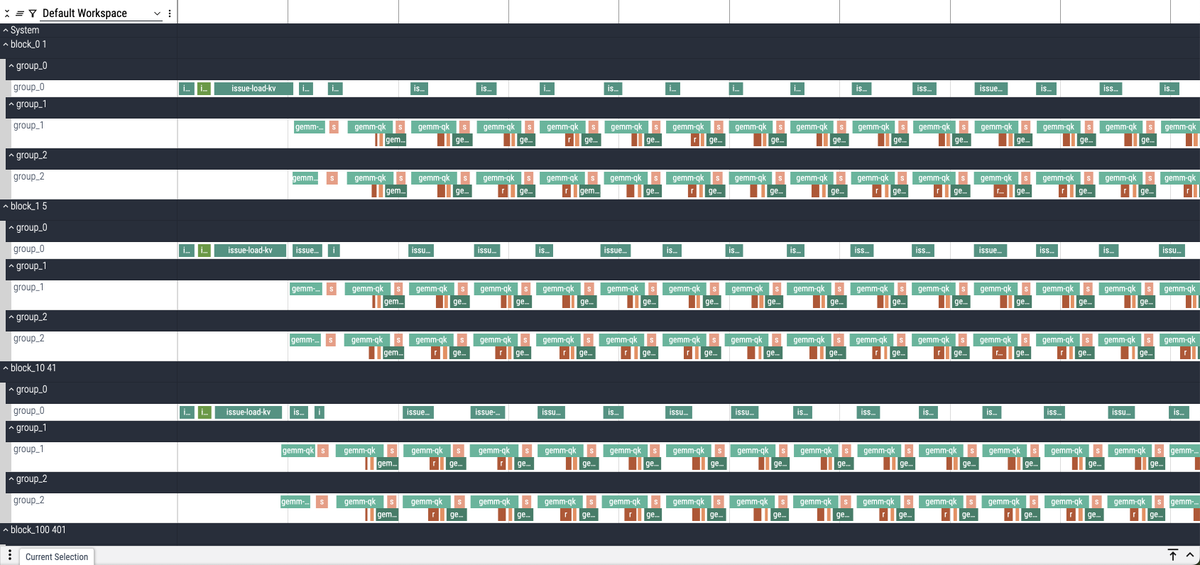

🚀 MLSys 2026 Contest - @nvidia Track is LIVE!

Registration is now open for the FlashInfer-Bench Challenge! Submit high-performance GPU kernels for cutting-edge LLM architectures on NVIDIA Blackwell GPUs.

Three Tracks:
* MoE (Mixture of Experts)
* DSA (DeepSeek Sparse Attention)
* GDN (Gated Delta Net)

Human experts AND AI agents welcome, evaluated separately. Let's see who builds the best kernels! 🤖

🎁 Prizes: Winners take home NVIDIA GPUs and are invited to present at MLSys 2026.

⚡ First 50 teams to register get free GPU credits from @modal - huge thanks for the sponsorship @charles_irl!

Whether you're a kernel wizard or building autonomous coding agents, we want to see what you've got.

🔗 Contest details: mlsys26.flashinfer.ai

See you at MLSys 2026! 🔥