Luis Molina

366 posts

@ivanfioravanti I run mine in Low Power mode because of this. If you need any tests on an M2 Max, just tell me.

Interesting video of M5 Max, on impact of Low, Automatic and High power modes on inference.

- No external monitor attached

- Model not relevant, but it's DS4 Flash Q2.

Results:

- Low ~25W ~12 toks/s

- High ~120W ~32 toks/s

- Automatic: varies from 40W (~14 toks/s) to 90W (~29 toks/s), depending on the fan speed and temperature of the Mac.

If you really want to push your MacBook to the max, use High Power mode with no external monitors. With monitors attached I see a very strange behavior that I'm investigating 🧐
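To put the readings above in energy terms, here is a minimal sketch that converts each watts and toks/s pair into tokens per joule. The numbers are the approximate figures quoted in the post, not new measurements; the names `READINGS`, `tokens_per_joule`, and `efficiency_by_mode`, and the "low end"/"high end" labels for Automatic, are made up for illustration.

```python
# Approximate readings quoted in the post: mode -> (watts, tokens per second).
# The "low end" / "high end" labels for Automatic are illustrative names only.
READINGS = {
    "Low": (25.0, 12.0),
    "High": (120.0, 32.0),
    "Automatic (low end)": (40.0, 14.0),
    "Automatic (high end)": (90.0, 29.0),
}


def tokens_per_joule(watts: float, toks_per_s: float) -> float:
    """Tokens generated per joule: (tokens/s) divided by (joules/s)."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return toks_per_s / watts


def efficiency_by_mode(readings):
    """Map each mode name to its tokens-per-joule figure."""
    return {mode: tokens_per_joule(w, t) for mode, (w, t) in readings.items()}


if __name__ == "__main__":
    for mode, tpj in efficiency_by_mode(READINGS).items():
        print(f"{mode}: {tpj:.2f} tokens per joule")
```

With these figures, Low comes out at roughly 0.48 tokens per joule versus about 0.27 for High, so High buys about 2.7x the throughput for about 4.8x the power.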

No, please, no! Don't push people to think that a cluster of 4 M5 Max is a viable solution. If you set them to High Power, they will drain the battery after a few hours even when plugged in. Moreover, they will generate an incredible amount of heat and noise.

For me it's a no-go, sorry.

Ivan Kuleshov @Merocle

M5 Max cluster: 72 CPU and 128 GPU cores, 512GB unified RAM. Each MacBook is connected to all the others with Thunderbolt 5 (120Gbit/s). But I’ll have to use Wi-Fi to connect to the cluster.

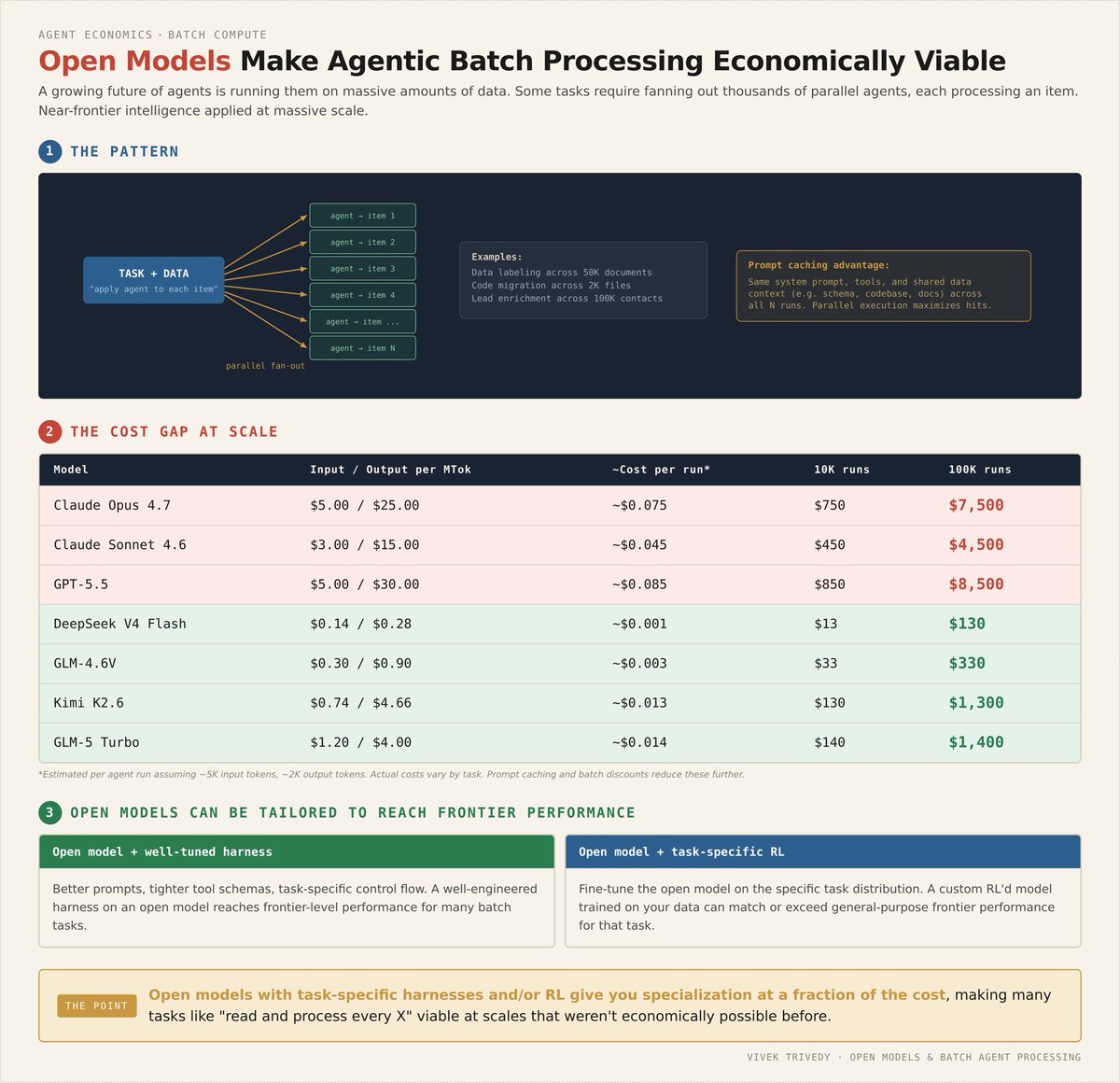

Open Models Make Agentic Batch Processing Economically Viable

A lot of the world’s work looks like “Do X for EVERY Y”

- read every trace

- respond to every email

- deep dive into every document

- enrich every lead

This is the domain of Agentic Batch Computing

Much of the world’s work does not need peak frontier intelligence; it needs carefully shaped intelligence pointed at specific tasks

The holy grail here is having a tailored agent run on every single one of these data points + tasks
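The "Do X for EVERY Y" pattern above can be sketched as a plain fan-out over a collection. This is only an illustration under stated assumptions: `run_batch` and `summarize_stub` are hypothetical names, and the stub stands in for whatever call you would make to a tailored open model or agent.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List


def run_batch(task: Callable[[str], str], items: Iterable[str],
              max_workers: int = 4) -> List[str]:
    """Apply `task` to every item concurrently, preserving input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in the order of the inputs.
        return list(pool.map(task, items))


def summarize_stub(document: str) -> str:
    """Hypothetical stand-in for a call to a fine-tuned open model."""
    return f"summary({len(document)} chars)"


if __name__ == "__main__":
    docs = ["first trace", "second email", "third document"]
    for result in run_batch(summarize_stub, docs):
        print(result)
```

Threads suit this shape when each task mostly waits on a network call to an inference endpoint; swapping the stub for a real client is left to whatever provider you use.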

The world is producing more data than ever before. To understand and process it at scale, we’re going to have to point Intelligence and Compute at this

Open Models are a fantastic tool here

- they’re often an order of magnitude cheaper

- they can be finetuned (SFT & RL) to fit to your exact task distribution and outperform frontier models for your task

As companies contend with rising AI costs, Open Models and specialized models become incredibly important to make sure there’s good ROI on AI spend

here to help as you let the token machine rip without busting the bank (and with better results) 🫡

I’m going to give away every piece of swag I got at the OpenAI event tonight.

Of course very appreciative to get it 🙏

But, never been a swag guy, and know so many people love this stuff, so would like to see it go to a good home.

Will share more tomorrow or Thursday, but if you want some swag from the event, comment below and I’ll add you to the random drawing.

And I’ll ship it to you for free.

Let’s let the GPT 5.5 love go around 🫶

@luismolinaab @Teejay_first @NousResearch @Teknium @fal Tbh, idk!

The tooling portion is almost certainly Hermes specific

I’d think generally it’s probably safe to say this offering fits best in the @NousResearch ecosystem

@SinatraCrypto @grok Why the price if you are paying for Nous Portal? I mean, you pay the subscription and nothing else, don't you?

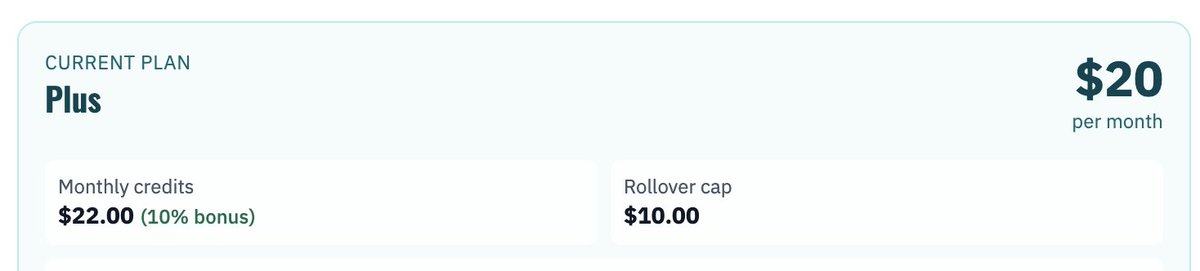

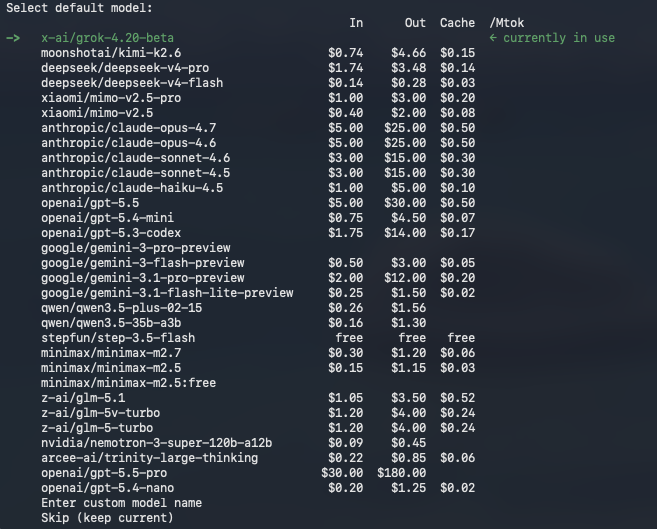

Switching up a bit from Anthropic and using a Nous Portal subscription for my Hermes Agent now.

Also has access to Tools Gateway.

Conveniently shows you the price in and out of all models.

There are also many many more models they host.

I'm currently using @Grok 4.2

@NousResearch

@mr_r0b0t @Teejay_first @NousResearch @Teknium @fal And can it also be used in other tools like pi? Or does it only work on Hermes?

@Teejay_first @NousResearch @Teknium apples to oranges imho!

inference providers are (for the most part) only providing just that

Nous Portal gives you inference and 300+ tools!

Nous provides the only plan out there (that I'm aware of) which gives you access to things like @fal via your sub!

@JulianGoldieSEO What about multiple projects, like in vs code?

I just tested Hermes Workspace v2 and honestly this is what Hermes should have looked like from the start.

Why it matters:

• Native chat with your Hermes agents

• Mission control style workspace

• Task boards for agent workflows

• Memory and skills management

• File browser and terminal in one UI

• Works with local and cloud models

• Runs across phone, Mac, PC, iPhone, Android

If you like Hermes but hate living in the terminal, this update is for you.

@a1zhang @raw_works If I wanted to contribute to an open source RLM project, which would you recommend?

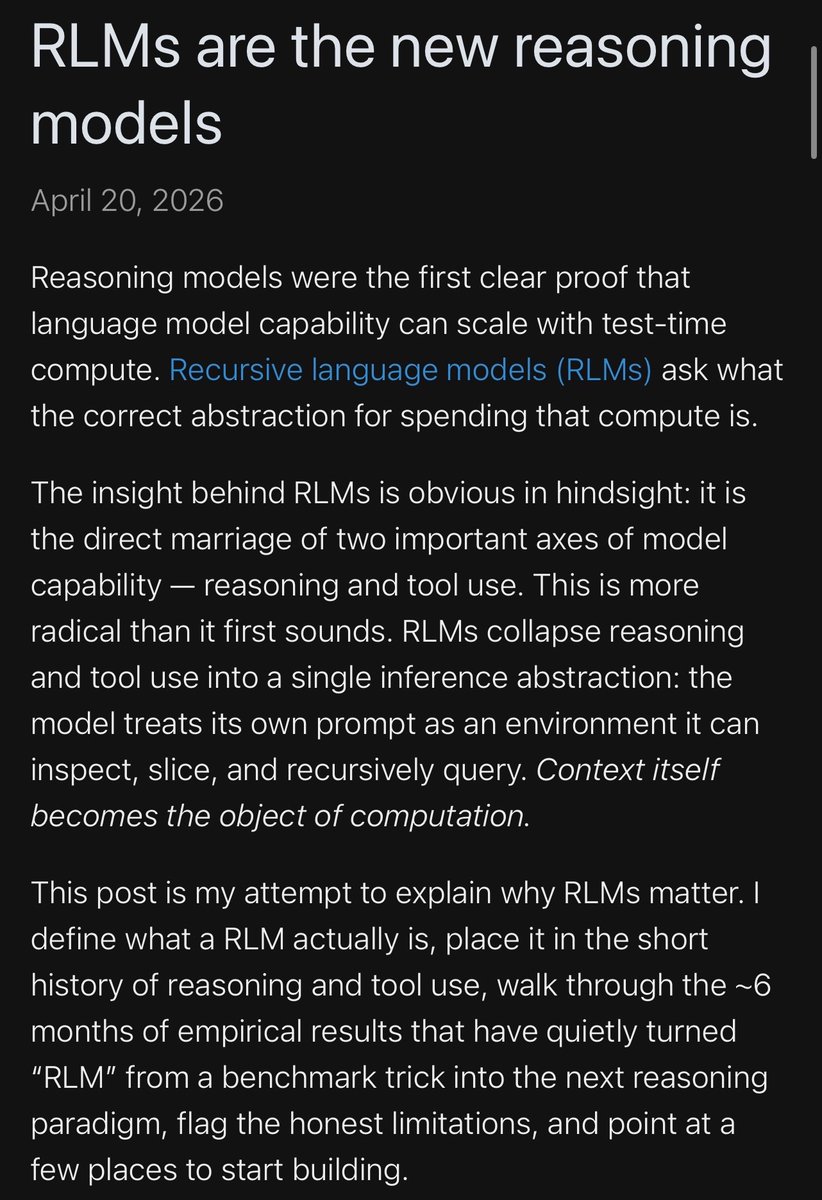

Incredibly well written blog on RLMs by @raw_works, highly recommend you read it! He thinks about them in a particularly intuitive way.

He’s also been the main driver of recent OSS RLM results on LongCoT :)

this is a laptop running a 31b parameter model at 99% gpu autonomously through hermes agent, 15 tok/s sustained, 22.8 of 24gb vram gone, 94 watts at 50c.

no api keys. no rate limits. no "your prompts are being used for training". no monthly subscription. no anthropic telling me what i can and cant ask. no openai logging my work. no outages when aws goes down.

just google deepmind's open weights, open source llama.cpp, nous research's hermes agent, a rog scar 18 on my desk, and 95 watts of sustained compute while it builds stuff on its own. the laptop is roaring. results incoming.

@EnTr0pY_88 @KyleWillson sorry, I mean the model that comes with the paid plan of Hermes. They mention the tools, but it also comes with some model.

@luismolinaab @KyleWillson It’s not a model like GLM and MiniMax, it’s an Agent that evolves, and you strap a model to it for its intelligence. Do some reading on it, honestly, it’s the future! Launched mine a few days ago and can’t leave it alone

Hermes dropped a bomb today.

You only need 1 subscription and literally have every model and skill you'll ever need.

The insane part is it's only 10 bucks...

Claude is cooked. Open Source wins the day.

Nous Research @NousResearch

Tool Gateway is now live in Nous Portal. No separate accounts, no API key juggling. All you need is one subscription, and everything works. A paid Nous Portal subscription now includes access to 300+ models and a growing set of third-party tools. Launching with:

- Web scraping

- Browser automation

- Image generation

- Cloud terminal backend

- Text-to-speech

Luis Molina retweeted

@luismolinaab @ivanfioravanti My understanding from a talk I attended is that they have a mathematically optimal quantization method that guarantees the best possible quantization.

They even have the ability to make the model the exact size you need.
