Luke Ritchie

1.7K posts

Pinned Tweet

@RaphaelTurang @floraai Thx. I did post a bug for it yesterday.

hey @luke0ritchie!

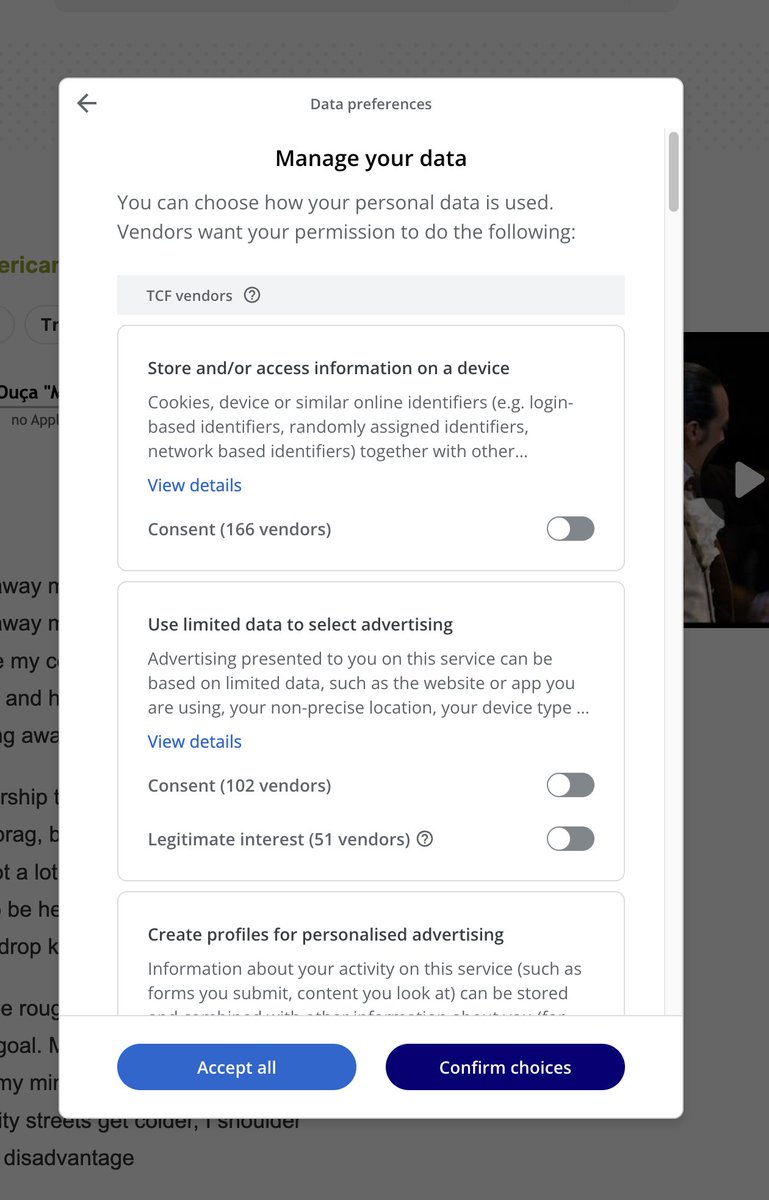

audio → Seedance 2.0 should work (image+audio→video and video+audio→video both supported)...

sounds like a bug. shoot the screenshot + your FLORA account email to support@florafauna.ai and we'll dig in

@floraai is there a bug connecting an audio file to seedance 2.0 node? it's a wav 2mb file. I can make it work by dragging new video node from the audio file, but that causes images to not get sent.

Hot take: the people with the best chance to stand out in the AI era aren’t the ones generating the prettiest shots.

It’s screenwriters and editors.

Because once everyone can generate images, what actually matters is:

knowing what to say

knowing what to keep

knowing how something should feel

Beautiful nonsense is becoming trivial.

Taste, rhythm, structure, tension, emotion, payoff… that’s the hard part now.

PJ Ace (@PJaccetturo)

This is one of the best short films I've seen in years. Very soon, we'll stop calling it "AI film" and just call it film.

@floraai can you confirm whether a 5-second Seedance 2.0 video uses the same number of "credits" as a 15-second video, or whether cost scales with duration?

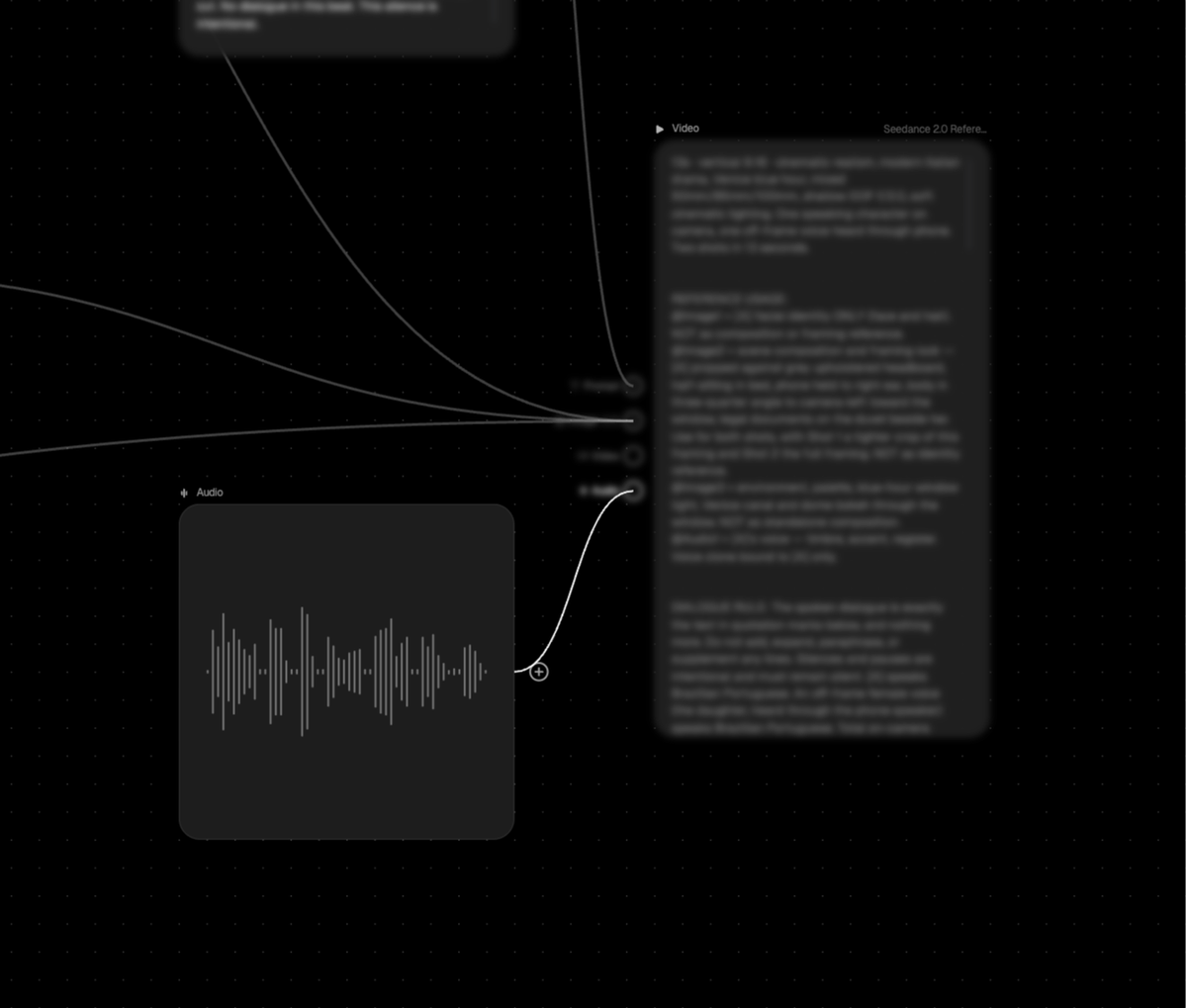

Still experimenting with using a single visual production graph as the anchor for multi-shot storytelling in Seedance 2.0

• Paired it with a structured text prompt to define sequence, camera moves, and character consistency

• Used explicit spatial rules (door hierarchy + movement direction) to prevent scene resets and continuity errors

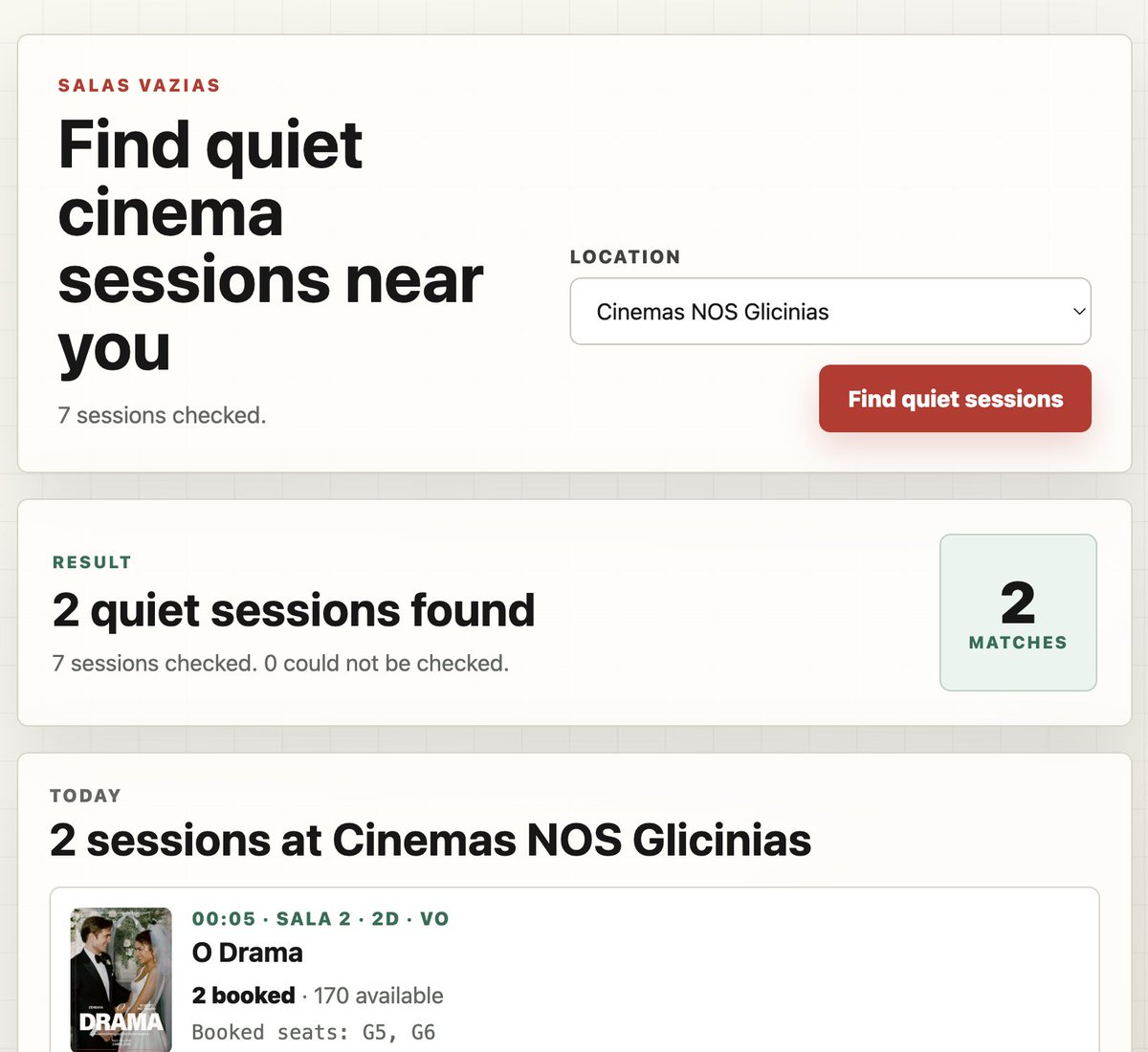

I built a website that shows you the quietest cinema sessions in Portugal 🇵🇹 (with fewer than 3 tickets sold): salasvazias.com

Inspired by @rtwlz

@henrydaubrez @panaviscope @GoogleAIStudio ffmpeg on the command line is also pretty simple. It also has a scene detection filter, i.e. it can select each new shot for you.

mkdir -p output_folder && ffmpeg -i input.mp4 -filter_complex "select='gt(scene,0.4)'" -vsync vfr output_folder/scene_%04d.png

[if it misses shots, try lowering the threshold to 0.2]
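For anyone who would rather script this than retype it, here is a minimal Python sketch that builds the same ffmpeg invocation with an adjustable threshold. The function names `scene_split_command` and `extract_scenes` are made up for illustration; the ffmpeg arguments are the ones from the command above, and ffmpeg is assumed to be installed and on PATH.

```python
import subprocess
from pathlib import Path


def scene_split_command(video, out_dir, threshold=0.4):
    """Build the ffmpeg argument list that saves one PNG per detected shot change."""
    pattern = str(Path(out_dir) / "scene_%04d.png")
    return [
        "ffmpeg", "-i", str(video),
        # Keep only frames whose scene-change score exceeds the threshold.
        "-filter_complex", f"select='gt(scene,{threshold})'",
        "-vsync", "vfr",
        pattern,
    ]


def extract_scenes(video, out_dir, threshold=0.4):
    """Create the output folder, then run ffmpeg (hypothetical helper)."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(scene_split_command(video, out_dir, threshold), check=True)
```

Calling `extract_scenes("input.mp4", "output_folder", threshold=0.2)` would be the "it missed some shots" retry.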

@panaviscope I built my own tool in @GoogleAIStudio to do the very same :)

Alright, made another test and here's the full Seedance prompt.

20 shots in one go

👇

If it helped you...well...please share and help your peers. Sharing is caring.

PROMPT

Style: cinematic, consistent lighting, same environment, no cuts in continuity (only camera changes) Character: same subject, no transformation. don't change the style of the original. 2.5D traditional animation. 90's.

[00:00.00 – 00:00.25] Extreme close-up (ECU), straight-on. Eyes and upper nose bridge. Micro detail, skin texture, reflections in pupils.

[00:00.25 – 00:00.50] ECU, 45° side angle. Profile of eye, eyelashes, temple. Shallow depth of field.

[00:00.50 – 00:00.75] Close-up, straight-on. Full face. Neutral expression. Lighting reveals structure.

[00:00.75 – 00:01.00] Close-up, low angle. Jawline and chin emphasized. Slight heroic distortion.

[00:01.00 – 00:01.25] Close-up, high angle. Forehead, hairline, top of face. Subtle shadow fall.

[00:01.25 – 00:01.50] Medium close-up (chest up), straight-on. Full head + shoulders. Posture visible.

[00:01.50 – 00:01.75] Medium close-up, 3/4 angle. Slight turn. Depth and facial asymmetry.

[00:01.75 – 00:02.00] Side profile, perfectly 90°. Clean silhouette of face and nose.

[00:02.00 – 00:02.25] Back of head, medium close-up. Hair shape, neck, collar detail.

[00:02.25 – 00:02.50] Medium shot (waist up), straight-on. Torso, jacket fit, arm positioning.

[00:02.50 – 00:02.75] Medium shot, side angle. Body proportions and posture in profile.

[00:02.75 – 00:03.00] Medium shot, back view. Shoulder width, garment fall, spine line.

[00:03.00 – 00:03.25] Detail shot, hands. Front angle. Fingers relaxed. Skin and gesture detail.

[00:03.25 – 00:03.50] Detail shot, hands side angle. Bone structure, knuckles, motion hint.

[00:03.50 – 00:03.75] Detail shot, jacket fabric. Macro. Texture, seams, stitching, wear.

[00:03.75 – 00:04.00] Detail shot, lower torso. Beltline / clothing transition.

[00:04.00 – 00:04.25] Detail shot, legs. Front. Fabric tension, stance.

[00:04.25 – 00:04.50] Detail shot, feet side angle. Shoe shape, sole, contact with ground.

[00:04.50 – 00:04.75] Wide shot, full body, straight-on. Neutral stance. Complete silhouette.

[00:04.75 – 00:05.00] Wide shot, 3/4 back angle. Character in space. Final spatial read.
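The timestamps in the prompt above are just 20 equal windows of 0.25 s across a 5-second clip, so they can be generated rather than typed. A rough Python sketch, assuming an even split is what you want; the helper names `stamp`, `shot_windows`, and `timed_prompt` are illustrative and not part of any Seedance tooling:

```python
def stamp(centis):
    """Format hundredths of a second as MM:SS.cc."""
    minutes, rest = divmod(centis, 6000)
    seconds, hundredths = divmod(rest, 100)
    return f"{minutes:02d}:{seconds:02d}.{hundredths:02d}"


def shot_windows(shots, total_seconds):
    """Return one '[start – end]' label per shot, splitting the clip evenly."""
    total = round(total_seconds * 100)  # work in integer hundredths to avoid float drift
    edges = [total * i // shots for i in range(shots + 1)]
    return [f"[{stamp(a)} – {stamp(b)}]" for a, b in zip(edges, edges[1:])]


def timed_prompt(descriptions, total_seconds):
    """Prefix each shot description with its time window, one shot per paragraph."""
    labels = shot_windows(len(descriptions), total_seconds)
    return "\n\n".join(f"{label} {text}" for label, text in zip(labels, descriptions))
```

`shot_windows(20, 5)` reproduces the 20 windows used in the prompt; changing the shot count or clip length gives a new evenly spaced timeline.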

Henry Daubrez 🌸💀 (@henrydaubrez)

HOW TO LOCK YOUR STYLE: If Nano Banana Pro struggles to match the exact style of your original image, there’s a simple workaround. Ask Seedance to generate additional shots of the same character from different angles, then extract frames from those sequences. You end up with a set of perfectly consistent “ingredients” to work from.

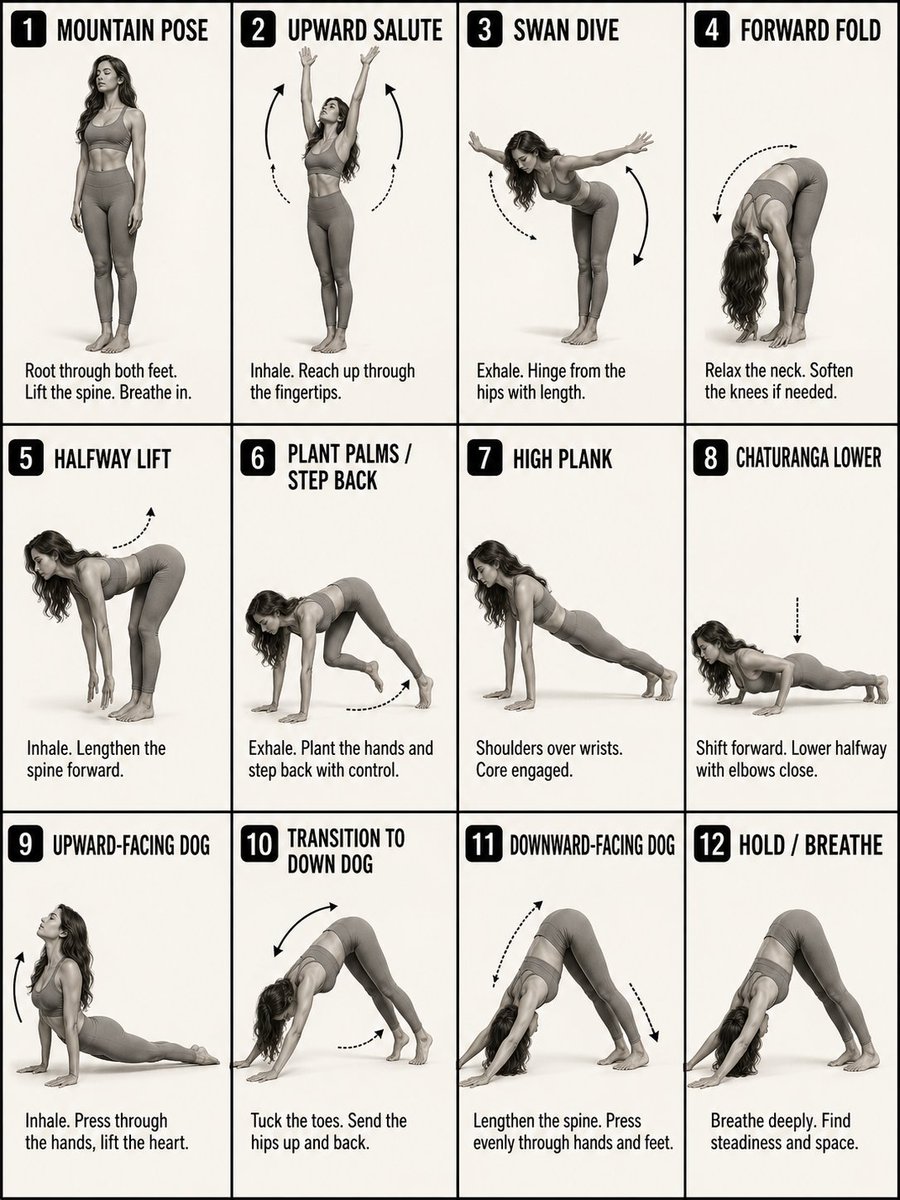

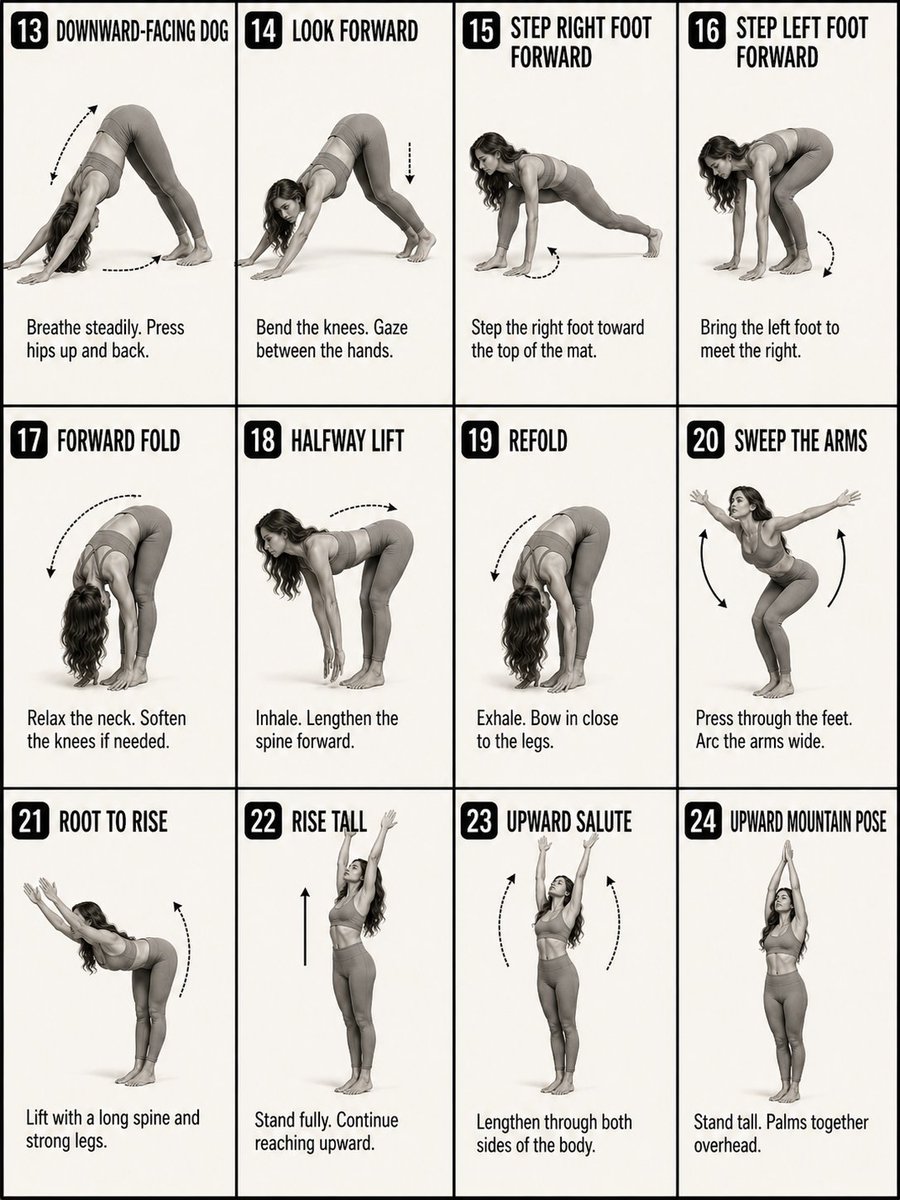

01. Generating Motion Sequence

- Used GPT2 image to create 2 sequences

- To use as reference images for SD2

- Describing the motion and transition

PROMPT:

Create a 12-panel yoga instruction diagram in a clean 4-column by 3-row grid. Style: black-and-white / soft grayscale instructional poster, off-white background, thin black panel borders, bold uppercase titles, black rounded number badges, simple dotted or curved motion arrows, and short coaching captions.

Use one consistent female yoga model across all panels: athletic build, long wavy hair, fitted sports bra and leggings, barefoot, realistic photo-illustration style, neutral studio background. Show a classic vinyasa flow from Mountain Pose to Downward-Facing Dog. Focus less on overly technical pose detail and more on the feeling of a smooth flowing sequence, with clear movement, breath, and transitions from one step to the next.

Each panel should clearly show the motion step and how it transitions into the next pose. Use arrows to show direction of movement. Keep the captions short and simple, describing the action, breath, or flow cue in a natural way.

Make all 12 panels exactly the same size, evenly aligned, highly readable, and visually consistent. No color. No extra text.

GPT2 + Seedance 2.0 → Yoga Flow.

Controlling pose transition.

(Prompt + process in thread)

PROCESS:

01. GPT2 Img: Create pose sequence in

02. GPT2 Img: Create base character

03. SD2 Omni-Ref: Animating scene

04. Codex for stitching + frame interpolation

While not perfect step-to-step...

Still impressive.
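The thread doesn't show what the stitching step actually ran, so the following is only a guess at one way to do it by hand: a Python sketch that prepares an ffmpeg concat-demuxer list and command, with optional motion interpolation through ffmpeg's `minterpolate` filter. The function names and file names are hypothetical.

```python
def concat_list(clips):
    """Text of an ffmpeg concat-demuxer list file: one "file '...'" line per clip."""
    lines = []
    for clip in clips:
        # Inside single quotes, the concat list format escapes ' as '\''
        escaped = str(clip).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


def stitch_command(list_file, output, interpolate_fps=None):
    """Build the ffmpeg arguments that join the listed clips into one file."""
    cmd = ["ffmpeg", "-f", "concat", "-safe", "0", "-i", str(list_file)]
    if interpolate_fps is None:
        # Plain join without re-encoding (clips must share codec and resolution).
        cmd += ["-c", "copy"]
    else:
        # Re-encode and synthesize in-between frames up to the target frame rate.
        cmd += ["-vf", f"minterpolate=fps={interpolate_fps}"]
    return cmd + [str(output)]
```

Write the output of `concat_list([...])` to a text file, pass that file's path to `stitch_command`, and run the result with `subprocess.run`.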

Luke Ritchie retweeted

My strategy is and has been the same for the last 10+ years

Don't spend, but save up everything, invest it, and try to live off the 4% returns

4% is the "safe withdrawal rate": the percentage of your investment portfolio you can withdraw each year without running out of money over a given time horizon, i.e. your balance stays roughly the same even after inflation

I have many friends who spend most of their money on expensive purchases of things that depreciate in value (and I too have a Tesla Y that does that 😂) but if you do that you'll never get to any state of FIRE (retire early) where you can live off of your investments

Many people in FIRE have relatively humble goals: $600K means $2,000/mo from your investments to live off forever; multiply that by ten and $6M means $20,000/mo forever

There are obviously caveats: nobody knows whether investments like ETFs will keep returning forever. Diversifying your investments into other things like commodities (gold), real estate, and some angel investing also can work!

The point is to spend less, invest more and then spend from what you take out of your investments
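The arithmetic behind those figures is simple enough to check in a few lines. A minimal Python sketch, assuming a flat 4% annual withdrawal and ignoring taxes, fees, and year-to-year market swings; the function names are illustrative:

```python
def monthly_income(portfolio, rate_percent=4):
    """Monthly amount you can withdraw at the given annual withdrawal rate."""
    return portfolio * rate_percent / 100 / 12


def portfolio_needed(monthly_spend, rate_percent=4):
    """Portfolio size whose annual withdrawal covers twelve months of spending."""
    return monthly_spend * 12 * 100 / rate_percent


print(monthly_income(600_000))    # 2000.0
print(monthly_income(6_000_000))  # 20000.0
print(portfolio_needed(2_000))    # 600000.0
```

The same rule read backwards gives the usual shortcut: the target portfolio is 25 times your annual spending, or 300 times your monthly spending.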

@levelsio (@levelsio)

So Daniel Radcliffe, aka Harry Potter, instead of spending his money, actually invested it. And now he makes $660,000/mo just from investment returns (probably ETFs). Essentially a perfect FIRE (financial independence, retire early) story. Great work, 10/10 👏

Here are the project files if you'd like to try it out yourself! github.com/gregfeingold/H…

agentmural.com

Send your lobsters 🦞

Let the claws draw✍️

fyi API’s a bit strict rn, I’ll loosen it if people use it, want it weird.

inspired by @moltbook @MattPRD

PolyAI has raised $200M from Nvidia, Khosla Ventures, and multiple top VCs.

We're one of the fastest-growing companies in the UK, and we handle 500M+ calls for:

• Marriott

• PG&E

• Gordon Ramsay's restaurants

• And 3,000 more real deployments

Which means that if you've ever called them, chances are you've talked to our voice agents.

Every restaurant we onboard books thousands in revenue within 30 days.

But how?

Because PolyAI works 24/7, answering every call in <2 seconds, and we also:

• switch between 45+ languages

• handle payments & cancellations

• verify identities

• and even upsell your services

If you want to try creating an agent with PolyAI, we built Agent Studio Lite to make it easy. Just enter any URL, and in 5 minutes it will analyze your website and build a working agent.

We're opening early access to a limited number of people. Comment "PolyAI" and we'll add you to the waitlist and give you 3 months for free!

2 months ago: Abandoning projects by week 4.

Today: Zero abandoned projects.

The shift?

Stopped re-planning.

Stopped re-researching.

Stopped re-debating decisions I already made.

The system:

- Claude thinks through problems

- NotebookLM remembers every decision

- Markdown tracks what ships

When someone joins my project now:

→ Point them to the .md files

→ Point them to the NotebookLM notebook

→ They're up to speed in 1 hour, not 1 week.

Reply "SYSTEM" and I'll DM you the exact workflow.

Claude Opus 4.6 was just released...

And it's now the default model in the #1 full stack vibe coding platform.

Vibecode uses the Claude Code harness, so expect to be blown away by the quality of the mobile apps and web apps that you create.

In celebration of 1,000,000 total apps created on vibecode, we're giving away a free month of Opus 4.6 for those who reply to this post.

Reply and we'll DM you credits 👇

Big news, @claudeai just got a huge upgrade today and I'm very happy to be a part of it. As of now, Claude can generate motion videos, demos, and animations.

We just launched Shipper as a tool that gives Claude the power of:

→ Generating videos

→ Prompting SaaS demos

→ Animating web illustrations

→ Building full-stack apps w/ payments

→ Connecting, buying and hosting custom domains

Claude's most powerful engines can now do all of that from a <10 word prompt, for as low as $0.15/message... And it takes minutes!

Simply go to Shipper, then ask Claude to "make a demo video explaining my app" or "build a complete saas that charges $29/mo"!

To celebrate the launch, if you comment "shipper", you will get free credits :)
