mattt

45 posts

@tom_doerr look at how we used it in HACK26, i think results are quite nice!

github.com/maedmatt/Dream…

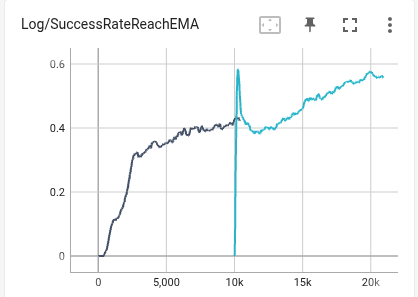

Most RL locomotion examples let the actor (the policy network that runs on the real robot) observe two ground truths that are not directly measured by hardware:

- linear velocity of the robot

- projected gravity (i.e. orientation of the robot)

The former can be inferred by a state estimator, typically a small neural network trained to predict velocity, while the latter can be computed from IMU data with a Madgwick AHRS or Kalman filter.
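For reference, once an AHRS filter has produced an orientation estimate, projected gravity is just the world "down" direction rotated into the body frame. A minimal sketch, assuming a unit quaternion in (w, x, y, z) order that rotates body coordinates into world coordinates, with world z pointing up. The function name and convention are assumptions for illustration, not taken from any particular framework:

```python
import math


def projected_gravity(quat_wxyz):
    """Rotate the world gravity direction (0, 0, -1) into the body frame.

    Assumes quat_wxyz is a unit quaternion (w, x, y, z) for the
    body-to-world rotation R, so the result is R^T @ (0, 0, -1),
    i.e. the negated third row of R.
    """
    w, x, y, z = quat_wxyz
    gx = -2.0 * (x * z - w * y)
    gy = -2.0 * (y * z + w * x)
    gz = -(1.0 - 2.0 * (x * x + y * y))
    return (gx, gy, gz)


# Upright robot: gravity points straight down the body z axis.
upright = projected_gravity((1.0, 0.0, 0.0, 0.0))

# Robot rolled 90 degrees about its x axis: gravity lies along body -y.
half = math.sqrt(0.5)
rolled = projected_gravity((half, half, 0.0, 0.0))
```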

Alternatively, it kind of makes sense to let the actor network learn to extract whatever internal representation it needs directly from raw sensor data, instead of using hand-designed estimators.

I removed base_lin_vel, similarly to @asimovinc's approach, as well as projected_gravity. Instead, I added the accelerometer data (which most RL examples do not seem to provide). I continue to give those ground truth variables to the critic as privileged info the actor can't see, which is known as an asymmetric actor-critic architecture.
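A rough sketch of what that observation split could look like. Only `base_lin_vel`, `projected_gravity` and the accelerometer term come from the post; every other term name, the vector sizes and the `build_obs` helper are assumptions made up for illustration:

```python
# Terms the real robot can measure directly (names other than the
# accelerometer are hypothetical placeholders).
ACTOR_TERMS = ["imu_accel", "imu_gyro", "joint_pos", "joint_vel", "last_action", "command"]

# Ground truth that only exists in simulation; the critic sees it, the actor does not.
PRIVILEGED_TERMS = ["base_lin_vel", "projected_gravity"]

CRITIC_TERMS = ACTOR_TERMS + PRIVILEGED_TERMS


def build_obs(state, terms):
    """Concatenate the requested terms of a state dict into one flat list."""
    obs = []
    for name in terms:
        obs.extend(state[name])
    return obs


# Toy state for a robot with 2 joints (values are arbitrary).
state = {
    "imu_accel": [0.0, 0.0, 9.81],
    "imu_gyro": [0.0, 0.0, 0.0],
    "joint_pos": [0.1, -0.1],
    "joint_vel": [0.0, 0.0],
    "last_action": [0.0, 0.0],
    "command": [0.5, 0.0, 0.0],
    "base_lin_vel": [0.4, 0.0, 0.0],
    "projected_gravity": [0.0, 0.0, -1.0],
}

actor_obs = build_obs(state, ACTOR_TERMS)    # fed to the policy, also on hardware
critic_obs = build_obs(state, CRITIC_TERMS)  # fed to the value function, training only
```

Since the critic is discarded at deployment, the privileged terms never need to be estimated on the real robot.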

Advantages:

1. Should reduce the sim2real gap, as there are fewer external components whose results may differ between the sim and the hardware

2. The actor can learn whichever intermediate representation works best for the task, not necessarily the ones we decided to infer for it

3. Fewer hand-tuned parameters

At least in simulation this seems to work great. It might be luck, trivial, or still plain wrong, but after 1500 iterations this was the best run yet in terms of reward, linear/angular velocity tracking, action std and more.

Asimov@asimovinc

my scaling law not gonna outrun cluster maintenance :(

C Zhang@ChongZitaZhang

Feeling the achievement 😃 These rewards are tuned for anymal (quadruped) and tron1 (biped lower body), but directly transfer to G1 (full humanoid) without tuning.

C Zhang@ChongZitaZhang

cannot make reward weights more beautiful

@ChongZitaZhang have you thought about crafting custom rules or skills to avoid making the agent guess on important implementation details?

day3: set up terrains and multi-GPU training -- smooth. then insane debugging. The agent writes many bugs that are hard to spot.

e.g., when randomizing the armature of joints, it resamples a value (0.8~1.2x) based on the current armature, not the default one. that soon escalates.
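A toy reproduction of that kind of bug, as a sketch only. The function names, the default armature value and the number of resets are assumptions; only the 0.8~1.2x range and the "current vs. default" mix-up come from the post:

```python
import random

SCALE_RANGE = (0.8, 1.2)


def randomize_buggy(current_armature, rng):
    """Scales the value left over from the previous reset, so errors compound."""
    return current_armature * rng.uniform(*SCALE_RANGE)


def randomize_fixed(default_armature, rng):
    """Scales the nominal value, so the result always stays within 0.8x..1.2x."""
    return default_armature * rng.uniform(*SCALE_RANGE)


def simulate(default_armature=0.01, resets=1000, seed=0):
    """Apply both variants over many episode resets and record the results."""
    rng = random.Random(seed)
    buggy = default_armature
    buggy_history, fixed_history = [], []
    for _ in range(resets):
        buggy = randomize_buggy(buggy, rng)  # multiplicative random walk
        fixed = randomize_fixed(default_armature, rng)
        buggy_history.append(buggy)
        fixed_history.append(fixed)
    return buggy_history, fixed_history


buggy_history, fixed_history = simulate()
```

The buggy variant drifts like a random walk in log space, so after enough resets the armature ends up far outside the intended range, while each individual sample still looks plausible in a code review.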

C Zhang@ChongZitaZhang

the vibe coding challenge: day1 MDP setup, day2 policy coded; it needs multiple rounds of human-agent interaction to fix things. Today won't be day 3, need to do other things, but I also just found many bad kp/kd and initialization designs in the common G1 setup that hurt exploration.

Lots of great stuff in here by @KyleMorgenstein

> Low Kp = feedforward torque, not position tracking. Enables full exploration

> Kp = max_torque / joint_RoM, D ≈ Kp/20

> High Kp pushes policy to torque limits, kills exploration

> Train 2-5x past apparent convergence for smooth deployable policies

> noise_std super important, must decrease and stabilize

> Start with perfect sim, add randomization one factor at a time

thehumanoid.ai/deployment-rea…
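The gain rule of thumb quoted above is easy to turn into a helper. A minimal sketch; the function name and the example joint numbers are made up for illustration and do not describe any specific robot:

```python
def pd_gains(max_torque, joint_rom, kd_ratio=20.0):
    """Apply the quoted heuristic: Kp = max_torque / joint_RoM, Kd ~= Kp / 20.

    max_torque is in N*m and joint_rom (range of motion) in rad, so Kp comes
    out in N*m/rad. With this Kp, a position error equal to the full range
    of motion commands exactly the maximum torque.
    """
    kp = max_torque / joint_rom
    kd = kp / kd_ratio
    return kp, kd


# Hypothetical joint: 88 N*m peak torque, 2.0 rad range of motion.
kp, kd = pd_gains(88.0, 2.0)
```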

@observie @KyleMorgenstein what’s the point of training 2-5x past convergence? if reward and policy are not changing (converged), then what’s changing?

mattt retweeted

cc hooks are seriously underrated. one thing i haven’t had time to fully explore yet, but i’m genuinely excited about, is pairing them with skills to gate edits behind the exact context claude needs to work effectively in a complex codebase, at the right moment

for example i’ve got a folder packed with pydantic data models that absolutely require deep, highly specific domain knowledge to update correctly. with a pre-tool-use hook, i can intercept any attempt to use the edit/write tool on that folder and require a skill invocation first

that way, either the main agent or a sub-agent can pull in the right domain rules and constraints before touching the code. this is super useful in large, complex codebases where different areas need very narrow, specialized knowledge for claude to be effective, and where dumping a pile of docs at planning time and hoping claude catches every nuance doesn’t always work
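The gating logic described above could be sketched roughly like this. The event fields (`tool_name`, `tool_input.file_path`) and the "exit code 2 blocks the call" convention reflect my understanding of Claude Code's hook interface and should be checked against the current docs; the `skill_loaded` flag is purely hypothetical, since the post does not say how a prior skill invocation would be detected:

```python
import os

GATED_TOOLS = {"Edit", "Write"}


def gate_edit(event, protected_dir, skill_loaded):
    """Decide whether a pre-tool-use event may proceed.

    Returns (exit_code, message): 0 lets the tool call through, 2 blocks it
    and carries a message telling the agent what to do first.
    """
    if event.get("tool_name") not in GATED_TOOLS:
        return 0, ""
    file_path = event.get("tool_input", {}).get("file_path", "")
    if not file_path:
        return 0, ""
    root = os.path.abspath(protected_dir)
    target = os.path.abspath(file_path)
    # True when target is the protected folder itself or anything below it.
    inside = os.path.commonpath([root, target]) == root
    if inside and not skill_loaded:
        return 2, "Load the data-model skill before editing files under " + root
    return 0, ""


# Hypothetical event for an edit inside the protected folder.
blocked = gate_edit(
    {"tool_name": "Edit", "tool_input": {"file_path": "/repo/models/user.py"}},
    protected_dir="/repo/models",
    skill_loaded=False,
)
```

In a real hook script this function would be fed the JSON event read from stdin, with the message written to stderr and the exit code passed to `sys.exit`.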

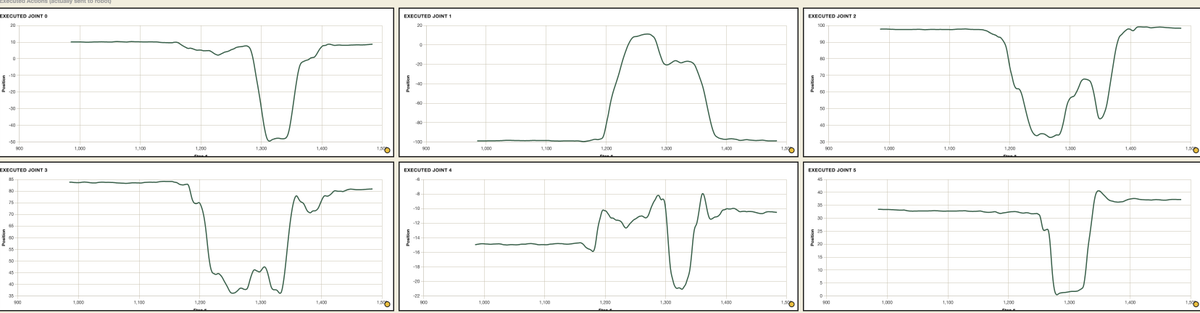

@jackvial89 What's controlling the robot? Are you filtering the actions predicted by the policy?

much smoother after applying a low-pass Butterworth filter with a 3 Hz cutoff. this filters out high-frequency small movements (aka noise) in the trajectory. it also naturally attenuates the signal, which makes the robot move slower, so I’ve added a bit of gain back to compensate and speed it up again after the filtering. still a bit jiggly, mainly in the shoulder pan, but it seems to be mostly mechanical at this point
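For anyone curious what such a filter looks like in a control loop, here is a dependency-free sketch of a streaming second-order Butterworth low-pass. The 3 Hz cutoff comes from the post; the filter order, the 60 Hz sample rate and the class name are assumptions, and the gain compensation mentioned above is left out because the post does not say how it was applied:

```python
import math


class ButterworthLowPass2:
    """Streaming second-order Butterworth low-pass (use one instance per joint)."""

    def __init__(self, cutoff_hz, sample_hz):
        w0 = 2.0 * math.pi * cutoff_hz / sample_hz
        cos_w0 = math.cos(w0)
        # Q = 1/sqrt(2) gives the maximally flat (Butterworth) response.
        alpha = math.sin(w0) / (2.0 * (1.0 / math.sqrt(2.0)))
        a0 = 1.0 + alpha
        # Biquad coefficients from the bilinear transform, normalized by a0.
        self.b = (
            (1.0 - cos_w0) / 2.0 / a0,
            (1.0 - cos_w0) / a0,
            (1.0 - cos_w0) / 2.0 / a0,
        )
        self.a = (-2.0 * cos_w0 / a0, (1.0 - alpha) / a0)
        self.x1 = self.x2 = self.y1 = self.y2 = 0.0

    def step(self, x):
        """Filter one sample and update the internal state."""
        y = (
            self.b[0] * x + self.b[1] * self.x1 + self.b[2] * self.x2
            - self.a[0] * self.y1 - self.a[1] * self.y2
        )
        self.x2, self.x1 = self.x1, x
        self.y2, self.y1 = self.y1, y
        return y


# Feed a 20 Hz wiggle sampled at 60 Hz through a 3 Hz filter.
lp = ButterworthLowPass2(cutoff_hz=3.0, sample_hz=60.0)
noisy = [math.sin(2.0 * math.pi * 20.0 * n / 60.0) for n in range(600)]
smoothed = [lp.step(v) for v in noisy]
```

A causal filter like this also adds lag, which is one reason slow motions pass through nearly unchanged while fast ones shrink.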

Jack Vial@jackvial89

60 Hz! real-time chunking on an so101 with LeRobot. Not looking too bad. Bit of jitter throughout but no mode switching across chunks

@elliotarledge But you don’t have access to the source code, do you? How can you optimise it?

timelapse #136 (16 hrs)

- getting more into polymarket/manifold

- finalizing the cuda book with manning

- optimizing battery materials discovery kernels (to learn about material discovery)

- got voice typing basically instant on my 3090

- burned $1200 in openrouter creds across my evals and opus 4.5 api spend lol

- got opus to optimize arc raiders performance on my linux rig
