Markus Klock

1.3K posts

@markusklock

I like networking, security and infrastructure in the world of computers. Network Consulting Engineer at Cisco. Founder and Head of Infrastructure at DatHost.

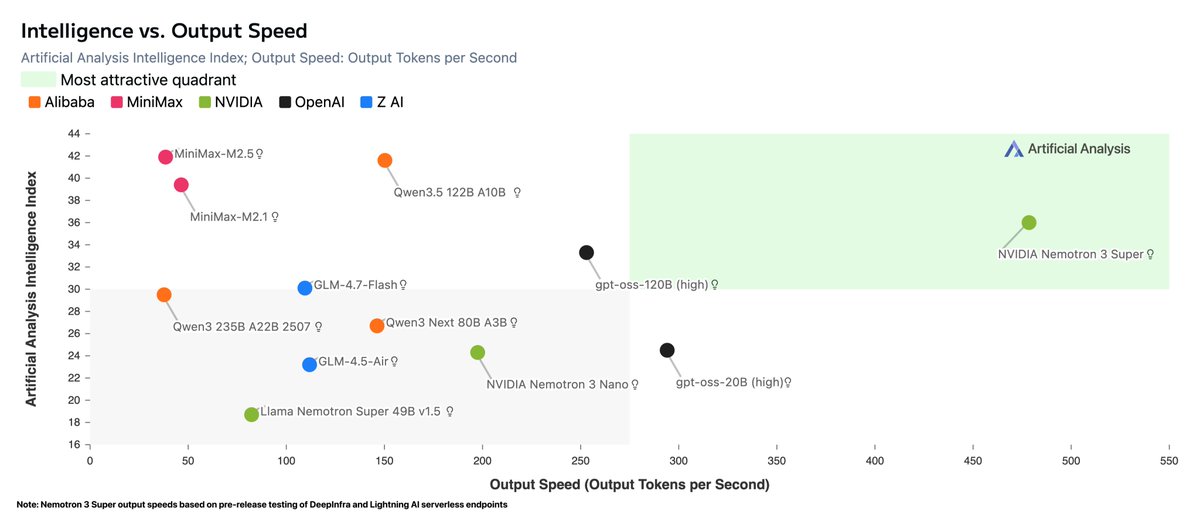

NVIDIA has released Nemotron 3 Super, a 120B (12B active) open weights reasoning model that scores 36 on the Artificial Analysis Intelligence Index with a hybrid Mamba-Transformer MoE architecture.

We were given access to this model ahead of launch and evaluated it across intelligence, openness, and inference efficiency.

Key takeaways

➤ Combines high openness with strong intelligence: Nemotron 3 Super performs strongly for its size and is substantially more intelligent than any other model with comparable openness

➤ Nemotron 3 Super scored 36 on the Artificial Analysis Intelligence Index, +17 points ahead of the previous Super release and +12 points from Nemotron 3 Nano. Compared to models in a similar size category, this places it ahead of gpt-oss-120b (33), but behind the recently-released Qwen3.5 122B A10B (42).

➤ Focused on efficient intelligence: we found Nemotron 3 Super to have higher intelligence than gpt-oss-120b while enabling ~10% higher throughput per GPU in a simple but realistic load test

➤ Supported today for fast serverless inference: providers including @DeepInfra and @LightningAI are serving this model at launch with speeds of up to 484 tokens per second

Model details

📝 Nemotron 3 Super has 120.6B total and 12.7B active parameters, along with a 1 million token context window and hybrid reasoning support. It is published with open weights and a permissive license, alongside open training data and methodology disclosure

📐 The model has several design features enabling efficient inference, including using hybrid Mamba-Transformer and LatentMoE architectures, multi-token prediction, and NVFP4 quantized weights

🎯 NVIDIA pre-trained Nemotron 3 Super in (mostly) NVFP4 precision, but moved to BF16 for post-training. Our evaluation scores use the BF16 weights

🧠 We benchmarked Nemotron 3 Super in its highest-effort reasoning mode ("regular"), the most capable of the model's three inference modes (reasoning-off, low-effort, and regular)
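The figures quoted in the post above can be cross-checked with a little arithmetic. The sketch below is illustrative only: the constants come from the post, the helper names are hypothetical, and the baseline scores are merely what the stated deltas imply, not numbers the post reports directly.

```python
# Figures quoted in the post; everything derived below is an inference.
TOTAL_PARAMS_B = 120.6   # total parameters, in billions
ACTIVE_PARAMS_B = 12.7   # active parameters per token, in billions
SUPER_SCORE = 36         # Artificial Analysis Intelligence Index score


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters that are active per token."""
    return active_b / total_b


def implied_baseline(score: int, delta: int) -> int:
    """Score of the model that `score` is said to exceed by `delta` points."""
    return score - delta


fraction = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
prev_super = implied_baseline(SUPER_SCORE, 17)  # "+17 ahead of the previous Super"
nano = implied_baseline(SUPER_SCORE, 12)        # "+12 from Nemotron 3 Nano"

print(f"active share of parameters: {fraction:.1%}")
print(f"implied previous Super score: {prev_super}")
print(f"implied Nemotron 3 Nano score: {nano}")
```

Roughly one in ten parameters is active per token, which is the property the post credits for the throughput advantage.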

The BSD journey continues. After the extremely smooth OpenBSD serial install, I tried FreeBSD. The installer was, somehow, even faster. But the real shock wasn't the install speed. It was what I found when I opened the package manager config in /etc/pkg/FreeBSD.conf.

This is what I saw:

FreeBSD: { url: "pkg+pkg.FreeBSD.org${ABI}/quarterly", mirror_type: "srv", signature_type: "fingerprints", fingerprints: "/usr/share/keys/pkg", enabled: yes }

It's... just simple. It's perfectly clear. I can see it uses variables like ${ABI}, which has a perfectly clear meaning, and that I'm on the "quarterly" branch. I instantly understand what's happening.

Now, contrast that with my time-tested Debian /etc/apt/sources.list:

deb deb.debian.org/debian/ bookworm main contrib non-free ...

deb security.debian.org bookworm-security main contrib ...

As a first-time reader (or even a 10-year user), what does "bookworm" mean? It's a codename. It tells me nothing about the version, the release, or its support status. I have to go Google it. Then I have to decode what "main," "contrib," "non-free," and "non-free-firmware" all mean relative to each other.

The FreeBSD config is transparent. The Debian one requires tribal knowledge. The simplicity is just refreshing. Has the Linux world lost a bit of this over time?
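Part of what makes the Debian line harder to read is that its fields are positional rather than labelled. A minimal sketch of that decoding, assuming the classic one-line sources.list layout (type, URI, suite, then components) and ignoring bracketed options and comments; `AptSource` and `parse_sources_line` are hypothetical names, not part of apt:

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AptSource:
    kind: str              # "deb" (binary packages) or "deb-src" (sources)
    uri: str               # repository location
    suite: str             # release codename or class, e.g. "bookworm"
    components: List[str]  # archive areas, e.g. "main", "contrib"


def parse_sources_line(line: str) -> AptSource:
    """Give names to the positional fields of a one-line apt source entry."""
    fields = line.split()
    if len(fields) < 3 or fields[0] not in ("deb", "deb-src"):
        raise ValueError(f"not a one-line apt source entry: {line!r}")
    kind, uri, suite, *components = fields
    return AptSource(kind, uri, suite, components)


# The entry as quoted in the post (URI shown exactly as it appears there).
entry = parse_sources_line("deb deb.debian.org/debian/ bookworm main contrib non-free")
print(entry)
```

Once the fields have names, the entry reads much like the FreeBSD block: the codename is simply the value of `suite`, and the trailing words are a list of archive areas.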

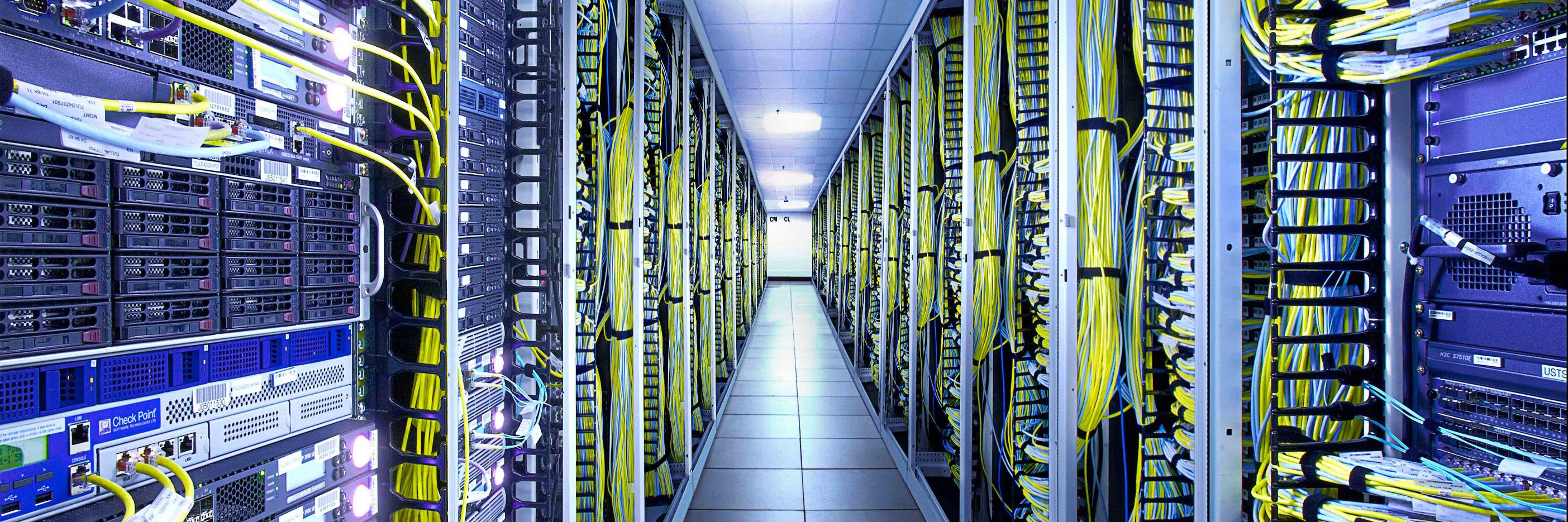

"Even a single millisecond matters in competitive gaming. Players can feel it. With DataPacket, we know the routing is optimized and any bad ping issues get solved." —Svante Boberg, CTO at DatHost We make sure that players worldwide in competitive titles can focus solely on the game while we handle the infrastructure behind it. Read the case study: datapacket.com/case-study/dat…