Mike Darché

494 posts

@mikedarche

Co-founder / CTO @oneofnone_io

New York, NY · Joined October 2012

1.3K Following · 388 Followers

@leerob @aakashgupta NGL as a power user I don’t really care who trained what. Composer 2 is great. Props to Leerob for jumping on this like a champ

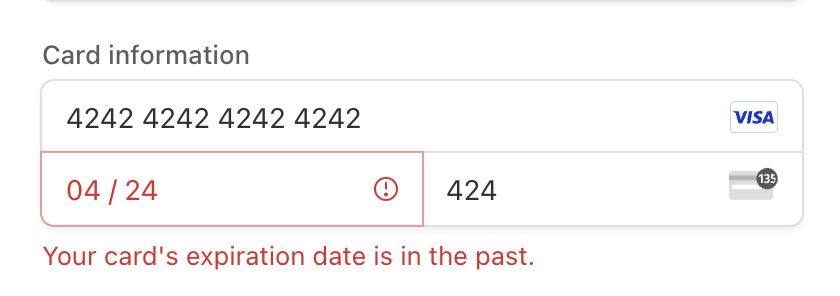

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.” Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast.

That’s Moonshot AI’s Kimi K2.5 with reinforcement learning appended. A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.

This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.

The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.

That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.

The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, 8x the threshold. The clause covers derivative works explicitly.
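
The threshold arithmetic in the paragraph above can be checked with a quick sketch. It uses only the figures stated in the post ($2 billion ARR, a $20 million monthly threshold) and assumes monthly revenue is simply ARR divided by 12; the variable and function names are illustrative, and none of the figures are independently verified here.

```python
# Figures as stated in the post; not independently verified.
ARR_USD = 2_000_000_000            # annual recurring revenue claimed in the post
THRESHOLD_MONTHLY_USD = 20_000_000  # monthly revenue threshold cited from the license


def monthly_revenue(arr_usd: float) -> float:
    """Approximate monthly revenue as ARR / 12 (an assumption, not reported data)."""
    return arr_usd / 12


def threshold_multiple(arr_usd: float, threshold_usd: float) -> float:
    """How many times the approximate monthly revenue exceeds the threshold."""
    return monthly_revenue(arr_usd) / threshold_usd


monthly = monthly_revenue(ARR_USD)
multiple = threshold_multiple(ARR_USD, THRESHOLD_MONTHLY_USD)
print(f"${monthly / 1e6:.0f}M per month, {multiple:.1f}x the threshold")
# prints "$167M per month, 8.3x the threshold"
```

Under that assumption the post's "roughly $167 million per month, 8x the threshold" holds up as a rounded figure.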

Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. The company worth 12x more took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier lab narrative.

Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions in training compute without disclosing the base was free.

The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.

If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of their model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation?

kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.

Harveen Singh Chadha@HarveenChadha

things are about to get interesting from here on

@DrewAustin @openclaw Learning this the hard way too. Openclaw is only as good as your PM skills and your rule system around it

I spend a lot of time wrestling with my @openclaw agent, and I rationalize it to myself because it's all learning, but it's annoying and both an opportunity and a pain in the ass.

@Shpigford @openclaw Loving MiniMax M2.5 for the main agent and for most coding tasks ($20/mo). I still pair with Claude Code for a second set of eyes on anything complex

what's the best low-cost model for use with @openclaw?

want to do some testing around running an instance as inexpensively as possible.

trying to find the right balance between cost and functionality.

Mike Darché reposted

I never run out of content to post anymore.

Built an automation that monitors 50+ news sources, scores articles for relevance, and writes social posts automatically.

It finds trending topics in my niche before they explode everywhere else.

Saves me 15-20 hours monthly and keeps me ahead of every trend.

Comment "NEWS" and I'll DM it to you (must be following)

@rauchg You are all very quick to hate on a dude whose contributions to the internet have empowered and improved the lives of millions.

I don't know Guillermo but he doesn't strike me as someone who stands for any of the shit being thrown at him in this thread.

🇺🇸 🇮🇱 🇦🇷

Enjoyed my discussion with PM Netanyahu on how AI education and literacy will keep our free societies ahead.

We spoke about AI empowering everyone to build software and the importance of ensuring it serves quality and progress.

Optimistic for peace, safety, and greatness for Israel and its neighbors.

Fired up to lock in a new 2-year deal with @CBSSports. Calling 15 games a year, appearing on Inside College Football studio show + CBS Sports HQ… and still on the call for every Army home game at West Point.

Calling Army football has been one of the best experiences of my career — fired up to keep it rolling.

We set the #0 priority for @vercel infrastructure for the year as: the fastest, most efficient, and observable build & functions compute

We shipped vercel.com/fluid for speed & efficiency. Very excited about the big strides the team is making in Observability:

Vercel Developers@vercel_dev

Vercel Observability now includes an overview page that provides a high-level view of your application's performance. vercel.com/changelog/over…

Mike Darché reposted

if you hate on @levelsio for the plane game you’re just a dork

Mike Darché reposted

In 2024 we met some amazing people, were involved in world renowned events, collaborated with both incredible creators and brands alike, and created a One of a kind experience that we are bringing to 2025. 🙌#oneofnone #connectedgoods #coonectedculture #1ofx #HappyNewYear

Mike Darché reposted

@jarrodwatts Always appreciate your summary posts, thank you! The future sounds bright for Ethereum if they stick to this roadmap 🚀

This is my first of many posts that explain what’s happening in the Ethereum ecosystem.

If you’d like to see more, please

- Consider following me

- Engage with the post below for the algo

x.com/jarrodWattsDev…

Jarrod Watts@jarrodwatts

Beam Chain was the biggest announcement at Devcon, introducing 9 major upgrades for Ethereum. But most people still don’t understand them... So, here are 9 tweets to explain the 9 upgrades: 🧵

Mike Darché reposted

Great seeing a One of None powered collab between @viin7estate and @siegelmanstable in the wild at the Cannes Lions International Festival of Creativity! Thank you @stagwell for hosting Sport Beach and allowing us to be a part of an amazing lineup of events! 🙌
