Bill Guschwan

2.5K posts

@mistagogue

dude who abides

Joined August 2009

4.2K Following · 659 Followers

@briansolis @nvidia Analogy fails. Travel is not located inside the human. Creativity is.

“The car did not make humans travel less.” – Jensen Huang, @NVIDIA on AI jobs disruption

Brian Solis @briansolis

@pmarca @Austan_Goolsbee Marc, please talk to some college students. It is existential, since AI is commodifying and abstracting creativity. Creative destruction of their dreams. It destroys the ritual of education that gives meaning to their lives. That is what these students are facing.

@bschermd It is deductive & protocol reasoning, not abductive learning. We match symptoms to guidelines rather than generating & testing explanatory models under uncertainty. Medicine is ontologically designed around pharmaceuticals & not ketosis, which requires systems thinking & behavioral transformation.

This is the same problem that software devs have with AI generated code. It can make infinite variations of the original intent so verification / lack of coherence is the bottleneck.

Jon Hernandez @JonhernandezIA

📁 Terence Tao, Fields Medal winner, says AI can already generate many mathematical proofs. The real bottleneck is verification. Creating ideas is becoming cheap. Knowing which ones are truly correct is still human work.

@rryssf Yidam practice solves this by design: emotional weighting + narrative + self-model with enforced dissolution.

That’s the missing safety mechanism for AI memory too.

@rryssf Without a disciplined double, memory captures the agent. With one, identity stays plastic without collapse. Psychology shows memory is reconstructive and goal-shaped.

The danger: when identity lacks an externalized, dissolvable form, it fuses with desire and becomes captured.

psychology solved the ai memory problem decades ago. we just haven't been reading the right papers.

your identity isn't something you have. it's something you construct. constantly. from autobiographical memory, emotional experience, and narrative coherence.

Martin Conway's Self-Memory System (2000, 2005) showed that memories aren't stored like video recordings.

they're reconstructed every time you access them, assembled from fragments across different neural systems. and the relationship is bidirectional: your memories constrain who you can plausibly be, but your current self-concept also reshapes how you remember. memory is continuously edited to align with your current goals and self-images. this isn't a bug. it's the architecture.

not all memories contribute equally. Rathbone et al. (2008) showed autobiographical memories cluster disproportionately around ages 10-30, the "reminiscence bump," because that's when your core self-images form.

you don't remember your life randomly. you remember the transitions. the moments you became someone new. Madan (2024) takes it further: combined with Episodic Future Thinking, this means identity isn't just backward-looking. it's predictive. you use who you were to project who you might become. memory doesn't just record the past. it generates the future self.

if memory constructs identity, destroying memory should destroy identity. it does. Clive Wearing, a British musicologist who suffered brain damage in 1985, lost the ability to form new memories. his memory resets every 30 seconds. he writes in his diary: "Now I am truly awake for the first time." crosses it out. writes it again minutes later.

but two things survived: his ability to play piano (procedural memory, stored in cerebellum, not the damaged hippocampus) and his emotional bond with his wife. every time she enters the room, he greets her with overwhelming joy. as if reunited after years. every single time. episodic memory is fragile and localized.

emotional memory is distributed widely and survives damage that obliterates everything else.

Antonio Damasio's Somatic Marker Hypothesis destroyed the Western tradition of separating reason from emotion.

emotions aren't obstacles to rational decisions. they're prerequisites.

when you face a decision, your brain reactivates physiological states from past outcomes of similar decisions. gut reactions. subtle shifts in heart rate. these "somatic markers" bias cognition before conscious deliberation begins.

the Iowa Gambling Task proved it: normal participants develop a "hunch" about dangerous card decks 10-15 trials before conscious awareness catches up. their skin conductance spikes before reaching for a bad deck. the body knows before the mind knows. patients with ventromedial prefrontal cortex damage understand the math perfectly when told. but keep choosing the bad decks anyway. their somatic markers are gone. without the emotional signal, raw reasoning isn't enough.

Overskeid (2020) argues Damasio undersold his own theory: emotions may be the substrate upon which all voluntary action is built.

put the threads together. Conway: memory is organized around self-relevant goals. Damasio: emotion makes memories actionable. Rathbone: memories cluster around identity transitions. Bruner: narrative is the glue.

identity = memories organized by emotional significance, structured around self-images, continuously reconstructed to maintain narrative coherence. now look at ai agent memory and tell me what's missing.

current architectures all fail for the same reason: they treat memory as storage, not identity construction. vector databases (RAG) are flat embedding space with no hierarchy, no emotional weighting, no goal-filtering. past 10k documents, semantic search becomes a coin flip. conversation summaries compress your autobiography into a one-paragraph bio. key-value stores reduce identity to a lookup table. episodic buffers give you a 30-second memory span, which as the Wearing case shows, is enough to operate moment-to-moment but not enough to construct identity.

five principles from psychology that ai memory lacks.

first, hierarchical temporal organization (Conway): human memory narrows by life period, then event type, then specific details. ai memory is flat, every fragment at the same level, brute-force search across everything. fix: interaction epochs, recurring themes, specific exchanges, retrieval descends the hierarchy.

second, goal-relevant filtering (Conway's "working self"): your brain retrieves memories relevant to current goals, not whatever's closest in embedding space. fix: a dynamic representation of current goals and task context that gates retrieval.

third, emotional weighting (Damasio): emotionally significant experiences encode deeper and retrieve faster. ai agents store frustrated conversations with the same weight as routine queries. fix: sentiment-scored metadata on memory nodes that biases future behavior.

fourth, narrative coherence (Bruner): humans organize memories into a story maintaining consistent self across time. ai agents have zero narrative, each interaction exists independently. fix: a narrative layer synthesizing memories into a relational story that influences responses.

fifth, co-emergent self-model (Klein & Nichols): human identity and memory bootstrap each other through a feedback loop. ai agents have no self-model that evolves. fix: not just "what I know about this user" but "who I am in this relationship."
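The first three fixes above (retrieval that descends a hierarchy, goal gating, emotional weighting) can be sketched in a few lines of Python. This is only an illustrative toy, not anything the thread specifies: the `Memory` fields, the tag-overlap gate, the `emotion` score in [0, 1], and the `emotion_weight` parameter are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    epoch: str            # coarse interaction epoch (hypothetical label)
    theme: str            # recurring theme inside the epoch
    text: str             # the specific exchange
    tags: set = field(default_factory=set)  # keywords used for goal gating
    emotion: float = 0.0  # assumed sentiment intensity in [0, 1]


def retrieve(memories, epoch, goal_tags, theme=None, top_k=2, emotion_weight=0.5):
    """Narrow by epoch (and optionally theme), gate by goals, rank with emotion."""
    # Step 1: hierarchical narrowing, epoch first and then theme if given.
    candidates = [m for m in memories if m.epoch == epoch]
    if theme is not None:
        candidates = [m for m in candidates if m.theme == theme]
    # Step 2: goal gating, drop memories sharing no tag with the current goals.
    gated = [m for m in candidates if m.tags & goal_tags]

    # Step 3: rank by goal overlap plus a weighted emotional intensity.
    def score(m):
        return len(m.tags & goal_tags) + emotion_weight * m.emotion

    return sorted(gated, key=score, reverse=True)[:top_k]


memories = [
    Memory("onboarding", "billing", "user asked how invoices work", {"billing"}, 0.1),
    Memory("onboarding", "billing", "user was frustrated by a double charge",
           {"billing", "refund"}, 0.9),
    Memory("onboarding", "setup", "user configured notifications", {"settings"}, 0.2),
    Memory("renewal", "billing", "user upgraded the plan", {"billing"}, 0.4),
]

results = retrieve(memories, epoch="onboarding", goal_tags={"billing", "refund"})
for m in results:
    print(m.theme, "|", m.text)
```

In this toy data the frustrated exchange ranks first because it matches more goal tags and carries a higher emotion score. A real system would presumably replace the tag overlap with a learned relevance model.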

the fundamental problem isn't technical. it's conceptual. we've been modeling agent memory on databases. store, retrieve, done. but human memory is an identity construction system. it builds who you are, weights what matters, forgets what doesn't serve the current self, rewrites the narrative to maintain coherence. the paradigm shift: stop building agent memory as a retrieval system. start building it as an identity system.

every component has engineering analogs that already exist.

hierarchical memory = graph databases with temporal clustering.

emotional weighting = sentiment-scored metadata.

goal-relevant filtering = attention mechanisms conditioned on task state.

narrative coherence = periodic summarization with consistency constraints.

self-model bootstrapping = meta-learning loops on interaction history.
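As a rough illustration of the last two analogs, here is a minimal Python sketch of a self-model that drifts with interaction history and a narrative layer that only accepts summaries consistent with it. Everything here is an assumption for the example: the `SelfModel` class is hypothetical, an exponential moving average stands in for "meta-learning loops", and a simple sign check stands in for "consistency constraints".

```python
from dataclasses import dataclass, field


@dataclass
class SelfModel:
    """Toy 'who I am in this relationship' state (hypothetical structure)."""
    traits: dict = field(default_factory=dict)     # trait name -> value in [-1, 1]
    narrative: list = field(default_factory=list)  # accepted summary lines

    def update(self, trait, observed, rate=0.3):
        # Exponential moving average: each observation nudges the trait value.
        old = self.traits.get(trait, 0.0)
        self.traits[trait] = (1 - rate) * old + rate * observed

    def consolidate(self, summary, trait, claimed_sign):
        """Append a summary only if its sign agrees with the current trait value."""
        current = self.traits.get(trait, 0.0)
        consistent = current == 0.0 or (current > 0) == (claimed_sign > 0)
        if consistent:
            self.narrative.append(summary)
        return consistent


model = SelfModel()
for observed in [1.0, 1.0, 0.5]:  # three interactions that read as formal
    model.update("formal_tone", observed)

accepted = model.consolidate("I keep a formal tone with this user", "formal_tone", +1)
rejected = model.consolidate("I joke around with this user", "formal_tone", -1)
print(accepted, rejected, model.narrative)
```

The second summary is refused because it contradicts the trait value built up from history. That is the feedback loop in miniature: memory shapes the self-model, and the self-model filters what enters the narrative.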

the pieces are there. what's missing is the conceptual framework to assemble them. psychology provides that framework.

the path forward isn't better embeddings or bigger context windows. it's looking inward. Conway showed memory is organized by the self, for the self. Damasio showed emotion is the guidance system. Rathbone showed memories cluster around identity transitions. Bruner showed narrative holds it together.

Klein and Nichols showed self and memory bootstrap each other into existence. if we're serious about building agents with functional memory, we should stop reading database architecture papers and start reading psychology journals.

@aakashgupta Homer’s Iliad describes this slowdown as “aidos,” the capacity to pause when desire wants to decide for you. Meditation is necessary because it trains the pause. It is not sufficient because aidos forms when you pause in situations where status, certainty, and control are in play.

Your nervous system speed-matches to your behavior all day long.

Every action you take sends a signal to your brainstem about how urgent your environment is. When you rush through your morning routine, scrunch over a laptop, speed-walk between meetings, your sympathetic nervous system reads that motor pattern as “threat environment, stay activated.” The cortisol stays elevated. The shallow breathing continues. Your body is running threat detection protocols all day because your movement patterns keep telling it to.

What she’s doing in this video is the opposite. Slow makeup application. Relaxed posture instead of desk scrunching. Unhurried walking with coffee. Each of those is a separate signal to the brainstem that says “no predator, no deadline, no emergency.”

Huberman’s lab at Stanford identified something specific here. Your interoceptive system, the part of your brain that monitors your body’s internal state, takes cues from your voluntary motor behavior to calibrate arousal levels. Move fast, breathe fast, hunch protectively, and your brain interprets your own body as being in danger. Slow your motor output across every domain and your brain literally recalibrates the baseline.

This is why “just meditate for 10 minutes” fails for most people. You meditate, then you sprint through your morning, hunch at your desk for 8 hours, and eat lunch in 4 minutes. Ten minutes of stillness can’t override 15 hours of urgency signaling.

This woman is running a full-day protocol where every motor pattern sends the same message: safe. And her nervous system is doing the only thing it can do with consistent input. It believes her.

Wholesome Side of 𝕏 @itsme_urstruly

When you realise that going slow is the secret to a regulated nervous system.

@Madisonkanna It’s visceral disgust at capitalism’s abstraction. Capital moves from labor → land → attention → imagination; value is extracted & meaning dissolved. It is a collapse of symbolic ground, & you get refactored into a reusable component with no docstring explaining why you exist.

as a software engineer, i feel a real loss of identity right now.

for a long time i defined myself in part by the act of writing code. the pride in a hard-earned solution was part of who i was. now i watch AI accomplish in seconds what took me hours. i find myself caught between relief and mourning, awe and anxiety. the craft that shaped me is suddenly eclipsed by a machine. who am i now?

@Hesamation Vickers’s Appreciative System suggests replacing goals with feedback models: maintain desired relationships and elude undesired ones. Facts are evaluated against norms, a process that leads to taking regulatory action & modifying norms, so future experiences are evaluated differently.

I think I have accidentally discovered a Myers Briggs but for work.

I like seeing how many people are finding out why they prefer certain types of work (I will link some discoveries in this thread).

I will keep exploring/ expanding on this.

roobz 🌙 🌸 @tishray

@thedankoe What Tibetan Buddhism does thru:

•Refuge

•Confession

•Mind training

•View cultivation

Koe does thru:

Identity rupture

Anti-vision

Feedback systems

Meaningful constraint

Both have same aim:

Freedom from unconscious identity loops & suffering they generate.

Kan anticipated that modern AI would arise from the right kind of distributed infrastructure, capable of sensing, relating, and recombining meaning at scale. His product Infrasearch was “reasoning over latent space.” Kan saw that search would evolve into synthesis long before LLMs existed.

DJ Damon @dJ_damon_

Gene Kan apparently died of a self-inflicted gunshot wound in 2002, shortly after record labels came after Napster. LimeWire was powered by Gene's work.

@APompliano @PromptLLM Like this passage. The Tibetan “Karmapa” literally means “the one who acts.” And he knows through wisdom. But he also acts for the benefit of all beings (compassion). Perhaps compassion would improve this passage.

Apple JUST quietly announced something that’s a lot BIGGER than it looks: "the Mini Apps Partner Program"

Apple is admitting that the future of software is embedded, lightweight, vertical mini-apps distributed inside bigger apps.

For founders who want to make $$ building apps:

1. Apple just legitimized the “superapp” model for the West.

China has WeChat mini-programs. India has PhonePe Switch. The West has… nothing. Apple just opened the door. You can now run HTML/JS mini-apps inside a native host and earn 85% on qualifying purchases. That’s Apple-sanctioned platform piggybacking.

2. Distribution arbitrage becomes real again.

You don’t need to convince users to download your app. Just partner with a host app and drop in a mini-app. This is a cheat code for early traction. Think: travel apps hosting niche tools, fitness apps hosting mini workouts, marketplaces hosting micro-utilities.

3. Apple is creating a new economy layer: “embedded SaaS.”

Imagine: CRM mini-apps inside vertical tools. Math solver mini-apps inside education apps. Calendar mini-apps inside productivity apps. The TAM for tools that don’t need standalone installs just went vertical.

4. Developers get an 85% revenue share.

This is Apple basically saying: “We want this ecosystem to grow, and we’re willing to cut our take rate.” When Apple lowers its cut, I pay attention because they see a platform shift coming.

5. AI makes this 10× more important.

LLM-powered micro-apps (calculators, planners, agents, coaches, niche utilities) are tiny by design. They’re perfect mini-apps. Apple just created infrastructure for AI-native micro utilities to live inside bigger apps with built-in commerce.

6. Host apps become new “distribution landlords.”

If you own an app with traffic, you become a platform. You can host mini-apps, take a cut, and build a developer ecosystem around you.

It’s a new monetization model for existing apps with audiences.

7. This unlocks a wave of second-order opportunities.

- Agencies helping apps become mini-app hosts

- Mini-app dev shops

- “Shopify for mini-apps” toolkits

- Mini-app marketplaces

- Analytics for mini-app performance

- Discovery engines for mini-apps

- I'll be dropping mini app ideas on @ideabrowser and @startupideaspod

TL;DR:

Apple just turned every high-traffic app into a potential superapp and every indie developer into a potential platform partner.

The App Store is becoming modular, composable, and layered. The next decade of consumer apps will look less like standalone products and more like ecosystems stitched together with mini-apps.

This is quietly one of the biggest distribution unlocks in years.

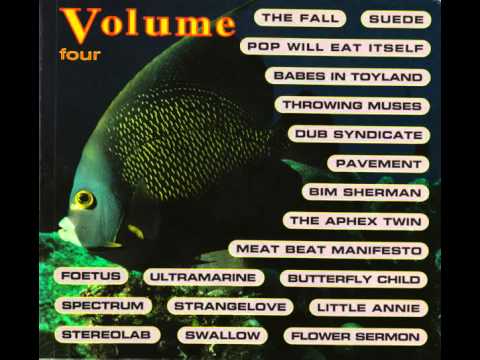

Volume Four - Butterfly Child - We The Inspired youtu.be/thADaTsOoy0?si… via @YouTube
