Mohamed Abdelfattah

@mohsaied

Assistant Prof @CornellECE and cofounder/Chief Science Officer at @mako_dev_ai. At the intersection of machine learning and hardware. Father. Muslim.

@klazizpro was meeting @mohsaied at @mako_dev_ai @cornell_tech

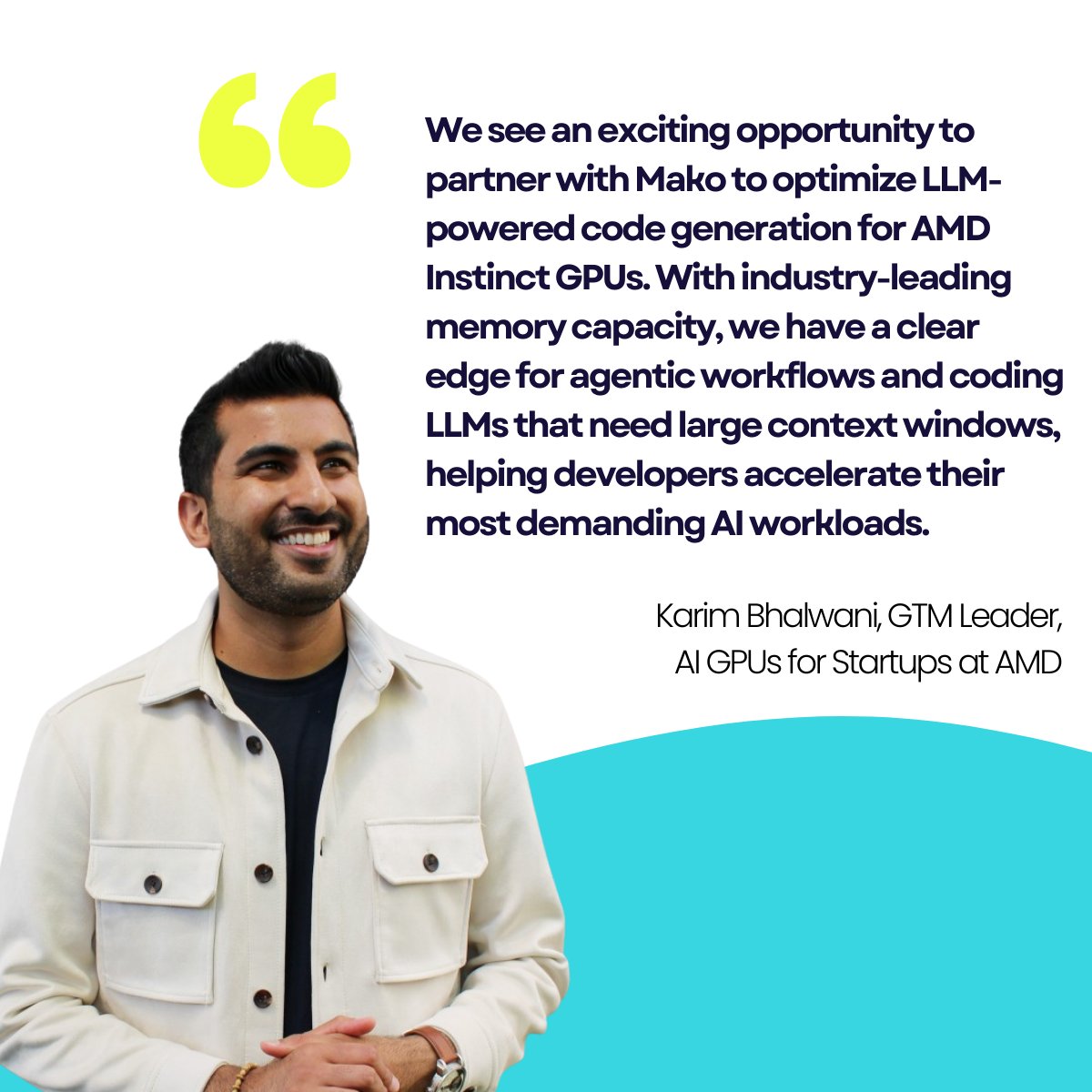

We’re thrilled to welcome @mako_dev_ai to the M13 portfolio. Mako is changing how AI models run at scale. For years, NVIDIA’s CUDA has been the default programming interface for GPU workloads, giving developers power but also locking them into one way of working. Now, as AI hardware diversifies, from AMD to custom accelerators, the industry needs a performance layer that works everywhere. That’s what Mako delivers. Co-founders @wAIeedatallah, @mohsaied, and Lukasz Dudziak are building AI-native infrastructure that automates GPU kernel generation and tuning. This lets developers deploy models faster, hit better price-performance, and run on any GPU with no rewrites and no hand-tuning. It’s like what Kubernetes did for the cloud, but for AI compute. M13 led Mako’s $8.5M+ seed round with @neo, @flybridge, and angel investors including AI pioneer Jeff Dean. We’re excited to be part of infrastructure history in the making. For more about Mako: @ChristineMHall talks to the Mako team and @kalomarNYC about its bold vision for GPU freedom. m13.co/article/meet-m…

MakoGenerate now supports custom problems, meaning you can generate #CUDA or #Triton kernels for any @PyTorch reference code you have! Let's walk through an example using @GPU_MODE's latest contest: the Triangle Multiplicative Update (TriMul) module.
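
For readers unfamiliar with TriMul, here is a minimal NumPy sketch of the "outgoing" triangle multiplicative update as described in the AlphaFold2 paper's supplementary material, i.e. the kind of reference code one might hand to a kernel generator. This assumes the contest module follows that formulation; it is not GPU_MODE's actual reference implementation. Biases, learned LayerNorm parameters, masking, and the "incoming" variant are omitted, and the weight names (`W_a`, `W_a_gate`, `W_out`, etc.) and the helper `random_params` are hypothetical. The `einsum` is the O(N³) contraction over the third node `k` that makes this module expensive and a natural target for a fused CUDA or Triton kernel.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize over the channel (last) axis; learned scale/shift omitted in this sketch.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def triangle_sum(a, b):
    # Outgoing-edge update: out[i, j, h] = sum_k a[i, k, h] * b[j, k, h]
    return np.einsum("ikh,jkh->ijh", a, b)

def trimul_outgoing(z, p):
    """z: pair representation (N, N, c); p: dict of hypothetical weights (no biases)."""
    z = layer_norm(z)
    a = sigmoid(z @ p["W_a_gate"]) * (z @ p["W_a"])  # gated projection, (N, N, h)
    b = sigmoid(z @ p["W_b_gate"]) * (z @ p["W_b"])  # gated projection, (N, N, h)
    g = sigmoid(z @ p["W_out_gate"])                 # output gate, (N, N, c)
    return g * (layer_norm(triangle_sum(a, b)) @ p["W_out"])  # (N, N, c)

def random_params(c, h, rng):
    # Hypothetical random weights, only for exercising the shapes.
    return {
        "W_a": rng.standard_normal((c, h)),
        "W_a_gate": rng.standard_normal((c, h)),
        "W_b": rng.standard_normal((c, h)),
        "W_b_gate": rng.standard_normal((c, h)),
        "W_out": rng.standard_normal((h, c)),
        "W_out_gate": rng.standard_normal((c, c)),
    }

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    z = rng.standard_normal((16, 16, 8))
    out = trimul_outgoing(z, random_params(c=8, h=4, rng=rng))
    print(out.shape)  # (16, 16, 8)
```

Everything except the `einsum` is pointwise or a small per-edge matrix multiply, so a generated kernel would typically fuse the gating and normalization around the triangle contraction.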

Seeing text-to-text regression work for Google’s massive compute cluster (billion $$ problem!) was the result that finally convinced us we can reward-model literally any feedback from the world. Paper: arxiv.org/abs/2506.21718 Code: github.com/google-deepmin… Just train a simple encoder-decoder from scratch to read the cluster’s complex state as text, then generate numeric tokens. We’re also seeing strong results on classic tabular data and "exotic" inputs like graphs, system logs, and even code snippets. Feature engineering will no longer exist! Authors: @yashakha, Bryan Lewandowski, Cheng-Hsi Lin, Adrian N. Reyes, Grant C. Forbes, Arissa Wongpanich, Bangding Yang, @mohsaied, @SagiPerel, @XingyouSong
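
The idea of "generating numeric tokens" can be sketched as a fixed-length sign/mantissa/exponent encoding, so that a decoder predicts a regression target one token at a time like ordinary text. This is a hypothetical scheme chosen for illustration; the tokenization used in the paper may differ, and the names `encode_number` / `decode_number` and token spellings such as `<+>` and `<E-1>` are assumptions.

```python
import math

def encode_number(x: float, digits: int = 4) -> list[str]:
    """Hypothetical scheme: [sign, `digits` mantissa digits, exponent]. Assumes finite x."""
    if x == 0:
        return ["<+>"] + ["<0>"] * digits + ["<E0>"]
    sign = "<+>" if x > 0 else "<->"
    mag = abs(x)
    # Pick the exponent so the mantissa is an integer with exactly `digits` digits.
    exp = math.floor(math.log10(mag)) - (digits - 1)
    mantissa = round(mag / 10 ** exp)
    if mantissa >= 10 ** digits:  # rounding carried into an extra digit, e.g. 9.99996
        mantissa //= 10
        exp += 1
    return [sign] + [f"<{d}>" for d in str(mantissa)] + [f"<E{exp}>"]

def decode_number(tokens: list[str]) -> float:
    """Invert encode_number: sign * mantissa * 10**exp."""
    sign = 1 if tokens[0] == "<+>" else -1
    mantissa = int("".join(t[1:-1] for t in tokens[1:-1]))
    exp = int(tokens[-1][2:-1])
    return sign * mantissa * 10 ** exp

if __name__ == "__main__":
    tokens = encode_number(123.456)
    print(tokens)                 # ['<+>', '<1>', '<2>', '<3>', '<5>', '<E-1>']
    print(decode_number(tokens))  # approximately 123.5
```

A fixed token count keeps every target the same length for the decoder; the trade-off is that precision is capped at `digits` significant figures.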

The latest research paper from @eyelinestudios, FlashDepth, has been accepted to the International Conference on Computer Vision (#ICCV2025). Our model produces accurate, high-resolution depth maps from streaming videos in real time and is built entirely on open-source models and data. We hope it will be applied to various online applications, like robotics and on-set video composition. It has already been integrated into a few internal tools for visual effects and real-time depth estimation, segmentation, and matting tasks. Congrats to the team: @gene_ch0u, @wenqi_xian, @stevenygd, @mohsaied, @Bharathharihar3, @Jimantha, @realNingYu, @debfx! All models and code have been released: GitHub: github.com/Eyeline-Resear… Project page: eyeline-research.github.io/FlashDepth/ Paper: arxiv.org/abs/2504.07093