code viber.

121.4K posts

@nocodeonlyvibes

shit posting on twitter. twitter shitter, fact checker. Not american.

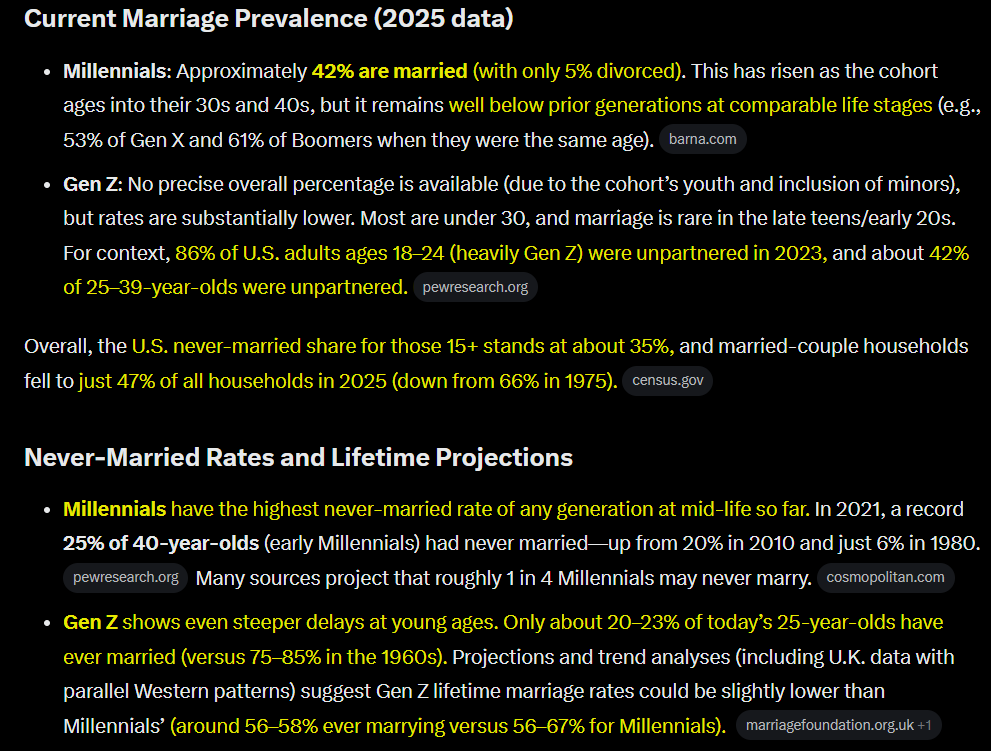

Checking in on the status of Wokeism, and it turns out Leftist academics are unironically saying that society needs to intentionally “marginalize men” even more to supposedly solve the birth rate. History shows us that what’s normalized in academia becomes publicly mainstream within a generation, and there is no sign the ship is turning or even slowing down.

Self-hosting a database is better than buying a managed one on nearly every dimension except for operational burden. We way over-indexed on that, and I think we're about to witness a renaissance of self-hosted databases.

I'm saying it again! Kick Turkey out of NATO!

While the surviving IRGC Leaders are trapped like drowning rats in a sewage pipe, Iran’s creaking oil industry is starting to shut in production thanks to the U.S. BLOCKADE. Pumping will soon collapse. GASOLINE SHORTAGES IN IRAN NEXT!

(WCTW) The Oil Market Breaking Point Is Here. We made it public. hfir.com/p/wctw-the-oil…

23 years ago today, American activist Rachel Corrie was crushed to death by an Israeli bulldozer as she tried to stop the demolition of a Palestinian home in Gaza.

German Chancellor Friedrich Merz, speaking to schoolchildren today, warned that the U.S. does not have an exit strategy for the Iran war and “an entire nation is being humiliated by the Iranian leadership.” -Reuters

Mark Levin says it’s time to ban “Nazis” and “Jihadis” from social media, saying free speech has gone too far and is “overprotected.” He wants them immediately removed from all platforms because they are “inciting” violence. Levin is trying to put people who disagree with his politics in the same category as an assassin. “Get off our platform.”

But instead of arguing for a return of social norms around marriage, the authors say that the solution to the fertility crisis lies in "further marginalization of men" (yes, that's in the title!) and getting single women to have more kids. Does that seem likely to work? 5/5

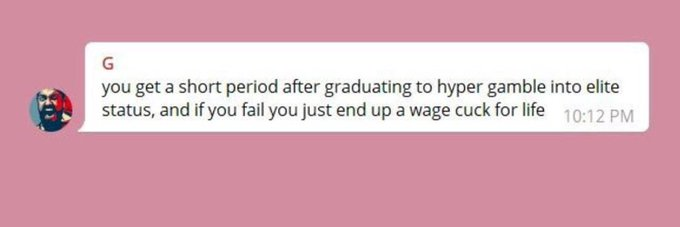

You should expect to see a lot of this as Zoomers turn 30 and up the ante on the LARP that they're the first generation to get screwed by their elders. It makes no sense for Millennials and Zoomers to be at odds because we have all the same problems, caused by the same people.

The problem is not that Turkey stands outside Europe. The problem is that Ankara learned to live inside Europe’s rooms while cutting holes in its walls. Cyprus was not an exception. The Aegean is not a quarrel. Migration is not a bargaining chip. Hamas, Libya, Syria, Russian energy and the Black Sea are not separate files. They are one perimeter. Turkey does not seek a place in Europe. It seeks leverage over Europe. Europe does not need another seminar on Turkish identity. It needs the courage to call the pattern by its name.

Rubio admits Iran controls the Strait: “If what they mean by opening the straits is, yes, the straits are opened as long as you coordinate with Iran, get our permission or we’ll blow you up, and you pay us. That’s not opening the straits. Those are international waterways. They cannot normalize, nor can we tolerate them trying to normalize, a system in which the Iranians decide who gets to use an international waterway, and how much you have to pay them to use it.”