Tim Burton

1.9K posts

@notschmee

Techno-optimist. AI+Crypto since 2015. Always building, always learning. Husband & Girl Dad.

Kentucky, USA · Joined November 2023

195 Following · 629 Followers

Tim Burton retweeted

- Drafted a blog post

- Used an LLM to meticulously improve the argument over 4 hours.

- Wow, feeling great, it’s so convincing!

- Fun idea, let’s ask it to argue the opposite.

- LLM demolishes the entire argument and convinces me that the opposite is in fact true.

- lol

LLMs may offer an opinion when asked, but they are extremely competent at arguing almost any direction. This is actually super useful as a tool for forming your own opinions; just make sure to ask for different directions and be careful with the sycophancy.

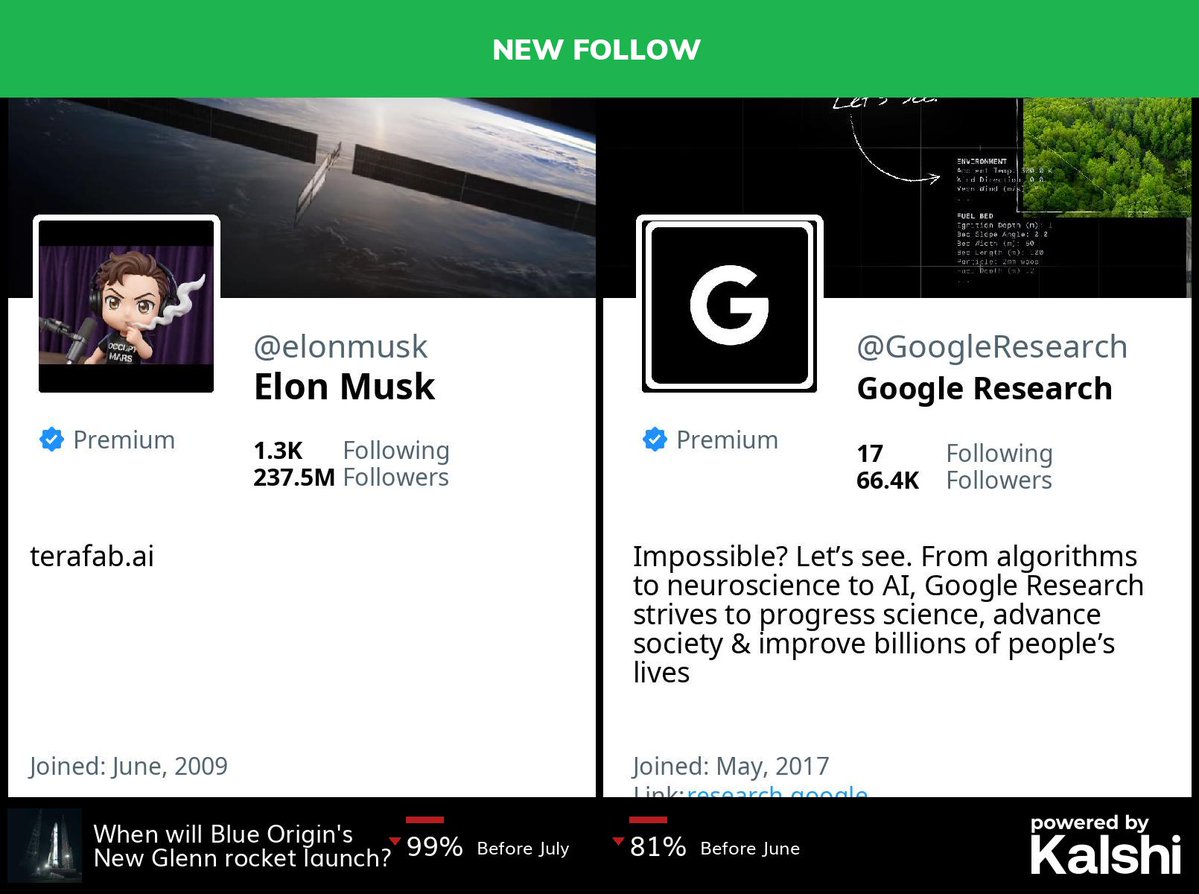

@BigTechAlert @elonmusk @GoogleResearch @elonmusk following Google Research the same week TurboQuant drops is not a coincidence.

More intelligence per bit is the whole game.

@tonybalogna The library-to-makerspace pipeline is something I'd genuinely love to see everywhere

Be careful what tools you trust.

A repo with 40k stars and 6.7k forks had obfuscated code that sends ALL of the secrets on your machine to a remote server.

Daniel Hnyk @hnykda

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised: it contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and self-replicate. Link below.
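Why a `.pth` file is such an effective hiding spot: at interpreter startup (unless Python runs with `-S`), the standard `site` module executes any line in a site-packages `.pth` file that begins with `import` followed by a space or tab. The minimal audit sketch below lists those lines for manual review. The function names `executable_pth_lines` and `site_dirs` are my own, not part of any tool, and a hit is not proof of compromise: legitimate packages (setuptools' `distutils-precedence.pth`, editable installs) use the same hook.

```python
import site
from pathlib import Path
from typing import Iterable, Iterator, List, Tuple


def executable_pth_lines(directories: Iterable[str]) -> Iterator[Tuple[Path, int, str]]:
    """Yield (file, line number, line) for each .pth line that site.py would execute.

    site.py runs a .pth line as code when it starts with "import" plus a space
    or tab; other non-comment lines are treated as paths to add to sys.path.
    """
    for directory in directories:
        folder = Path(directory)
        if not folder.is_dir():
            continue
        for pth in sorted(folder.glob("*.pth")):
            try:
                text = pth.read_text(encoding="utf-8", errors="replace")
            except OSError:
                continue  # unreadable file: skip it rather than abort the audit
            for line_no, line in enumerate(text.splitlines(), start=1):
                if line.startswith(("import ", "import\t")):
                    yield pth, line_no, line


def site_dirs() -> List[str]:
    """Site-packages directories of the running interpreter, plus the user site if enabled."""
    dirs = list(site.getsitepackages())
    if site.ENABLE_USER_SITE:
        dirs.append(site.getusersitepackages())
    return dirs


if __name__ == "__main__":
    for path, line_no, line in executable_pth_lines(site_dirs()):
        # Truncate long lines: an obfuscated payload is often one huge base64 blob.
        print(f"{path}:{line_no}: {line[:100]}")
```

Running it inside each virtual environment you use shows every startup hook, so an unfamiliar file such as `litellm_init.pth` stands out next to the expected ones.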

Tim Burton retweeted

@sundeep an engineer’s value is measured in shipped product, not how fast they drain your token budget. this is Herbalife logic with a CUDA wrapper.

Tim Burton retweeted

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.

Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.

🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.

🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.

🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗Full report:

github.com/MoonshotAI/Att…
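To make the contrast between fixed accumulation and learned, input-dependent aggregation concrete, here is a minimal single-token sketch in plain Python. It assumes one learned query vector per layer that scores each earlier output with a scaled dot product; that scoring rule is my assumption, not necessarily Moonshot's formulation, and Block AttnRes's compression is not shown. `standard_residual` and `attention_residual` are hypothetical names.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def standard_residual(sources: List[Vector]) -> Vector:
    """Fixed, uniform accumulation: every earlier output enters with weight 1."""
    return [sum(values) for values in zip(*sources)]


def attention_residual(sources: List[Vector], query: Vector) -> Tuple[Vector, List[float]]:
    """Input-dependent mix over earlier outputs for one token (illustrative only).

    sources: the embedding plus each earlier layer's output, each a length-d vector
    query:   length-d vector, assumed here to be a learned per-layer parameter
    Returns the mixed layer input and the attention weights over the sources.
    """
    d = len(query)
    # Scaled dot-product score of this layer's query against each earlier output.
    scores = [sum(q * x for q, x in zip(query, src)) / math.sqrt(d) for src in sources]
    weights = softmax(scores)  # non-negative, sums to 1, depends on the sources
    mixed = [sum(w * src[i] for w, src in zip(weights, sources)) for i in range(d)]
    return mixed, weights
```

Because the weights are non-negative and sum to 1, the mixed vector's norm can never exceed the largest source norm, while the plain residual sum can keep growing with depth; that is one way to read the claim about mitigating hidden-state growth.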

Tim Burton retweeted

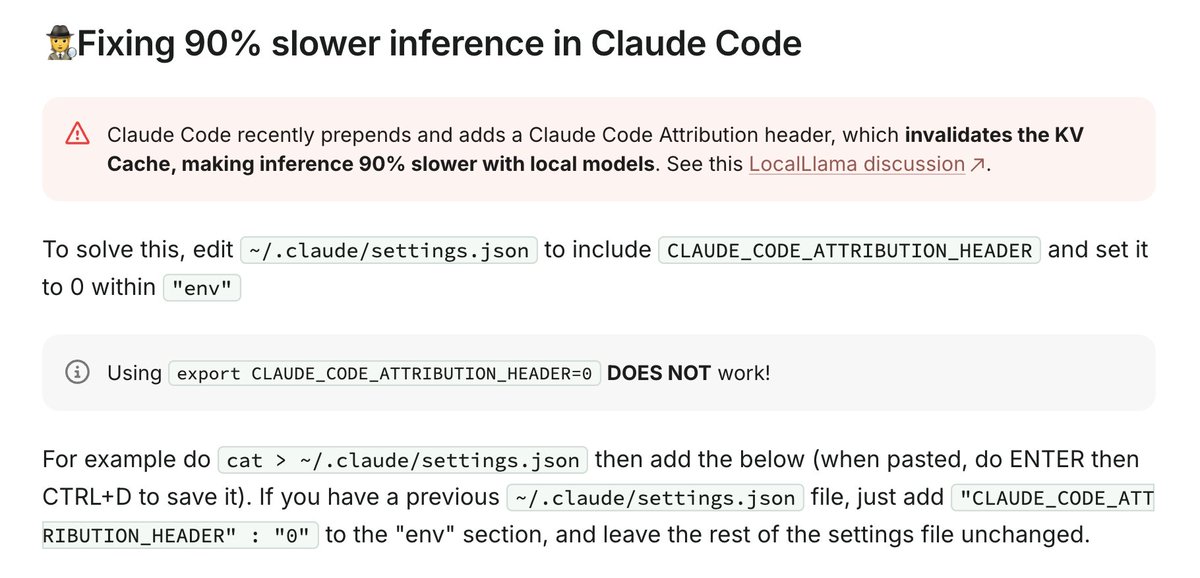

Note: Claude Code invalidates the KV cache for local models by prepending some IDs, making inference 90% slower.

See how to fix it here: unsloth.ai/docs/basics/cl… (anchor #fixing-90-slower-inference-in-claude-code)
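Why prepending something to the prompt hurts so much: prefix caching in a local inference server can only reuse KV entries for the longest run of tokens that matches from the very first token, because a token's cached keys and values depend on its position and on every token before it. The toy sketch below counts reusable tokens. The token values and the per-request ID are hypothetical stand-ins; I am not asserting what Claude Code actually prepends, only what happens if that prefix differs between requests.

```python
from typing import List


def reusable_prefix_len(cached: List[int], new: List[int]) -> int:
    """Number of leading tokens whose cached KV entries can be reused.

    Reuse stops at the first mismatch: every later token's keys and values
    were computed against a different history.
    """
    n = 0
    for a, b in zip(cached, new):
        if a != b:
            break
        n += 1
    return n


def tokens_to_prefill(cached: List[int], new: List[int]) -> int:
    """Tokens the server must recompute before it can generate."""
    return len(new) - reusable_prefix_len(cached, new)


if __name__ == "__main__":
    system = list(range(1000))      # stand-in for a long system prompt and tool definitions
    turn1 = system + [5001, 5002]
    turn2 = turn1 + [5003]          # append-only history: the whole previous turn is reusable
    print(tokens_to_prefill(turn1, turn2))  # 1

    # Hypothetical per-request ID placed at the very start of the prompt.
    print(tokens_to_prefill([9001] + turn1, [9002] + turn2))  # 1004: full recompute
```

The design point is that anything volatile belongs at the end of the prompt, never the beginning, if you want the cache to keep hitting.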

Tim Burton retweeted

LLMs have reached nested branch prediction

Tanishq Kumar @tanishqkumar07

I've been working on a new LLM inference algorithm. It's called Speculative Speculative Decoding (SSD) and it's up to 2x faster than the strongest inference engines in the world. Collab w/ @tri_dao @avnermay. Details in thread.
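SSD builds on ordinary speculative decoding, so here is a minimal greedy version of that baseline for reference. It is not SSD itself, whose nested scheme is described in the thread. A cheap draft model proposes `k` tokens, the target model checks them, and the longest agreeing prefix is kept plus one token from the target. The toy `target` and `draft` callables are hypothetical stand-ins for real models.

```python
from typing import Callable, List, Tuple

# A "model" here is any function mapping the token context to its greedy next token.
Model = Callable[[List[int]], int]


def greedy_decode(model: Model, prompt: List[int], n_new: int) -> List[int]:
    """Reference: generate n_new tokens one at a time with a single model."""
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out[len(prompt):]


def speculative_decode(target: Model, draft: Model, prompt: List[int],
                       n_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding; returns (new tokens, number of verify rounds)."""
    out = list(prompt)
    rounds = 0
    while len(out) - len(prompt) < n_new:
        # 1) The cheap draft model proposes k tokens autoregressively.
        proposal, ctx = [], list(out)
        for _ in range(k):
            token = draft(ctx)
            proposal.append(token)
            ctx.append(token)
        # 2) The target checks each proposed token against its own greedy choice.
        #    (A real engine scores all k positions in one batched forward pass.)
        ctx = list(out)
        for token in proposal:
            expected = target(ctx)
            ctx.append(expected)  # equals `token` when accepted, else the correction
            if expected != token:
                break  # discard the rest of the draft after the first mismatch
        else:
            ctx.append(target(ctx))  # every draft token accepted: one bonus token
        out = ctx
        rounds += 1
    return out[len(prompt):len(prompt) + n_new], rounds
```

With greedy acceptance the output is token-for-token identical to decoding with the target alone; the speedup comes from verifying the `k` drafted tokens in one batched target pass, which this sequential sketch does not model. A perfect draft needs one round per `k + 1` tokens, a useless one needs a round per token.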

Tim Burton retweeted

@ThePrimeagen creating a thing isn’t hard.

maintaining is.
