nuforms

@nuforms_lab

Lone analyst surfing the endless waves of the crypto ocean. Chasing Alpha, catching narratives, and leaving digital footprints in every tide.

Grok Voice Think Fast 1.0 ranks #1 on the Artificial Analysis τ-Voice benchmark for real-world agentic customer service resolution, outperforming GPT-Realtime-2 (High) and Gemini 3.1 Flash by a huge margin. That's a massive 12%+ lead over OpenAI's best model, which was released just a few days ago. Grok runs real-time background reasoning without the latency penalty, which is why it is already handling live Starlink phone operations autonomously at scale.

24/7 commodities are live on Phoenix. Crude oil and gold — fully on-chain, open every hour of every day.

What the SpaceX–Anthropic Deal Means

Two weeks ago, we published a note laying out what GPT-5.5's release implied. The conclusion was simple: whoever secures compute first, in greater volume, and with greater reliability ultimately takes the win. With OpenAI's 30GW roadmap dwarfing Anthropic's 7–8GW, we closed by arguing that the structural advantage on compute sat with OpenAI.

Less than a fortnight later, that conclusion is being tested. On May 6, Anthropic signed a single-tenant lease for the entirety of Colossus 1 with SpaceXAI, the infrastructure subsidiary that consolidates Elon Musk's xAI and SpaceX. The asset carries more than 220,000 GPUs and 300MW of power and, crucially, is scheduled to come online within the month. It served as the capstone of Anthropic's April blitz, which added 13.8GW of cumulative capacity over the span of a single month. On headline numbers alone, OpenAI took more than a year to stack 18GW; Anthropic has put 13.8GW in the ground in thirty days.

The takeaways break down into three.

First, the compute pecking order has been redrawn again. Anthropic has now swept up the AWS expansion (5GW, with $100B+ in spend commitments over a decade), Google + Broadcom (3.5GW of TPU), Google Cloud (5GW alongside a $40B investment), and now SpaceXAI's Colossus 1 (0.3GW). Cumulative committed capacity, inclusive of pre-April allocations, sits at 14.8GW. This is still only half of OpenAI's 2030 target of 30GW, but the fact that the SpaceX lease will be live inside a month makes "deliverability" a qualitatively different proposition.

Second, Elon Musk is the plaintiff in an active lawsuit against OpenAI and, at the same time, the supplier handing 220,000+ GPUs and 300MW of power, in one block, to OpenAI's most formidable competitor. The timing matters: the deal was struck in the middle of the Musk–Altman trial. We read this as a deliberate pincer with OpenAI in the middle. In the courtroom, Musk works to dismantle the moral legitimacy of OpenAI's leadership; in the market, he arms Anthropic to absorb OpenAI's revenue and user base.

Third, the structure is financial-engineering perfection: a clean win-win for both sides. xAI can recognize $6B of annual revenue from a single contract, an amount that almost precisely offsets its Q1 2026 annualized net loss of $6B. It also accelerates the cleanup of SpaceXAI's pre-IPO balance sheet, with the entity now being floated at around $1.75T. Anthropic, on the other side, converts roughly $5B of spend into what it expects to be $15B of ARR via the coming inference-revenue surge.

(Mirae Asset Securities, May 8, 2026)
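
The capacity arithmetic in the note can be re-added in a few lines of Python. This is only a sketch that tallies the figures quoted above: the variable names are illustrative, and the pre-April figure is an inference from the gap between the stated 14.8GW cumulative total and the April additions, not a number the note gives.

```python
# Capacity figures (GW) as quoted in the note; names are illustrative.
april_deals_gw = {
    "AWS expansion": 5.0,
    "Google + Broadcom (TPU)": 3.5,
    "Google Cloud": 5.0,
    "SpaceXAI Colossus 1": 0.3,
}
cumulative_gw = 14.8     # stated cumulative committed capacity
openai_target_gw = 30.0  # stated OpenAI 2030 target

# Sum of the four April deals listed in the note.
april_total_gw = round(sum(april_deals_gw.values()), 1)

# Assumption: pre-April capacity is inferred as the difference, not quoted.
pre_april_gw = round(cumulative_gw - april_total_gw, 1)

# Anthropic's committed capacity as a fraction of OpenAI's 2030 target.
share_of_openai_target = cumulative_gw / openai_target_gw

print(f"April additions: {april_total_gw} GW")
print(f"Implied pre-April capacity: {pre_april_gw} GW")
print(f"Share of OpenAI 2030 target: {share_of_openai_target:.0%}")
```

The four April deals sum to the 13.8GW the note cites, and 14.8GW against a 30GW target comes to just under half, consistent with the "only half" framing.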

We’re excited to invest in Ethos. Ethos is building AI-powered infrastructure for human opportunity. Using AI voice agents, Ethos captures the knowledge, expertise, and nuance that traditional professional profiles miss. From there, it matches people to a wide range of opportunities across the economy: market research, expert calls, fractional roles, and full-time jobs. Top earners are already making more than $10,000 per month on Ethos. Cofounders James and Daniel are building on the premise that AI shouldn’t make you replaceable, but irreplaceable. Ethos will be the next great improvement in the NPS of employment by making work itself abundant. By @illscience, @jamdac, and @omooretweets

Claude can FULLY control your TradingView. But actually, it can do MUCH more than that. There are so many use cases.

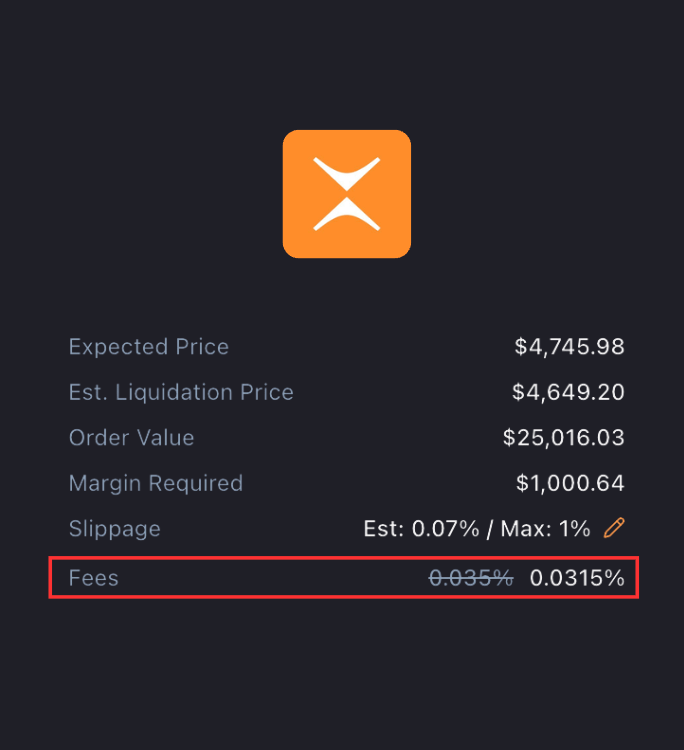

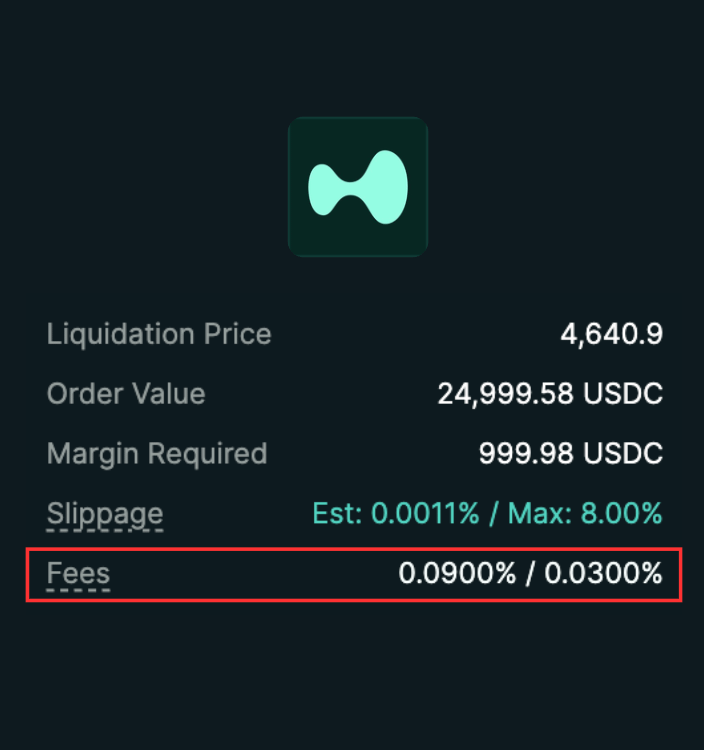

tradingview tells you when to trade. claude trades for you.

here's what the numbers look like:
→ average trader misses 3 out of 5 alerts - wrong timezone, asleep, away from screen
→ missed entry = missed move. BTC averages 4.2% within 2 hours of a breakout signal
→ that's $420 on a $10k position. per missed trade.

here's what claude does instead:
→ checks your indicator every 15 minutes - 96 times a day
→ detects the signal the second it forms
→ sends the full setup to telegram in under 3 seconds
→ you tap confirm or skip from your phone
→ position opens. you go back to sleep.

4 hours of sleep = 16 missed checks without claude. with claude = 0 missed checks. ever.

setup takes 22 minutes. zero code. works on binance, bybit, hyperliquid + 140 other exchanges.

the full system → t.me/alphacartelset…

like + bookmark. you'll use this.
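
The numbers in the post can be checked with a short Python sketch. It only reproduces the arithmetic from the figures quoted above. The variable names are illustrative, and the 4.2% average move is the post's own claim, taken as given here.

```python
# Figures as quoted in the post; variable names are illustrative.
check_interval_min = 15   # indicator is checked every 15 minutes
position_usd = 10_000     # example position size
avg_move_pct = 4.2        # post's claimed average BTC move after a breakout
sleep_hours = 4           # example sleep window

# Number of checks in a full day and during the sleep window.
checks_per_day = 24 * 60 // check_interval_min
checks_during_sleep = sleep_hours * 60 // check_interval_min

# Dollar value of the claimed average move on the example position.
missed_move_usd = round(position_usd * avg_move_pct / 100, 2)

print(checks_per_day, checks_during_sleep, missed_move_usd)
```

A 15-minute interval gives 96 checks a day and 16 over four hours, and 4.2% of $10k is $420, so the post's figures are internally consistent.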