Omkar Pathak

202 posts

@pathomkar

PM on Foundation Models, Gemini-based personalization, coding agents YT/DeepMind. Prev. Google Brain, TPU, Assistant/Bard. Also building https://t.co/SeUfhnr2v1.

SF Bay Area, CA Joined April 2011

331 Following 168 Followers

We’re building an LLM chip that delivers much higher throughput than any other chip while also achieving the lowest latency. We call it the MatX One.

The MatX One chip is based on a splittable systolic array, which has the energy and area efficiency that large systolic arrays are famous for, while also getting high utilization on smaller matrices with flexible shapes. The chip combines the low latency of SRAM-first designs with the long-context support of HBM. These elements, plus a fresh take on numerics, deliver higher throughput on LLMs than any announced system, while simultaneously matching the latency of SRAM-first designs. Higher throughput and lower latency give you smarter and faster models for your subscription dollar.

We’ve raised a $500M Series B to wrap up development and quickly scale manufacturing, with tapeout in under a year. The round was led by Jane Street, one of the most tech-savvy Wall Street firms, and Situational Awareness LP, whose founder @leopoldasch wrote the definitive memo on AGI. Participants include @sparkcapital, @danielgross and @natfriedman’s fund, @patrickc and @collision, @TriatomicCap, @HarpoonVentures, @karpathy, @dwarkesh_sp, and others. We’re also welcoming investors across the supply chain, including Marvell and Alchip.

@MikeGunter_ and I started MatX because we felt that the best chip for LLMs should be designed from first principles with a deep understanding of what LLMs need and how they will evolve. We are willing to give up on small-model performance, low-volume workloads, and even ease of programming to deliver on such a chip.

We’re now a 100-person team with people who think about everything from learning rate schedules, to Swing Modulo Scheduling, to guard/round/sticky bits, to blind-mated connections—all in the same building. If you’d like to help us architect, design, and deploy many generations of chips in large volume, consider joining us.

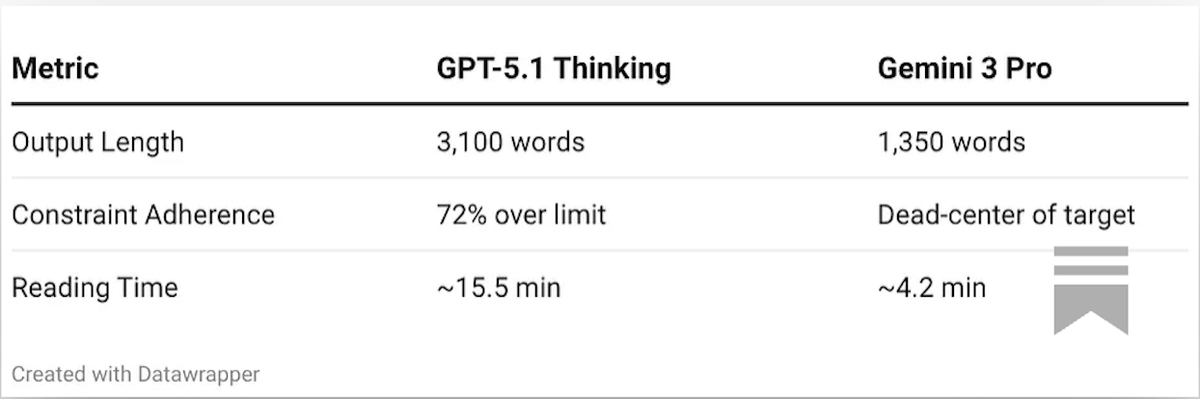

Although GPT 5.2 is out, the idea here is to evaluate the "Thinking" architecture, and the structural bias here seems to persist across incremental model updates.

Same prompt, yet 2.3x token difference!

Part 1: omkarpathak.substack.com/p/part-1-a-for…

Part 2 about steerability coming soon!

@bcherny Thank you for sharing! Could you share more on how to get Claude to iterate on UI/UX for webapps? I often find that Opus is great at backend out of the box, but its first attempts at new UI features tend to be somewhat off. I send screenshots and logs to the CLI and ask it to fix them.

I'm Boris and I created Claude Code. Lots of people have asked how I use Claude Code, so I wanted to show off my setup a bit.

My setup might be surprisingly vanilla! Claude Code works great out of the box, so I personally don't customize it much. There is no one correct way to use Claude Code: we intentionally build it in a way that you can use it, customize it, and hack it however you like. Each person on the Claude Code team uses it very differently.

So, here goes.

Highly recommend it!

Gagan Biyani 🏛@gaganbiyani

The biggest downside of taking @shreyas' product sense course is that I can never again work with anyone who doesn't understand these concepts. This includes not just co-founders and product leaders, but probably also marketers and sales leaders.

@virattt Edgar data is not easy to parse. This will be super useful!

My stock market API landing page is live.

Initial focus is fundamentals data.

• starting with 10,000 stocks

• optimized for LLMs and AI agents

• no subscriptions or contracts

• simple and clean API

The waitlist is now live 🙏

I am setting aggressive goals for myself.

Launching a beta API in the next 2 weeks.

Again, building nights and weekends only.

Pumped to release this into the wild.

cc @_buildspace @_nightsweekends

Omkar Pathak retweeted

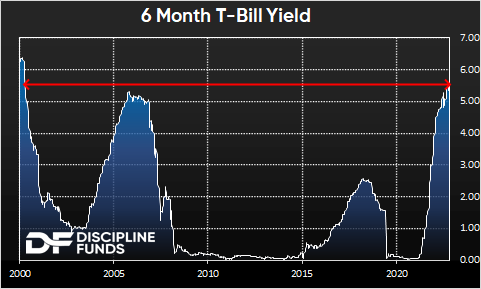

@saxena_puru Agree that it is a strong business. What are your thoughts on valuation though, especially if we consider an increased macro risk or possibility of a broader drawdown?

Omkar Pathak retweeted

For all of the most important things, the timing always sucks. Waiting for a good time to quit your job? The stars will never align and the traffic lights of life will never all be green at the same time. The universe doesn’t conspire against you, but it doesn’t go out of its way to line up all the pins either. Conditions are never perfect. “Someday” is a disease that will take your dreams to the grave with you. Pro and con lists are just as bad. If it’s important to you and you want to do it “eventually,” just do it and correct course along the way.

Omkar Pathak retweeted

The PaLM language model paper is now officially published at JMLR.

jmlr.org/papers/v24/22-…

Omkar Pathak retweeted

OPINION: For S.F. to survive the challenges we face, we need to redefine our urban landscape by drawing inspiration from successful cities like Tokyo.

trib.al/RP92WMO

Omkar Pathak retweeted

We collaborated with Bard, an AI experiment by @Google, to optimize your coding workflow.

When you use Bard to help with coding, you can now export Python code to Replit. In your Repl, you can edit or test it to bring your ideas to life.

Try it out → goo.gle/44HGpJH

@cisneroscapital @cullenroche @kyrill007 Sorry for a newbie question, looking to learn - why not a money market fund yielding ~5% while offering basically best possible liquidity?

Installing Python on an M2 MacBook has been so painful for me that I am exclusively using Google Colab for simple Python 3 projects. Would love to get a definitive guide that just works.

I’ve tried Homebrew, and the pip installs did not work!

Chris Albon@chrisalbon

What is "the right way" to install Python on a new M2 MacBook? I assume it isn't the system Python3 right? Maybe Homebrew?

@lennysan Congratulations on the growth! Great podcast. Considering the quality of the podcast, I would have never guessed that it’s only been a year. 🎉🚀

Omkar Pathak retweeted

Paper: TPUv4 system has an optically reconfigurable network to assemble groups of 4x4x4 chips like legos (4x4x12? 16x16x16?). SparseCores help w/ embeddings. TPUv4 outperforms TPUv3 by 2.1x & perf/W by 2.7x, & has 4096 chips so ~10x faster overall.

arxiv.org/abs/2304.01433

Omkar Pathak retweeted